Mirror of https://github.com/didi/KnowStreaming.git (synced 2025-12-24 11:52:08 +08:00)

Compare commits

245 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | 6c62b019a6 | | |
| | d78512f6b7 | | |
| | e81c0f3040 | | |
| | 462303fca0 | | |
| | 4405703e42 | | |
| | 23e398e121 | | |
| | b17bb89d04 | | |
| | 5590cebf8f | | |
| | 1fa043f09d | | |
| | 3bd0af1451 | | |
| | 1545962745 | | |
| | d032571681 | | |
| | 33fb0acc7e | | |
| | 1ec68a91e2 | | |
| | a23c113a46 | | |
| | 371ae2c0a5 | | |
| | 8f8f6ffa27 | | |
| | 475fe0d91f | | |
| | 3d74e60d03 | | |
| | 83ac83bb28 | | |
| | 8478fb857c | | |
| | 7074bdaa9f | | |
| | 58164294cc | | |
| | 7c0e9df156 | | |
| | bd62212ecb | | |
| | 2292039b42 | | |
| | 73f8da8d5a | | |
| | e51dbe0ca7 | | |
| | 482a375e31 | | |
| | 689c5ce455 | | |
| | 734a020ecc | | |
| | 44d537f78c | | |
| | b4c60eb910 | | |
| | e120b32375 | | |
| | de54966d30 | | |
| | 39a6302c18 | | |
| | 05ceeea4b0 | | |
| | 9f8e3373a8 | | |
| | 42521cbae4 | | |
| | b23c35197e | | |
| | 70f28d9ac4 | | |
| | 912d73d98a | | |
| | 2a720fce6f | | |
| | e4534c359f | | |
| | b91bec15f2 | | |
| | 67ad5cacb7 | | |
| | b4a739476a | | |
| | a7bf2085db | | |
| | c3802cf48b | | |
| | 54711c4491 | | |
| | fcb52a69c0 | | |
| | 1b632f9754 | | |
| | 73d7a0ecdc | | |
| | 08943593b3 | | |
| | c949a88f20 | | |
| | a49c11f655 | | |
| | a66aed4a88 | | |
| | 0045c953a0 | | |
| | fdce41b451 | | |
| | 4d5e4d0f00 | | |
| | 82c9b6481e | | |
| | 053d4dcb18 | | |
| | e1b2c442aa | | |
| | 0ed8ba8ca4 | | |
| | f195847c68 | | |
| | 5beb13b17e | | |
| | 7d9ec05062 | | |
| | fc604a9eaf | | |
| | 4f3c1ad9b6 | | |
| | 6d45ed586c | | |
| | 1afb633b4f | | |
| | 34d9f9174b | | |
| | 3b0c208eff | | |
| | 05022f8db4 | | |
| | 3336de457a | | |
| | 10a27bc29c | | |
| | 542e5d3c2d | | |
| | 7372617b14 | | |
| | 89735a130b | | |
| | 859cf74bd6 | | |
| | e2744ab399 | | |
| | 16bd065098 | | |
| | 71c52e6dd7 | | |
| | a7f8c3ced3 | | |
| | f3f0432c65 | | |
| | 426ba2d150 | | |
| | 2790099efa | | |
| | f6ba8bc95e | | |
| | d6181522c0 | | |
| | 04cf071ca6 | | |
| | e4371b5d02 | | |
| | 52c52b2a0d | | |
| | 8f40f10575 | | |
| | fe0f6fcd0b | | |
| | 31b1ad8bb4 | | |
| | 373680d854 | | |
| | 9e3bc80495 | | |
| | 89405fe003 | | |
| | b9ea3865a5 | | |
| | b5bd643814 | | |
| | 52ccaeffd5 | | |
| | 18136c12fd | | |
| | dec3f9e75e | | |
| | ccc0ee4d18 | | |
| | 69e9708080 | | |
| | 5944ba099a | | |
| | ada2718b5e | | |
| | 1f87bd63e7 | | |
| | c0f3259cf6 | | |
| | e1d5749a40 | | |
| | a8d7eb27d9 | | |
| | 1eecdf3829 | | |
| | be8b345889 | | |
| | 074da389b3 | | |
| | 4df2dc09fe | | |
| | e8d42ba074 | | |
| | c036483680 | | |
| | 2818584db6 | | |
| | 37585f760d | | |
| | f5477a03a1 | | |
| | 50388425b2 | | |
| | 725c59eab0 | | |
| | 7bf1de29a4 | | |
| | d90c3fc7dd | | |
| | 80785ce072 | | |
| | 44ea896de8 | | |
| | d30cb8a0f0 | | |
| | 6c7b333b34 | | |
| | 6d34a00e77 | | |
| | 1f353e10ce | | |
| | 4e10f8d1c5 | | |
| | a22cd853fc | | |
| | 354e0d6a87 | | |
| | dfabe28645 | | |
| | fce230da48 | | |
| | 055ba9bda6 | | |
| | ec19c3b4dd | | |
| | 37aa526404 | | |
| | 86c1faa40f | | |
| | 8dcf15d0f9 | | |
| | 6835e1e680 | | |
| | d8f89b8f67 | | |
| | ec28eba781 | | |
| | 5ef8fff5bc | | |
| | 4f317b76fa | | |
| | 61672637dc | | |
| | ecf6e8f664 | | |
| | 4115975320 | | |
| | 21904a8609 | | |
| | 10b0a3dabb | | |
| | b2091e9aed | | |
| | f2cb5bd77c | | |
| | 19c61c52e6 | | |
| | b327359183 | | |
| | 9e9bb72e17 | | |
| | a23907e009 | | |
| | ad131f5a2c | | |
| | dbeae4ca68 | | |
| | 0fb0e94848 | | |
| | 95d2a82d35 | | |
| | 5bc6eb6774 | | |
| | 3ba81e9aaa | | |
| | 329a9b59c1 | | |
| | 39cccd568e | | |
| | 19b7f6ad8c | | |
| | 41c000cf47 | | |
| | 1b8ea61e87 | | |
| | 22c26e24b1 | | |
| | 396045177c | | |
| | 4538593236 | | |
| | 8086ef355b | | |
| | 60d038fe46 | | |
| | ff0f4463be | | |
| | 820571d993 | | |
| | e311d3767c | | |
| | 24d7b80244 | | |
| | 61f99e4d2e | | |
| | d5348bcf49 | | |
| | 5d31d66365 | | |
| | 29778a0154 | | |
| | 165c0a5866 | | |
| | 588323961e | | |
| | fd1c0b71c5 | | |
| | 54fbdcadf9 | | |
| | 69a30d0cf0 | | |
| | b8f9b44f38 | | |
| | cbf17d4eb5 | | |
| | 327e025262 | | |
| | 6b1e944bba | | |
| | 668ed4d61b | | |
| | 312c0584ed | | |
| | 110d3acb58 | | |
| | ddbc60283b | | |
| | 471bcecfd6 | | |
| | 0245791b13 | | |
| | 4794396ce8 | | |
| | c7088779d6 | | |
| | 672905da12 | | |
| | 47172b13be | | |
| | 3668a10af6 | | |
| | a4e294c03f | | |
| | 3fd6f4003f | | |
| | 3eaf5cd530 | | |
| | c344fd8ca4 | | |
| | 09639ca294 | | |
| | a81b6dca83 | | |
| | b74aefb08f | | |
| | fffc0c3add | | |
| | 757f90aa7a | | |
| | 022f9eb551 | | |
| | 6e7b82cfcb | | |
| | b5fb24b360 | | |
| | b77345222c | | |
| | 793e81406e | | |
| | cef1ec95d2 | | |
| | 7e1b3c552b | | |
| | 69736a63b6 | | |
| | fb4a9f9056 | | |
| | 387d89d3af | | |
| | 65d9ca9d39 | | |
| | 8c842af4ba | | |
| | 4faf9262c9 | | |
| | be7724c67d | | |
| | 48d26347f7 | | |
| | bdb01ec8b5 | | |
| | 9047815799 | | |
| | 05bd94a2cc | | |
| | c9f7da84d0 | | |
| | bcc124e86a | | |
| | 48d2733403 | | |
| | 31fc6e4e56 | | |
| | fcdeef0146 | | |
| | 1cd524c0cc | | |
| | 0f746917a7 | | |
| | a2228d0169 | | |
| | e8a679d34b | | |
| | 1912a42091 | | |
| | ca81f96635 | | |
| | eb3b8c4b31 | | |
| | 6740d6d60b | | |
| | c46c35b248 | | |
| | 0b2dcec4bc | | |
| | 7256db8c4e | | |
| | 25c3aeaa5f | | |
| | 736d5a00b7 | | |

.gitignore (vendored), 1 line changed
@@ -111,3 +111,4 @@ dist/
 dist/*
 kafka-manager-web/src/main/resources/templates/
 .DS_Store
+kafka-manager-console/package-lock.json

Dockerfile (Normal file), 41 lines changed
@@ -0,0 +1,41 @@
+ARG MAVEN_VERSION=3.8.4-openjdk-8-slim
+ARG JAVA_VERSION=8-jdk-alpine3.9
+FROM maven:${MAVEN_VERSION} AS builder
+ARG CONSOLE_ENABLE=true
+
+WORKDIR /opt
+COPY . .
+COPY distribution/conf/settings.xml /root/.m2/settings.xml
+
+# whether to build console
+RUN set -eux; \
+    if [ $CONSOLE_ENABLE = 'false' ]; then \
+        sed -i "/kafka-manager-console/d" pom.xml; \
+    fi \
+    && mvn -Dmaven.test.skip=true clean install -U
+
+FROM openjdk:${JAVA_VERSION}
+
+RUN sed -i 's/dl-cdn.alpinelinux.org/mirrors.aliyun.com/g' /etc/apk/repositories && apk add --no-cache tini
+
+ENV TZ=Asia/Shanghai
+ENV AGENT_HOME=/opt/agent/
+
+COPY --from=builder /opt/kafka-manager-web/target/kafka-manager.jar /opt
+COPY --from=builder /opt/container/dockerfiles/docker-depends/config.yaml $AGENT_HOME
+COPY --from=builder /opt/container/dockerfiles/docker-depends/jmx_prometheus_javaagent-0.15.0.jar $AGENT_HOME
+COPY --from=builder /opt/distribution/conf/application-docker.yml /opt
+
+WORKDIR /opt
+
+ENV JAVA_AGENT="-javaagent:$AGENT_HOME/jmx_prometheus_javaagent-0.15.0.jar=9999:$AGENT_HOME/config.yaml"
+ENV JAVA_HEAP_OPTS="-Xms1024M -Xmx1024M -Xmn100M "
+ENV JAVA_OPTS="-verbose:gc \
+    -XX:MaxMetaspaceSize=256M -XX:+DisableExplicitGC -XX:+UseStringDeduplication \
+    -XX:+UseG1GC -XX:+HeapDumpOnOutOfMemoryError -XX:-UseContainerSupport"
+
+EXPOSE 8080 9999
+
+ENTRYPOINT ["tini", "--"]
+
+CMD [ "sh", "-c", "java -jar $JAVA_AGENT $JAVA_HEAP_OPTS $JAVA_OPTS kafka-manager.jar --spring.config.location=application-docker.yml"]
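The builder stage of the Dockerfile removes every `pom.xml` line that mentions `kafka-manager-console` when `CONSOLE_ENABLE` is `false`, so Maven skips the console module. As a rough illustration of that `sed -i "/kafka-manager-console/d"` step, here is a minimal Python sketch. The function names and the sample POM fragment are hypothetical and are not taken from the repository.

```python
def drop_matching_lines(text: str, pattern: str) -> str:
    """Return text without any line containing pattern (like sed '/pattern/d')."""
    kept = [line for line in text.splitlines(keepends=True) if pattern not in line]
    return "".join(kept)


# Hypothetical fragment of a parent pom.xml module list (assumption for illustration).
SAMPLE_POM = (
    "<modules>\n"
    "    <module>kafka-manager-console</module>\n"
    "    <module>kafka-manager-web</module>\n"
    "</modules>\n"
)


def prepare_pom(text: str, console_enable: str) -> str:
    """Mirror the Dockerfile branch: strip the module only when the flag is 'false'."""
    if console_enable == "false":
        return drop_matching_lines(text, "kafka-manager-console")
    return text
```

With the default `CONSOLE_ENABLE=true` the POM is left untouched; only the literal string `false` triggers the removal, which matches the shell comparison in the `RUN` step.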

README.md, 57 lines changed
@@ -1,13 +1,13 @@
 
 ---
 
 
 
 **One-stop platform for `Apache Kafka` cluster metrics monitoring and operations management**
 
+`LogiKM has attracted a great deal of attention since it was open-sourced. Because an open-source project should align more closely with the future direction of Apache Kafka, the project team has decided, after careful consideration, to upgrade the brand to Know Streaming, expected in the second half of 2022. The project name and logo will be updated at the same time. Thank you for your continued support, and stay tuned!`
 
-By reading this README, you can learn about the target users and product positioning of DiDi Logi-KafkaManager, and use the demo address to quickly try the full workflow of Kafka cluster metrics monitoring and operations management.<br>If DiDi Logi-KafkaManager is already used in your company's production environment and you would like better official support and guidance, you can join the official communication platform through [`OCE certification`](http://obsuite.didiyun.com/open/openAuth).
+By reading this README, you can learn about the target users and product positioning of DiDi Logi-KafkaManager, and use the demo address to quickly try the full workflow of Kafka cluster metrics monitoring and operations management.
 
 
 ## 1 Product Overview
@@ -55,39 +55,56 @@
 ## 2 Related Documentation
 
 ### 2.1 Product Documentation
-- [DiDi Logi-KafkaManager Installation Guide](docs/install_guide/install_guide_cn.md)
-- [DiDi Logi-KafkaManager Adding a Cluster](docs/user_guide/add_cluster/add_cluster.md)
-- [DiDi Logi-KafkaManager User Guide](docs/user_guide/user_guide_cn.md)
-- [DiDi Logi-KafkaManager FAQ](docs/user_guide/faq.md)
+- [DiDi LogiKM Installation Guide](docs/install_guide/install_guide_cn.md)
+- [DiDi LogiKM Adding a Cluster](docs/user_guide/add_cluster/add_cluster.md)
+- [DiDi LogiKM User Guide](docs/user_guide/user_guide_cn.md)
+- [DiDi LogiKM FAQ](docs/user_guide/faq.md)
 
 ### 2.2 Community Articles
 - [Product introduction on the DiDi Cloud official website](https://www.didiyun.com/production/logi-KafkaManager.html)
 - [Seven years in the making -- the DiDi Logi log service suite](https://mp.weixin.qq.com/s/-KQp-Qo3WKEOc9wIR2iFnw)
-- [DiDi Logi-KafkaManager: a one-stop Kafka monitoring and management platform](https://mp.weixin.qq.com/s/9qSZIkqCnU6u9nLMvOOjIQ)
-- [DiDi Logi-KafkaManager: the road to open source](https://xie.infoq.cn/article/0223091a99e697412073c0d64)
-- [DiDi Logi-KafkaManager video tutorial series](https://mp.weixin.qq.com/s/9X7gH0tptHPtfjPPSdGO8g)
-- [Kafka in Practice (15): A Study of Logi-KafkaManager, DiDi's Open-Source Kafka Management Platform --A叶子叶来](https://blog.csdn.net/yezonggang/article/details/113106244)
-- [Logi-KafkaManager, Kafka's soulmate: article series --石臻](https://blog.csdn.net/u010634066/category_10977588.html)
+- [DiDi LogiKM: a one-stop Kafka monitoring and management platform](https://mp.weixin.qq.com/s/9qSZIkqCnU6u9nLMvOOjIQ)
+- [DiDi LogiKM: the road to open source](https://xie.infoq.cn/article/0223091a99e697412073c0d64)
+- [DiDi LogiKM video tutorial series](https://space.bilibili.com/442531657/channel/seriesdetail?sid=571649)
+- [The most complete Kafka knowledge map](https://www.szzdzhp.com/kafka/)
+- [DiDi LogiKM getting-started article series for new users --石臻臻](https://www.szzdzhp.com/categories/LogIKM/)
+- [Kafka in Practice (15): A Study of LogiKM, DiDi's Open-Source Kafka Management Platform --A叶子叶来](https://blog.csdn.net/yezonggang/article/details/113106244)
+- [Installing DiDi LogiKM on Rainbond, a cloud-native application management platform](https://www.rainbond.com/docs/opensource-app/logikm/?channel=logikm)
 
 ## 3 DiDi Logi Open-Source User Group
 
 
 
-Join via WeChat: follow the official account Obsuite and reply "Logi加群"
 
+To discuss Kafka, ES, and other middleware or big-data technologies with experienced engineers, join the group via WeChat.
-DingTalk group ID: 32821440
 
+Join via WeChat: add the WeChat ID <font color=red>mike_zhangliang</font> or <font color=red>danke-x</font> with the note "Logi加群", or follow the official account 云原生可观测性 and reply "Logi加群"
 
-## 4 OCE Certification
+## 4 Knowledge Planet (知识星球)
-OCE is a certification mechanism and communication platform tailored for production users of DiDi Logi-KafkaManager. We provide OCE companies with better technical support, such as exclusive technical salons, one-on-one exchanges with companies, and dedicated Q&A groups. If your company runs Logi-KafkaManager in production, [come and join us](http://obsuite.didiyun.com/open/openAuth)
 
+<img width="447" alt="image" src="https://user-images.githubusercontent.com/71620349/147314042-843a371a-48c0-4d9a-a65e-ca40236f3300.png">
 
+<br>
+<center>
+✅ We are building the largest and most authoritative
+</center>
+<br>
+<center>
+<font color=red size=5><b>[Kafka Chinese Community]</b></font>
+</center>
 
+Here you can meet Kafka experts from major internet companies and 3000+ Kafka enthusiasts, share knowledge, and keep up with the latest industry news. We look forward to having you: https://z.didi.cn/5gSF9
 
+<font color=red size=5>Every question gets an answer! </font>
 
+<font color=red size=5>Rewards for participation! </font>
 
+P.S. When asking a question, please describe the problem fully in one message and include your environment details, such as the version in use, the steps taken, and any error or warning messages, so that the experts can answer quickly.
 
 ## 5 Project Members
 
 ### 5.1 Core Internal Members
 
-`iceyuhui`、`liuyaguang`、`limengmonty`、`zhangliangmike`、`nullhuangyiming`、`zengqiao`、`eilenexuzhe`、`huangjiaweihjw`、`zhaoyinrui`、`marzkonglingxu`、`joysunchao`
+`iceyuhui`、`liuyaguang`、`limengmonty`、`zhangliangmike`、`zhaoqingrong`、`xiepeng`、`nullhuangyiming`、`zengqiao`、`eilenexuzhe`、`huangjiaweihjw`、`zhaoyinrui`、`marzkonglingxu`、`joysunchao`、`石臻臻`
 
 
 ### 5.2 External Contributors
@@ -97,4 +114,4 @@ OCE is a certification mechanism and communication platform, for DiDi Logi-KafkaManager production users
 
 ## 6 License
 
-`kafka-manager` is distributed and used under the `Apache-2.0` license; see the [license file](./LICENSE) for details.
+`LogiKM` is distributed and used under the `Apache-2.0` license; see the [license file](./LICENSE) for details.

@@ -7,6 +7,39 @@
 
 ---
 
+## v2.6.0
+
+Release date: 2022-01-24
+
+### Capability improvements
+- Added a simple backoff utility class
+
+### Experience improvements
+- Added documentation for periodic tasks
+- Added documentation for cluster installation and deployment
+- Upgraded the Swagger, SpringFramework, SpringBoot, and ECharts versions
+- Improved the log output of the Task module
+- Fixed the process exiting without any log message when a cron expression fails to parse
+- Added department and email information when LDAP users are onboarded
+- For the JMX module, added a backoff mechanism after connection failures and improved the error logs
+- Made the thread pools and client pools configurable
+- Removed the unused jmx_prometheus_javaagent-0.14.0.jar
+- Improved migration task names
+- Fixed Region capacity information not being updated immediately when a Region is created
+- Introduced lombok
+- Updated the video tutorials
+- Changed the LogiKM address in kcm_script.sh so that it can be passed in by the program
+- Added switches for skipping login on third-party APIs and gateway APIs
+- Configuration for the extends module no longer has to be set in application.yml
+
+### Bug fixes
+- Fixed an SQL syntax exception when batch-writing an empty metrics array to the DB
+- Fixed the version not changing when gateway configuration is added or modified
+- Fixed the tooltip being obscured on the cluster list page
+- Fixed failure to parse the metadata protocol of newer Broker versions
+- Fixed the Dockerfile reporting a missing application.yml file when run
+- Fixed a null pointer exception when updating a logical cluster
+
 ## v2.4.1+
 
 Release date: 2021-05-21

@@ -1,43 +0,0 @@
-FROM openjdk:16-jdk-alpine3.13
-
-LABEL author="yangvipguang"
-
-ENV VERSION 2.3.1
-
-RUN sed -i 's/dl-cdn.alpinelinux.org/mirrors.aliyun.com/g' /etc/apk/repositories
-RUN apk add --no-cache --virtual .build-deps \
-    font-adobe-100dpi \
-    ttf-dejavu \
-    fontconfig \
-    curl \
-    apr \
-    apr-util \
-    apr-dev \
-    tomcat-native \
-    && apk del .build-deps
-
-RUN apk add --no-cache tini
-
-
-
-
-ENV AGENT_HOME /opt/agent/
-
-WORKDIR /tmp
-
-COPY $JAR_PATH/kafka-manager.jar app.jar
-# COPY application.yml application.yml ## mounted via helm by default, to avoid leaking sensitive configuration
-
-COPY docker-depends/config.yaml $AGENT_HOME
-COPY docker-depends/jmx_prometheus_javaagent-0.15.0.jar $AGENT_HOME
-
-ENV JAVA_AGENT="-javaagent:$AGENT_HOME/jmx_prometheus_javaagent-0.15.0.jar=9999:$AGENT_HOME/config.yaml"
-ENV JAVA_HEAP_OPTS="-Xms1024M -Xmx1024M -Xmn100M "
-ENV JAVA_OPTS="-verbose:gc \
-    -XX:MaxMetaspaceSize=256M -XX:+DisableExplicitGC -XX:+UseStringDeduplication \
-    -XX:+UseG1GC -XX:+HeapDumpOnOutOfMemoryError -XX:-UseContainerSupport"
-EXPOSE 8080 9999
-
-ENTRYPOINT ["tini", "--"]
-
-CMD ["sh","-c","java -jar $JAVA_AGENT $JAVA_HEAP_OPTS $JAVA_OPTS app.jar --spring.config.location=application.yml"]

Binary file not shown.

container/dockerfiles/mysql/Dockerfile (Normal file), 13 lines changed
@@ -0,0 +1,13 @@
+FROM mysql:5.7.37
+
+COPY mysqld.cnf /etc/mysql/mysql.conf.d/
+ENV TZ=Asia/Shanghai
+ENV MYSQL_ROOT_PASSWORD=root
+
+RUN apt-get update \
+    && apt -y install wget \
+    && wget https://ghproxy.com/https://raw.githubusercontent.com/didi/LogiKM/master/distribution/conf/create_mysql_table.sql -O /docker-entrypoint-initdb.d/create_mysql_table.sql
+
+EXPOSE 3306
+
+VOLUME ["/var/lib/mysql"]

container/dockerfiles/mysql/mysqld.cnf (Normal file), 24 lines changed
@@ -0,0 +1,24 @@
+[client]
+default-character-set = utf8
+
+[mysqld]
+character_set_server = utf8
+pid-file = /var/run/mysqld/mysqld.pid
+socket = /var/run/mysqld/mysqld.sock
+datadir = /var/lib/mysql
+symbolic-links=0
+
+max_allowed_packet = 10M
+sort_buffer_size = 1M
+read_rnd_buffer_size = 2M
+max_connections=2000
+
+lower_case_table_names=1
+character-set-server=utf8
+
+max_allowed_packet = 1G
+sql_mode=STRICT_TRANS_TABLES,NO_ZERO_IN_DATE,NO_ZERO_DATE,ERROR_FOR_DIVISION_BY_ZERO,NO_AUTO_CREATE_USER,NO_ENGINE_SUBSTITUTION
+group_concat_max_len = 102400
+default-time-zone = '+08:00'
+[mysql]
+default-character-set = utf8

container/helm/Chart.lock (Normal file), 6 lines changed
@@ -0,0 +1,6 @@
+dependencies:
+- name: mysql
+  repository: https://charts.bitnami.com/bitnami
+  version: 8.6.3
+digest: sha256:d250c463c1d78ba30a24a338a06a551503c7a736621d974fe4999d2db7f6143e
+generated: "2021-06-24T11:34:54.625217+08:00"

@@ -1,6 +1,6 @@
 apiVersion: v2
 name: didi-km
-description: A Helm chart for Kubernetes
+description: Logi-KafkaManager
 
 # A chart can be either an 'application' or a 'library' chart.
 #
@@ -21,4 +21,9 @@ version: 0.1.0
 # incremented each time you make changes to the application. Versions are not expected to
 # follow Semantic Versioning. They should reflect the version the application is using.
 # It is recommended to use it with quotes.
-appVersion: "1.16.0"
+appVersion: "2.4.2"
+dependencies:
+- condition: mysql.enabled
+  name: mysql
+  repository: https://charts.bitnami.com/bitnami
+  version: 8.x.x

container/helm/charts/mysql-8.6.3.tgz (Normal file), BIN
Binary file not shown.

@@ -1,7 +1,17 @@
+{{- define "datasource.mysql" -}}
+{{- if .Values.mysql.enabled }}
+{{- printf "%s-mysql" (include "didi-km.fullname" .) -}}
+{{- else -}}
+{{- printf "%s" .Values.externalDatabase.host -}}
+{{- end -}}
+{{- end -}}
+
 apiVersion: v1
 kind: ConfigMap
 metadata:
-  name: km-cm
+  name: {{ include "didi-km.fullname" . }}-configs
+  labels:
+    {{- include "didi-km.labels" . | nindent 4 }}
 data:
   application.yml: |
     server:
@@ -17,9 +27,9 @@ data:
         name: kafkamanager
       datasource:
         kafka-manager:
-          jdbc-url: jdbc:mysql://xxxxx:3306/kafka-manager?characterEncoding=UTF-8&serverTimezone=GMT%2B8&useSSL=false
-          username: admin
-          password: admin
+          jdbc-url: jdbc:mysql://{{ include "datasource.mysql" . }}:3306/{{ .Values.mysql.auth.database }}?characterEncoding=UTF-8&serverTimezone=GMT%2B8&useSSL=false
+          username: {{ .Values.mysql.auth.username }}
+          password: {{ .Values.mysql.auth.password }}
           driver-class-name: com.mysql.jdbc.Driver
       main:
         allow-bean-definition-overriding: true
@@ -45,7 +55,7 @@ data:
         didi:
           app-topic-metrics-enabled: false
           topic-request-time-metrics-enabled: false
-          topic-throttled-metrics: false
+          topic-throttled-metrics-enabled: false
         save-days: 7
 
     # Task-related switches
@@ -54,7 +64,19 @@ data:
         sync-topic-enabled: false # periodically sync Topics not yet persisted to the DB
 
     account:
+      # ldap settings
       ldap:
+        enabled: false
+        url: ldap://127.0.0.1:389/
+        basedn: dc=tsign,dc=cn
+        factory: com.sun.jndi.ldap.LdapCtxFactory
+        filter: sAMAccountName
+        security:
+          authentication: simple
+          principal: cn=admin,dc=tsign,dc=cn
+          credentials: admin
+        auth-user-registration: false
+        auth-user-registration-role: normal
 
     kcm:
       enabled: false
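The `datasource.mysql` named template in the ConfigMap diff picks the database host in one of two ways: the bundled MySQL subchart's service name when `mysql.enabled` is true, otherwise `externalDatabase.host`. The Python sketch below restates that branch outside of Helm so the resulting JDBC URL is easier to see. The function names and the plain-dict stand-in for `.Values` are assumptions made for illustration; Helm itself evaluates the Go template shown in the diff.

```python
def datasource_mysql_host(values: dict, fullname: str) -> str:
    """Pick the MySQL host the way the `datasource.mysql` template branches."""
    if values.get("mysql", {}).get("enabled"):
        # Bundled subchart: the template prints "<fullname>-mysql".
        return f"{fullname}-mysql"
    # Otherwise use the externally managed database host.
    return values.get("externalDatabase", {}).get("host", "")


def jdbc_url(values: dict, fullname: str) -> str:
    """Assemble the jdbc-url line rendered into application.yml."""
    host = datasource_mysql_host(values, fullname)
    database = values["mysql"]["auth"]["database"]
    return (
        f"jdbc:mysql://{host}:3306/{database}"
        "?characterEncoding=UTF-8&serverTimezone=GMT%2B8&useSSL=false"
    )


# Values mirroring the chart defaults shown in this diff, plus a hypothetical external host.
BUNDLED = {"mysql": {"enabled": True, "auth": {"database": "logi_kafka_manager"}}}
EXTERNAL = {
    "mysql": {"enabled": False, "auth": {"database": "logi_kafka_manager"}},
    "externalDatabase": {"host": "db.example.internal"},
}
```

With `fullnameOverride: "km"` and the bundled database enabled, the host resolves to `km-mysql`; with `mysql.enabled: false`, an empty `externalDatabase.host` would produce an unusable URL, which is why the values file asks for it to be set manually in that case.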

@@ -42,6 +42,10 @@ spec:
               protocol: TCP
           resources:
             {{- toYaml .Values.resources | nindent 12 }}
+          volumeMounts:
+            - name: configs
+              mountPath: /tmp/application.yml
+              subPath: application.yml
       {{- with .Values.nodeSelector }}
       nodeSelector:
         {{- toYaml . | nindent 8 }}
@@ -54,3 +58,7 @@ spec:
       tolerations:
         {{- toYaml . | nindent 8 }}
       {{- end }}
+      volumes:
+        - name: configs
+          configMap:
+            name: {{ include "didi-km.fullname" . }}-configs

@@ -5,13 +5,14 @@
 replicaCount: 1
 
 image:
-  repository: docker.io/yangvipguang/km
+  repository: docker.io/fengxsong/logi-kafka-manager
   pullPolicy: IfNotPresent
   # Overrides the image tag whose default is the chart appVersion.
-  tag: "v18"
+  tag: "v2.4.2"
 
 imagePullSecrets: []
 nameOverride: ""
+# fullnameOverride must set same as release name
 fullnameOverride: "km"
 
 serviceAccount:
@@ -59,10 +60,10 @@ resources:
   # resources, such as Minikube. If you do want to specify resources, uncomment the following
   # lines, adjust them as necessary, and remove the curly braces after 'resources:'.
   limits:
-    cpu: 50m
+    cpu: 500m
     memory: 2048Mi
   requests:
-    cpu: 10m
+    cpu: 100m
     memory: 200Mi
 
 autoscaling:
@@ -77,3 +78,16 @@ nodeSelector: {}
 tolerations: []
 
 affinity: {}
+
+# more configurations are set with configmap in file template/configmap.yaml
+externalDatabase:
+  host: ""
+mysql:
+  # if enabled is set to false, then you should manually specified externalDatabase.host
+  enabled: true
+  architecture: standalone
+  auth:
+    rootPassword: "s3cretR00t"
+    database: "logi_kafka_manager"
+    username: "logi_kafka_manager"
+    password: "n0tp@55w0rd"

distribution/conf/application-docker.yml (Normal file), 28 lines changed
@@ -0,0 +1,28 @@
+
+## Configuration file for kafka-manager; settings in this file override the defaults
+## The settings below are essentially the default application.yml inside the jar;
+## you can keep only the settings you changed and delete the rest, for example configure only mysql
+
+
+server:
+  port: 8080
+  tomcat:
+    accept-count: 1000
+    max-connections: 10000
+    max-threads: 800
+    min-spare-threads: 100
+
+spring:
+  application:
+    name: kafkamanager
+    version: 2.6.0
+  profiles:
+    active: dev
+  datasource:
+    kafka-manager:
+      jdbc-url: jdbc:mysql://${LOGI_MYSQL_HOST:mysql}:${LOGI_MYSQL_PORT:3306}/${LOGI_MYSQL_DATABASE:logi_kafka_manager}?characterEncoding=UTF-8&useSSL=false&serverTimezone=GMT%2B8
+      username: ${LOGI_MYSQL_USER:root}
+      password: ${LOGI_MYSQL_PASSWORD:root}
+      driver-class-name: com.mysql.cj.jdbc.Driver
+  main:
+    allow-bean-definition-overriding: true
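The datasource settings in `application-docker.yml` use `${NAME:default}` placeholders, so each value can be overridden from the container environment and otherwise falls back to the text after the colon. The sketch below imitates that fallback rule in Python to show what the JDBC URL becomes with and without overrides. It is a simplified stand-in, not Spring's resolver (no nesting, no other property sources), and the function name is hypothetical.

```python
import re

# Matches ${NAME} or ${NAME:default}; the default part is optional.
_PLACEHOLDER = re.compile(r"\$\{([^:}]+)(?::([^}]*))?\}")


def resolve_placeholders(template: str, env: dict) -> str:
    """Replace each ${NAME:default} with env[NAME] if set, else with the default."""
    def substitute(match):
        name, default = match.group(1), match.group(2)
        if name in env:
            return env[name]
        if default is not None:
            return default
        raise KeyError(f"no value or default for {name}")

    return _PLACEHOLDER.sub(substitute, template)


# Host, port and database part of the jdbc-url from application-docker.yml.
JDBC_TEMPLATE = (
    "jdbc:mysql://${LOGI_MYSQL_HOST:mysql}:${LOGI_MYSQL_PORT:3306}"
    "/${LOGI_MYSQL_DATABASE:logi_kafka_manager}"
)
```

With an empty environment the template resolves to the defaults (`mysql`, `3306`, `logi_kafka_manager`); setting only `LOGI_MYSQL_HOST` changes the host and leaves the other two at their defaults.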

distribution/conf/application.yml (Normal file), 28 lines changed
@@ -0,0 +1,28 @@
+
+## Configuration file for kafka-manager; settings in this file override the defaults
+## The settings below are essentially the default application.yml inside the jar;
+## you can keep only the settings you changed and delete the rest, for example configure only mysql
+
+
+server:
+  port: 8080
+  tomcat:
+    accept-count: 1000
+    max-connections: 10000
+    max-threads: 800
+    min-spare-threads: 100
+
+spring:
+  application:
+    name: kafkamanager
+  profiles:
+    active: dev
+  datasource:
+    kafka-manager:
+      jdbc-url: jdbc:mysql://localhost:3306/logi_kafka_manager?characterEncoding=UTF-8&useSSL=false&serverTimezone=GMT%2B8
+      username: root
+      password: 123456
+      driver-class-name: com.mysql.cj.jdbc.Driver
+  main:
+    allow-bean-definition-overriding: true
+

@@ -15,6 +15,8 @@ server:

|

|||||||

spring:

|

spring:

|

||||||

application:

|

application:

|

||||||

name: kafkamanager

|

name: kafkamanager

|

||||||

|

profiles:

|

||||||

|

active: dev

|

||||||

datasource:

|

datasource:

|

||||||

kafka-manager:

|

kafka-manager:

|

||||||

jdbc-url: jdbc:mysql://localhost:3306/logi_kafka_manager?characterEncoding=UTF-8&useSSL=false&serverTimezone=GMT%2B8

|

jdbc-url: jdbc:mysql://localhost:3306/logi_kafka_manager?characterEncoding=UTF-8&useSSL=false&serverTimezone=GMT%2B8

|

||||||

@@ -24,7 +26,6 @@ spring:

|

|||||||

main:

|

main:

|

||||||

allow-bean-definition-overriding: true

|

allow-bean-definition-overriding: true

|

||||||

|

|

||||||

|

|

||||||

servlet:

|

servlet:

|

||||||

multipart:

|

multipart:

|

||||||

max-file-size: 100MB

|

max-file-size: 100MB

|

||||||

@@ -34,29 +35,58 @@ logging:

|

|||||||

config: classpath:logback-spring.xml

|

config: classpath:logback-spring.xml

|

||||||

|

|

||||||

custom:

|

custom:

|

||||||

idc: cn # 部署的数据中心, 忽略该配置, 后续会进行删除

|

idc: cn

|

||||||

jmx:

|

|

||||||

max-conn: 10 # 2.3版本配置不在这个地方生效

|

|

||||||

store-metrics-task:

|

store-metrics-task:

|

||||||

community:

|

community:

|

||||||

broker-metrics-enabled: true # 社区部分broker metrics信息收集开关, 关闭之后metrics信息将不会进行收集及写DB

|

topic-metrics-enabled: true

|

||||||

topic-metrics-enabled: true # 社区部分topic的metrics信息收集开关, 关闭之后metrics信息将不会进行收集及写DB

|

didi: # 滴滴Kafka特有的指标

|

||||||

didi:

|

app-topic-metrics-enabled: false

|

||||||

app-topic-metrics-enabled: false # 滴滴埋入的指标, 社区AK不存在该指标,因此默认关闭

|

topic-request-time-metrics-enabled: false

|

||||||

topic-request-time-metrics-enabled: false # 滴滴埋入的指标, 社区AK不存在该指标,因此默认关闭

|

topic-throttled-metrics-enabled: false

|

||||||

topic-throttled-metrics: false # 滴滴埋入的指标, 社区AK不存在该指标,因此默认关闭

|

|

||||||

save-days: 7 #指标在DB中保持的天数,-1表示永久保存,7表示保存近7天的数据

|

|

||||||

|

|

||||||

# 任务相关的开关

|

# 任务相关的配置

|

||||||

task:

|

task:

|

||||||

op:

|

op:

|

||||||

sync-topic-enabled: false # 未落盘的Topic定期同步到DB中

|

sync-topic-enabled: false # 未落盘的Topic定期同步到DB中

|

||||||

order-auto-exec: # 工单自动化审批线程的开关

|

order-auto-exec: # 工单自动化审批线程的开关

|

||||||

topic-enabled: false # Topic工单自动化审批开关, false:关闭自动化审批, true:开启

|

topic-enabled: false # Topic工单自动化审批开关, false:关闭自动化审批, true:开启

|

||||||

app-enabled: false # App工单自动化审批开关, false:关闭自动化审批, true:开启

|

app-enabled: false # App工单自动化审批开关, false:关闭自动化审批, true:开启

|

||||||

|

metrics:

|

||||||

|

collect: # 收集指标

|

||||||

|

broker-metrics-enabled: true # 收集Broker指标

|

||||||

|

sink: # 上报指标

|

||||||

|

cluster-metrics: # 上报cluster指标

|

||||||

|

sink-db-enabled: true # 上报到db

|

||||||

|

broker-metrics: # 上报broker指标

|

||||||

|

sink-db-enabled: true # 上报到db

|

||||||

|

delete: # 删除指标

|

||||||

|

delete-limit-size: 1000 # 单次删除的批大小

|

||||||

|

cluster-metrics-save-days: 14 # 集群指标保存天数

|

||||||

|

broker-metrics-save-days: 14 # Broker指标保存天数

|

||||||

|

topic-metrics-save-days: 7 # Topic指标保存天数

|

||||||

|

topic-request-time-metrics-save-days: 7 # Topic请求耗时指标保存天数

|

||||||

|

topic-throttled-metrics-save-days: 7 # Topic限流指标保存天数

|

||||||

|

app-topic-metrics-save-days: 7 # App+Topic指标保存天数

|

||||||

|

|

||||||

|

thread-pool:

|

||||||

|

collect-metrics:

|

||||||

|

thread-num: 256 # 收集指标线程池大小

|

||||||

|

queue-size: 5000 # 收集指标线程池的queue大小

|

||||||

|

api-call:

|

||||||

|

thread-num: 16 # api服务线程池大小

|

||||||

|

queue-size: 5000 # api服务线程池的queue大小

|

||||||

|

|

||||||

|

client-pool:

|

||||||

|

kafka-consumer:

|

||||||

|

min-idle-client-num: 24 # Minimum number of idle clients

|

||||||

|

max-idle-client-num: 24 # Maximum number of idle clients

|

||||||

|

max-total-client-num: 24 # Maximum total number of clients

|

||||||

|

borrow-timeout-unit-ms: 3000 # Borrow timeout, in milliseconds

|

||||||

|

|

||||||

# LDAP-related configuration

|

|

||||||

account:

|

account:

|

||||||

|

jump-login:

|

||||||

|

gateway-api: false # Gateway API

|

||||||

|

third-part-api: false # Third-party API

|

||||||

ldap:

|

ldap:

|

||||||

enabled: false

|

enabled: false

|

||||||

url: ldap://127.0.0.1:389/

|

url: ldap://127.0.0.1:389/

|

||||||

@@ -70,28 +100,20 @@ account:

|

|||||||

auth-user-registration: true

|

auth-user-registration: true

|

||||||

auth-user-registration-role: normal

|

auth-user-registration-role: normal

|

||||||

|

|

||||||

# Cluster upgrade and deployment features; they require Nightingale (n9e) and S3

|

kcm: # Cluster installation and deployment; installs brokers only

|

||||||

kcm:

|

enabled: false # Whether to enable this feature

|

||||||

enabled: false

|

s3: # S3 storage service

|

||||||

s3:

|

|

||||||

endpoint: s3.didiyunapi.com

|

endpoint: s3.didiyunapi.com

|

||||||

access-key: 1234567890

|

access-key: 1234567890

|

||||||

secret-key: 0987654321

|

secret-key: 0987654321

|

||||||

bucket: logi-kafka

|

bucket: logi-kafka

|

||||||

n9e:

|

n9e: # Nightingale

|

||||||

base-url: http://127.0.0.1:8004

|

base-url: http://127.0.0.1:8004 # Address of the Nightingale job service

|

||||||

user-token: 12345678

|

user-token: 12345678 # The user's token

|

||||||

timeout: 300

|

timeout: 300 # Timeout for the operation on a single machine

|

||||||

account: root

|

account: root # Account used to run the operation

|

||||||

script-file: kcm_script.sh

|

script-file: kcm_script.sh # Script; it ships in the kcm module of the source tree, so this setting does not need to be changed

|

||||||

|

logikm-url: http://127.0.0.1:8080 # LogiKM deployment address; during deployment kcm_script.sh calls LogiKM to check certain states

|

||||||

# Monitoring and alerting features; they require Nightingale (n9e)

|

|

||||||

# enabled: whether monitoring and alerting is enabled; true: enabled, false: disabled

|

|

||||||

# n9e.nid: Nightingale node ID

|

|

||||||

# n9e.user-token: the user's secret key, found in Nightingale's personal settings

|

|

||||||

# n9e.mon.base-url: monitoring address

|

|

||||||

# n9e.sink.base-url: data reporting address

|

|

||||||

# n9e.rdb.base-url: user resource center address

|

|

||||||

|

|

||||||

monitor:

|

monitor:

|

||||||

enabled: false

|

enabled: false

|

||||||

@@ -105,10 +127,9 @@ monitor:

|

|||||||

rdb:

|

rdb:

|

||||||

base-url: http://127.0.0.1:8000 # In Nightingale v4 the default ports were unified to 8000

|

base-url: http://127.0.0.1:8000 # In Nightingale v4 the default ports were unified to 8000

|

||||||

|

|

||||||

|

notify:

|

||||||

notify: # Notification feature

|

kafka:

|

||||||

kafka: # By default, notifications are sent to the specified Kafka Topic

|

cluster-id: 95

|

||||||

cluster-id: 95 # ID of the cluster that holds the Topic

|

topic-name: didi-kafka-notify

|

||||||

topic-name: didi-kafka-notify # Topic name

|

order:

|

||||||

order: # Address of the deployed KM

|

detail-url: http://127.0.0.1

|

||||||

detail-url: http://127.0.0.1

|

|

||||||

|

|||||||

@@ -13,6 +13,9 @@ CREATE TABLE `account` (

|

|||||||

`username` varchar(128) CHARACTER SET utf8 COLLATE utf8_bin NOT NULL DEFAULT '' COMMENT '用户名',

|

`username` varchar(128) CHARACTER SET utf8 COLLATE utf8_bin NOT NULL DEFAULT '' COMMENT '用户名',

|

||||||

`password` varchar(128) NOT NULL DEFAULT '' COMMENT '密码',

|

`password` varchar(128) NOT NULL DEFAULT '' COMMENT '密码',

|

||||||

`role` tinyint(8) NOT NULL DEFAULT '0' COMMENT '角色类型, 0:普通用户 1:研发 2:运维',

|

`role` tinyint(8) NOT NULL DEFAULT '0' COMMENT '角色类型, 0:普通用户 1:研发 2:运维',

|

||||||

|

`department` varchar(256) DEFAULT '' COMMENT '部门名',

|

||||||

|

`display_name` varchar(256) DEFAULT '' COMMENT '用户姓名',

|

||||||

|

`mail` varchar(256) DEFAULT '' COMMENT '邮箱',

|

||||||

`status` int(16) NOT NULL DEFAULT '0' COMMENT '0标识使用中,-1标识已废弃',

|

`status` int(16) NOT NULL DEFAULT '0' COMMENT '0标识使用中,-1标识已废弃',

|

||||||

`gmt_create` timestamp NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '创建时间',

|

`gmt_create` timestamp NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '创建时间',

|

||||||

`gmt_modify` timestamp NOT NULL DEFAULT CURRENT_TIMESTAMP ON UPDATE CURRENT_TIMESTAMP COMMENT '修改时间',

|

`gmt_modify` timestamp NOT NULL DEFAULT CURRENT_TIMESTAMP ON UPDATE CURRENT_TIMESTAMP COMMENT '修改时间',

|

||||||

@@ -588,4 +591,63 @@ CREATE TABLE `work_order` (

|

|||||||

`gmt_create` timestamp NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '创建时间',

|

`gmt_create` timestamp NOT NULL DEFAULT CURRENT_TIMESTAMP COMMENT '创建时间',

|

||||||

`gmt_modify` timestamp NOT NULL DEFAULT CURRENT_TIMESTAMP ON UPDATE CURRENT_TIMESTAMP COMMENT '修改时间',

|

`gmt_modify` timestamp NOT NULL DEFAULT CURRENT_TIMESTAMP ON UPDATE CURRENT_TIMESTAMP COMMENT '修改时间',

|

||||||

PRIMARY KEY (`id`)

|

PRIMARY KEY (`id`)

|

||||||

) ENGINE=InnoDB DEFAULT CHARSET=utf8 COMMENT='工单表';

|

) ENGINE=InnoDB DEFAULT CHARSET=utf8 COMMENT='工单表';

|

||||||

|

|

||||||

|

create table ha_active_standby_relation

|

||||||

|

(

|

||||||

|

id bigint unsigned auto_increment comment 'id'

|

||||||

|

primary key,

|

||||||

|

active_cluster_phy_id bigint default -1 not null comment '主集群ID',

|

||||||

|

active_res_name varchar(192) collate utf8_bin default '' not null comment '主资源名称',

|

||||||

|

standby_cluster_phy_id bigint default -1 not null comment '备集群ID',

|

||||||

|

standby_res_name varchar(192) collate utf8_bin default '' not null comment '备资源名称',

|

||||||

|

res_type int default -1 not null comment '资源类型',

|

||||||

|

status int default -1 not null comment '关系状态',

|

||||||

|

unique_field varchar(1024) default '' not null comment '唯一字段',

|

||||||

|

create_time timestamp default CURRENT_TIMESTAMP not null comment '创建时间',

|

||||||

|

modify_time timestamp default CURRENT_TIMESTAMP not null on update CURRENT_TIMESTAMP comment '修改时间',

|

||||||

|

kafka_status int default 0 null comment '高可用配置是否完全建立 1:Kafka上该主备关系正常,0:Kafka上该主备关系异常',

|

||||||

|

constraint uniq_unique_field

|

||||||

|

unique (unique_field)

|

||||||

|

)

|

||||||

|

comment 'HA主备关系表' charset = utf8;

|

||||||

|

|

||||||

|

create index idx_type_active

|

||||||

|

on ha_active_standby_relation (res_type, active_cluster_phy_id);

|

||||||

|

|

||||||

|

create index idx_type_standby

|

||||||

|

on ha_active_standby_relation (res_type, standby_cluster_phy_id);

|

||||||

|

|

||||||

|

create table ha_active_standby_switch_job

|

||||||

|

(

|

||||||

|

id bigint unsigned auto_increment comment 'id'

|

||||||

|

primary key,

|

||||||

|

active_cluster_phy_id bigint default -1 not null comment '主集群ID',

|

||||||

|

standby_cluster_phy_id bigint default -1 not null comment '备集群ID',

|

||||||

|

job_status int default -1 not null comment '任务状态',

|

||||||

|

operator varchar(256) default '' not null comment '操作人',

|

||||||

|

create_time timestamp default CURRENT_TIMESTAMP not null comment '创建时间',

|

||||||

|

modify_time timestamp default CURRENT_TIMESTAMP not null on update CURRENT_TIMESTAMP comment '修改时间',

|

||||||

|

type int default 5 not null comment '1:topic 2:实例 3:逻辑集群 4:物理集群',

|

||||||

|

active_business_id varchar(100) default '-1' not null comment '主业务id(topicName,实例id,逻辑集群id,物理集群id)',

|

||||||

|

standby_business_id varchar(100) default '-1' not null comment '备业务id(topicName,实例id,逻辑集群id,物理集群id)'

|

||||||

|

)

|

||||||

|

comment 'HA主备关系切换-子任务表' charset = utf8;

|

||||||

|

|

||||||

|

|

||||||

|

create table ha_active_standby_switch_sub_job

|

||||||

|

(

|

||||||

|

id bigint unsigned auto_increment comment 'id'

|

||||||

|

primary key,

|

||||||

|

job_id bigint default -1 not null comment '任务ID',

|

||||||

|

active_cluster_phy_id bigint default -1 not null comment '主集群ID',

|

||||||

|

active_res_name varchar(192) collate utf8_bin default '' not null comment '主资源名称',

|

||||||

|

standby_cluster_phy_id bigint default -1 not null comment '备集群ID',

|

||||||

|

standby_res_name varchar(192) collate utf8_bin default '' not null comment '备资源名称',

|

||||||

|

res_type int default -1 not null comment '资源类型',

|

||||||

|

job_status int default -1 not null comment '任务状态',

|

||||||

|

extend_data text null comment '扩展数据',

|

||||||

|

create_time timestamp default CURRENT_TIMESTAMP not null comment '创建时间',

|

||||||

|

modify_time timestamp default CURRENT_TIMESTAMP not null on update CURRENT_TIMESTAMP comment '修改时间'

|

||||||

|

)

|

||||||

|

comment 'HA主备关系-切换任务表' charset = utf8;

|

||||||

|

|||||||

10

distribution/conf/settings.xml

Normal file


@@ -0,0 +1,10 @@

|

|||||||

|

<settings>

|

||||||

|

<mirrors>

|

||||||

|

<mirror>

|

||||||

|

<id>aliyunmaven</id>

|

||||||

|

<mirrorOf>*</mirrorOf>

|

||||||

|

<name>阿里云公共仓库</name>

|

||||||

|

<url>https://maven.aliyun.com/repository/public</url>

|

||||||

|

</mirror>

|

||||||

|

</mirrors>

|

||||||

|

</settings>

|

||||||

@@ -4,7 +4,6 @@

|

|||||||

> When upgrading from a much older version, run every intermediate SQL change script in order.

|

> When upgrading from a much older version, run every intermediate SQL change script in order.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

**A one-stop platform for `Apache Kafka` cluster metrics monitoring and operations management**

|

**A one-stop platform for `Apache Kafka` cluster metrics monitoring and operations management**

|

||||||

|

|

||||||

@@ -40,4 +39,14 @@ ALTER TABLE `gateway_config`

|

|||||||

ADD COLUMN `description` TEXT NULL COMMENT '描述信息' AFTER `version`;

|

ADD COLUMN `description` TEXT NULL COMMENT '描述信息' AFTER `version`;

|

||||||

```

|

```

|

||||||

|

|

||||||

|

### Upgrading to version `2.6.0`

|

||||||

|

|

||||||

|

#### 1. MySQL changes

|

||||||

|

Version `2.6.0` adds three columns to the `account` table: user display name, department name, and email. Run the SQL below to add them.

|

||||||

|

|

||||||

|

```sql

|

||||||

|

ALTER TABLE `account`

|

||||||

|

ADD COLUMN `display_name` VARCHAR(256) NOT NULL DEFAULT '' COMMENT '用户姓名' AFTER `role`,

|

||||||

|

ADD COLUMN `department` VARCHAR(256) NOT NULL DEFAULT '' COMMENT '部门名' AFTER `display_name`,

|

||||||

|

ADD COLUMN `mail` VARCHAR(256) NOT NULL DEFAULT '' COMMENT '邮箱' AFTER `department`;

|

||||||

|

```

|

||||||

|

|||||||

Binary file not shown.

|

Before Width: | Height: | Size: 20 KiB |

47

docs/dev_guide/LogiKM单元测试和集成测试.md

Normal file


@@ -0,0 +1,47 @@

|

|||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

**A one-stop platform for `Apache Kafka` cluster metrics monitoring and operations management**

|

||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

|

|

||||||

|

# LogiKM Unit Tests and Integration Tests

|

||||||

|

|

||||||

|

## 1. Unit tests

|

||||||

|

### 1.1 Introduction to unit testing

|

||||||

|

Unit testing, also called module testing, verifies the correctness of the smallest unit of software design: the program module.

|

||||||

|

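Its purpose is to check that each program unit correctly implements the module functionality, performance, interfaces, and design constraints laid out in the detailed design,

|

||||||

|

and to uncover the errors that may exist inside each module. Unit test cases are designed starting from the program's internal structure.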

|

||||||

|

Multiple modules can be unit-tested independently and in parallel.

|

||||||

|

|

||||||

|

### 1.2 LogiKM unit testing approach

|

||||||

|

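LogiKM unit tests mainly exercise Service-layer methods: they enumerate a method's possible arguments

|

||||||

|

and check whether each returned result matches the expectation. The unit test base class carries the @SpringBootTest annotation, so the container is started every time the unit test cases run.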
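
The approach described here (enumerate a method's arguments, then compare each result with the expectation) can be sketched as a small table-driven check. This is only an illustration in plain Java, with no Spring container and no test framework; the class `IdentificationRuleCheck` and the method `isValidIdentification` are hypothetical and are not taken from the LogiKM code base.

```java
import java.util.LinkedHashMap;
import java.util.Map;

class IdentificationRuleCheck {

    /** Hypothetical method under test: accepts letters, digits, and underscores only. */
    static boolean isValidIdentification(String value) {
        return value != null && value.matches("[A-Za-z0-9_]+");
    }

    public static void main(String[] args) {
        // Each entry pairs one input with the result we expect for it.
        Map<String, Boolean> cases = new LinkedHashMap<>();
        cases.put("cluster_01", true);
        cases.put("", false);
        cases.put("has-dash", false);
        cases.put("has space", false);

        for (Map.Entry<String, Boolean> c : cases.entrySet()) {
            boolean actual = isValidIdentification(c.getKey());
            if (actual != c.getValue()) {
                throw new AssertionError("input '" + c.getKey() + "': expected "
                        + c.getValue() + " but got " + actual);
            }
        }
        System.out.println("all cases passed");
    }
}
```

In a real LogiKM unit test the method under test would be a Service bean obtained from the container, and the comparison would use the test framework's assertions instead of a hand-written loop.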

|

||||||

|

|

||||||

|

### 1.3 Notes on LogiKM unit tests

|

||||||

|

1. Unit test cases live in the test packages under kafka-manager-core and kafka-manager-extends

|

||||||

|

2. Configuration lives in resources/application.yml, including the database settings used when the unit test cases run

|

||||||

|

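3. When building and packaging the project, add the -DskipTests parameter to skip the test cases, for example by running mvn package -DskipTests on the command line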

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

## 2. Integration tests

|

||||||

|

### 2.1 Introduction to integration testing

|

||||||

|

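Integration testing, also called assembly testing, is a form of black-box testing. It usually builds on unit testing and tests the program modules together in an ordered, incremental fashion.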

|

||||||

|

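It verifies the interfaces between program units or components as they are progressively integrated into larger components, or a whole system, that meet the high-level design.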

|

||||||

|

|

||||||

|

### 2.2 LogiKM integration testing approach

|

||||||

|

LogiKM integration tests mainly send HTTP requests to the Controller-layer endpoints.

|

||||||

|

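The test cases are enumerated to simulate user operations: each one sends an HTTP request to an endpoint and checks whether the result matches the expectation.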

|

||||||

|

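When running integration test cases locally, the @SpringBootTest annotation is not needed (the container does not have to be started for every test run).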
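
The black-box flow described here (send an HTTP request to an endpoint, then compare the response with the expectation) can be sketched with the JDK alone. This is a minimal sketch under stated assumptions, not LogiKM's actual test code: the class `ControllerSmokeCheck`, the `/ping` path, and the response body are hypothetical, and a stub server stands in for a locally started LogiKM instance so that the example runs on its own. It assumes Java 11 or later for `java.net.http`.

```java
import com.sun.net.httpserver.HttpServer;
import java.io.IOException;
import java.io.OutputStream;
import java.net.InetSocketAddress;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.nio.charset.StandardCharsets;

class ControllerSmokeCheck {

    /** Sends a GET request and returns the response (status code and body). */
    static HttpResponse<String> get(String url) throws IOException, InterruptedException {
        HttpClient client = HttpClient.newHttpClient();
        HttpRequest request = HttpRequest.newBuilder(URI.create(url)).GET().build();
        return client.send(request, HttpResponse.BodyHandlers.ofString());
    }

    /** Starts a stub endpoint on a free local port; hypothetical stand-in for a running service. */
    static HttpServer startStub() throws IOException {
        HttpServer server = HttpServer.create(new InetSocketAddress("127.0.0.1", 0), 0);
        server.createContext("/ping", exchange -> {
            byte[] body = "{\"code\":0}".getBytes(StandardCharsets.UTF_8);
            exchange.sendResponseHeaders(200, body.length);
            try (OutputStream out = exchange.getResponseBody()) {
                out.write(body);
            }
        });
        server.start();
        return server;
    }

    public static void main(String[] args) throws Exception {
        HttpServer server = startStub();
        try {
            int port = server.getAddress().getPort();
            HttpResponse<String> response = get("http://127.0.0.1:" + port + "/ping");
            // The "matches the expectation" step: check status code and body.
            if (response.statusCode() != 200 || !response.body().contains("\"code\":0")) {
                throw new AssertionError("unexpected response: " + response.body());
            }
            System.out.println("integration check passed");
        } finally {
            server.stop(0);
        }
    }
}
```

Against a real deployment, the stub would be dropped and the URL would point at the locally started LogiKM project.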

|

||||||

|

|

||||||

|

### 2.3 Notes on LogiKM integration tests

|

||||||

|

1. Integration test cases live in the test package of kafka-manager-web

|

||||||

|

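2. Some endpoints require logging in before an HTTP request can be sent, which is cumbersome; login can be bypassed as described in the tutorial at docs -> user_guide -> call_api_bypass_login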

|

||||||

|

3. Integration test configuration lives in the resources/integrationTest-settings.properties file, including the cluster address, the ZooKeeper address, and so on

|

||||||

|

4. To run the integration test cases, first start the LogiKM project locally

|

||||||

|

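5. When building and packaging the project, add the -DskipTests parameter to skip the test cases, for example by running mvn package -DskipTests on the command line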

|

||||||

Binary file not shown.

|

After Width: | Height: | Size: 785 KiB |

Binary file not shown.

|

After Width: | Height: | Size: 2.5 MiB |

BIN

docs/dev_guide/assets/kcm/kcm_principle.png

Normal file


Binary file not shown.

|

After Width: | Height: | Size: 69 KiB |

@@ -29,6 +29,7 @@

|

|||||||

- `JMX` is misconfigured: see section 2.

|

- `JMX` is misconfigured: see section 2.

|

||||||

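- A firewall or network restriction is in the way: try `telnet` from another machine that has network connectivity to see whether the port can be reached.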

|

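- A firewall or network restriction is in the way: try `telnet` from another machine that has network connectivity to see whether the port can be reached.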

|

||||||

- Username and password authentication is required: see section 3 (authenticated JMX).

|

- Username and password authentication is required: see section 3 (authenticated JMX).

|

||||||

|

- LogiKM and Kafka run on different machines and Kafka's JMX port does not accept connections from other machines: see section 4.

|

||||||

|

|

||||||

|

|

||||||

Example error log:

|

Example error log:

|

||||||

@@ -98,4 +99,9 @@ fi

|

|||||||

SQL example:

|

SQL example:

|

||||||

```sql

|

```sql

|

||||||

UPDATE cluster SET jmx_properties='{ "maxConn": 10, "username": "xxxxx", "password": "xxxx", "openSSL": false }' where id={xxx};

|

UPDATE cluster SET jmx_properties='{ "maxConn": 10, "username": "xxxxx", "password": "xxxx", "openSSL": false }' where id={xxx};

|

||||||

```

|

```

|

||||||

|

### 4. Solution: the JMX port does not accept connections from other machines

|

||||||

|

|

||||||

|

|

||||||

|

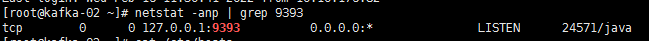

The 127.0.0.1 in this figure shows that the port only accepts connections from the local machine.

|

||||||

|

In CDH, open Configuration -> search for jmx -> find broker_java_opts, then set com.sun.management.jmxremote.host and java.rmi.server.hostname to the machine's own IP address.
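
Whether the JMX port accepts remote connections can be checked with a plain TCP connect, which is essentially what the `telnet` test does. The following is a minimal sketch, not part of LogiKM: the class `JmxPortProbe` and its method are hypothetical names, and the demo in `main` only shows a listener bound to 127.0.0.1, which is reachable from the same machine but would refuse connections arriving from another host.

```java
import java.io.IOException;
import java.net.InetAddress;
import java.net.InetSocketAddress;
import java.net.ServerSocket;
import java.net.Socket;

class JmxPortProbe {

    /** Returns true if a TCP connection to host:port can be opened within timeoutMs. */
    static boolean canConnect(String host, int port, int timeoutMs) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMs);
            return true;
        } catch (IOException e) {
            // Refused, timed out, or unresolvable host: all count as "not reachable".
            return false;
        }
    }

    public static void main(String[] args) throws IOException {
        if (args.length == 2) {
            // Probe a real endpoint: first argument is the broker host, second the JMX port.
            System.out.println(canConnect(args[0], Integer.parseInt(args[1]), 3000));
            return;
        }
        // Self-contained demo: a listener bound to the loopback address only.
        try (ServerSocket listener = new ServerSocket(0, 50, InetAddress.getByName("127.0.0.1"))) {
            int port = listener.getLocalPort();
            System.out.println("reachable via 127.0.0.1: " + canConnect("127.0.0.1", port, 1000));
        }
    }
}
```

Running the probe from the LogiKM host against the broker's address and JMX port gives a quick answer before and after the two properties are changed.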

|

||||||

|

|||||||

89

docs/dev_guide/drawio/KCM实现原理.drawio

Normal file


@@ -0,0 +1,89 @@

|

|||||||

|

<mxfile host="65bd71144e">

|

||||||

|

<diagram id="bhaMuW99Q1BzDTtcfRXp" name="Page-1">

|

||||||

|

<mxGraphModel dx="1138" dy="830" grid="1" gridSize="10" guides="1" tooltips="1" connect="1" arrows="1" fold="1" page="1" pageScale="1" pageWidth="1169" pageHeight="827" math="0" shadow="0">

|

||||||

|

<root>

|

||||||

|

<mxCell id="0"/>

|

||||||

|

<mxCell id="1" parent="0"/>

|

||||||

|

<mxCell id="11" value="待部署Kafka-Broker的机器" style="rounded=0;whiteSpace=wrap;html=1;absoluteArcSize=1;arcSize=14;strokeWidth=1;labelPosition=center;verticalLabelPosition=bottom;align=center;verticalAlign=top;dashed=1;" vertex="1" parent="1">

|

||||||

|

<mxGeometry x="380" y="240" width="320" height="240" as="geometry"/>

|

||||||

|

</mxCell>

|

||||||

|

<mxCell id="24" value="" style="rounded=0;whiteSpace=wrap;html=1;absoluteArcSize=1;arcSize=14;strokeWidth=1;labelPosition=center;verticalLabelPosition=bottom;align=center;verticalAlign=top;dashed=1;fillColor=#eeeeee;strokeColor=#36393d;" vertex="1" parent="1">

|

||||||

|

<mxGeometry x="410" y="310" width="260" height="160" as="geometry"/>

|

||||||

|

</mxCell>

|

||||||

|

<mxCell id="6" style="edgeStyle=none;html=1;entryX=0;entryY=0.5;entryDx=0;entryDy=0;" edge="1" parent="1" source="2" target="3">

|

||||||

|

<mxGeometry relative="1" as="geometry"/>

|

||||||

|

</mxCell>

|

||||||

|

<mxCell id="7" value="调用夜莺接口,<br>创建集群安装部署任务" style="edgeLabel;html=1;align=center;verticalAlign=middle;resizable=0;points=[];" vertex="1" connectable="0" parent="6">

|

||||||

|

<mxGeometry x="-0.0875" y="1" relative="1" as="geometry">

|

||||||

|

<mxPoint x="9" y="1" as="offset"/>

|

||||||

|

</mxGeometry>

|

||||||

|

</mxCell>

|

||||||

|

<mxCell id="9" style="edgeStyle=none;html=1;" edge="1" parent="1" source="2" target="4">

|

||||||

|

<mxGeometry relative="1" as="geometry"/>

|

||||||

|

</mxCell>

|

||||||

|

<mxCell id="10" value="通过版本管理,将Kafka的安装包,<br>server配置上传到s3中" style="edgeLabel;html=1;align=center;verticalAlign=middle;resizable=0;points=[];" vertex="1" connectable="0" parent="9">

|

||||||

|

<mxGeometry x="0.0125" y="2" relative="1" as="geometry">

|

||||||

|

<mxPoint as="offset"/>

|

||||||

|

</mxGeometry>

|

||||||

|

</mxCell>

|

||||||

|

<mxCell id="2" value="LogiKM" style="rounded=0;whiteSpace=wrap;html=1;absoluteArcSize=1;arcSize=14;strokeWidth=1;fillColor=#dae8fc;strokeColor=#6c8ebf;" vertex="1" parent="1">

|

||||||

|

<mxGeometry x="40" y="100" width="120" height="40" as="geometry"/>

|

||||||

|

</mxCell>

|

||||||

|

<mxCell id="12" style="edgeStyle=none;html=1;exitX=0.5;exitY=1;exitDx=0;exitDy=0;entryX=0.5;entryY=0;entryDx=0;entryDy=0;" edge="1" parent="1" source="3" target="5">

|

||||||

|

<mxGeometry relative="1" as="geometry"/>

|

||||||

|

</mxCell>

|

||||||

|

<mxCell id="13" value="1、下发任务脚本(kcm_script.sh);<br>2、下发任务操作命令;" style="edgeLabel;html=1;align=center;verticalAlign=middle;resizable=0;points=[];" vertex="1" connectable="0" parent="12">

|

||||||

|

<mxGeometry x="-0.0731" y="2" relative="1" as="geometry">

|

||||||

|

<mxPoint x="-2" y="-16" as="offset"/>

|

||||||

|

</mxGeometry>

|

||||||

|

</mxCell>

|

||||||

|

<mxCell id="3" value="夜莺——任务中心" style="rounded=0;whiteSpace=wrap;html=1;absoluteArcSize=1;arcSize=14;strokeWidth=1;fillColor=#cdeb8b;strokeColor=#36393d;" vertex="1" parent="1">

|

||||||

|

<mxGeometry x="480" y="100" width="120" height="40" as="geometry"/>

|

||||||

|

</mxCell>

|

||||||

|

<mxCell id="4" value="S3" style="rounded=0;whiteSpace=wrap;html=1;absoluteArcSize=1;arcSize=14;strokeWidth=1;fillColor=#ffe6cc;strokeColor=#d79b00;" vertex="1" parent="1">

|

||||||

|

<mxGeometry x="40" y="310" width="120" height="40" as="geometry"/>

|

||||||

|

</mxCell>

|

||||||

|

<mxCell id="5" value="夜莺——Agent(<font color="#ff3333">代理执行kcm_script.sh脚本</font>)" style="rounded=0;whiteSpace=wrap;html=1;absoluteArcSize=1;arcSize=14;strokeWidth=1;fillColor=#d5e8d4;strokeColor=#82b366;" vertex="1" parent="1">

|

||||||

|

<mxGeometry x="400" y="260" width="280" height="40" as="geometry"/>

|

||||||

|

</mxCell>

|

||||||

|

<mxCell id="22" style="edgeStyle=orthogonalEdgeStyle;html=1;entryX=1;entryY=0.5;entryDx=0;entryDy=0;fontColor=#FF3333;exitX=0;exitY=0.5;exitDx=0;exitDy=0;" edge="1" parent="1" source="14" target="4">

|

||||||

|

<mxGeometry relative="1" as="geometry"/>

|

||||||

|

</mxCell>

|

||||||

|

<mxCell id="25" value="下载安装包" style="edgeLabel;html=1;align=center;verticalAlign=middle;resizable=0;points=[];fontColor=#000000;" vertex="1" connectable="0" parent="22">

|

||||||

|

<mxGeometry x="0.2226" y="-2" relative="1" as="geometry">

|

||||||

|

<mxPoint x="27" y="2" as="offset"/>

|

||||||

|

</mxGeometry>

|

||||||

|

</mxCell>

|

||||||

|

<mxCell id="14" value="执行kcm_script.sh脚本:下载安装包" style="rounded=0;whiteSpace=wrap;html=1;absoluteArcSize=1;arcSize=14;strokeWidth=1;fillColor=#eeeeee;strokeColor=#36393d;" vertex="1" parent="1">

|

||||||

|

<mxGeometry x="425" y="320" width="235" height="20" as="geometry"/>

|

||||||

|

</mxCell>

|

||||||

|

<mxCell id="18" value="执行kcm_script.sh脚本:安装" style="rounded=0;whiteSpace=wrap;html=1;absoluteArcSize=1;arcSize=14;strokeWidth=1;fillColor=#eeeeee;strokeColor=#36393d;" vertex="1" parent="1">

|

||||||

|

<mxGeometry x="425" y="350" width="235" height="20" as="geometry"/>

|

||||||

|

</mxCell>

|

||||||

|

<mxCell id="19" value="执行kcm_script.sh脚本:检查安装结果" style="rounded=0;whiteSpace=wrap;html=1;absoluteArcSize=1;arcSize=14;strokeWidth=1;fillColor=#eeeeee;strokeColor=#36393d;" vertex="1" parent="1">

|

||||||

|

<mxGeometry x="425" y="380" width="235" height="20" as="geometry"/>

|

||||||

|

</mxCell>

|

||||||

|

<mxCell id="23" style="edgeStyle=orthogonalEdgeStyle;html=1;entryX=0.5;entryY=0;entryDx=0;entryDy=0;fontColor=#FF3333;exitX=1;exitY=0.5;exitDx=0;exitDy=0;" edge="1" parent="1" source="20" target="2">

|

||||||

|

<mxGeometry relative="1" as="geometry">

|

||||||

|

<Array as="points">

|

||||||

|

<mxPoint x="770" y="420"/>

|

||||||

|

<mxPoint x="770" y="40"/>

|

||||||

|

<mxPoint x="100" y="40"/>

|

||||||

|

</Array>

|

||||||

|

</mxGeometry>

|

||||||

|

</mxCell>

|

||||||

|

<mxCell id="26" value="检查副本同步状态" style="edgeLabel;html=1;align=center;verticalAlign=middle;resizable=0;points=[];fontColor=#000000;" vertex="1" connectable="0" parent="23">

|

||||||

|

<mxGeometry x="-0.3344" relative="1" as="geometry">

|

||||||

|

<mxPoint as="offset"/>

|

||||||

|

</mxGeometry>

|

||||||

|

</mxCell>

|

||||||

|

<mxCell id="20" value="执行kcm_script.sh脚本:检查副本同步" style="rounded=0;whiteSpace=wrap;html=1;absoluteArcSize=1;arcSize=14;strokeWidth=1;fillColor=#eeeeee;strokeColor=#36393d;" vertex="1" parent="1">

|

||||||

|

<mxGeometry x="425" y="410" width="235" height="20" as="geometry"/>

|

||||||

|

</mxCell>

|

||||||

|

<mxCell id="21" value="执行kcm_script.sh脚本:结束" style="rounded=0;whiteSpace=wrap;html=1;absoluteArcSize=1;arcSize=14;strokeWidth=1;fillColor=#eeeeee;strokeColor=#36393d;" vertex="1" parent="1">

|

||||||

|

<mxGeometry x="425" y="440" width="235" height="20" as="geometry"/>

|

||||||

|

</mxCell>

|

||||||

|

</root>

|

||||||

|

</mxGraphModel>

|

||||||

|

</diagram>

|

||||||

|

</mxfile>

|

||||||

@@ -136,7 +136,8 @@ EXPIRED_TOPIC_CONFIG

|

|||||||

Config value:

|

Config value:

|

||||||

```json

|

```json

|

||||||

{

|

{

|

||||||

"minExpiredDay": 30, # shown only when the expiry time exceeds this value

|

"minExpiredDay": 30, # shown only when the expiry time exceeds this value,

|

||||||

|

"filterRegex": ".*XXX\\s+", # Topics matching this regex are ignored

|

||||||

"ignoreClusterIdList": [ # clusters to ignore

|

"ignoreClusterIdList": [ # clusters to ignore

|

||||||

50

|

50

|

||||||

]

|

]

|

||||||

|

|||||||

@@ -1,27 +0,0 @@

|

|||||||

|

|

||||||

---

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

**A one-stop platform for `Apache Kafka` cluster metrics monitoring and operations management**

|

|

||||||

|

|

||||||

---

|

|

||||||

|

|

||||||

# Upgrading to version `2.2.0`

|

|

||||||

|

|

||||||

Version `2.2.0` adds one column each to the `cluster` and `logical_cluster` tables. Run the SQL below to add them.

|

|

||||||

|

|

||||||

```sql

|

|

||||||

# Add the jmx_properties column to the cluster table; it stores JMX authentication and configuration settings

|

|

||||||

ALTER TABLE `cluster` ADD COLUMN `jmx_properties` TEXT NULL COMMENT 'JMX配置' AFTER `security_properties`;

|

|

||||||

|

|

||||||

# Add the identification column to logical_cluster, fill it with the existing name values, and finally add a unique key.

|

|

||||||

# From then on, name still holds the cluster name, while identification holds the cluster identifier, which may contain only letters, digits, and underscores.

|

|

||||||

# When data is reported to the monitoring system, the cluster is identified by the identification column; previously the name column was used.

|

|

||||||

ALTER TABLE `logical_cluster` ADD COLUMN `identification` VARCHAR(192) NOT NULL DEFAULT '' COMMENT '逻辑集群标识' AFTER `name`;

|

|

||||||

|

|

||||||

UPDATE `logical_cluster` SET `identification`=`name` WHERE id>=0;

|

|

||||||

|

|

||||||

ALTER TABLE `logical_cluster` ADD INDEX `uniq_identification` (`identification` ASC);

|

|

||||||

```

|

|

||||||

|

|

||||||

@@ -1,17 +0,0 @@

|

|||||||

|

|

||||||

---

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

**A one-stop platform for `Apache Kafka` cluster metrics monitoring and operations management**

|

|

||||||

|

|

||||||

---

|

|

||||||

|

|

||||||

# Upgrading to version `2.3.0`

|

|

||||||

|

|

||||||

Version `2.3.0` adds a description column to the `gateway_config` table. Run the SQL below to add it.

|

|

||||||

|

|

||||||

```sql

|

|

||||||