mirror of

https://github.com/didi/KnowStreaming.git

synced 2025-12-25 04:32:12 +08:00

Compare commits

425 Commits

v3.0.0-bet

...

ve_prd

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

66e3da5d2f | ||

|

|

5f2adfe74e | ||

|

|

cecfde906a | ||

|

|

258385dc9a | ||

|

|

65238231f0 | ||

|

|

cb22e02fbe | ||

|

|

aa0bec1206 | ||

|

|

793c780015 | ||

|

|

ec6f063450 | ||

|

|

f25c65b98b | ||

|

|

2d99aae779 | ||

|

|

a8847dc282 | ||

|

|

4852c01c88 | ||

|

|

3d6f405b69 | ||

|

|

18e3fbf41d | ||

|

|

ae8cc3092b | ||

|

|

5c26e8947b | ||

|

|

fbe6945d3b | ||

|

|

7dc8f2dc48 | ||

|

|

91c60ce72c | ||

|

|

687eea80c8 | ||

|

|

9bfe3fd1db | ||

|

|

03f81bc6de | ||

|

|

eed9571ffa | ||

|

|

e4651ef749 | ||

|

|

f715cf7a8d | ||

|

|

fad9ddb9a1 | ||

|

|

b6e4f50849 | ||

|

|

5c6911e398 | ||

|

|

a0371ab88b | ||

|

|

fa2abadc25 | ||

|

|

f03460f3cd | ||

|

|

b5683b73c2 | ||

|

|

c062586c7e | ||

|

|

98a5c7b776 | ||

|

|

e204023b1f | ||

|

|

4c5ffccc45 | ||

|

|

fbcf58e19c | ||

|

|

e5c6d00438 | ||

|

|

ab6a4d7099 | ||

|

|

78b2b8a45e | ||

|

|

add2af4f3f | ||

|

|

235c0ed30e | ||

|

|

5bd93aa478 | ||

|

|

f95be2c1b3 | ||

|

|

5110b30f62 | ||

|

|

861faa5df5 | ||

|

|

efdf624c67 | ||

|

|

caccf9cef5 | ||

|

|

6ba3dceb84 | ||

|

|

9b7c41e804 | ||

|

|

346aee8fe7 | ||

|

|

353d781bca | ||

|

|

3ce4bf231a | ||

|

|

d046cb8bf4 | ||

|

|

da95c63503 | ||

|

|

915e48de22 | ||

|

|

256f770971 | ||

|

|

16e251cbe8 | ||

|

|

67743b859a | ||

|

|

c275b42632 | ||

|

|

a02760417b | ||

|

|

0e50bfc5d4 | ||

|

|

eab988e18f | ||

|

|

dd6004b9d4 | ||

|

|

ac7c32acd5 | ||

|

|

f4a219ceef | ||

|

|

a8b56fb613 | ||

|

|

2925a20e8e | ||

|

|

6b3eb05735 | ||

|

|

17e0c39f83 | ||

|

|

4994639111 | ||

|

|

c187b5246f | ||

|

|

6ed6d5ec8a | ||

|

|

0735b332a8 | ||

|

|

344cec19fe | ||

|

|

6ef365e201 | ||

|

|

edfa6a9f71 | ||

|

|

860d0b92e2 | ||

|

|

5bceed7105 | ||

|

|

44a2fe0398 | ||

|

|

218459ad1b | ||

|

|

7db757bc12 | ||

|

|

896a943587 | ||

|

|

cd2c388e68 | ||

|

|

4543a339b7 | ||

|

|

1c4fbef9f2 | ||

|

|

b2f0f69365 | ||

|

|

c4fb18a73c | ||

|

|

5cad7b4106 | ||

|

|

f3c4133cd2 | ||

|

|

d9c59cb3d3 | ||

|

|

7a0db7161b | ||

|

|

6aefc16fa0 | ||

|

|

186dcd07e0 | ||

|

|

e8652d5db5 | ||

|

|

fb5964af84 | ||

|

|

249fe7c700 | ||

|

|

cc2a590b33 | ||

|

|

5b3f3e5575 | ||

|

|

36cf285397 | ||

|

|

4386563c2c | ||

|

|

0123ce4a5a | ||

|

|

c3d47d3093 | ||

|

|

9735c4f885 | ||

|

|

3a3141a361 | ||

|

|

ac30436324 | ||

|

|

7176e418f5 | ||

|

|

ca794f507e | ||

|

|

0f8be4fadc | ||

|

|

7066246e8f | ||

|

|

7d1bb48b59 | ||

|

|

dd0d519677 | ||

|

|

4293d05fca | ||

|

|

2c82baf9fc | ||

|

|

921161d6d0 | ||

|

|

e632c6c13f | ||

|

|

5833a8644c | ||

|

|

fab41e892f | ||

|

|

7a52cf67b0 | ||

|

|

175b8d643a | ||

|

|

6241eb052a | ||

|

|

c2fd0a8410 | ||

|

|

5127b600ec | ||

|

|

feb03aede6 | ||

|

|

47b6c5d86a | ||

|

|

c4a81613f4 | ||

|

|

daeb5c4cec | ||

|

|

38def45ad6 | ||

|

|

4b29a2fdfd | ||

|

|

a165ecaeef | ||

|

|

6637ba4ccc | ||

|

|

2f807eec2b | ||

|

|

636c2c6a83 | ||

|

|

898a55c703 | ||

|

|

8ffe7e7101 | ||

|

|

7661826ea5 | ||

|

|

e456be91ef | ||

|

|

da0a97cabf | ||

|

|

c1031a492a | ||

|

|

3c8aaf528c | ||

|

|

70ff20a2b0 | ||

|

|

6918f4babe | ||

|

|

805a704d34 | ||

|

|

c69c289bc4 | ||

|

|

dd5869e246 | ||

|

|

b51ffb81a3 | ||

|

|

ed0efd6bd2 | ||

|

|

39d2fe6195 | ||

|

|

7471d05c20 | ||

|

|

3492688733 | ||

|

|

a603783615 | ||

|

|

5c9096d564 | ||

|

|

c27786a257 | ||

|

|

81910d1958 | ||

|

|

55d5fc4bde | ||

|

|

f30586b150 | ||

|

|

37037c19f0 | ||

|

|

1a5e2c7309 | ||

|

|

941dd4fd65 | ||

|

|

5f6df3681c | ||

|

|

7d045dbf05 | ||

|

|

4ff4accdc3 | ||

|

|

bbe967c4a8 | ||

|

|

b101cec6fa | ||

|

|

e98ec562a2 | ||

|

|

0e71ecc587 | ||

|

|

0f11a65df8 | ||

|

|

da00c8c877 | ||

|

|

8b177877bb | ||

|

|

ea199dca8d | ||

|

|

88b5833f77 | ||

|

|

127b5be651 | ||

|

|

80f001cdd5 | ||

|

|

30d297cae1 | ||

|

|

a96853db90 | ||

|

|

c1502152c0 | ||

|

|

afda292796 | ||

|

|

163cab78ae | ||

|

|

8f4ff36c09 | ||

|

|

47b6b3577a | ||

|

|

f3eca3b214 | ||

|

|

62f7d3f72f | ||

|

|

26e60d8a64 | ||

|

|

df655a250c | ||

|

|

811fc9b400 | ||

|

|

83df02783c | ||

|

|

6a5efce874 | ||

|

|

fa0ae5e474 | ||

|

|

cafd665a2d | ||

|

|

e8f77a456b | ||

|

|

4510c62ebd | ||

|

|

79864955e1 | ||

|

|

ff26a8d46c | ||

|

|

cc226d552e | ||

|

|

962f89475b | ||

|

|

ec204a1605 | ||

|

|

58d7623938 | ||

|

|

8f4ecfcdc0 | ||

|

|

ef719cedbc | ||

|

|

b7856c892b | ||

|

|

7435a78883 | ||

|

|

f49206b316 | ||

|

|

7d500a0721 | ||

|

|

98a519f20b | ||

|

|

39b655bb43 | ||

|

|

78d56a49fe | ||

|

|

d2e9d1fa01 | ||

|

|

41ff914dc3 | ||

|

|

3ba447fac2 | ||

|

|

e9cc380a2e | ||

|

|

017cac9bbe | ||

|

|

9ad72694af | ||

|

|

e8f9821870 | ||

|

|

bb167b9f8d | ||

|

|

28fbb5e130 | ||

|

|

16101e81e8 | ||

|

|

aced504d2a | ||

|

|

abb064d9d1 | ||

|

|

dc1899a1cd | ||

|

|

442f34278c | ||

|

|

a6dcbcd35b | ||

|

|

2b600e96eb | ||

|

|

177bb80f31 | ||

|

|

63fbe728c4 | ||

|

|

b33020840b | ||

|

|

c5caf7c0d6 | ||

|

|

0f0473db4c | ||

|

|

beadde3e06 | ||

|

|

a423a20480 | ||

|

|

79f0a23813 | ||

|

|

780fdea2cc | ||

|

|

1c0fda1adf | ||

|

|

9cf13e9b30 | ||

|

|

87cd058fd8 | ||

|

|

81b1ec48c2 | ||

|

|

66dd82f4fd | ||

|

|

ce35b23911 | ||

|

|

e79342acf5 | ||

|

|

3fc9f39d24 | ||

|

|

0221fb3a4a | ||

|

|

f009f8b7ba | ||

|

|

b76959431a | ||

|

|

975370b593 | ||

|

|

7275030971 | ||

|

|

99b0be5a95 | ||

|

|

edd3f95fc4 | ||

|

|

479f983b09 | ||

|

|

7650332252 | ||

|

|

8f1a021851 | ||

|

|

ce4df4d5fd | ||

|

|

bd43ae1b5d | ||

|

|

8fa34116b9 | ||

|

|

7e92553017 | ||

|

|

b7e243a693 | ||

|

|

35d4888afb | ||

|

|

b3e8a4f0f6 | ||

|

|

321125caee | ||

|

|

e01427aa4f | ||

|

|

14652e7f7a | ||

|

|

7c05899dbd | ||

|

|

56726b703f | ||

|

|

6237b0182f | ||

|

|

be5b662f65 | ||

|

|

224698355c | ||

|

|

8f47138ecd | ||

|

|

d159746391 | ||

|

|

63df93ea5e | ||

|

|

38948c0daa | ||

|

|

6c610427b6 | ||

|

|

b4cc31c459 | ||

|

|

7d781712c9 | ||

|

|

dd61ce9b2a | ||

|

|

69a7212986 | ||

|

|

ff05a951fd | ||

|

|

89d5357b40 | ||

|

|

7ca3d65c42 | ||

|

|

7b5c2d800f | ||

|

|

f414b47a78 | ||

|

|

44f4e2f0f9 | ||

|

|

2361008bdf | ||

|

|

7377ef3ec5 | ||

|

|

a28d064b7a | ||

|

|

e2e57e8575 | ||

|

|

9d90bd2835 | ||

|

|

7445e68df4 | ||

|

|

ab42625ad2 | ||

|

|

18789a0a53 | ||

|

|

68a37bb56a | ||

|

|

3b33652c47 | ||

|

|

1e0c4c3904 | ||

|

|

04e223de16 | ||

|

|

c4a691aa8a | ||

|

|

ff9dde163a | ||

|

|

eb7efbd1a5 | ||

|

|

8c8c362c54 | ||

|

|

66e119ad5d | ||

|

|

6dedc04a05 | ||

|

|

0cf8bad0df | ||

|

|

95c9582d8b | ||

|

|

7815126ff5 | ||

|

|

a5fa9de54b | ||

|

|

95f1a2c630 | ||

|

|

1e256ae1fd | ||

|

|

9fc9c54fa1 | ||

|

|

1b362b1e02 | ||

|

|

04e3172cca | ||

|

|

1caab7f3f7 | ||

|

|

9d33c725ad | ||

|

|

6ed1d38106 | ||

|

|

0f07ddedaf | ||

|

|

289945b471 | ||

|

|

f331a6d144 | ||

|

|

0c8c12a651 | ||

|

|

028c3bb2fa | ||

|

|

d7a5a0d405 | ||

|

|

5ef5f6e531 | ||

|

|

1d205734b3 | ||

|

|

5edd43884f | ||

|

|

c1992373bc | ||

|

|

ed562f9c8a | ||

|

|

b4d44ef8c7 | ||

|

|

ad0c16a1b4 | ||

|

|

7eabe66853 | ||

|

|

3983d73695 | ||

|

|

161d4c4562 | ||

|

|

9a1e89564e | ||

|

|

0c18c5b4f6 | ||

|

|

3e12ba34f7 | ||

|

|

e71e29391b | ||

|

|

9b7b9a7af0 | ||

|

|

a23819c308 | ||

|

|

6cb1825d96 | ||

|

|

77b8c758dc | ||

|

|

e5a582cfad | ||

|

|

ec83db267e | ||

|

|

bfd026cae7 | ||

|

|

35f1dd8082 | ||

|

|

7ed0e7dd23 | ||

|

|

1a3cbf7a9d | ||

|

|

d9e4abc3de | ||

|

|

a4186085d3 | ||

|

|

26b1846bb4 | ||

|

|

1aa89527a6 | ||

|

|

eac76d7ad0 | ||

|

|

cea0cd56f6 | ||

|

|

c4b897f282 | ||

|

|

47389dbabb | ||

|

|

a2f8b1a851 | ||

|

|

feac0a058f | ||

|

|

27eeac9fd4 | ||

|

|

a14db4b194 | ||

|

|

54ee271a47 | ||

|

|

a3a9be4f7f | ||

|

|

d4f0a832f3 | ||

|

|

7dc533372c | ||

|

|

1737d87713 | ||

|

|

dbb98dea11 | ||

|

|

802b382b36 | ||

|

|

fc82999d45 | ||

|

|

08aa000c07 | ||

|

|

39015b5100 | ||

|

|

0d635ad419 | ||

|

|

9133205915 | ||

|

|

725ac10c3d | ||

|

|

2b76358c8f | ||

|

|

833c360698 | ||

|

|

7da1e67b01 | ||

|

|

7eb86a47dd | ||

|

|

d67e383c28 | ||

|

|

8749d3e1f5 | ||

|

|

30fba21c48 | ||

|

|

d83d35aee9 | ||

|

|

1d3caeea7d | ||

|

|

c8806dbb4d | ||

|

|

e5802c7f50 | ||

|

|

590f684d66 | ||

|

|

8e5a67f565 | ||

|

|

8d2fbce11e | ||

|

|

26916f6632 | ||

|

|

fbfa0d2d2a | ||

|

|

e626b99090 | ||

|

|

203859b71b | ||

|

|

9a25c22f3a | ||

|

|

0a03f41a7c | ||

|

|

56191939c8 | ||

|

|

beb754aaaa | ||

|

|

f234f740ca | ||

|

|

e14679694c | ||

|

|

e06712397e | ||

|

|

b6c6df7ffc | ||

|

|

375c6f56c9 | ||

|

|

0bf85c97b5 | ||

|

|

630e582321 | ||

|

|

a89fe23bdd | ||

|

|

a7a5fa9a31 | ||

|

|

c73a7eee2f | ||

|

|

121f8468d5 | ||

|

|

7b0b6936e0 | ||

|

|

597ea04a96 | ||

|

|

f7f90aeaaa | ||

|

|

227479f695 | ||

|

|

6477fb3fe0 | ||

|

|

4223f4f3c4 | ||

|

|

7288874d72 | ||

|

|

68f76f2daf | ||

|

|

fe6ddebc49 | ||

|

|

12b5acd073 | ||

|

|

a6f1fe07b3 | ||

|

|

85e3f2a946 | ||

|

|

d4f416de14 | ||

|

|

0d9a6702c1 | ||

|

|

d11285cdbf | ||

|

|

5f1f33d2b9 | ||

|

|

474daf752d | ||

|

|

27d1b92690 | ||

|

|

792f8d939d | ||

|

|

0c14c641d0 | ||

|

|

61efdf492f | ||

|

|

405e6e0c1d | ||

|

|

0d227aef49 | ||

|

|

0e49002f42 | ||

|

|

8e50d145d5 | ||

|

|

0f35427645 | ||

|

|

fa7ad64140 |

51

.github/ISSUE_TEMPLATE/bug_report.md

vendored

Normal file

@@ -0,0 +1,51 @@

---
name: Report a bug
about: Report a bug in KnowStreaming
title: ''
labels: bug
assignees: ''

---

- [ ] I have searched the [issues](https://github.com/didi/KnowStreaming/issues) and found no duplicate.

Would you like to claim this bug?

「 Y / N 」

### Environment

* KnowStreaming version : <font size=4 color =red> xxx </font>
* Operating System version : <font size=4 color =red> xxx </font>
* Java version : <font size=4 color =red> xxx </font>


### Steps to reproduce

1. xxx


2. xxx


3. xxx



### Expected result

<!-- What did you expect to happen? -->

### Actual result

<!-- What actually happened? -->


---

If an exception was thrown, please attach the stack trace:

```
Just put your stack trace here!
```

8

.github/ISSUE_TEMPLATE/config.yml

vendored

Normal file

@@ -0,0 +1,8 @@

blank_issues_enabled: true
contact_links:
  - name: Discussions
    url: https://github.com/didi/KnowStreaming/discussions/new
    about: Ask questions, start discussions, and so on
  - name: KnowStreaming website
    url: https://knowstreaming.com/
    about: KnowStreaming website

26

.github/ISSUE_TEMPLATE/detail_optimizing.md

vendored

Normal file

@@ -0,0 +1,26 @@

---
name: Optimization suggestion
about: Suggest an optimization to an existing feature
title: ''
labels: Optimization Suggestions
assignees: ''

---

- [ ] I have searched the [issues](https://github.com/didi/KnowStreaming/issues) and found no duplicate.

Would you like to claim this optimization suggestion?

「 Y / N 」

### Environment

* KnowStreaming version : <font size=4 color =red> xxx </font>
* Operating System version : <font size=4 color =red> xxx </font>
* Java version : <font size=4 color =red> xxx </font>

### Feature to optimize


### Suggested optimization


20

.github/ISSUE_TEMPLATE/feature_request.md

vendored

Normal file

@@ -0,0 +1,20 @@

---
name: Propose a new feature/requirement
about: Submit a feature request for KnowStreaming
title: ''
labels: feature
assignees: ''

---

- [ ] I searched the [issues](https://github.com/didi/KnowStreaming/issues) and found no related feature request.
- [ ] I searched the [release notes](https://github.com/didi/KnowStreaming/releases) and found no related feature in any published version.

Would you like to claim this feature?

「 Y / N 」


## Describe the requirement here
<!-- Please describe your requirement as clearly as possible -->


12

.github/ISSUE_TEMPLATE/question.md

vendored

Normal file

@@ -0,0 +1,12 @@

---
name: Ask a question
about: Ask a question about KnowStreaming
title: ''
labels: question
assignees: ''

---

- [ ] I have searched the [issues](https://github.com/didi/KnowStreaming/issues) and found no duplicate.

## Ask your question here

23

.github/PULL_REQUEST_TEMPLATE.md

vendored

Normal file

@@ -0,0 +1,23 @@

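Please do not create a Pull Request without first creating an Issue.

## What is the purpose of the change

XXXXX

## Brief changelog

XX

## Verifying this change

XXXX

Follow this checklist to help us incorporate your contribution quickly and easily:

* [ ] Each PR (short for Pull Request) addresses exactly one problem; do not resolve multiple problems in a single PR;
* [ ] Make sure the PR has a corresponding Issue (usually created before you start working on it); trivial changes such as typo fixes do not need one;
* [ ] Format the title and body of the PR and the commit log, for example #861. Note: the commit log is written when you run Git Commit and cannot be changed on GitHub;
* [ ] Write a PR description detailed enough to explain what the PR does, how, and why;
* [ ] Write the necessary unit tests to verify your logic correction. If you submit a new feature or a major change, remember to add an integration-test in the test module;
* [ ] Make sure compilation passes and integration tests pass;
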

6

.gitignore

vendored

@@ -109,4 +109,8 @@ out/*

dist/
dist/*
km-rest/src/main/resources/templates/
*dependency-reduced-pom*
*dependency-reduced-pom*
#filter flattened xml
*/.flattened-pom.xml
.flattened-pom.xml
*/*/.flattened-pom.xml

@@ -1,28 +0,0 @@

# Contribution Guideline

Thank you for considering contributing to this project. All issues and pull requests are highly appreciated.

## Pull Requests

Before sending a pull request to this project, please read and follow the guidelines below.

1. Branch: We only accept pull requests on the `dev` branch.
2. Coding style: Follow the coding style used in LogiKM.
3. Commit message: Use English and check your spelling.
4. Test: Make sure to test your code.

Add the device model, API version, related logs, screenshots, and other relevant information to your pull request if possible.

NOTE: We assume all your contributions can be licensed under the [Apache License 2.0](LICENSE).

## Issues

We love clearly described issues. :)

The following information can help us resolve the issue faster.

* Device model and hardware information.
* API version.
* Logs.
* Screenshots.
* Steps to reproduce the issue.

BIN

KS-PRD-3.0-beta1.docx

Normal file

Binary file not shown.

BIN

KS-PRD-3.0-beta2.docx

Normal file

Binary file not shown.

BIN

KS-PRD-3.1-ZK.docx

Normal file

Binary file not shown.

BIN

KS-PRD-3.2-Connect.docx

Normal file

Binary file not shown.

BIN

KS-PRD-3.3-MM2.docx

Normal file

Binary file not shown.

139

README.md

@@ -1,139 +0,0 @@

|

||||

|

||||

<p align="center">

|

||||

<img src="https://user-images.githubusercontent.com/71620349/185368586-aed82d30-1534-453d-86ff-ecfa9d0f35bd.png" width = "256" div align=center />

|

||||

|

||||

</p>

|

||||

|

||||

<p align="center">

|

||||

<a href="https://knowstreaming.com">产品官网</a> |

|

||||

<a href="https://github.com/didi/KnowStreaming/releases">下载地址</a> |

|

||||

<a href="https://doc.knowstreaming.com/product">文档资源</a> |

|

||||

<a href="https://demo.knowstreaming.com">体验环境</a>

|

||||

</p>

|

||||

|

||||

<p align="center">

|

||||

<!--最近一次提交时间-->

|

||||

<a href="https://img.shields.io/github/last-commit/didi/KnowStreaming">

|

||||

<img src="https://img.shields.io/github/last-commit/didi/KnowStreaming" alt="LastCommit">

|

||||

</a>

|

||||

|

||||

<!--最新版本-->

|

||||

<a href="https://github.com/didi/KnowStreaming/blob/master/LICENSE">

|

||||

<img src="https://img.shields.io/github/v/release/didi/KnowStreaming" alt="License">

|

||||

</a>

|

||||

|

||||

<!--License信息-->

|

||||

<a href="https://github.com/didi/KnowStreaming/blob/master/LICENSE">

|

||||

<img src="https://img.shields.io/github/license/didi/KnowStreaming" alt="License">

|

||||

</a>

|

||||

|

||||

<!--Open-Issue-->

|

||||

<a href="https://github.com/didi/KnowStreaming/issues">

|

||||

<img src="https://img.shields.io/github/issues-raw/didi/KnowStreaming" alt="Issues">

|

||||

</a>

|

||||

|

||||

<!--知识星球-->

|

||||

<a href="https://z.didi.cn/5gSF9">

|

||||

<img src="https://img.shields.io/badge/join-%E7%9F%A5%E8%AF%86%E6%98%9F%E7%90%83-red" alt="Slack">

|

||||

</a>

|

||||

|

||||

</p>

|

||||

|

||||

|

||||

---

|

||||

|

||||

|

||||

## `Know Streaming` 简介

|

||||

|

||||

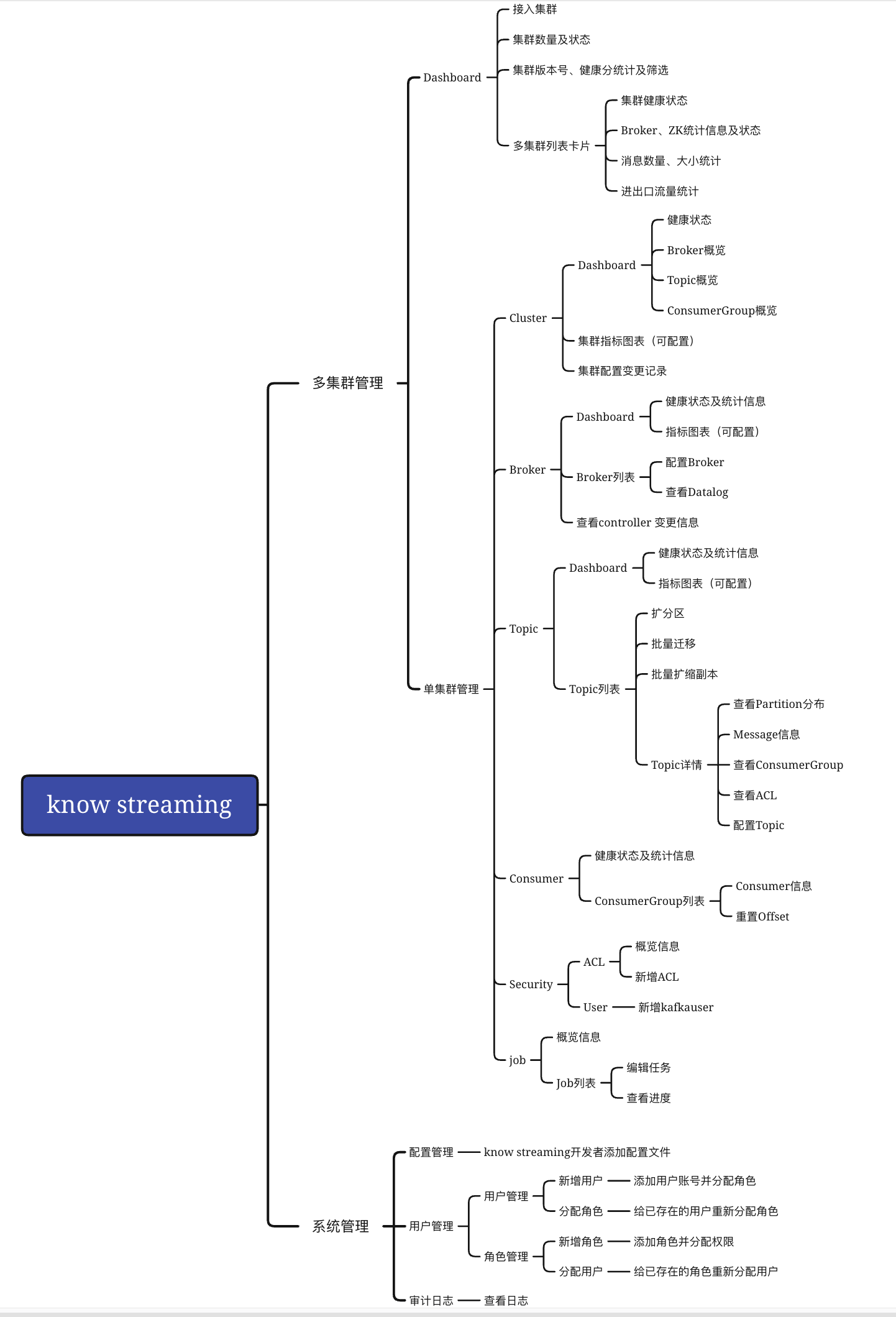

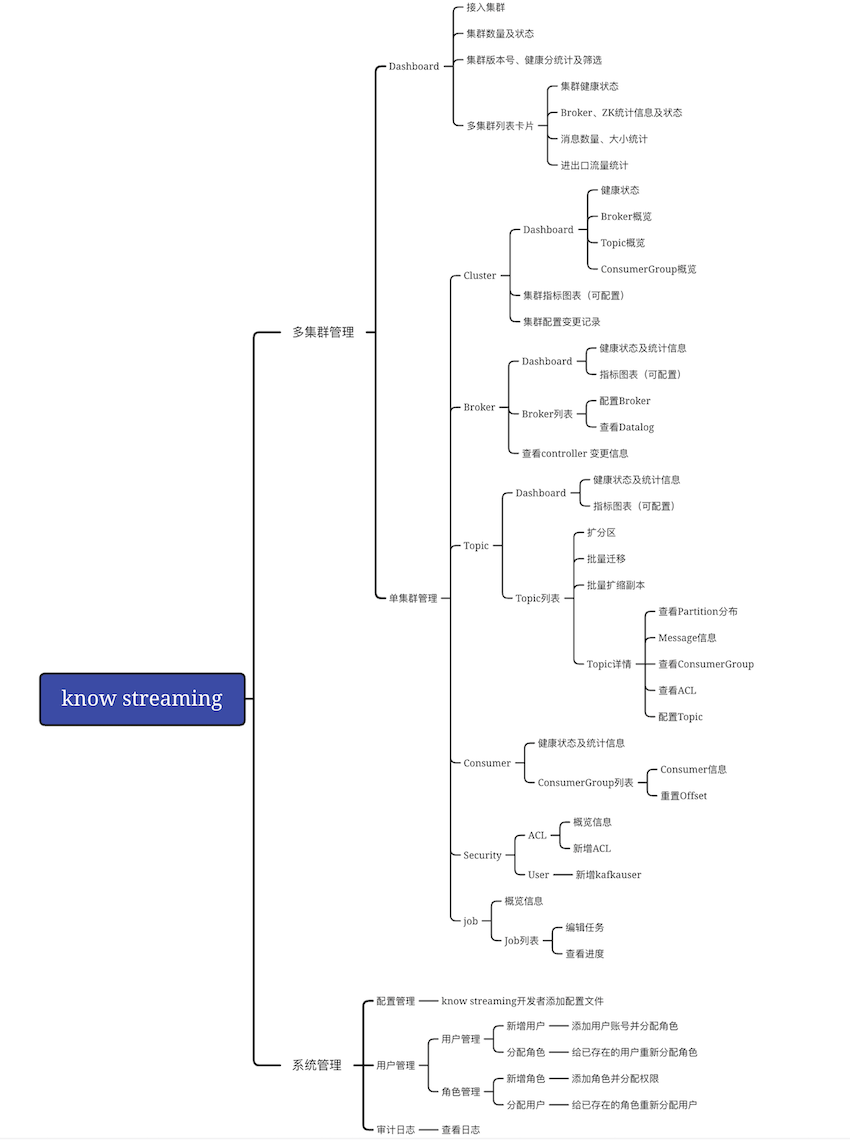

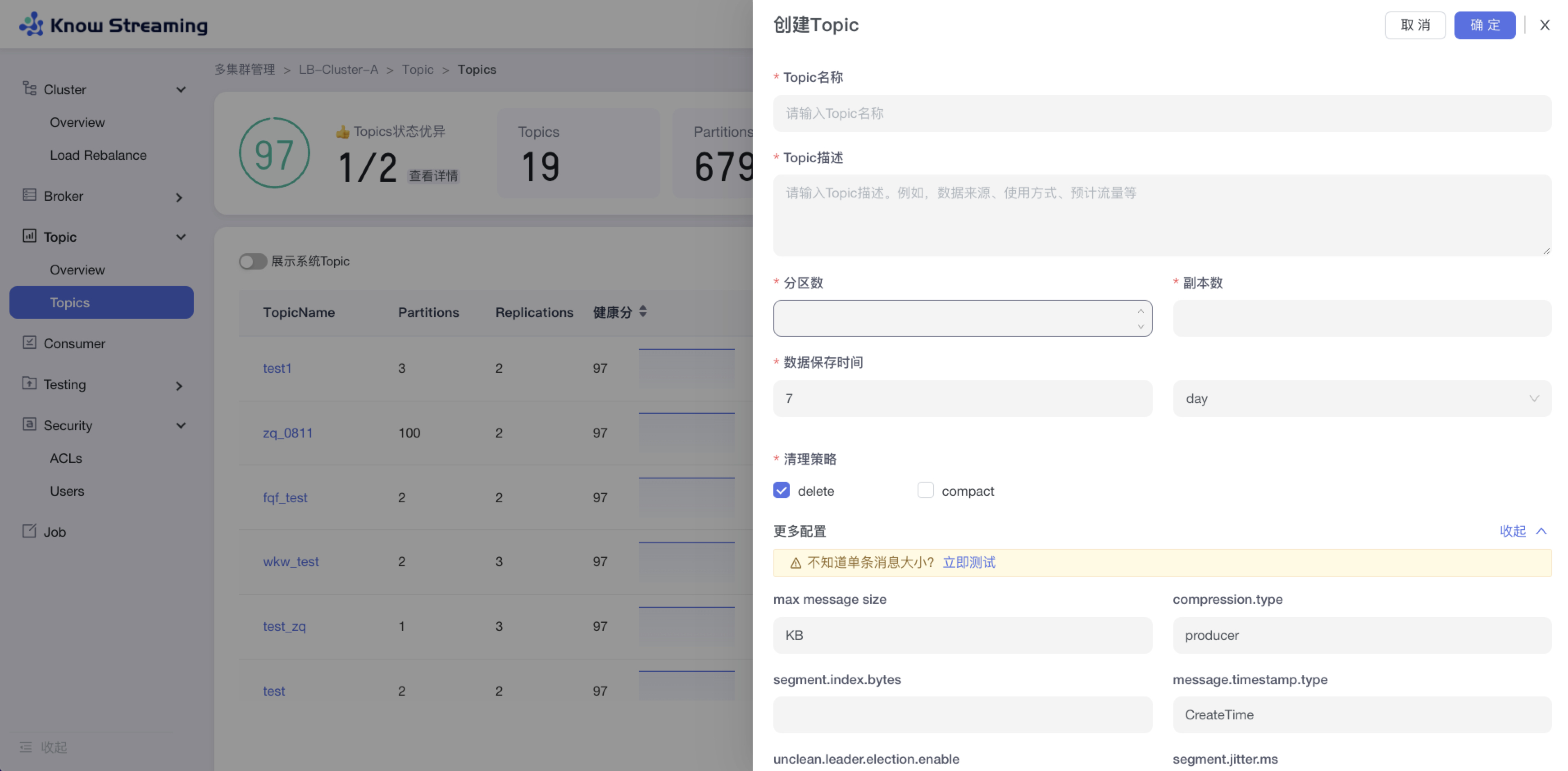

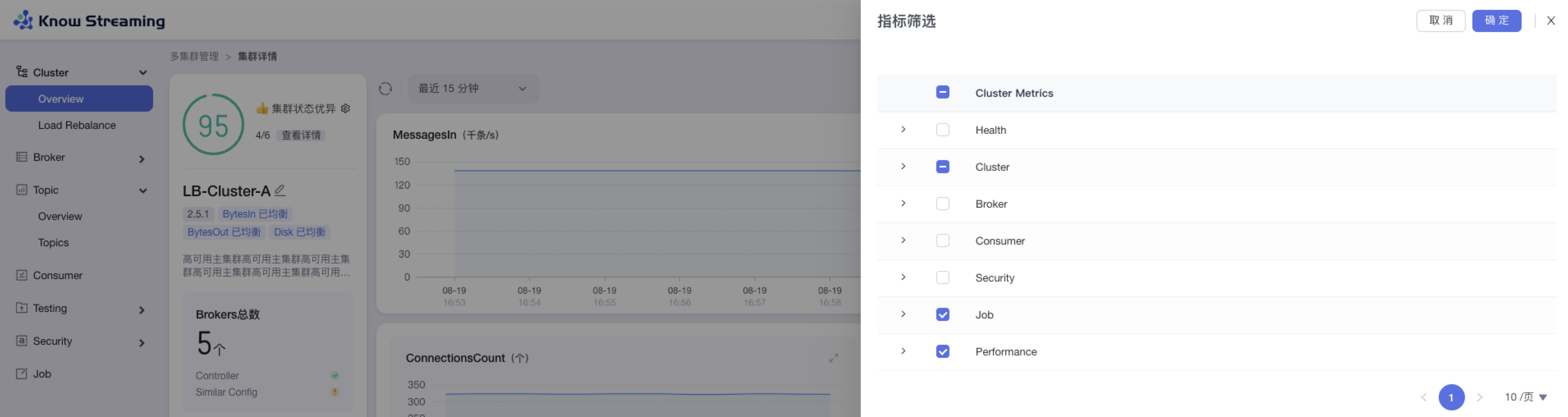

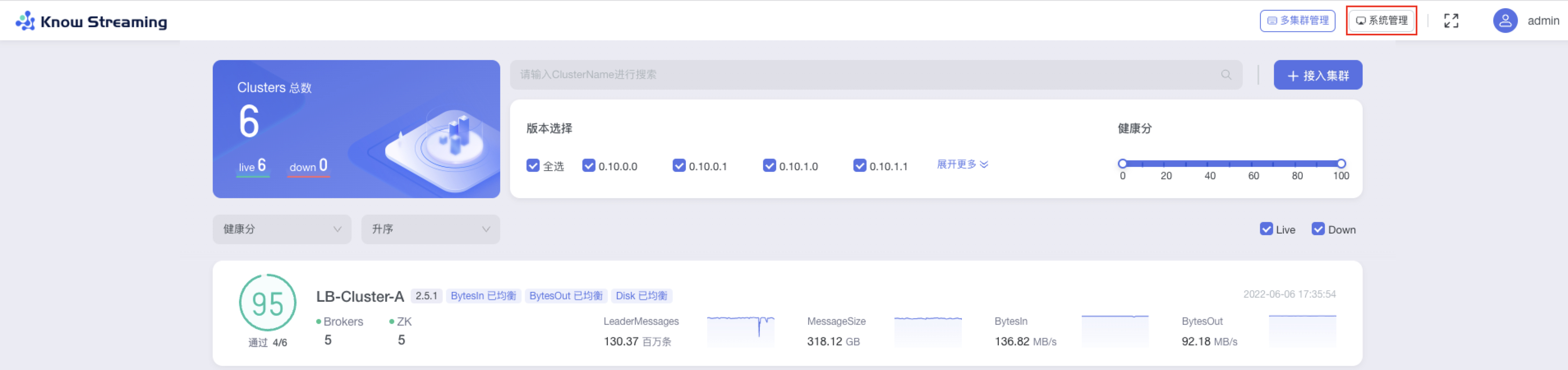

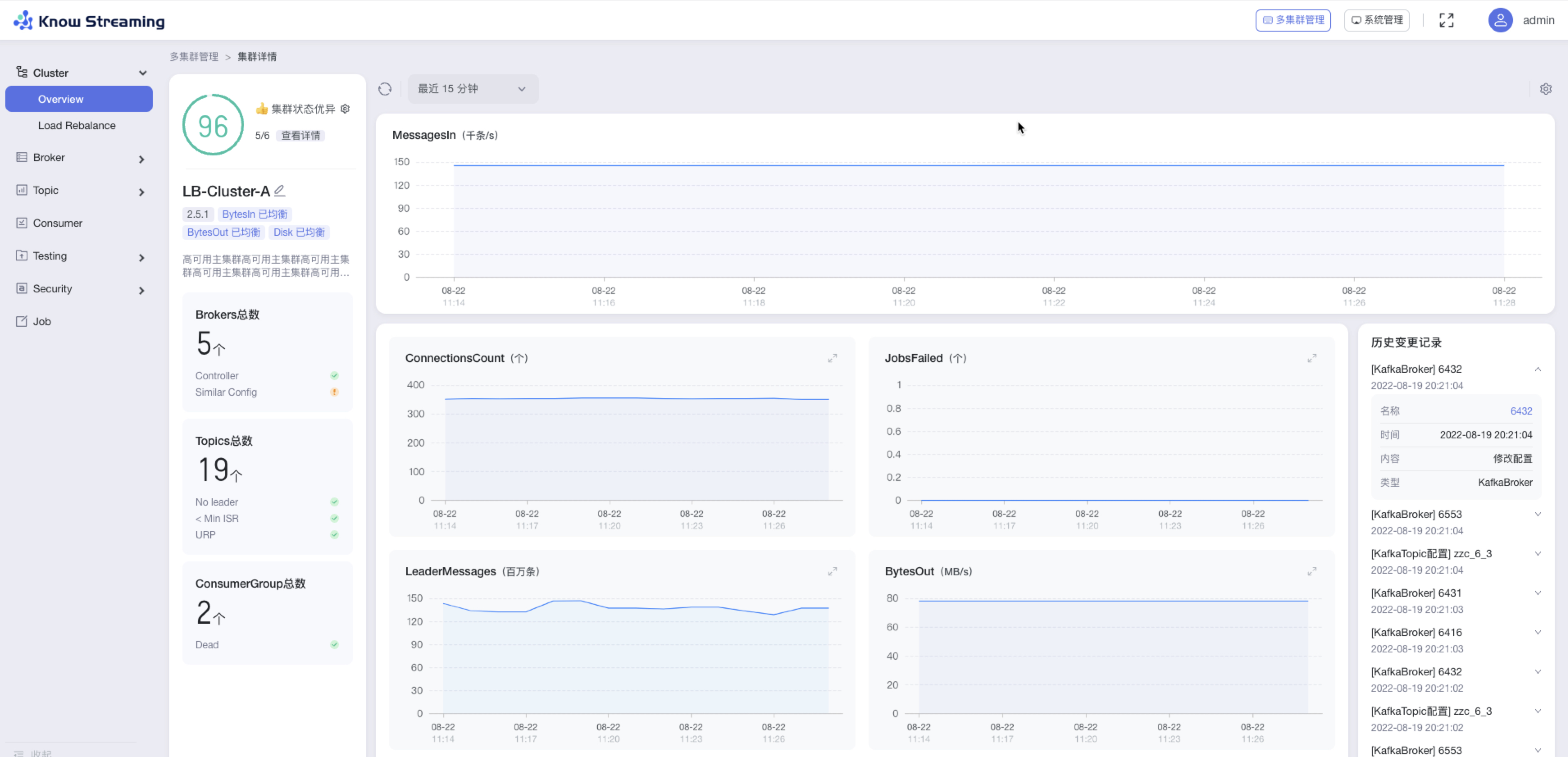

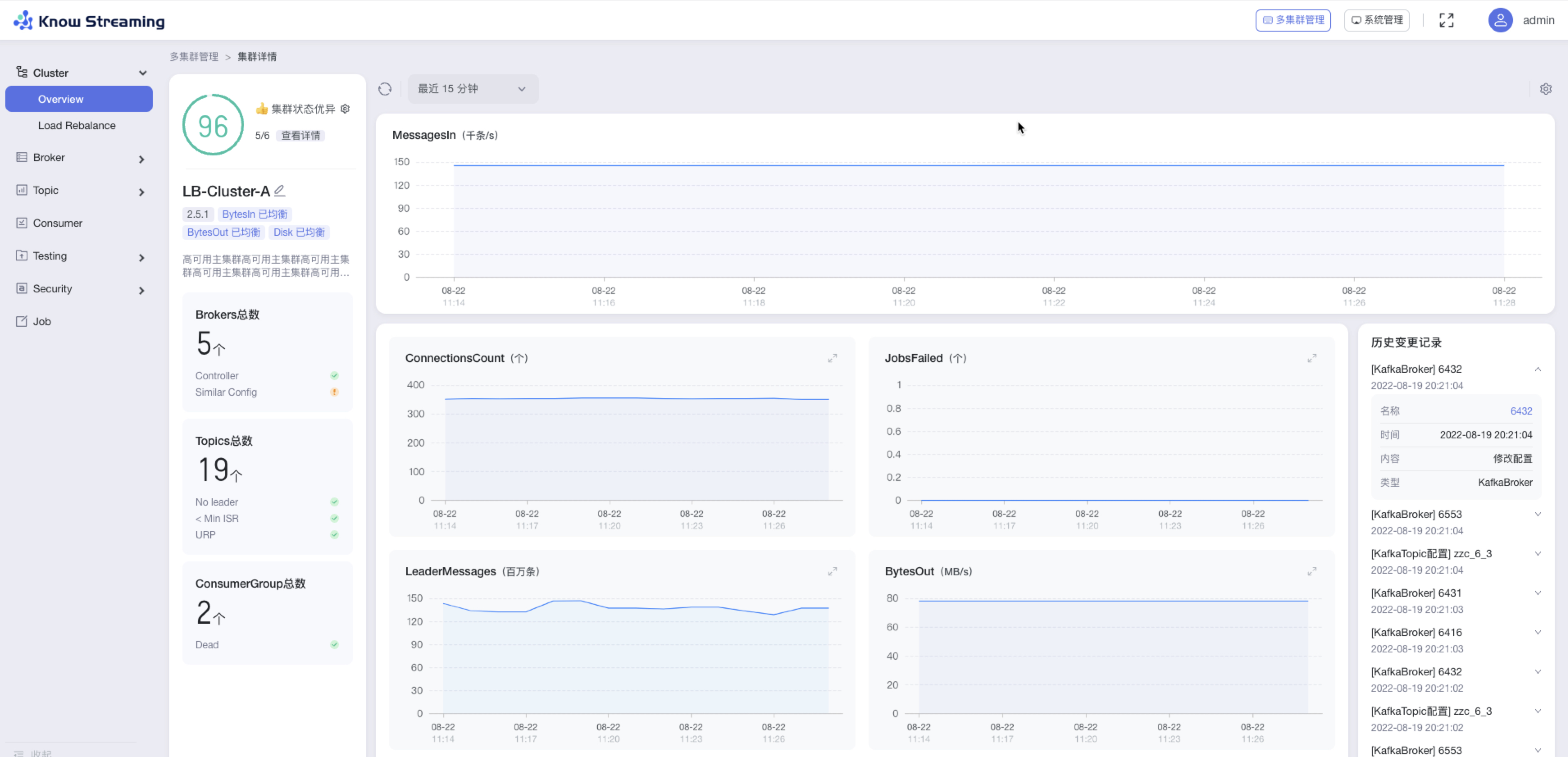

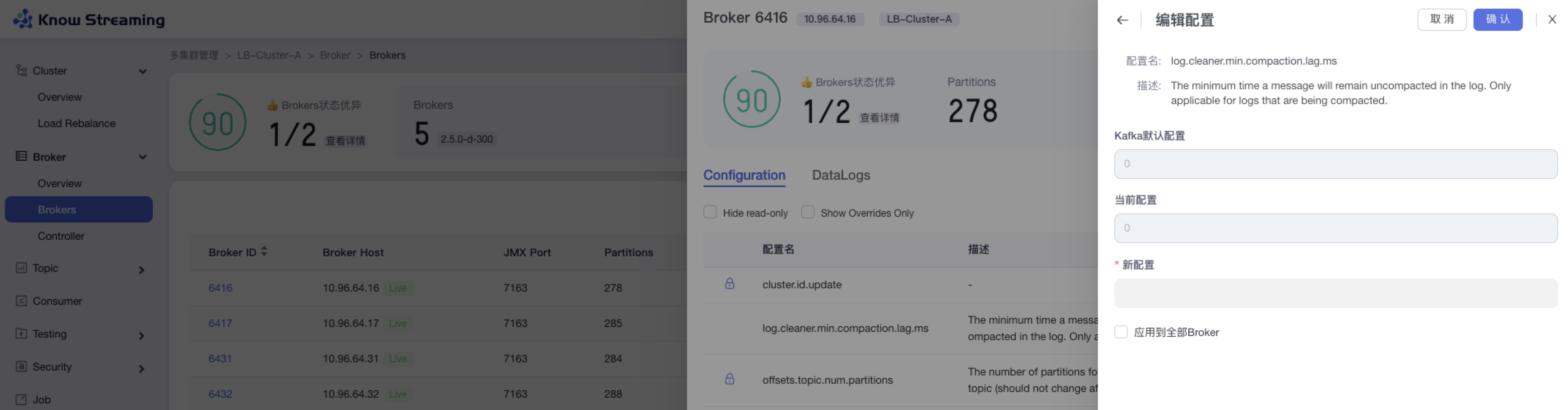

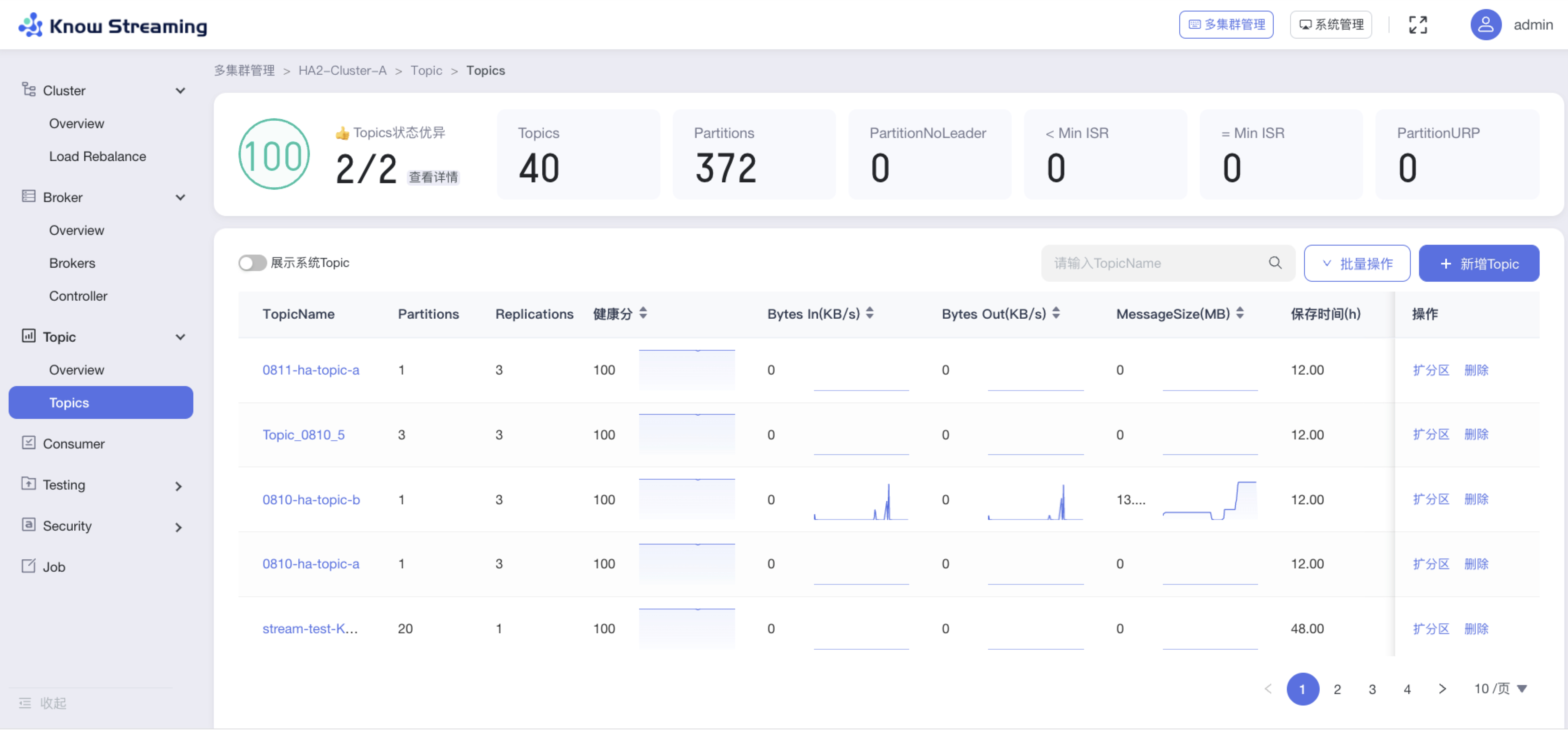

`Know Streaming`是一套云原生的Kafka管控平台,脱胎于众多互联网内部多年的Kafka运营实践经验,专注于Kafka运维管控、监控告警、资源治理、多活容灾等核心场景。在用户体验、监控、运维管控上进行了平台化、可视化、智能化的建设,提供一系列特色的功能,极大地方便了用户和运维人员的日常使用,让普通运维人员都能成为Kafka专家。整体具有以下特点:

|

||||

|

||||

- 👀 **零侵入、全覆盖**

|

||||

- 无需侵入改造 `Apache Kafka` ,一键便能纳管 `0.10.x` ~ `3.x.x` 众多版本的Kafka,包括 `ZK` 或 `Raft` 运行模式的版本,同时在兼容架构上具备良好的扩展性,帮助您提升集群管理水平;

|

||||

|

||||

- 🌪️ **零成本、界面化**

|

||||

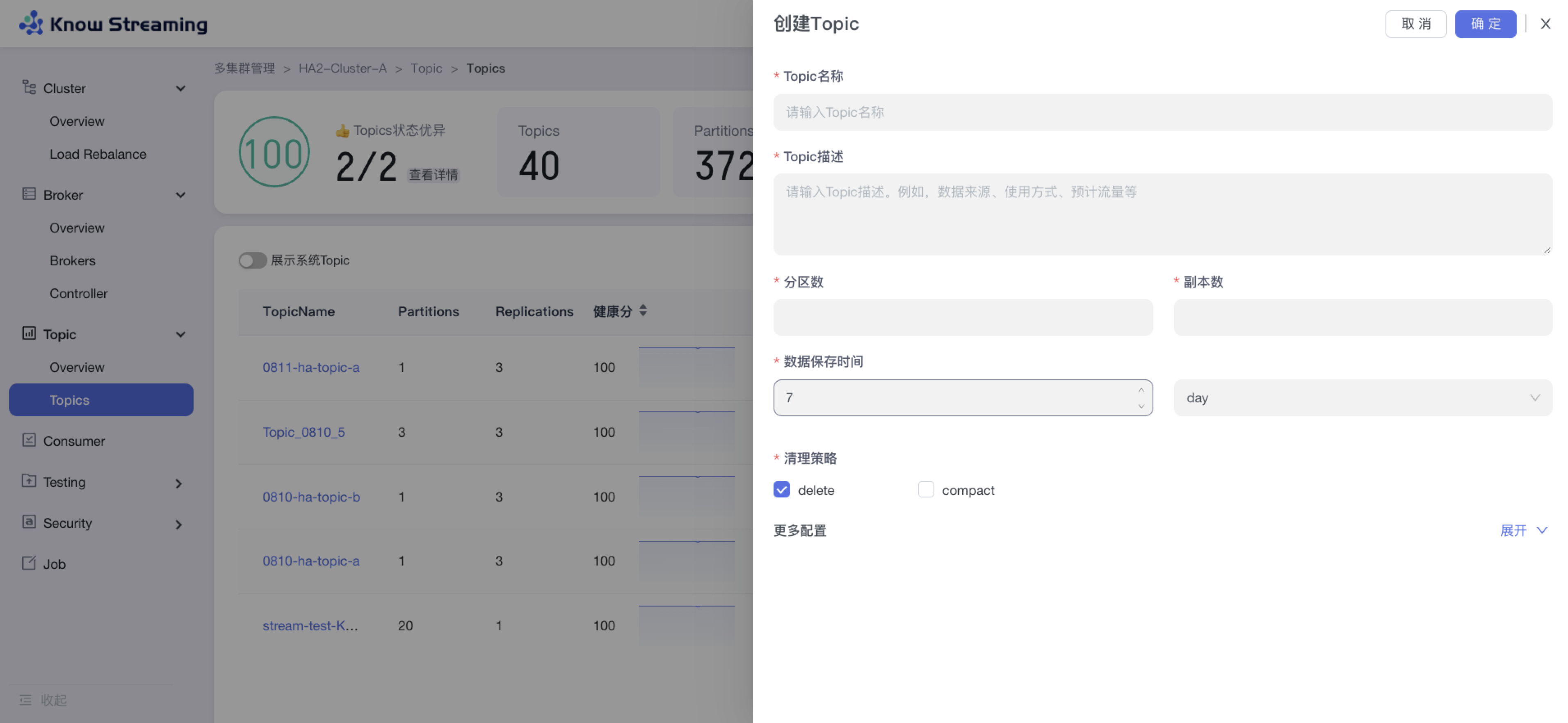

- 提炼高频 CLI 能力,设计合理的产品路径,提供清新美观的 GUI 界面,支持 Cluster、Broker、Topic、Group、Message、ACL 等组件 GUI 管理,普通用户5分钟即可上手;

|

||||

|

||||

- 👏 **云原生、插件化**

|

||||

- 基于云原生构建,具备水平扩展能力,只需要增加节点即可获取更强的采集及对外服务能力,提供众多可热插拔的企业级特性,覆盖可观测性生态整合、资源治理、多活容灾等核心场景;

|

||||

|

||||

- 🚀 **专业能力**

|

||||

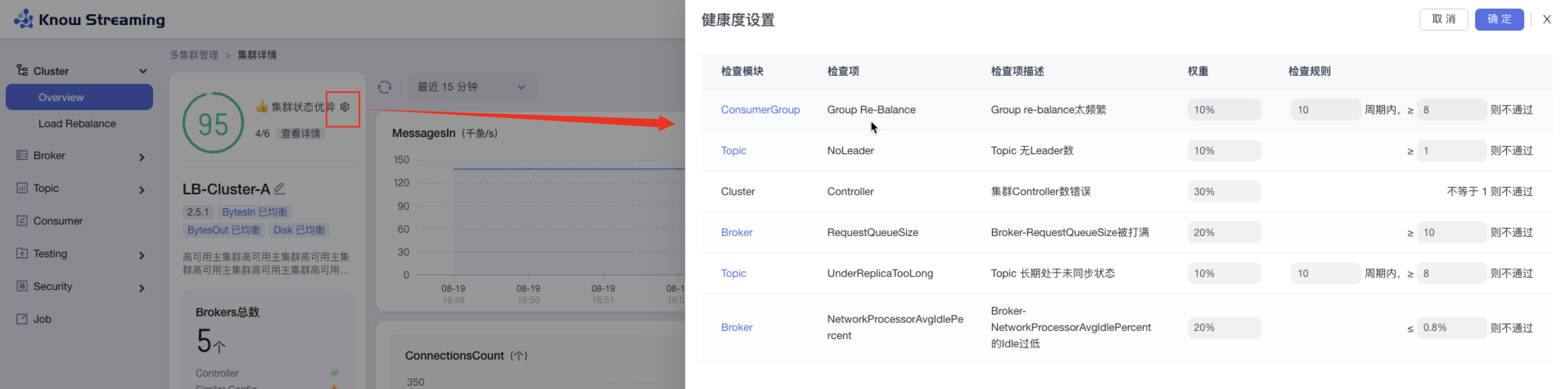

- 集群管理:支持集群一键纳管,健康分析、核心组件观测 等功能;

|

||||

- 观测提升:多维度指标观测大盘、观测指标最佳实践 等功能;

|

||||

- 异常巡检:集群多维度健康巡检、集群多维度健康分 等功能;

|

||||

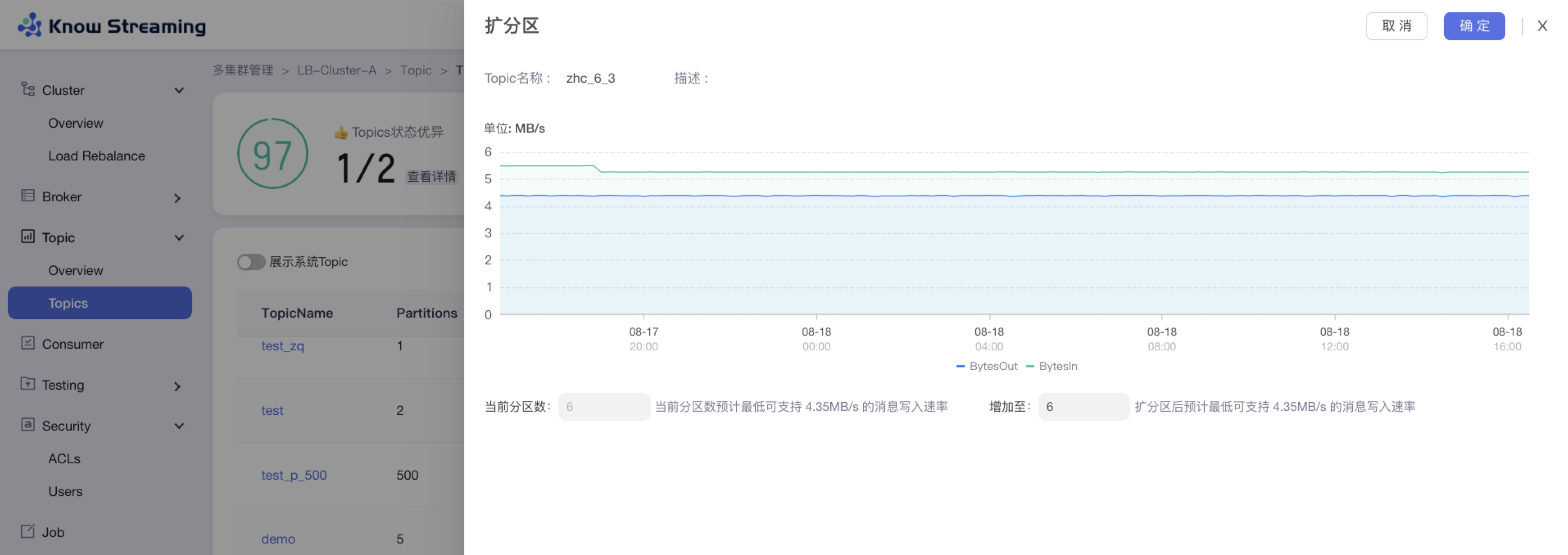

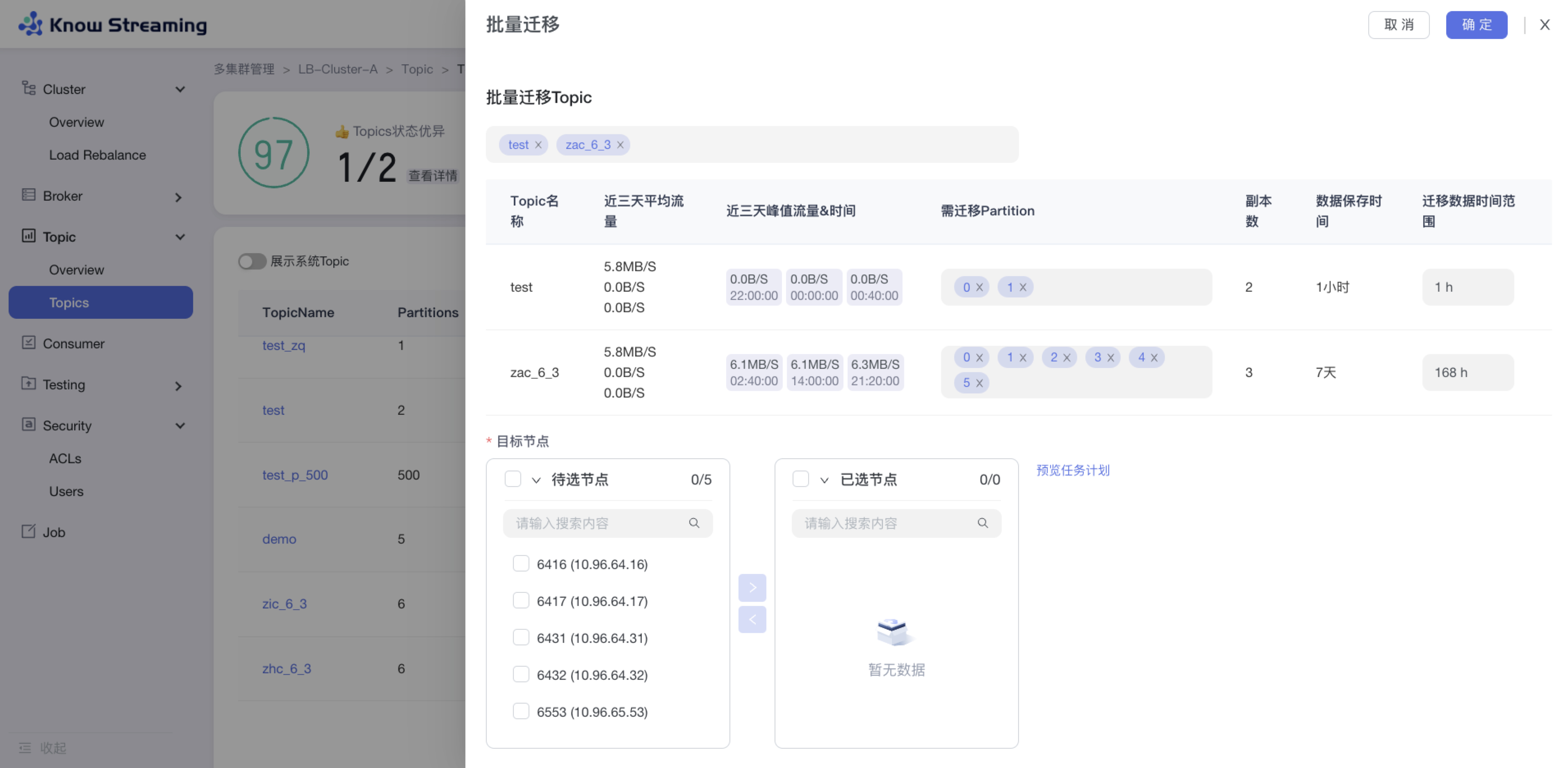

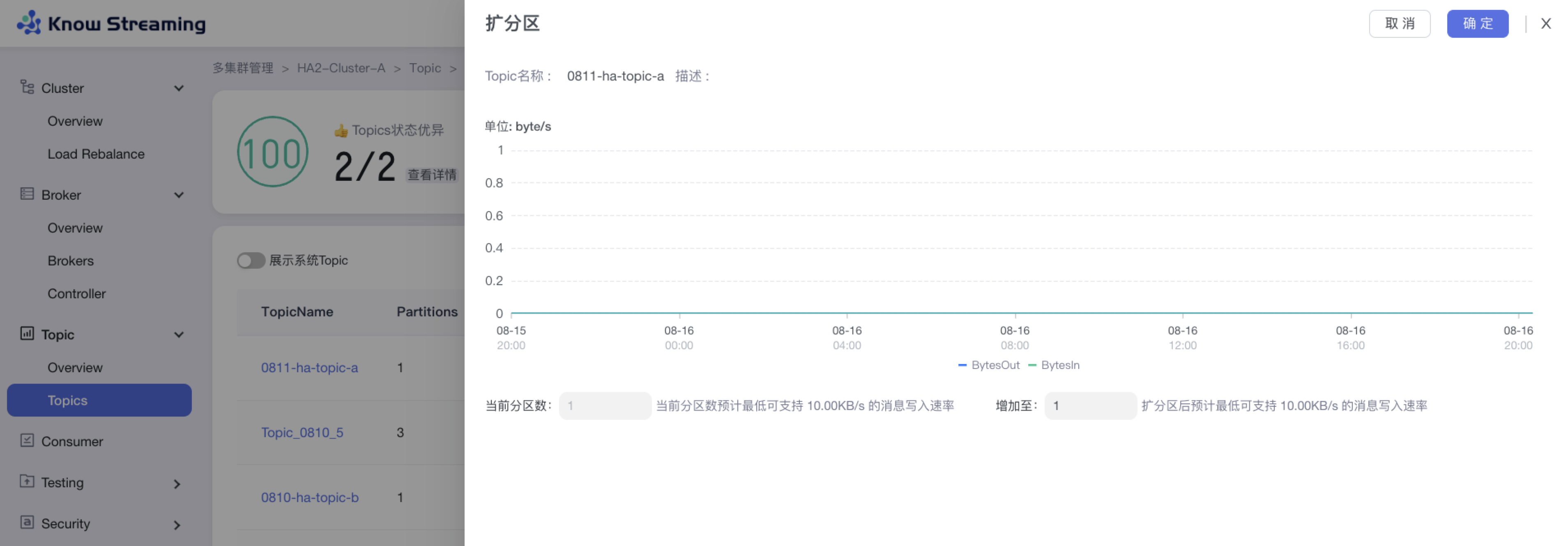

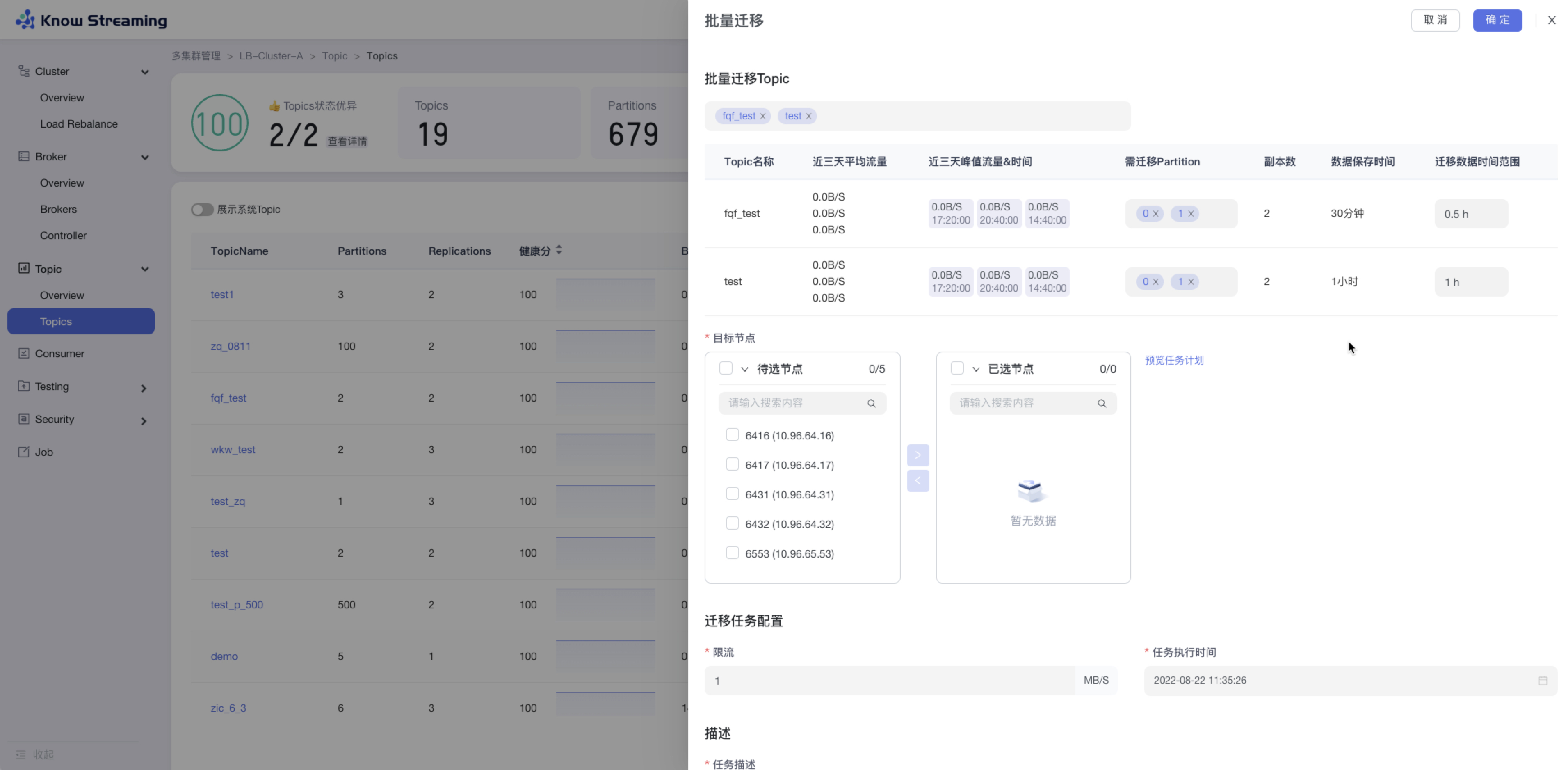

- 能力增强:Topic扩缩副本、Topic副本迁移 等功能;

|

||||

|

||||

|

||||

|

||||

**产品图**

|

||||

|

||||

<p align="center">

|

||||

|

||||

<img src="http://img-ys011.didistatic.com/static/dc2img/do1_sPmS4SNLX9m1zlpmHaLJ" width = "768" height = "473" div align=center />

|

||||

|

||||

</p>

|

||||

|

||||

|

||||

|

||||

|

||||

## 文档资源

|

||||

|

||||

**`开发相关手册`**

|

||||

|

||||

- [打包编译手册](docs/install_guide/源码编译打包手册.md)

|

||||

- [单机部署手册](docs/install_guide/单机部署手册.md)

|

||||

- [版本升级手册](docs/install_guide/版本升级手册.md)

|

||||

- [本地源码启动手册](docs/dev_guide/本地源码启动手册.md)

|

||||

|

||||

**`产品相关手册`**

|

||||

|

||||

- [产品使用指南](docs/user_guide/用户使用手册.md)

|

||||

- [2.x与3.x新旧对比手册](docs/user_guide/新旧对比手册.md)

|

||||

- [FAQ](docs/user_guide/faq.md)

|

||||

|

||||

|

||||

**点击 [这里](https://doc.knowstreaming.com/product),也可以从官网获取到更多文档**

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

## 成为社区贡献者

|

||||

|

||||

点击 [这里](CONTRIBUTING.md),了解如何成为 Know Streaming 的贡献者

|

||||

|

||||

|

||||

|

||||

## 加入技术交流群

|

||||

|

||||

**`1、知识星球`**

|

||||

|

||||

<p align="left">

|

||||

<img src="https://user-images.githubusercontent.com/71620349/185357284-fdff1dad-c5e9-4ddf-9a82-0be1c970980d.JPG" height = "180" div align=left />

|

||||

</p>

|

||||

|

||||

<br/>

|

||||

<br/>

|

||||

<br/>

|

||||

<br/>

|

||||

<br/>

|

||||

<br/>

|

||||

<br/>

|

||||

<br/>

|

||||

|

||||

👍 我们正在组建国内最大,最权威的 **[Kafka中文社区](https://z.didi.cn/5gSF9)**

|

||||

|

||||

在这里你可以结交各大互联网的 Kafka大佬 以及 4000+ Kafka爱好者,一起实现知识共享,实时掌控最新行业资讯,期待 👏 您的加入中~ https://z.didi.cn/5gSF9

|

||||

|

||||

有问必答~! 互动有礼~!

|

||||

|

||||

PS: 提问请尽量把问题一次性描述清楚,并告知环境信息情况~!如使用版本、操作步骤、报错/警告信息等,方便大V们快速解答~

|

||||

|

||||

|

||||

|

||||

**`2、微信群`**

|

||||

|

||||

微信加群:添加`mike_zhangliang`、`PenceXie`的微信号备注KnowStreaming加群。

|

||||

|

||||

## Star History

|

||||

|

||||

[](https://star-history.com/#didi/KnowStreaming&Date)

|

||||

@@ -1,335 +0,0 @@

|

||||

|

||||

|

||||

## v3.0.0-beta.2

|

||||

|

||||

**文档**

|

||||

- 新增登录系统对接文档

|

||||

- 优化前端工程打包构建部分文档说明

|

||||

- FAQ补充KnowStreaming连接特定JMX IP的说明

|

||||

|

||||

|

||||

**Bug修复**

|

||||

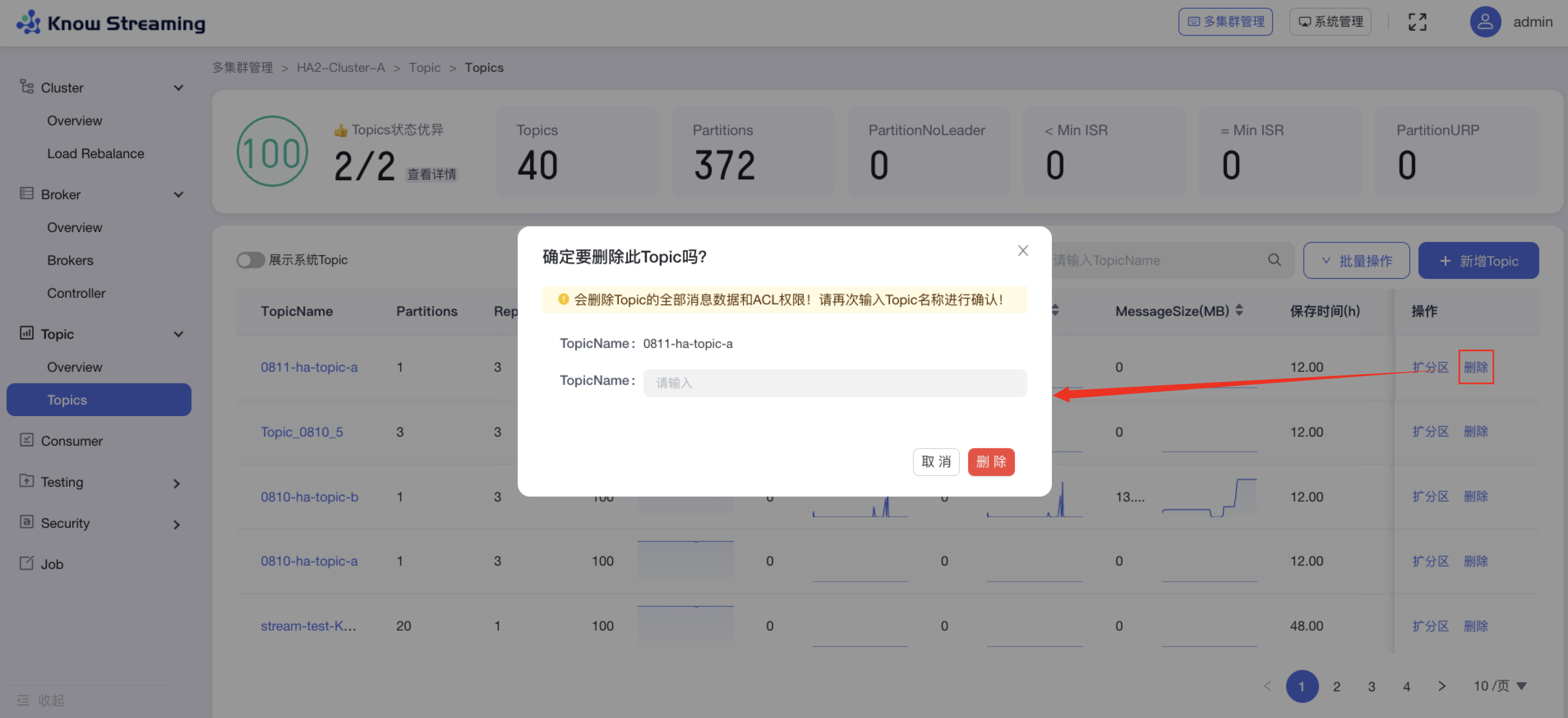

- 修复logi_security_oplog表字段过短,导致删除Topic等操作无法记录的问题

|

||||

- 修复ES查询时,抛java.lang.NumberFormatException: For input string: "{"value":0,"relation":"eq"}" 问题

|

||||

- 修复LogStartOffset和LogEndOffset指标单位错误问题

|

||||

- 修复进行副本变更时,旧副本数为NULL的问题

|

||||

- 修复集群Group列表,在第二页搜索时,搜索时返回的分页信息错误问题

|

||||

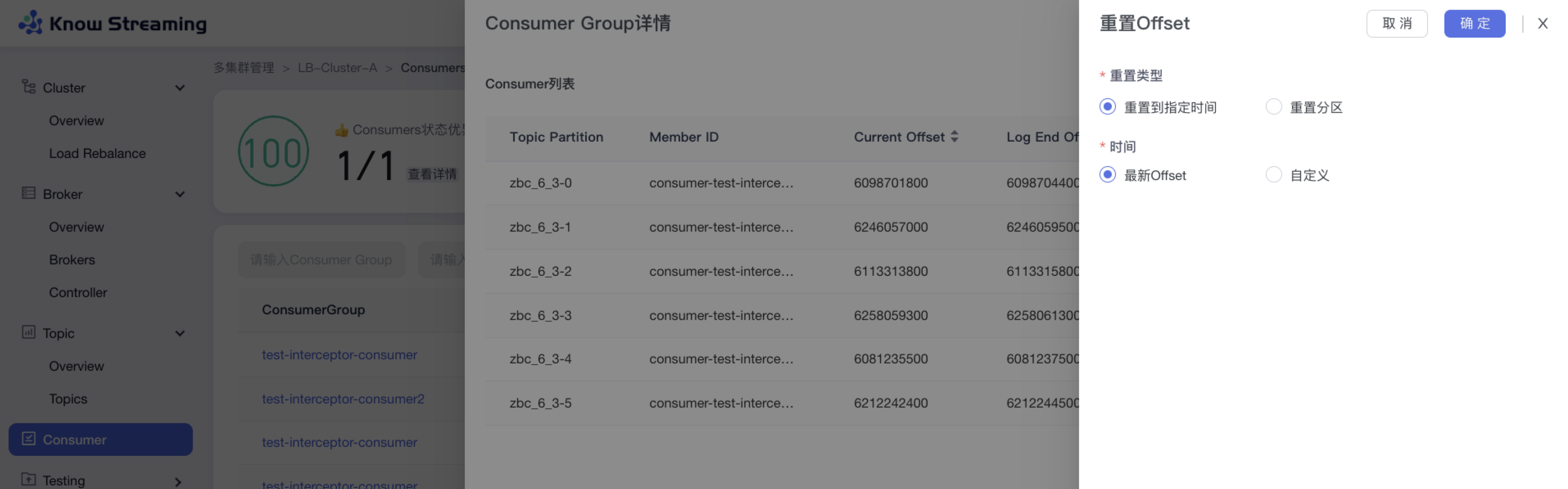

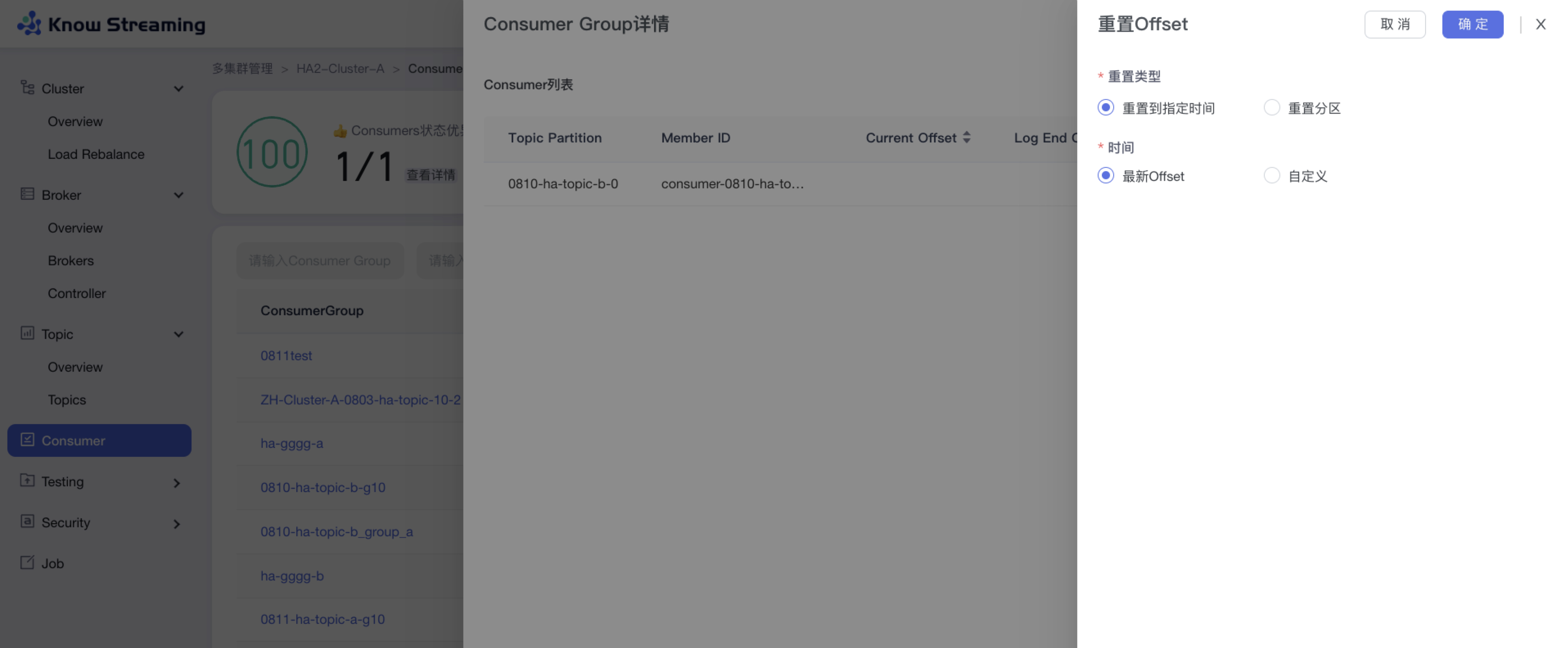

- 修复重置Offset时,返回的错误信息提示不一致的问题

|

||||

- 修复集群查看,系统查看,LoadRebalance等页面权限点缺失问题

|

||||

- 修复查询不存在的Topic时,错误信息提示不明显的问题

|

||||

- 修复Windows用户打包前端工程报错的问题

|

||||

- package-lock.json锁定前端依赖版本号,修复因依赖自动升级导致打包失败等问题

|

||||

- 系统管理子应用,补充后端返回的Code码拦截,解决后端接口返回报错不展示的问题

|

||||

- 修复用户登出后,依旧可以访问系统的问题

|

||||

- 修复巡检任务配置时,数值显示错误的问题

|

||||

- 修复Broker/Topic Overview 图表和图表详情问题

|

||||

- 修复Job扩缩副本任务明细数据错误的问题

|

||||

- 修复重置Offset时,分区ID,Offset数值无限制问题

|

||||

- 修复扩缩/迁移副本时,无法选中Kafka系统Topic的问题

|

||||

- 修复Topic的Config页面,编辑表单时不能正确回显当前值的问题

|

||||

- 修复Broker Card返回数据后依旧展示加载态的问题

|

||||

|

||||

|

||||

|

||||
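The `NumberFormatException` entry in the bug-fix list above quotes the string `{"value":0,"relation":"eq"}`, which matches the object form of `hits.total` that Elasticsearch 7.x and later return in search responses. Assuming that is the cause, a reader that tolerates both shapes might look like the sketch below. `total_hits` is a hypothetical helper written for illustration, not code from KnowStreaming.

```python
from collections.abc import Mapping
from typing import Any


def total_hits(response: Mapping[str, Any]) -> int:
    """Return the total hit count from a search response body.

    Accepts both shapes of hits.total: a bare number (older responses)
    and an object such as {"value": 0, "relation": "eq"} (7.x and later).
    """
    total = response["hits"]["total"]
    if isinstance(total, Mapping):
        # Object form: the count is stored under "value".
        return int(total["value"])
    # Numeric form: the field is the count itself.
    return int(total)
```

Parsing the field as a number without checking its type is what would produce an error of the kind quoted in the changelog entry.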

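**Experience improvements**
- Changed the default user and password to admin/admin
- Shortened the time taken to load cluster information after adding a cluster
- Added Controller role information to the cluster Broker list
- Added a preferred replica election step after a replica change task finishes
- Split Task module tasks into three classes (Metrics, Common, and Metadata), each with its own thread pool, reducing the impact on the Job module's thread pool and the interference between task classes
- Removed redundant unused files from the code
- Automatically create the ES index templates and the indices for the last 7 days, reducing the setup steps for users
- Improved the front-end packaging process
- Improved the login page copy, the left sidebar content, the single-cluster detail styling, the Topic list trend charts, and more
- Pre-cache data on first entry to Broker/Topic chart details to improve the experience
- Improved the Partition tab in Topic details
- Added editing to the multi-cluster list page
- Migration time for replica changes now supports minute-level granularity
- Upgraded logi-security to 2.10.13
- Upgraded logi-elasticsearch-client to 1.0.24
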
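The Task module entry above gives each task class (Metrics, Common, Metadata) its own thread pool. A minimal sketch of that isolation, using Python's standard `concurrent.futures` purely for illustration: the pool sizes, the `POOLS` mapping, and the `submit_task` helper are assumptions and are not taken from the KnowStreaming code base.

```python
import threading
from concurrent.futures import Future, ThreadPoolExecutor

# One pool per task class, so a slow metrics collection cannot starve
# metadata or common tasks. The sizes here are hypothetical.
POOLS = {
    "metrics": ThreadPoolExecutor(max_workers=4, thread_name_prefix="task-metrics"),
    "common": ThreadPoolExecutor(max_workers=2, thread_name_prefix="task-common"),
    "metadata": ThreadPoolExecutor(max_workers=2, thread_name_prefix="task-metadata"),
}


def submit_task(kind: str, fn, *args) -> Future:
    """Run fn(*args) on the pool reserved for the given task class."""
    return POOLS[kind].submit(fn, *args)


def current_thread_name() -> str:
    """Report which worker thread is executing the call."""
    return threading.current_thread().name
```

Because each class has a dedicated executor, a backlog in one class only queues work behind tasks of the same class.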

**Capability improvements**
- Support LDAP login authentication


---

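## v3.0.0-beta.1

**Documentation**
- Added documentation for the Task module
- Added an FAQ entry for the `Specified key was too long; max key length is 767 bytes` error
- Added an FAQ entry for the `ESIndexNotFoundException` error


**Bug fixes**
- Fixed the Consumer not stopping retrieval when Stop is clicked
- Fixed errors when creating or editing role permissions
- Fixed the wrong balance card status in multi-cluster management and single-cluster details
- Fixed the version list not being sorted
- Fixed Controller information for Raft clusters being recorded continuously
- Fixed failure to fetch consumer group descriptions on some versions
- Fixed the Topic name missing from the log emitted when fetching a partition Offset fails
- Fixed wrong GitHub image URLs and broken images
- Fixed the Broker's default address not matching the comment
- Fixed pagination not working in the Consumer list
- Fixed the operation_methods column of the operation record table lacking a default value
- Fixed the move_broker_list column in the cluster balance table having no effect
- Fixed logs repeatedly reporting "not supported" when fetching KafkaUser and KafkaACL information
- Fixed curves dropping to the bottom when metrics are missing


**Experience improvements**
- Reduced front-end build time and bundle size, and added a chunk-splitting strategy for dependency bundling
- Improved product styling and copy
- Made the number of ES clients configurable
- Reduced the large number of MySQL key conflict entries in the logs


**Capability improvements**
- Added a periodic task that proactively creates missing ES templates and indices, reducing extra script work
- Added the ability to choose the Broker address used for JMX connections
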
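The periodic task listed above creates missing ES templates and indices. As a rough sketch of the "which indices should exist" step, assume one index per day named `<prefix>_<YYYY-MM-DD>`. That naming scheme and the `recent_index_names` helper are assumptions made for illustration; only the `ks_kafka_broker_metric` prefix appears in this changeset.

```python
from datetime import date, timedelta
from typing import List


def recent_index_names(prefix: str, today: date, days: int = 7) -> List[str]:
    """List the daily index names for the last `days` days, newest first.

    The "<prefix>_<YYYY-MM-DD>" layout is an assumed convention.
    """
    return [
        f"{prefix}_{(today - timedelta(days=offset)).isoformat()}"
        for offset in range(days)
    ]
```

A periodic job could compare this list with the indices that already exist and create only the missing ones.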

---

## v3.0.0-beta.0

|

||||

|

||||

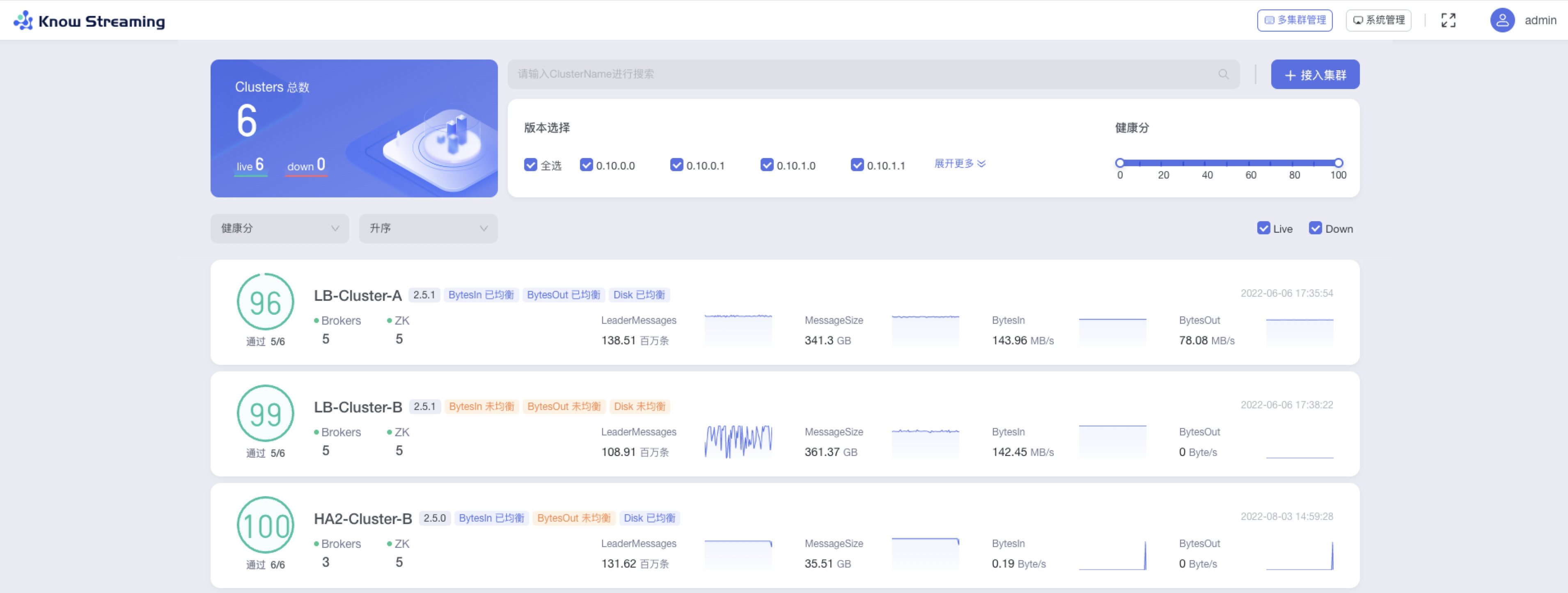

**1、多集群管理**

|

||||

|

||||

- 增加健康监测体系、关键组件&指标 GUI 展示

|

||||

- 增加 2.8.x 以上 Kafka 集群接入,覆盖 0.10.x-3.x

|

||||

- 删除逻辑集群、共享集群、Region 概念

|

||||

|

||||

**2、Cluster 管理**

|

||||

|

||||

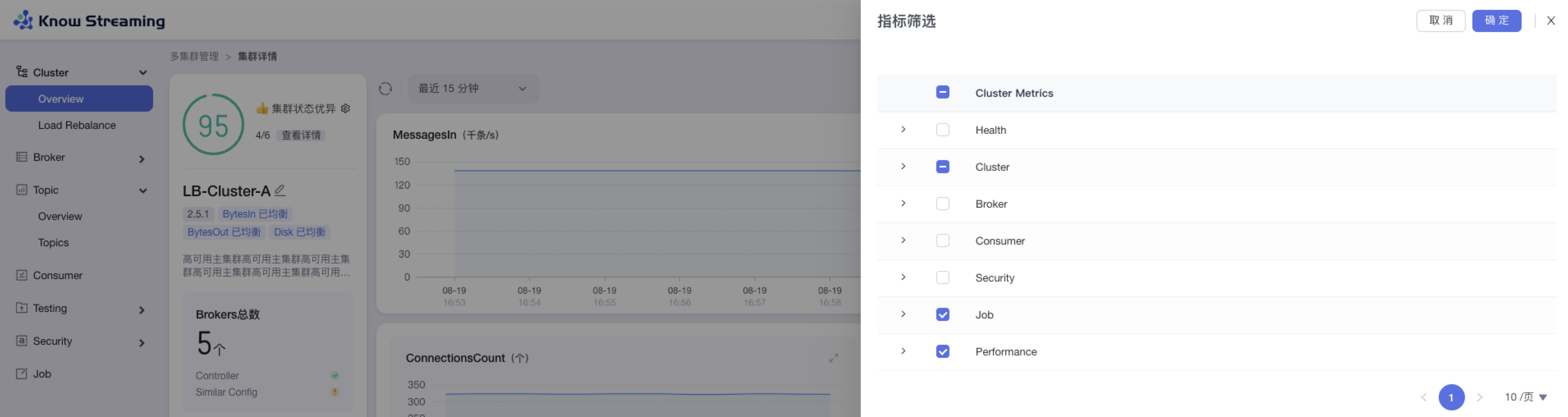

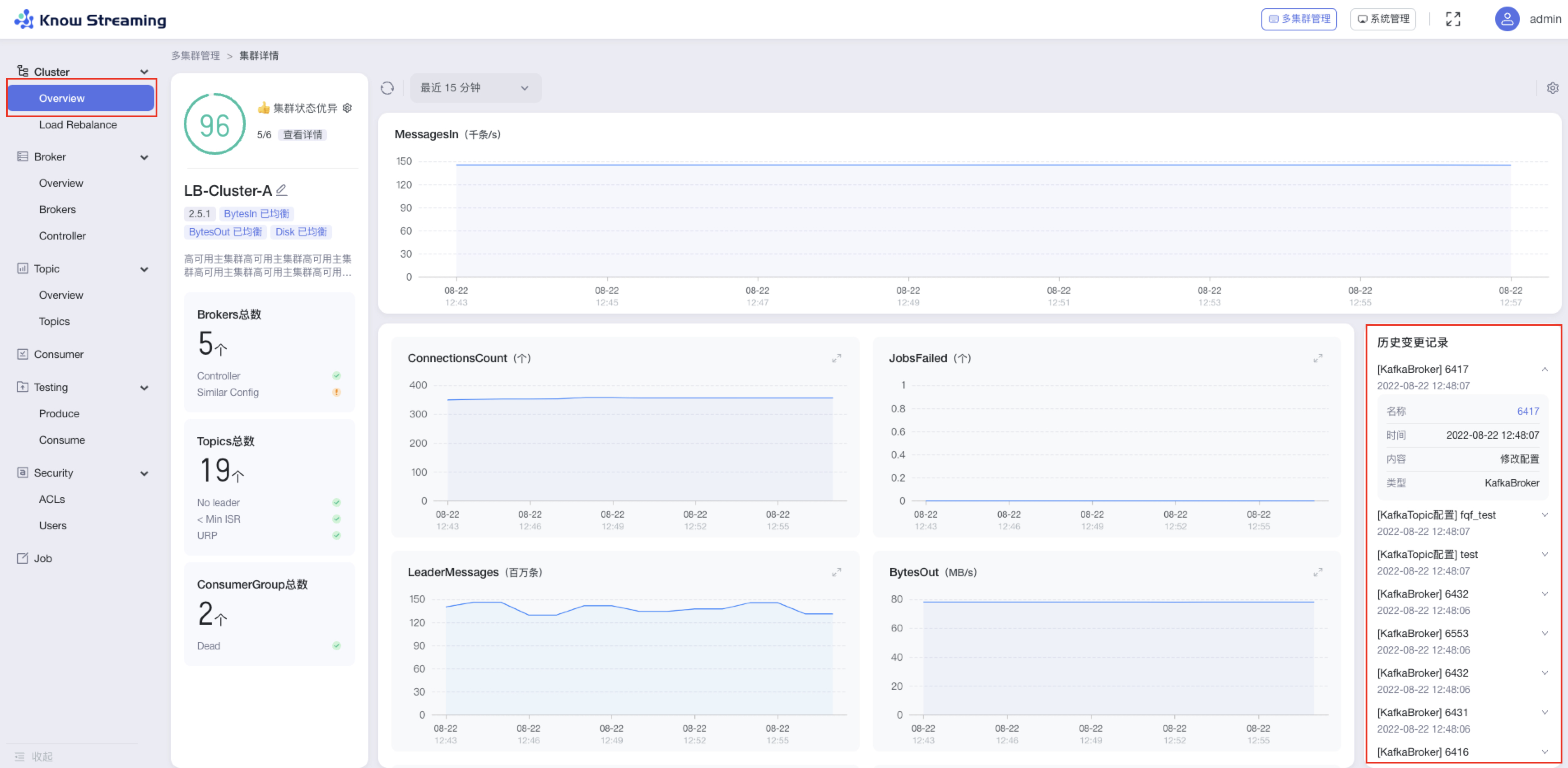

- 增加集群概览信息、集群配置变更记录

|

||||

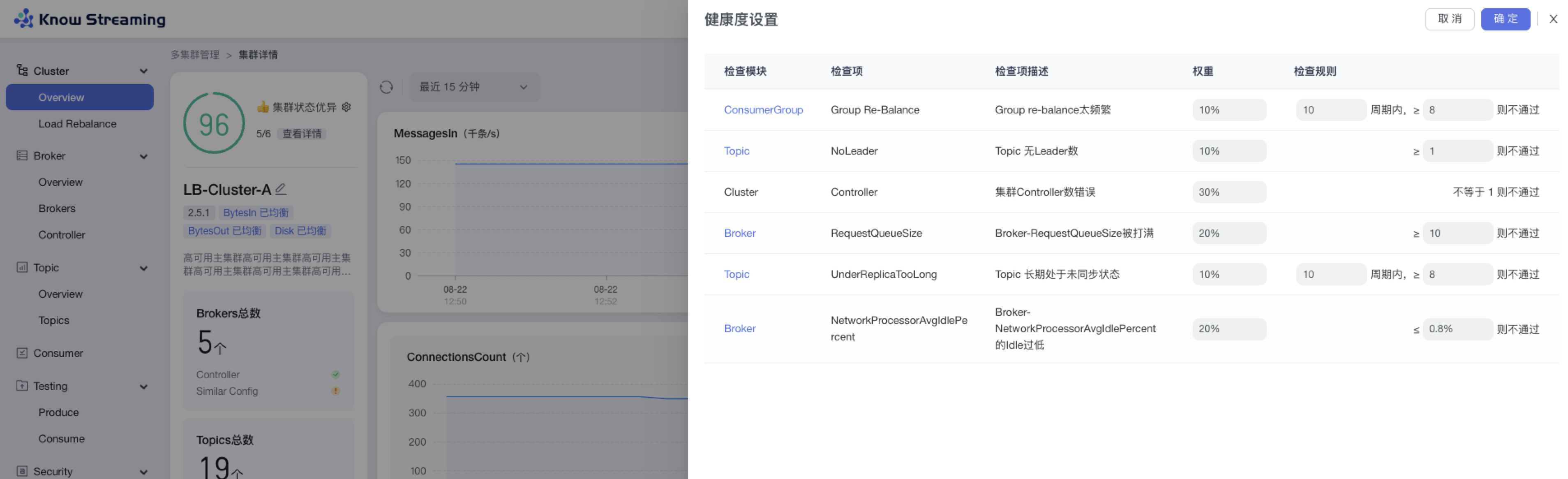

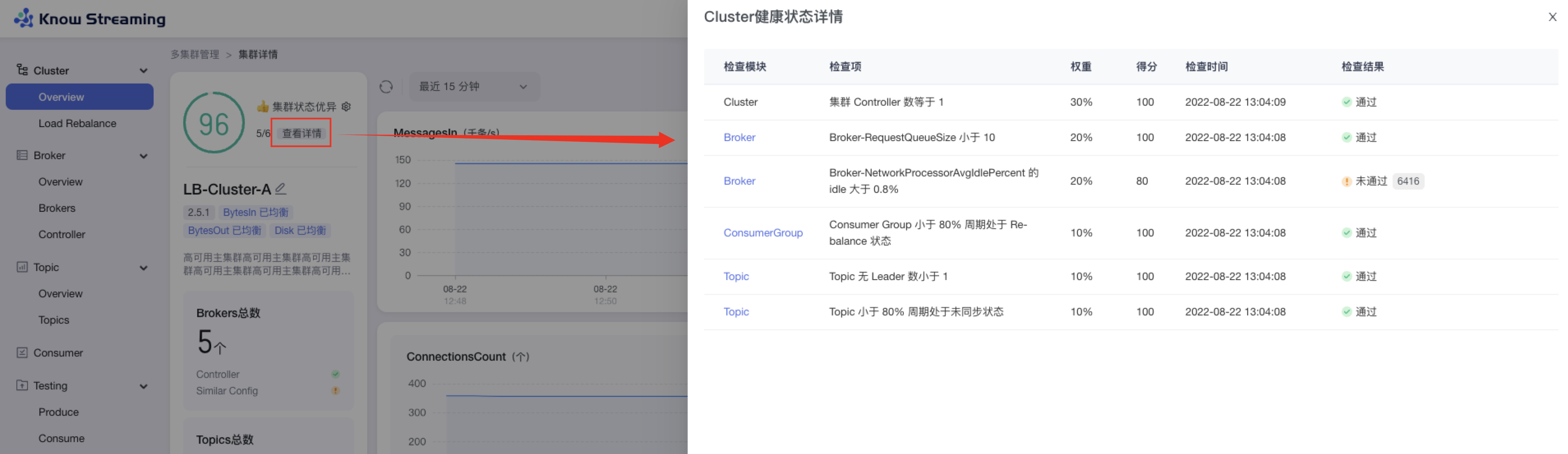

- 增加 Cluster 健康分,健康检查规则支持自定义配置

|

||||

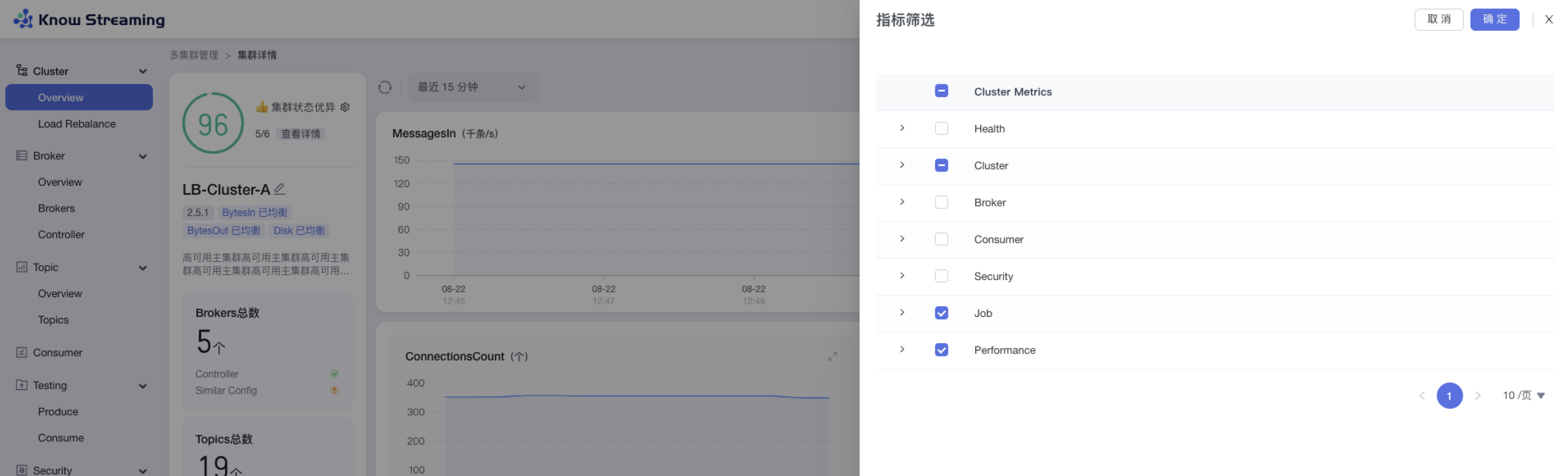

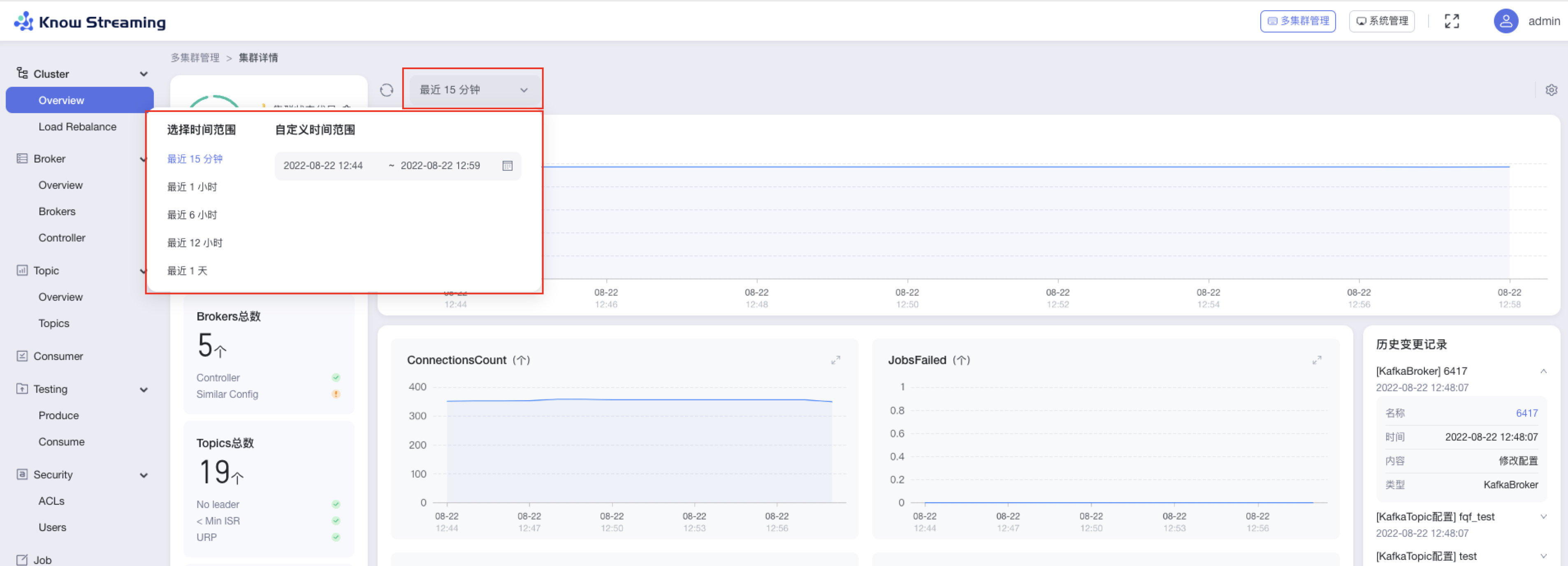

- 增加 Cluster 关键指标统计和 GUI 展示,支持自定义配置

|

||||

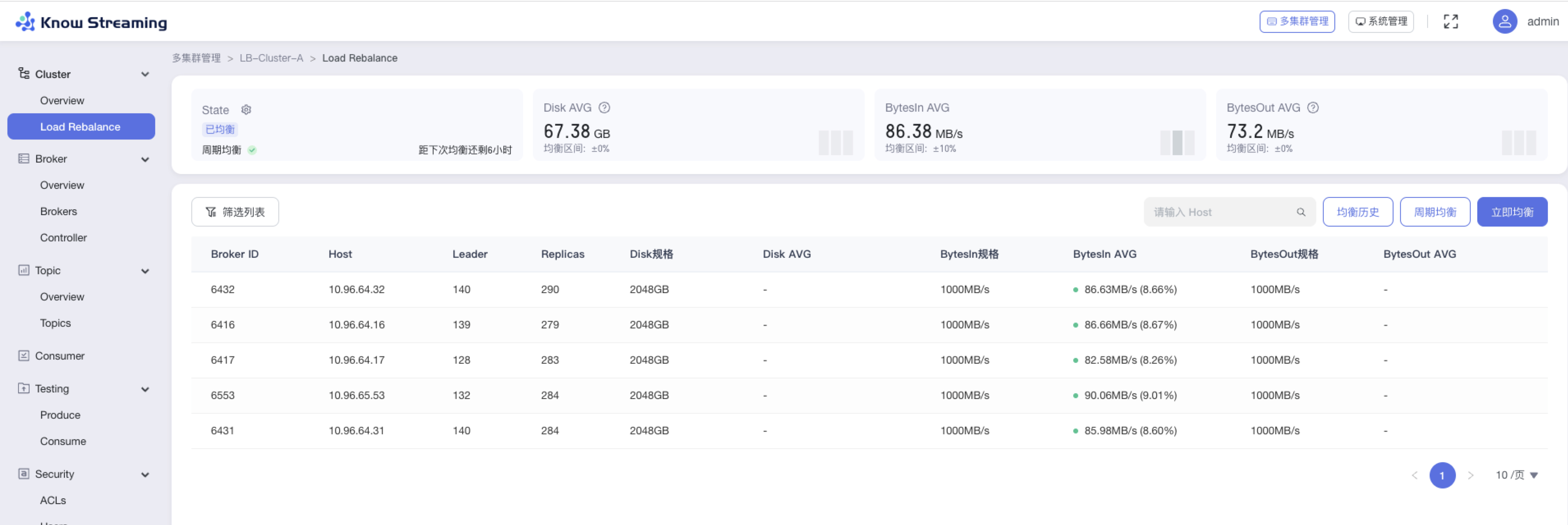

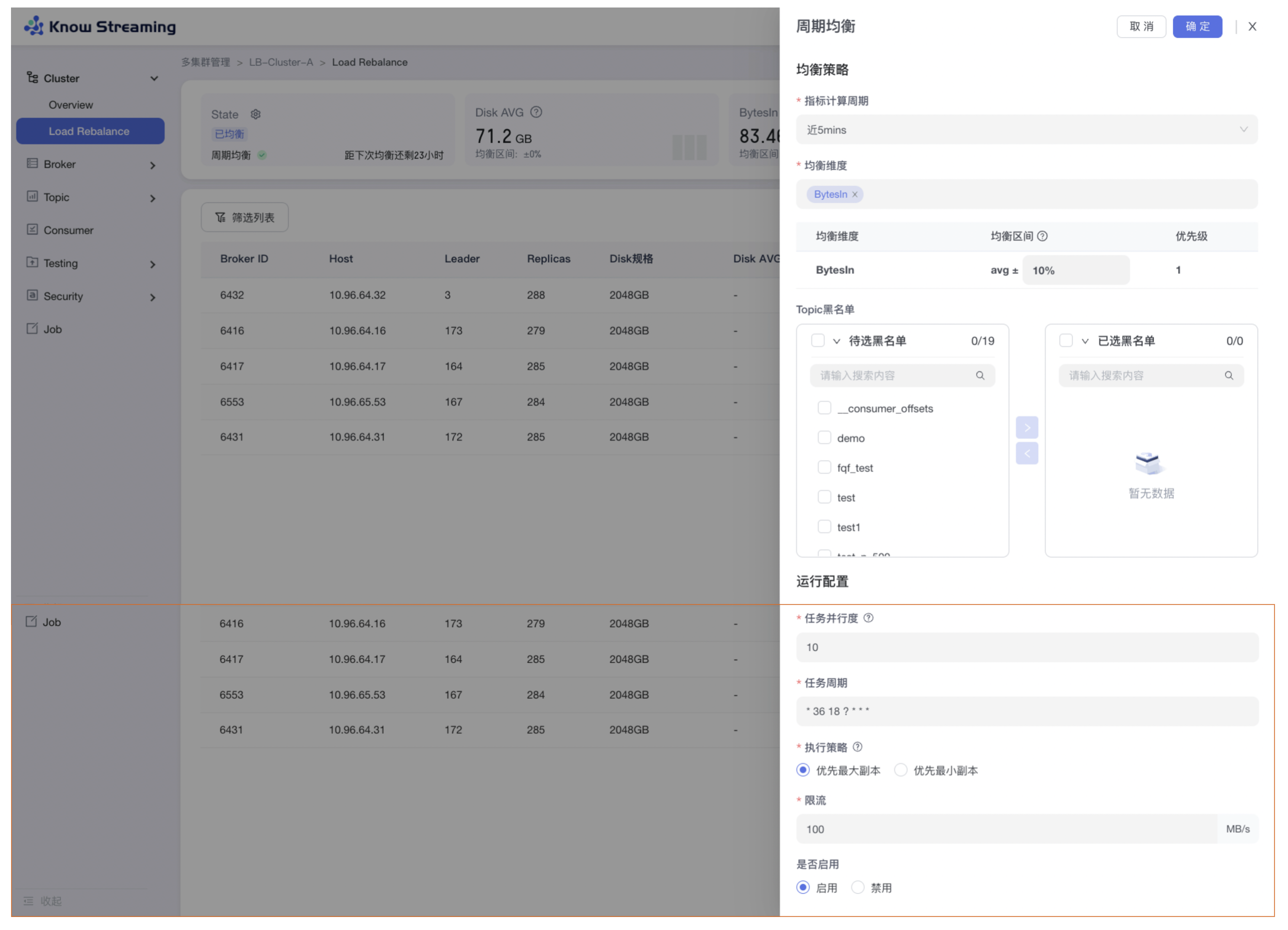

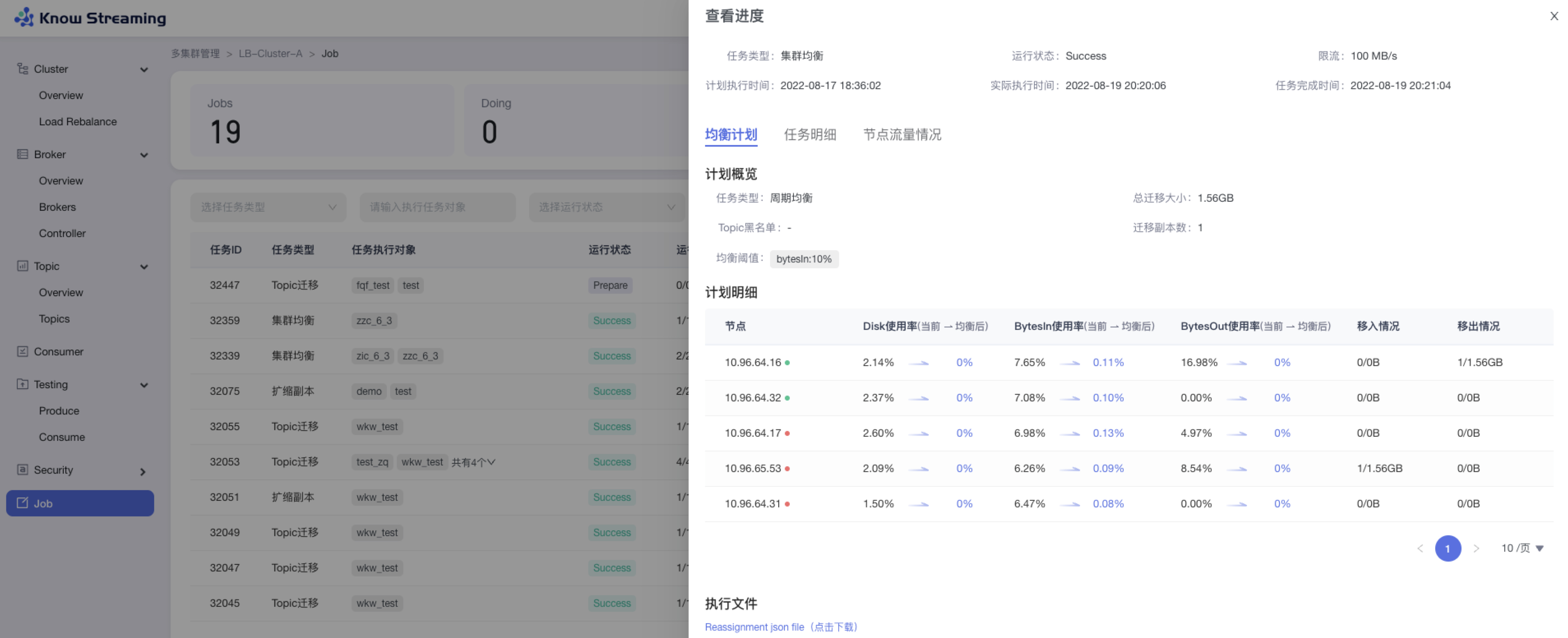

- 增加 Cluster 层 I/O、Disk 的 Load Reblance 功能,支持定时均衡任务(企业版)

|

||||

- 删除限流、鉴权功能

|

||||

- 删除 APPID 概念

|

||||

|

||||

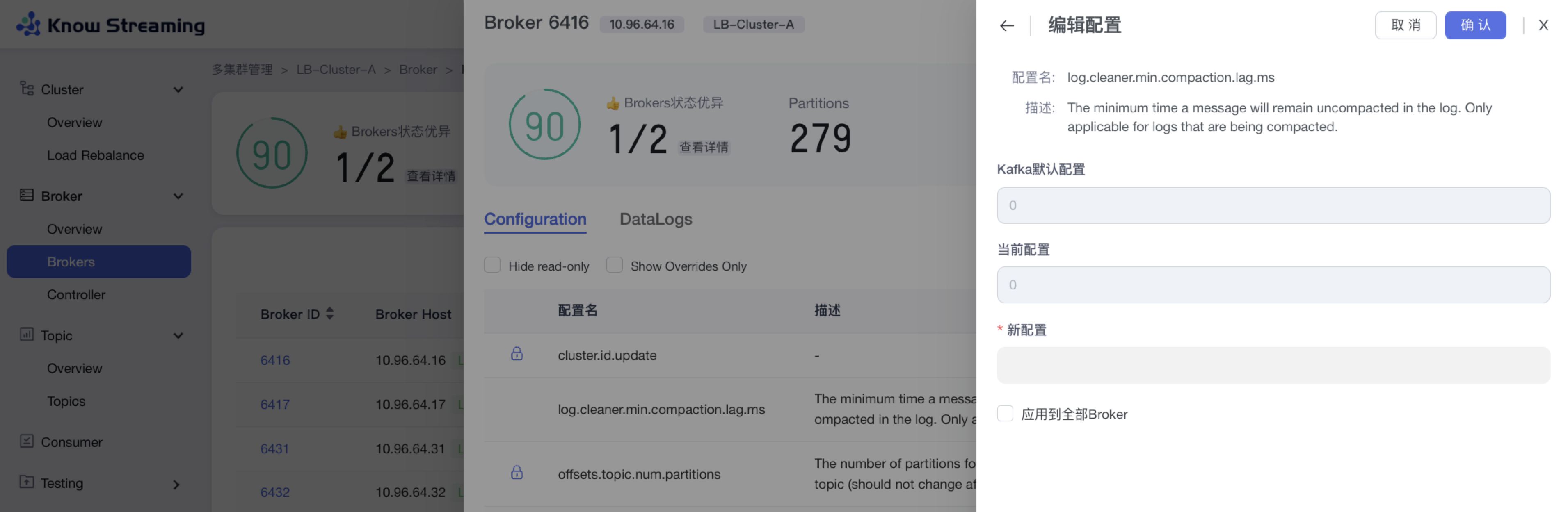

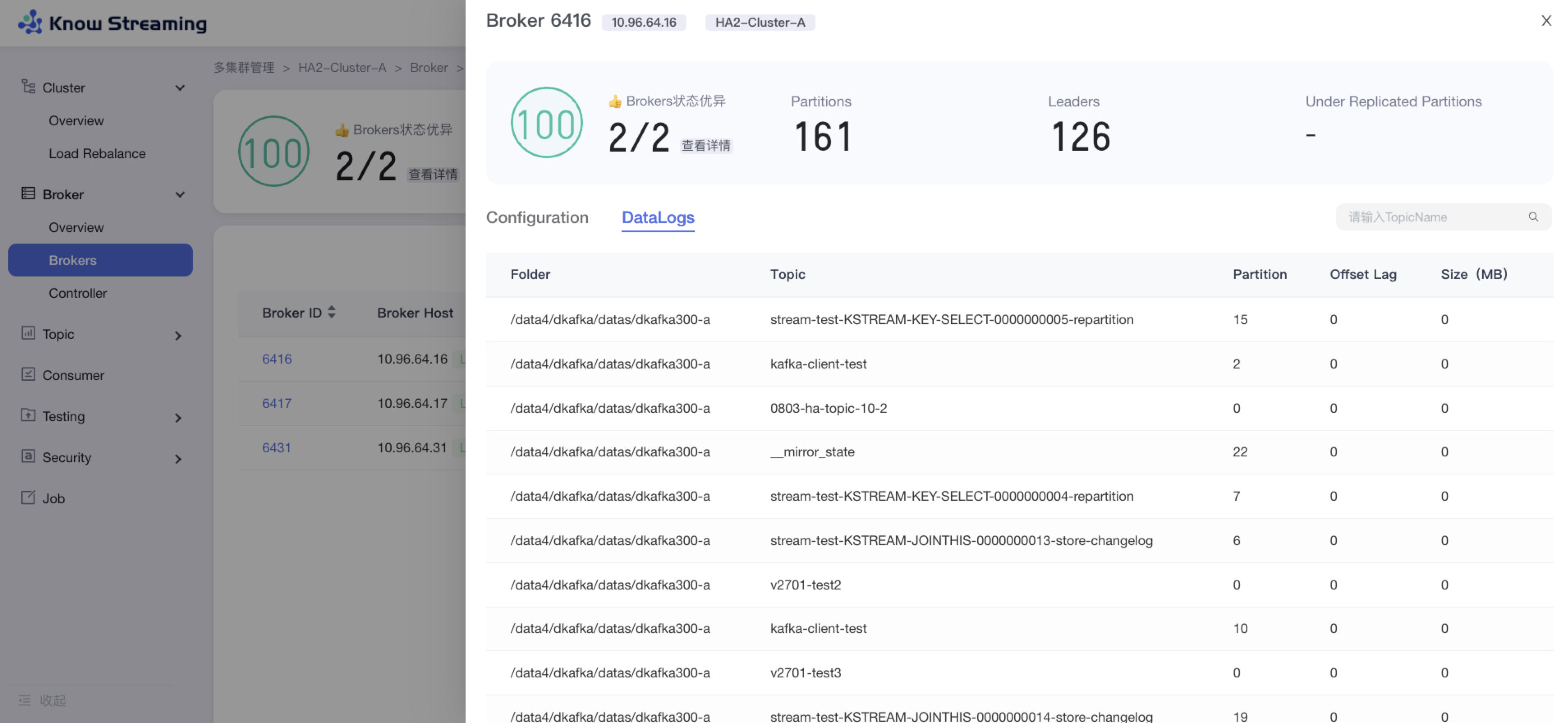

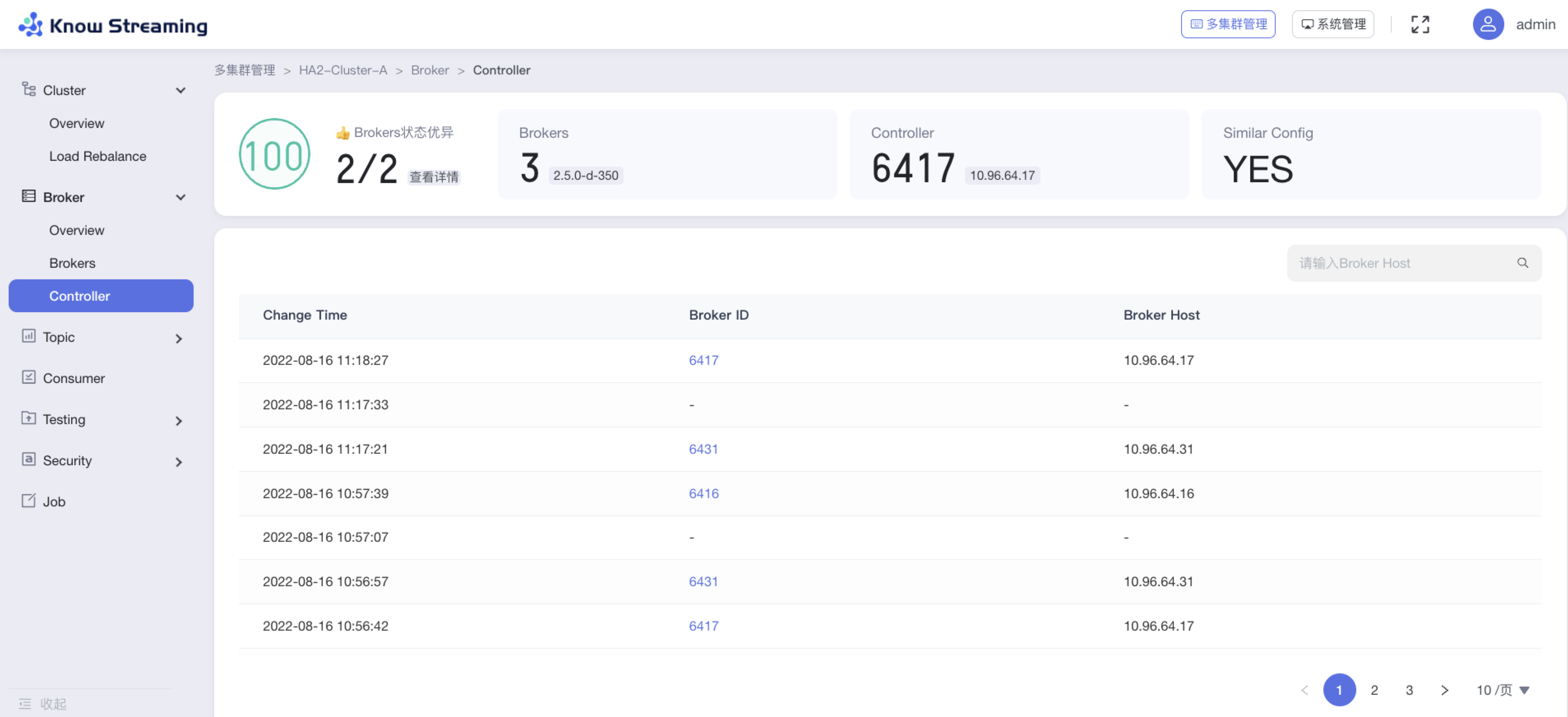

**3、Broker 管理**

|

||||

|

||||

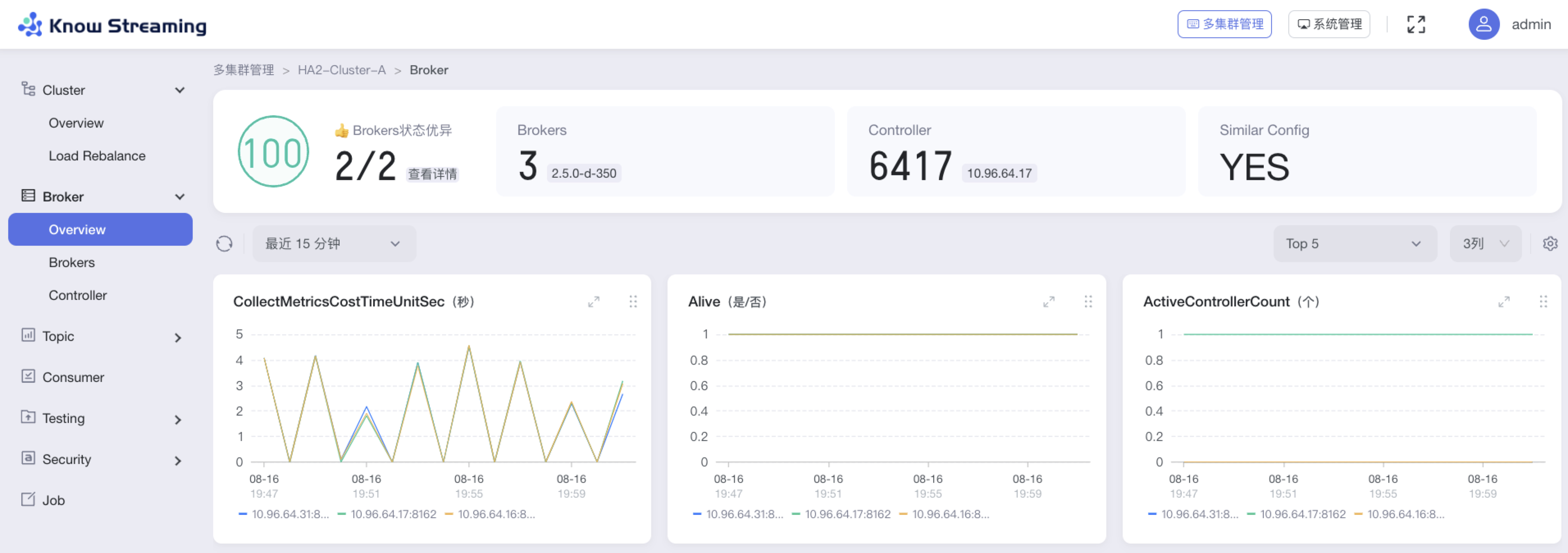

- 增加 Broker 健康分

|

||||

- 增加 Broker 关键指标统计和 GUI 展示,支持自定义配置

|

||||

- 增加 Broker 参数配置功能,需重启生效

|

||||

- 增加 Controller 变更记录

|

||||

- 增加 Broker Datalogs 记录

|

||||

- 删除 Leader Rebalance 功能

|

||||

- 删除 Broker 优先副本选举

|

||||

|

||||

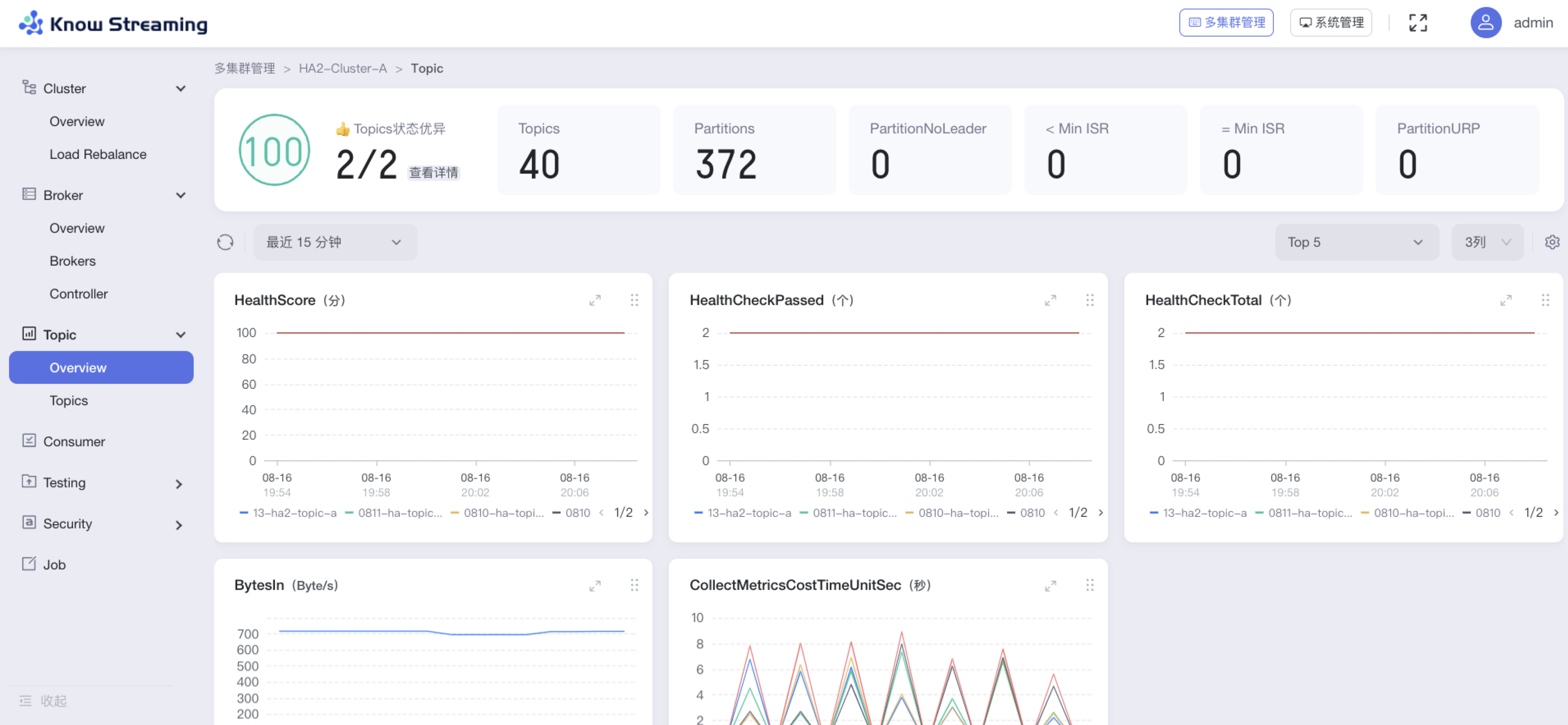

**4、Topic 管理**

|

||||

|

||||

- 增加 Topic 健康分

|

||||

- 增加 Topic 关键指标统计和 GUI 展示,支持自定义配置

|

||||

- 增加 Topic 参数配置功能,可实时生效

|

||||

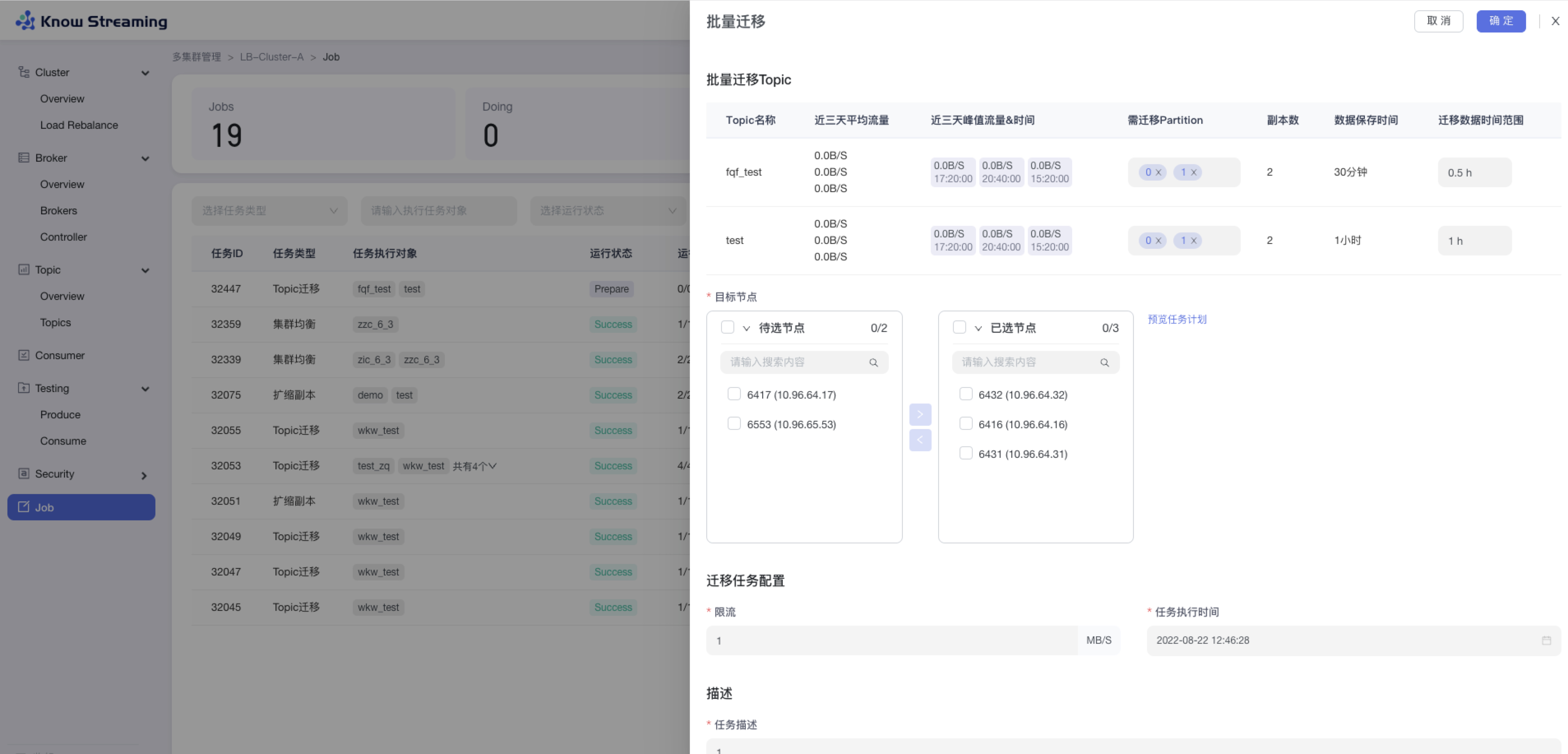

- 增加 Topic 批量迁移、Topic 批量扩缩副本功能

|

||||

- 增加查看系统 Topic 功能

|

||||

- 优化 Partition 分布的 GUI 展示

|

||||

- 优化 Topic Message 数据采样

|

||||

- 删除 Topic 过期概念

|

||||

- 删除 Topic 申请配额功能

|

||||

|

||||

**5、Consumer 管理**

|

||||

|

||||

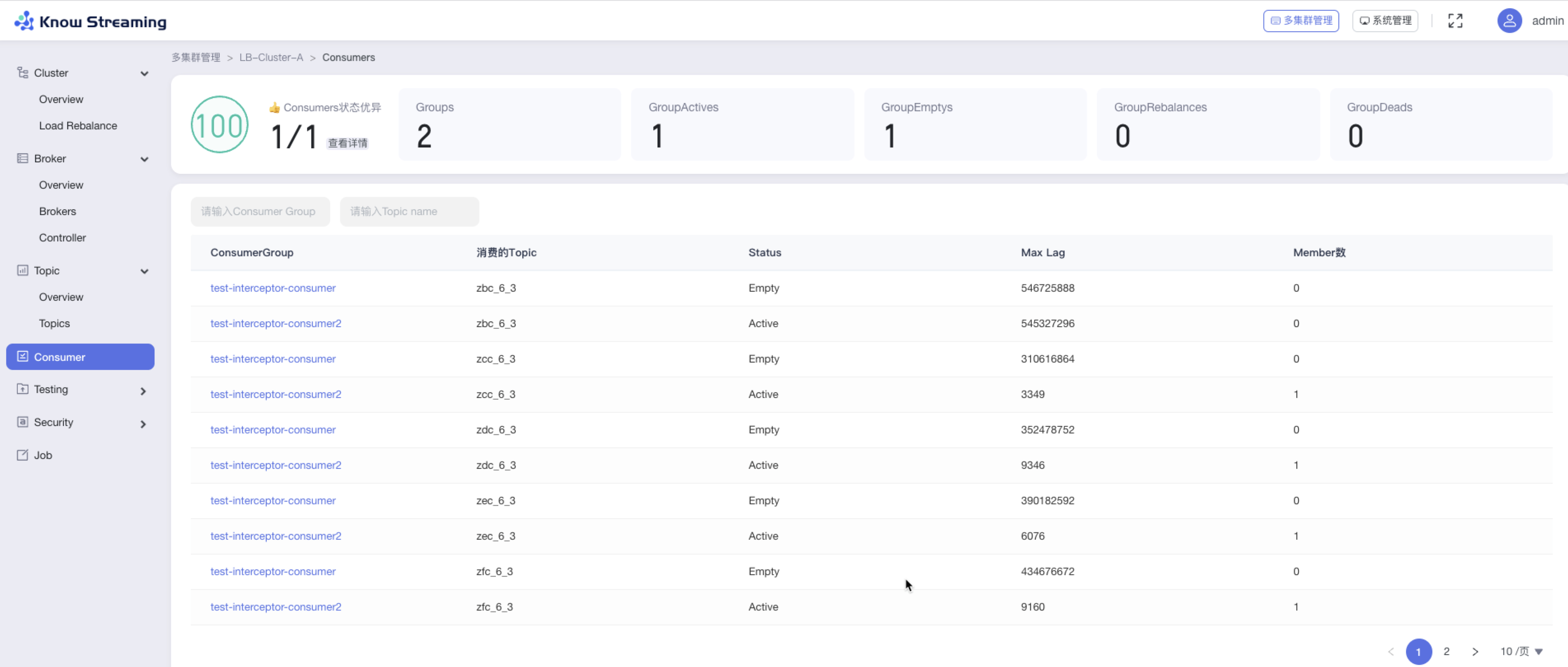

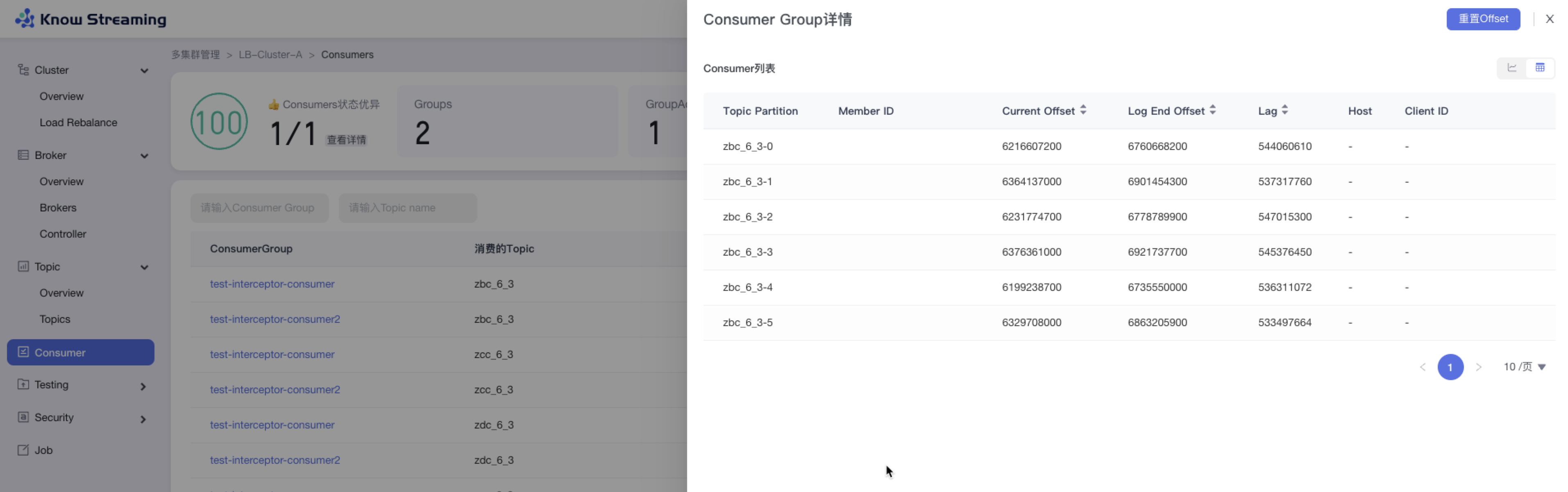

- 优化了 ConsumerGroup 展示形式,增加 Consumer Lag 的 GUI 展示

|

||||

|

||||

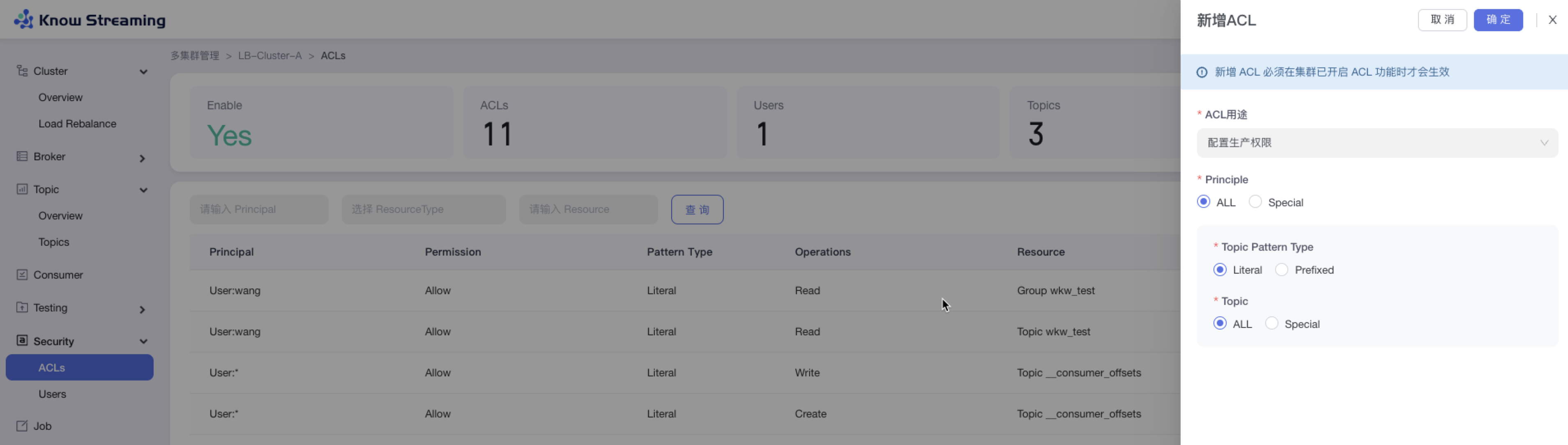

**6、ACL 管理**

|

||||

|

||||

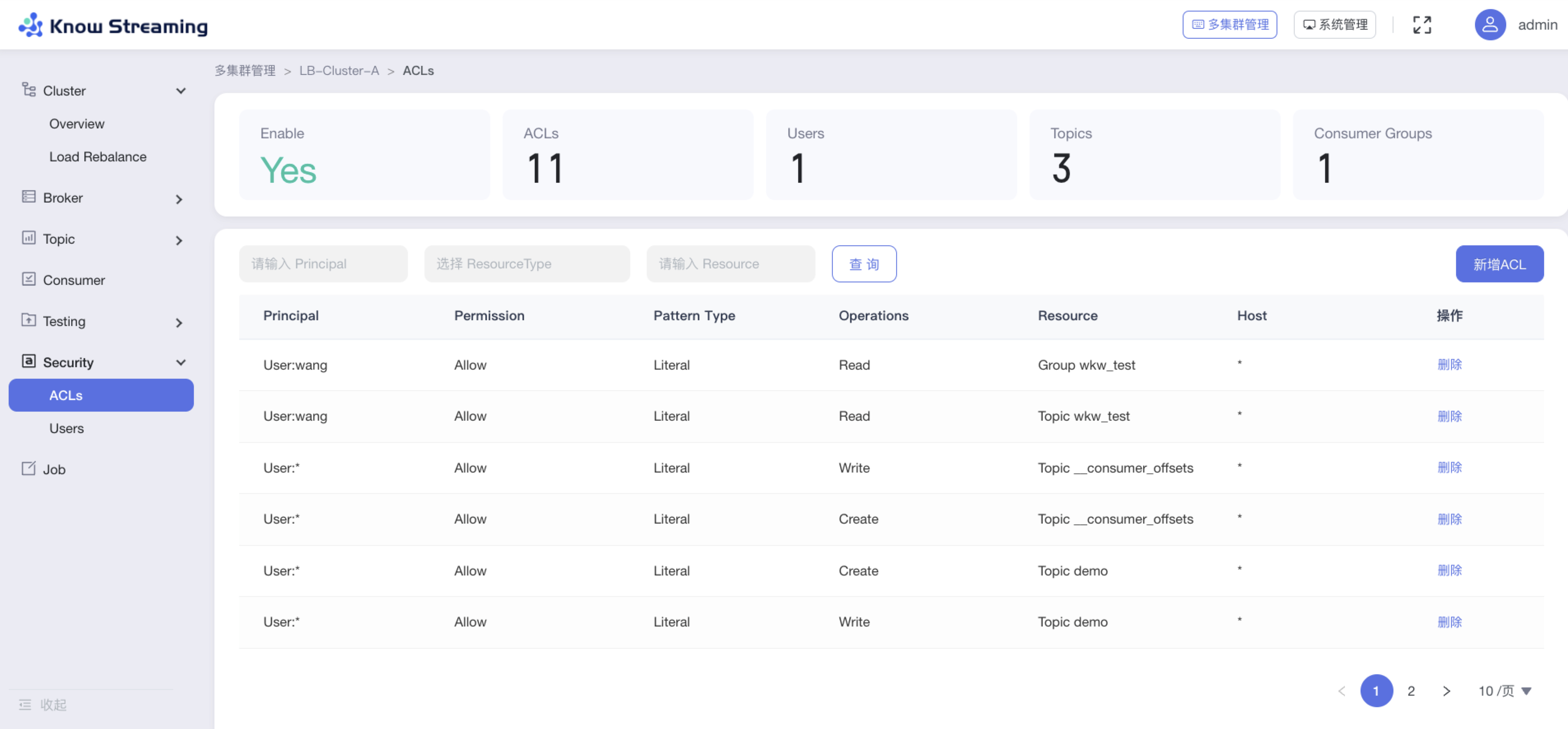

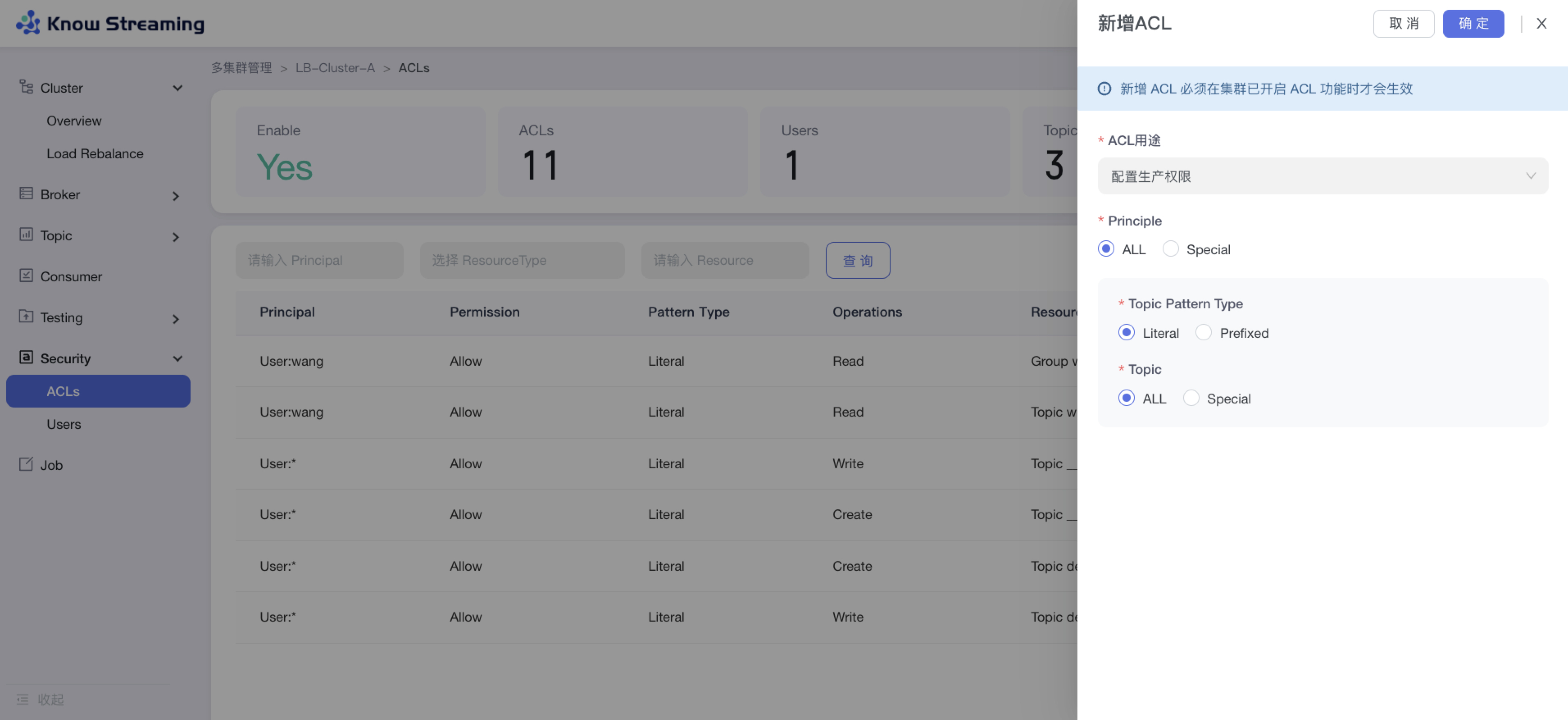

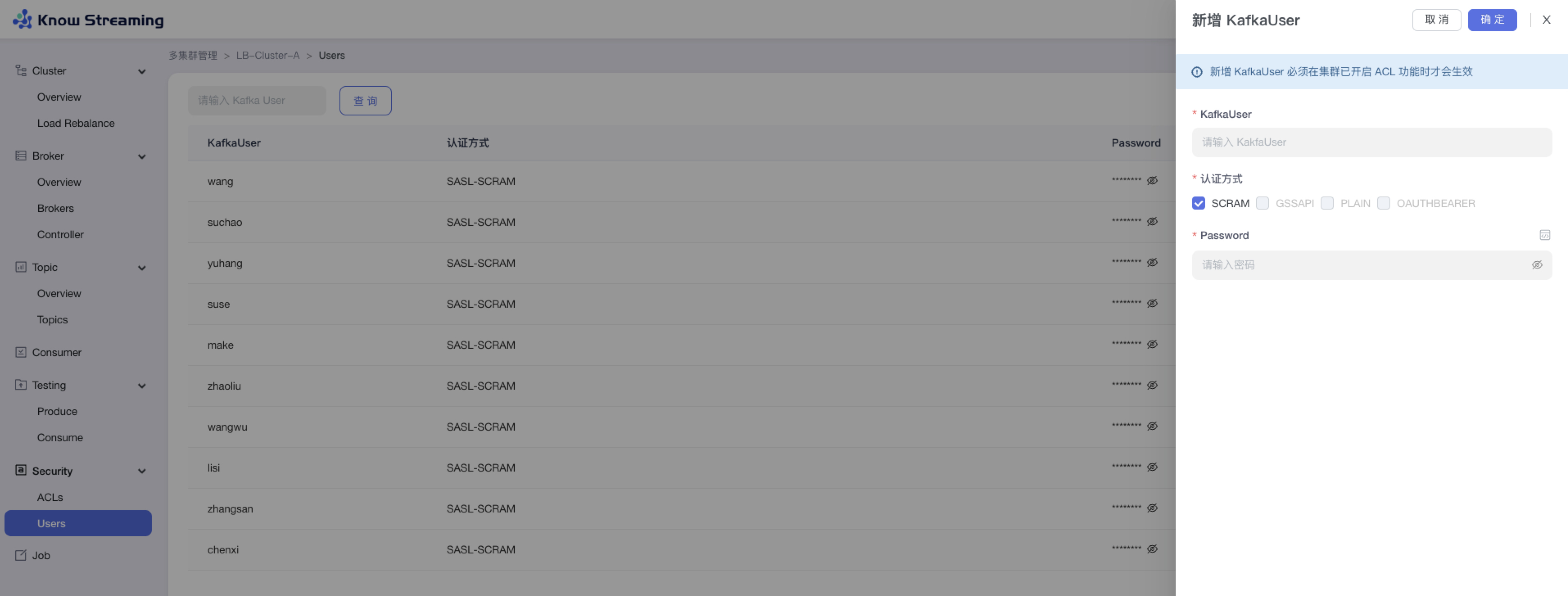

- 增加原生 ACL GUI 配置功能,可配置生产、消费、自定义多种组合权限

|

||||

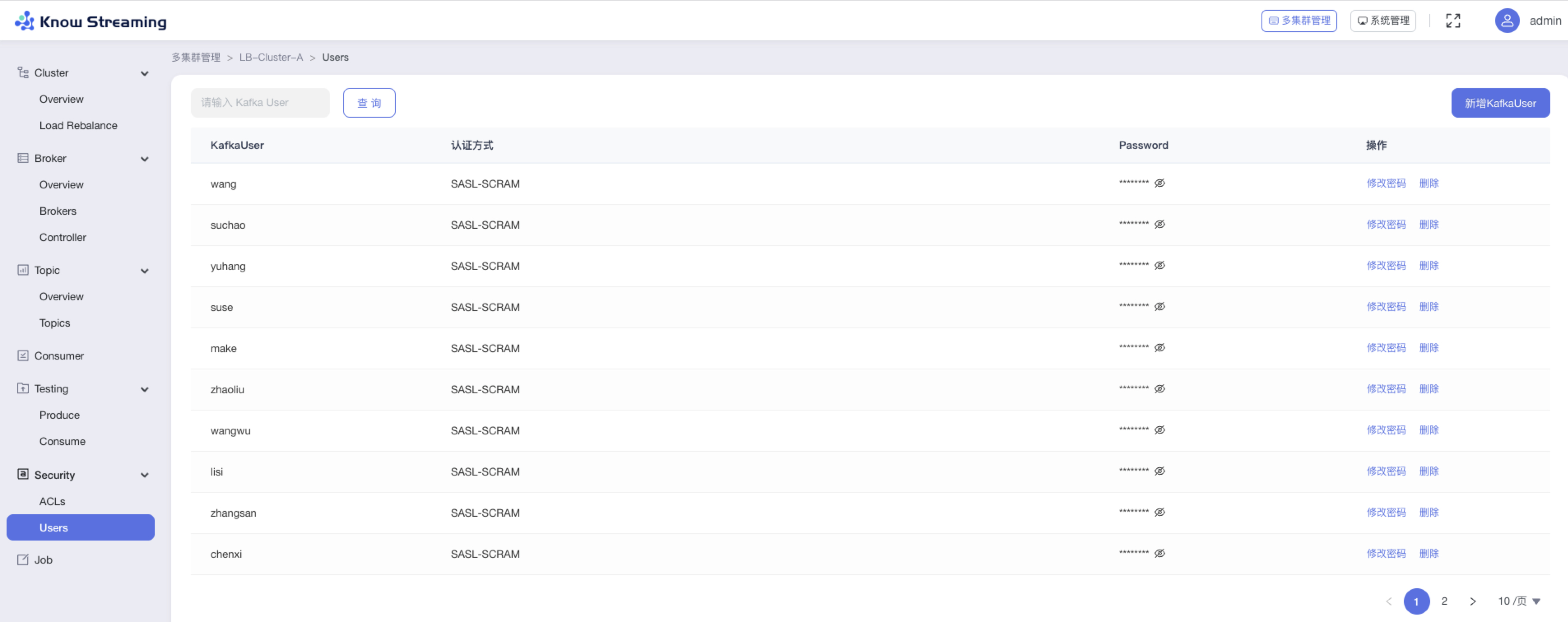

- 增加 KafkaUser 功能,可自定义新增 KafkaUser

|

||||

|

||||

**7、消息测试(企业版)**

|

||||

|

||||

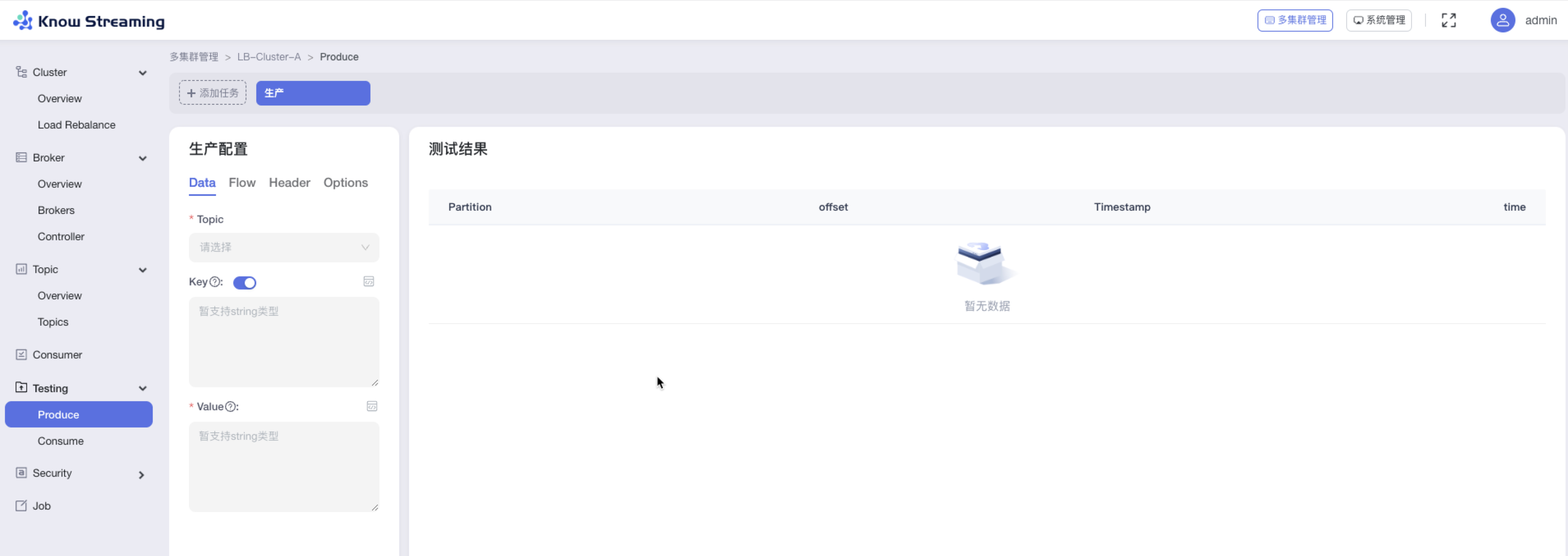

- 增加生产者消息模拟器,支持 Data、Flow、Header、Options 自定义配置(企业版)

|

||||

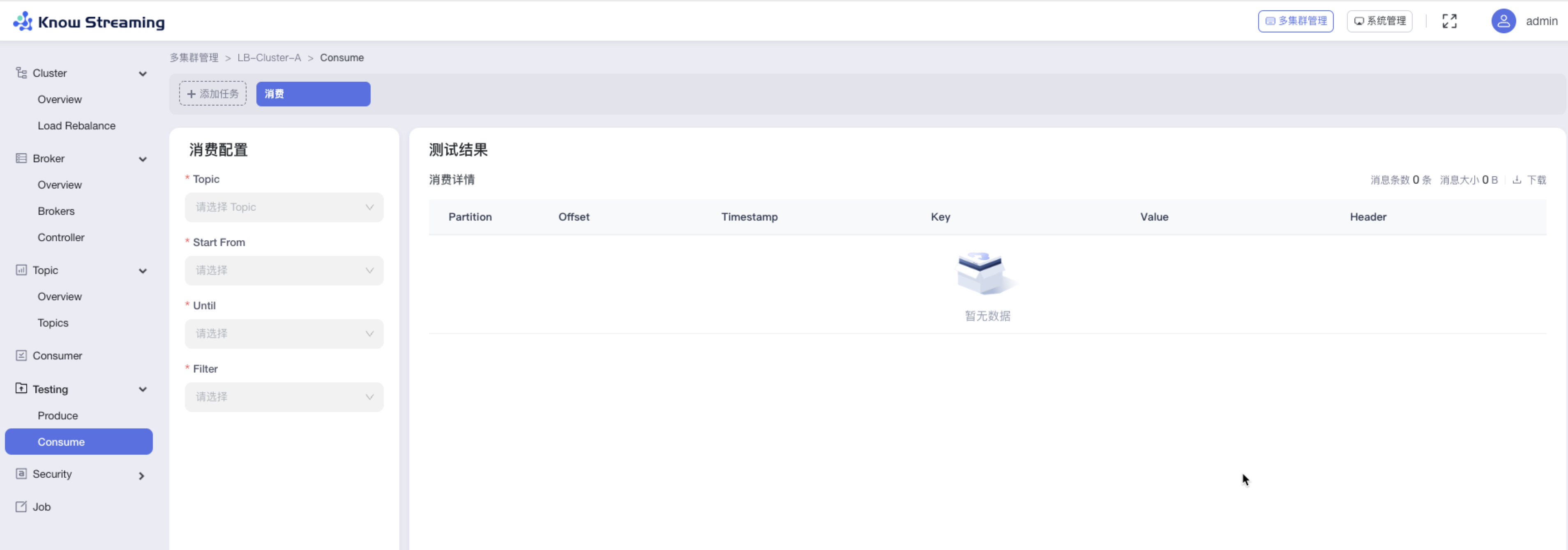

- 增加消费者消息模拟器,支持 Data、Flow、Header、Options 自定义配置(企业版)

|

||||

|

||||

**8、Job**

|

||||

|

||||

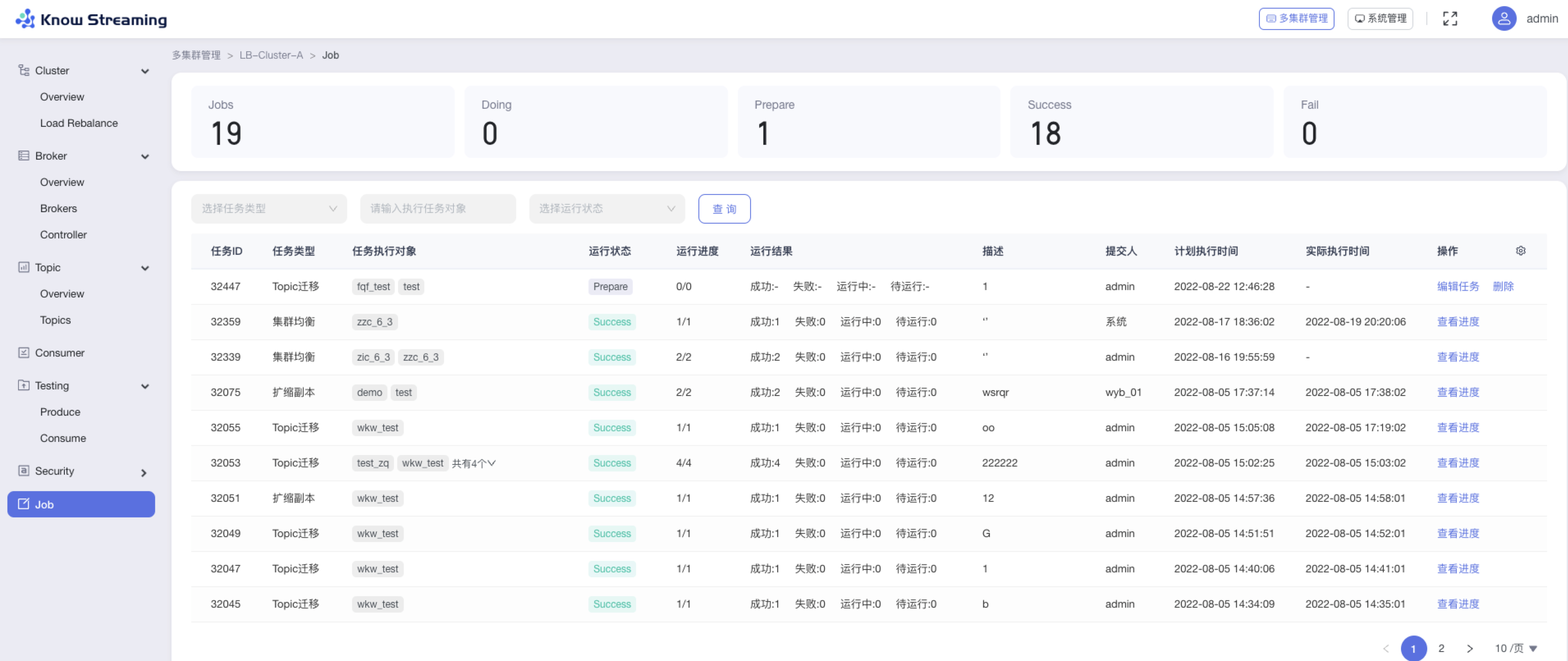

- 优化 Job 模块,支持任务进度管理

|

||||

|

||||

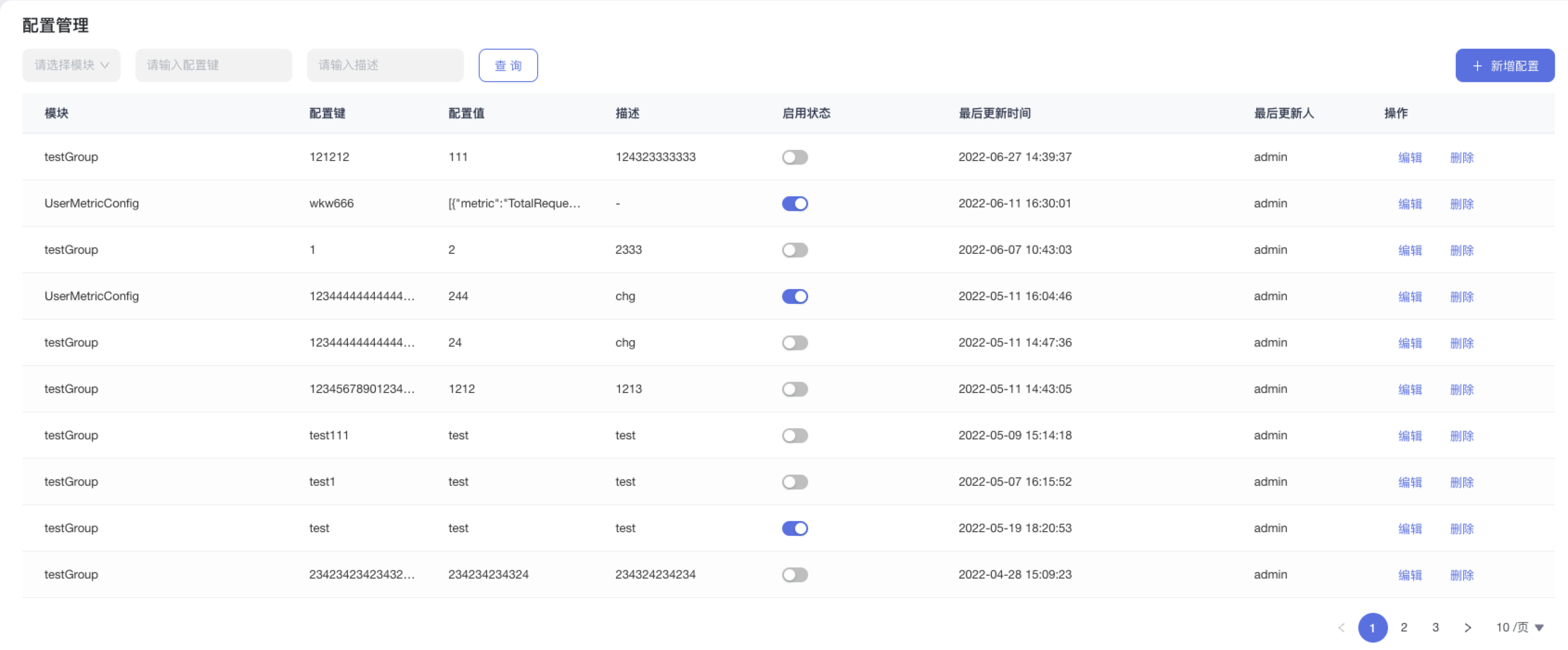

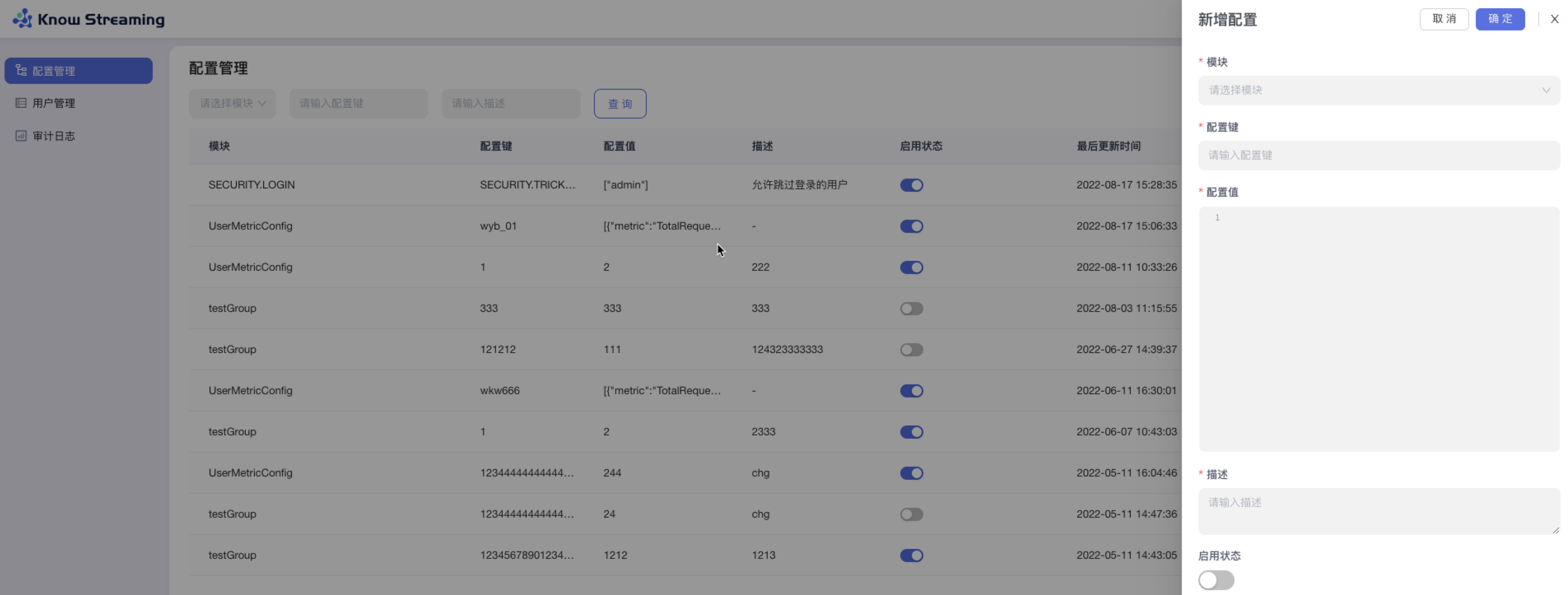

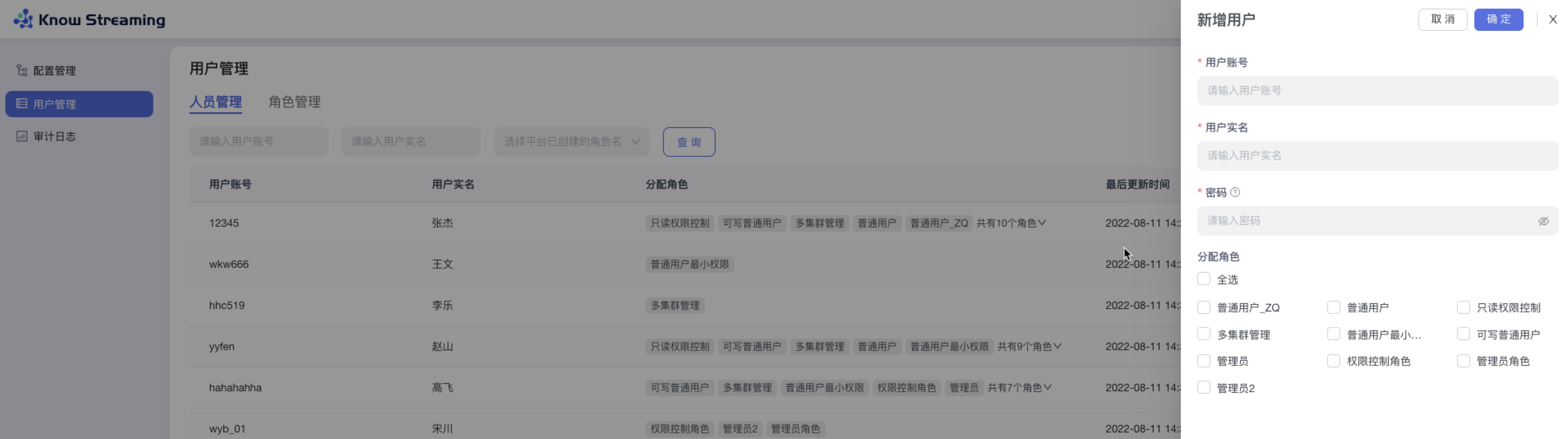

**9、系统管理**

|

||||

|

||||

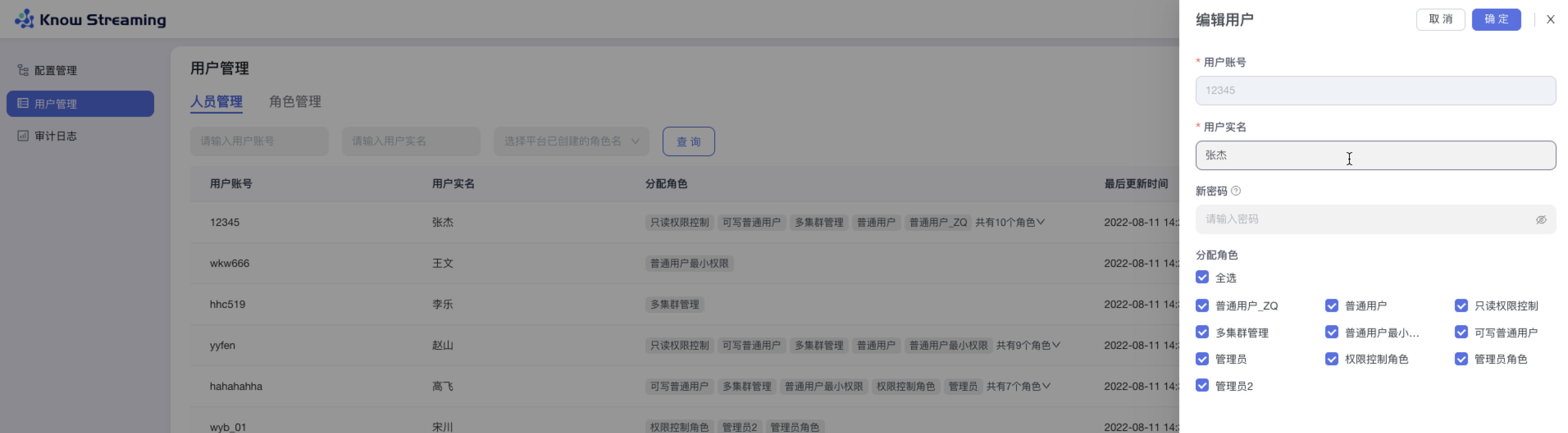

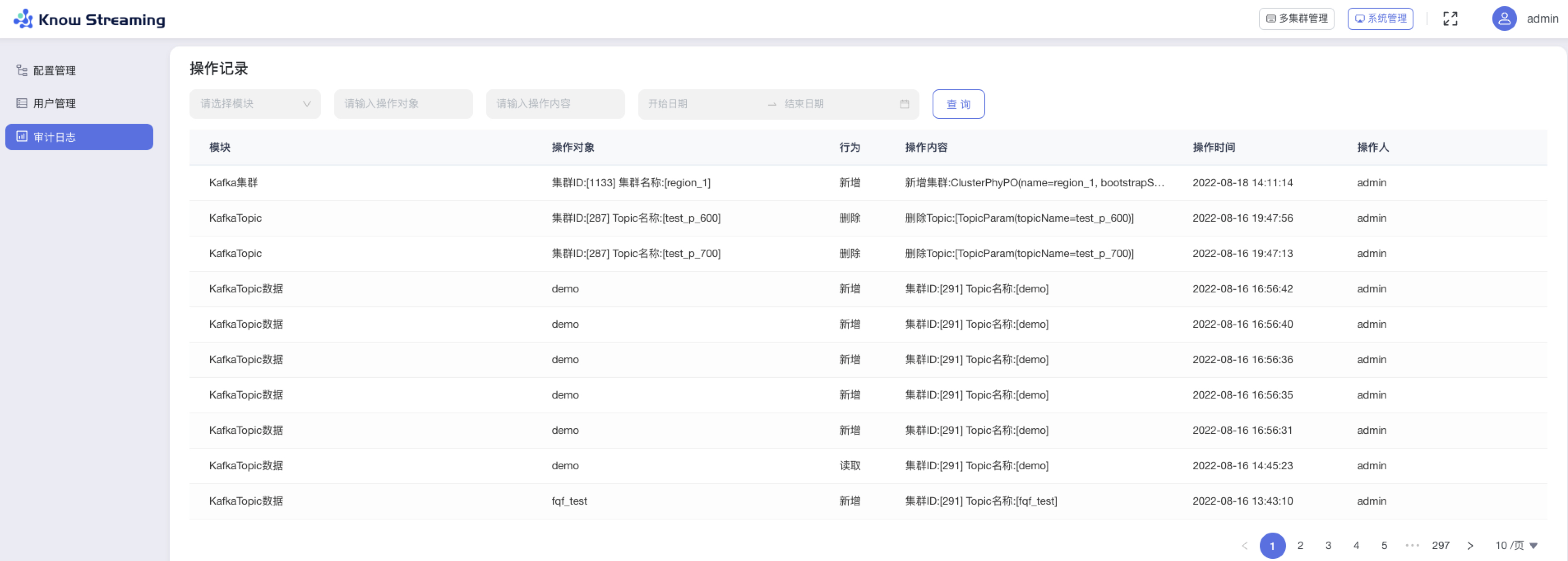

- 优化用户、角色管理体系,支持自定义角色配置页面及操作权限

|

||||

- 优化审计日志信息

|

||||

- 删除多租户体系

|

||||

- 删除工单流程

|

||||

|

||||

---

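## v2.6.0

Release date: 2022-01-24

### Capability improvements
- Added a simple backoff utility class

### Experience improvements
- Added documentation for periodic tasks
- Added documentation for cluster installation, deployment, and usage
- Upgraded Swagger, SpringFramework, SpringBoot, and ECharts
- Improved the log output of the Task module
- Fixed the silent exit, with no log message, when parsing a cron expression fails
- Added department, email, and similar information when onboarding LDAP users
- JMX module: added a backoff mechanism after connection failures and improved error logging
- Made the thread pools and client pools configurable
- Removed the unused jmx_prometheus_javaagent-0.14.0.jar
- Improved migration task names
- Fixed Region capacity information not updating immediately when a Region is created
- Introduced lombok
- Updated the video tutorials
- The LogiKM address in kcm_script.sh can now be passed in by the program
- Added a switch controlling whether third-party and gateway APIs skip login
- Configuration for the extends module no longer has to be set in application.yml

### Bug fixes
- Fixed the SQL syntax exception raised when batch-writing an empty metrics array to the DB
- Fixed version not changing when gateway configuration is added or modified
- Fixed the tooltip being obscured on the cluster list page
- Fixed failure to parse the metadata protocol of newer Broker versions
- Fixed the missing application.yml error when running the Dockerfile
- Fixed the null pointer error when updating a logical cluster

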

## v2.5.0

Release date: 2021-07-10

### Experience improvements
- Renamed the product to LogiKM
- Updated the product icon


## v2.4.1+

Release date: 2021-05-21

### Capability improvements
- Added APIs for directly adding permissions and quotas (v2.4.1)
- Added the ability for API calls to bypass login (v2.4.1)

### Experience improvements
- Upgraded Tomcat to 8.5.66 (v2.4.2)
- Improved the op APIs by splitting the util API into topic and leader APIs (v2.4.1)
- Shortened the keys used in Gateway configuration (v2.4.1)

### Bug fixes
- Fixed the wrong version being shown on the page (v2.4.2)



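## v2.4.0

Release date: 2021-05-18


### Capability improvements

- Added a switch for automatic approval of Apps and Topics
- Added Rack information to Broker metadata
- Upgraded the MySQL driver to support MySQL 8+
- Added an operation record query page

### Experience improvements

- Improved the FAQ explanation of alert groups
- Improved the user manual's explanation of shared and dedicated clusters
- User management page: the front end now prevents users from deleting themselves

### Bug fixes

- Fixed the failing Topic creation API in the op-util class
- Fixed the task that periodically syncs Topics to the DB by querying the Topic list directly from the DB instead of the cache
- Fixed application offline approval failing by filtering out records whose permission is 0 (no permission)
- Fixed the login and permission bypass vulnerability
- Fixed buttons such as "onboard cluster" and "pause monitoring" being shown to the developer role

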

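## v2.3.0

Release date: 2021-02-08


### Capability improvements

- Added support for Docker-based deployment
- A Broker can be designated as a candidate controller
- Gateway configuration can be added and managed
- Consumer group status can be retrieved
- Added JMX authentication for clusters

### Experience improvements

- Improved the flows for editing user roles and changing passwords
- Added search by consumerID
- Improved the prompt copy for "Topic connection information", "reset consumer group offset", and "change Topic retention time"
- Added links to the "Resource Request Document" in the relevant places

### Bug fixes

- Fixed the time axis being displayed incorrectly on Broker monitoring charts
- Fixed the wrong unit for the alert period when creating Nightingale (夜莺) monitoring alert rules


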

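## v2.2.0

Release date: 2021-01-25



### Capability improvements

- Improved the batch operation flow for tickets
- Added retrieval of real-time 75th/99th percentile latency data for Topics
- Added a scheduled task that periodically writes ownerless Topics not yet stored in the DB into the DB

### Experience improvements

- Added links to the "Cluster Onboarding Document" in the relevant places
- Clarified the meaning of physical and logical clusters
- Show the Region a Topic belongs to on the Topic detail page and in the Topic partition expansion dialog
- Improved the flow for configuring Topic data retention time during Topic approval
- Improved the error messages shown when requesting and approving Topics/applications
- Improved the action copy for Topic data sampling
- Improved the prompt shown when operators delete a Topic
- Improved the deletion logic and prompt for operators deleting a Region
- Improved the prompt for operators deleting a logical cluster
- Improved the file type restrictions when uploading cluster configuration files

### Bug fixes

- Fixed incorrect validation of special characters when entering an application name
- Fixed ordinary users being able to access application details beyond their permissions
- Fixed the data compression format being unavailable after a Kafka version upgrade
- Fixed logical clusters or Topics still being displayed after deletion
- Fixed the result being reported repeatedly when performing a Leader rebalance

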

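## v2.1.0

Release date: 2020-12-19



### Experience improvements

- Improved the background styling during page load
- Improved the flow for ordinary users requesting Topic permissions
- Improved the permission restrictions on requesting Topic quotas and partitions
- Improved the prompt for revoking Topic permissions
- Improved the field names in the quota request form
- Improved the flow for resetting consumer offsets
- Improved the form for creating Topic migration tasks
- Improved the dialog styling for Topic partition expansion
- Improved the styling of cluster Broker monitoring charts
- Improved the form for creating a logical cluster
- Improved the prompt copy for cluster security protocols

### Bug fixes

- Fixed intermittent failures when resetting consumer offsets



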

@@ -1,655 +0,0 @@

esaddr=127.0.0.1
port=8060
curl -s --connect-timeout 10 -o /dev/null http://${esaddr}:${port}/_cat/nodes >/dev/null 2>&1
if [ "$?" != "0" ];then
    echo "Elasticsearch is unreachable; once it is installed, check it and run this script again"
    exit
fi

curl -s --connect-timeout 10 -o /dev/null -X POST -H 'cache-control: no-cache' -H 'content-type: application/json' http://${esaddr}:${port}/_template/ks_kafka_broker_metric -d '{
    "order" : 10,
    "index_patterns" : [
      "ks_kafka_broker_metric*"
    ],
    "settings" : {
      "index" : {
        "number_of_shards" : "10"
      }
    },
    "mappings" : {
      "properties" : {
        "brokerId" : {
          "type" : "long"
        },
        "routingValue" : {
          "type" : "text",
          "fields" : {
            "keyword" : {
              "ignore_above" : 256,
              "type" : "keyword"
            }
          }
        },
        "clusterPhyId" : {
          "type" : "long"
        },
        "metrics" : {
          "properties" : {
            "NetworkProcessorAvgIdle" : {
              "type" : "float"
            },
            "UnderReplicatedPartitions" : {
              "type" : "float"
            },
            "BytesIn_min_15" : {
              "type" : "float"
            },
            "HealthCheckTotal" : {
              "type" : "float"
            },
            "RequestHandlerAvgIdle" : {
              "type" : "float"
            },
            "connectionsCount" : {
              "type" : "float"
            },
            "BytesIn_min_5" : {
              "type" : "float"
            },
            "HealthScore" : {
              "type" : "float"
            },
            "BytesOut" : {
              "type" : "float"
            },
            "BytesOut_min_15" : {
              "type" : "float"
            },
            "BytesIn" : {
              "type" : "float"
            },
            "BytesOut_min_5" : {
              "type" : "float"
            },
            "TotalRequestQueueSize" : {
              "type" : "float"
            },
            "MessagesIn" : {
              "type" : "float"
            },
            "TotalProduceRequests" : {
              "type" : "float"
            },
            "HealthCheckPassed" : {
              "type" : "float"
            },
            "TotalResponseQueueSize" : {
              "type" : "float"
            }
          }
        },
        "key" : {
          "type" : "text",
          "fields" : {
            "keyword" : {
              "ignore_above" : 256,
              "type" : "keyword"
            }
          }
        },
        "timestamp" : {
          "format" : "yyyy-MM-dd HH:mm:ss Z||yyyy-MM-dd HH:mm:ss||yyyy-MM-dd HH:mm:ss.SSS Z||yyyy-MM-dd HH:mm:ss.SSS||yyyy-MM-dd HH:mm:ss,SSS||yyyy/MM/dd HH:mm:ss||yyyy-MM-dd HH:mm:ss,SSS Z||yyyy/MM/dd HH:mm:ss,SSS Z||epoch_millis",
          "index" : true,
          "type" : "date",
          "doc_values" : true
        }

|

||||

}

|

||||

},

|

||||

"aliases" : { }

|

||||

}'

|

||||

|

||||

curl -s -o /dev/null -X POST -H 'cache-control: no-cache' -H 'content-type: application/json' http://${esaddr}:${port}/_template/ks_kafka_cluster_metric -d '{
  "order" : 10,
  "index_patterns" : [
    "ks_kafka_cluster_metric*"
  ],
  "settings" : {
    "index" : {
      "number_of_shards" : "10"
    }
  },
  "mappings" : {
    "properties" : {
      "routingValue" : {
        "type" : "text",
        "fields" : {
          "keyword" : {
            "ignore_above" : 256,
            "type" : "keyword"
          }
        }
      },
      "clusterPhyId" : {
        "type" : "long"
      },
      "metrics" : {
        "properties" : {
          "Connections" : {
            "type" : "double"
          },
          "BytesIn_min_15" : {
            "type" : "double"
          },
          "PartitionURP" : {
            "type" : "double"
          },
          "HealthScore_Topics" : {
            "type" : "double"
          },
          "EventQueueSize" : {
            "type" : "double"
          },
          "ActiveControllerCount" : {
            "type" : "double"
          },
          "GroupDeads" : {
            "type" : "double"
          },
          "BytesIn_min_5" : {
            "type" : "double"
          },
          "HealthCheckTotal_Topics" : {
            "type" : "double"
          },
          "Partitions" : {
            "type" : "double"
          },
          "BytesOut" : {
            "type" : "double"
          },
          "Groups" : {
            "type" : "double"
          },
          "BytesOut_min_15" : {
            "type" : "double"
          },
          "TotalRequestQueueSize" : {
            "type" : "double"
          },
          "HealthCheckPassed_Groups" : {
            "type" : "double"
          },
          "TotalProduceRequests" : {
            "type" : "double"
          },
          "HealthCheckPassed" : {
            "type" : "double"
          },
          "TotalLogSize" : {
            "type" : "double"
          },
          "GroupEmptys" : {
            "type" : "double"
          },
          "PartitionNoLeader" : {
            "type" : "double"
          },
          "HealthScore_Brokers" : {
            "type" : "double"
          },
          "Messages" : {
            "type" : "double"
          },
          "Topics" : {
            "type" : "double"
          },
          "PartitionMinISR_E" : {
            "type" : "double"
          },
          "HealthCheckTotal" : {
            "type" : "double"
          },
          "Brokers" : {
            "type" : "double"
          },
          "Replicas" : {
            "type" : "double"
          },
          "HealthCheckTotal_Groups" : {
            "type" : "double"
          },
          "GroupRebalances" : {
            "type" : "double"
          },
          "MessageIn" : {
            "type" : "double"
          },
          "HealthScore" : {
            "type" : "double"
          },
          "HealthCheckPassed_Topics" : {
            "type" : "double"
          },
          "HealthCheckTotal_Brokers" : {
            "type" : "double"
          },
          "PartitionMinISR_S" : {
            "type" : "double"
          },
          "BytesIn" : {
            "type" : "double"
          },
          "BytesOut_min_5" : {
            "type" : "double"
          },
          "GroupActives" : {
            "type" : "double"
          },
          "MessagesIn" : {
            "type" : "double"
          },
          "GroupReBalances" : {
            "type" : "double"
          },
          "HealthCheckPassed_Brokers" : {
            "type" : "double"
          },
          "HealthScore_Groups" : {
            "type" : "double"
          },
          "TotalResponseQueueSize" : {
            "type" : "double"
          },
          "Zookeepers" : {
            "type" : "double"
          },
          "LeaderMessages" : {
            "type" : "double"
          },
          "HealthScore_Cluster" : {
            "type" : "double"
          },
          "HealthCheckPassed_Cluster" : {
            "type" : "double"
          },
          "HealthCheckTotal_Cluster" : {
            "type" : "double"
          }
        }
      },
      "key" : {
        "type" : "text",
        "fields" : {
          "keyword" : {
            "ignore_above" : 256,
            "type" : "keyword"
          }
        }
      },
      "timestamp" : {
        "format" : "yyyy-MM-dd HH:mm:ss Z||yyyy-MM-dd HH:mm:ss||yyyy-MM-dd HH:mm:ss.SSS Z||yyyy-MM-dd HH:mm:ss.SSS||yyyy-MM-dd HH:mm:ss,SSS||yyyy/MM/dd HH:mm:ss||yyyy-MM-dd HH:mm:ss,SSS Z||yyyy/MM/dd HH:mm:ss,SSS Z||epoch_millis",
        "type" : "date"
      }
    }
  },
  "aliases" : { }
}'

curl -s -o /dev/null -X POST -H 'cache-control: no-cache' -H 'content-type: application/json' http://${esaddr}:${port}/_template/ks_kafka_group_metric -d '{
  "order" : 10,
  "index_patterns" : [
    "ks_kafka_group_metric*"
  ],
  "settings" : {
    "index" : {
      "number_of_shards" : "10"
    }
  },
  "mappings" : {
    "properties" : {
      "group" : {
        "type" : "keyword"
      },
      "partitionId" : {
        "type" : "long"
      },
      "routingValue" : {
        "type" : "text",
        "fields" : {
          "keyword" : {
            "ignore_above" : 256,
            "type" : "keyword"
          }
        }
      },
      "clusterPhyId" : {
        "type" : "long"
      },
      "topic" : {
        "type" : "keyword"
      },
      "metrics" : {
        "properties" : {
          "HealthScore" : {
            "type" : "float"
          },
          "Lag" : {
            "type" : "float"
          },
          "OffsetConsumed" : {
            "type" : "float"
          },
          "HealthCheckTotal" : {
            "type" : "float"
          },
          "HealthCheckPassed" : {
            "type" : "float"
          }
        }
      },
      "groupMetric" : {
        "type" : "keyword"
      },
      "key" : {
        "type" : "text",
        "fields" : {
          "keyword" : {
            "ignore_above" : 256,
            "type" : "keyword"
          }
        }
      },
      "timestamp" : {
        "format" : "yyyy-MM-dd HH:mm:ss Z||yyyy-MM-dd HH:mm:ss||yyyy-MM-dd HH:mm:ss.SSS Z||yyyy-MM-dd HH:mm:ss.SSS||yyyy-MM-dd HH:mm:ss,SSS||yyyy/MM/dd HH:mm:ss||yyyy-MM-dd HH:mm:ss,SSS Z||yyyy/MM/dd HH:mm:ss,SSS Z||epoch_millis",
        "index" : true,
        "type" : "date",
        "doc_values" : true
      }
    }
  },
  "aliases" : { }
}'

curl -s -o /dev/null -X POST -H 'cache-control: no-cache' -H 'content-type: application/json' http://${esaddr}:${port}/_template/ks_kafka_partition_metric -d '{
  "order" : 10,
  "index_patterns" : [
    "ks_kafka_partition_metric*"
  ],
  "settings" : {
    "index" : {
      "number_of_shards" : "10"
    }
  },
  "mappings" : {
    "properties" : {
      "brokerId" : {
        "type" : "long"
      },
      "partitionId" : {
        "type" : "long"
      },
      "routingValue" : {
        "type" : "text",
        "fields" : {
          "keyword" : {
            "ignore_above" : 256,
            "type" : "keyword"
          }
        }
      },
      "clusterPhyId" : {
        "type" : "long"
      },
      "topic" : {
        "type" : "keyword"
      },
      "metrics" : {
        "properties" : {
          "LogStartOffset" : {
            "type" : "float"
          },
          "Messages" : {
            "type" : "float"
          },
          "LogEndOffset" : {
            "type" : "float"
          }
        }
      },
      "key" : {
        "type" : "text",
        "fields" : {
          "keyword" : {
            "ignore_above" : 256,
            "type" : "keyword"
          }
        }
      },
      "timestamp" : {
        "format" : "yyyy-MM-dd HH:mm:ss Z||yyyy-MM-dd HH:mm:ss||yyyy-MM-dd HH:mm:ss.SSS Z||yyyy-MM-dd HH:mm:ss.SSS||yyyy-MM-dd HH:mm:ss,SSS||yyyy/MM/dd HH:mm:ss||yyyy-MM-dd HH:mm:ss,SSS Z||yyyy/MM/dd HH:mm:ss,SSS Z||epoch_millis",
        "index" : true,
        "type" : "date",
        "doc_values" : true
      }
    }
  },
  "aliases" : { }
}'

curl -s -o /dev/null -X POST -H 'cache-control: no-cache' -H 'content-type: application/json' http://${esaddr}:${port}/_template/ks_kafka_replication_metric -d '{
  "order" : 10,
  "index_patterns" : [
    "ks_kafka_replication_metric*"
  ],
  "settings" : {
    "index" : {
      "number_of_shards" : "10"
    }
  },
  "mappings" : {
    "properties" : {
      "brokerId" : {
        "type" : "long"
      },
      "partitionId" : {
        "type" : "long"
      },
      "routingValue" : {
        "type" : "text",
        "fields" : {
          "keyword" : {
            "ignore_above" : 256,
            "type" : "keyword"
          }
        }
      },
      "clusterPhyId" : {
        "type" : "long"
      },
      "topic" : {
        "type" : "keyword"
      },
      "metrics" : {
        "properties" : {
          "LogStartOffset" : {
            "type" : "float"
          },
          "Messages" : {
            "type" : "float"
          },
          "LogEndOffset" : {
            "type" : "float"
          }
        }
      },
      "key" : {
        "type" : "text",
        "fields" : {
          "keyword" : {
            "ignore_above" : 256,
            "type" : "keyword"
          }
        }
      },
      "timestamp" : {
        "format" : "yyyy-MM-dd HH:mm:ss Z||yyyy-MM-dd HH:mm:ss||yyyy-MM-dd HH:mm:ss.SSS Z||yyyy-MM-dd HH:mm:ss.SSS||yyyy-MM-dd HH:mm:ss,SSS||yyyy/MM/dd HH:mm:ss||yyyy-MM-dd HH:mm:ss,SSS Z||yyyy/MM/dd HH:mm:ss,SSS Z||epoch_millis",
        "index" : true,
        "type" : "date",
        "doc_values" : true
      }
    }
  },
  "aliases" : { }
}'

curl -s -o /dev/null -X POST -H 'cache-control: no-cache' -H 'content-type: application/json' http://${esaddr}:${port}/_template/ks_kafka_topic_metric -d '{
  "order" : 10,
  "index_patterns" : [
    "ks_kafka_topic_metric*"
  ],
  "settings" : {
    "index" : {
      "number_of_shards" : "10"
    }
  },
  "mappings" : {
    "properties" : {
      "brokerId" : {
        "type" : "long"
      },
      "routingValue" : {
        "type" : "text",
        "fields" : {
          "keyword" : {
            "ignore_above" : 256,
            "type" : "keyword"
          }
        }
      },
      "topic" : {
        "type" : "keyword"
      },
      "clusterPhyId" : {
        "type" : "long"
      },
      "metrics" : {
        "properties" : {
          "BytesIn_min_15" : {
            "type" : "float"
          },
          "Messages" : {
            "type" : "float"
          },
          "BytesRejected" : {
            "type" : "float"
          },
          "PartitionURP" : {
            "type" : "float"
          },
          "HealthCheckTotal" : {
            "type" : "float"
          },
          "ReplicationCount" : {
            "type" : "float"
          },
          "ReplicationBytesOut" : {
            "type" : "float"
          },
          "ReplicationBytesIn" : {
            "type" : "float"
          },
          "FailedFetchRequests" : {
            "type" : "float"
          },
          "BytesIn_min_5" : {
            "type" : "float"
          },
          "HealthScore" : {
            "type" : "float"
          },
          "LogSize" : {
            "type" : "float"
          },
          "BytesOut" : {
            "type" : "float"
          },
          "BytesOut_min_15" : {
            "type" : "float"
          },
          "FailedProduceRequests" : {
            "type" : "float"
          },
          "BytesIn" : {
            "type" : "float"
          },
          "BytesOut_min_5" : {
            "type" : "float"
          },
          "MessagesIn" : {
            "type" : "float"
          },
          "TotalProduceRequests" : {
            "type" : "float"
          },
          "HealthCheckPassed" : {
            "type" : "float"
          }
        }
      },
      "brokerAgg" : {
        "type" : "keyword"
      },
      "key" : {
        "type" : "text",
        "fields" : {
          "keyword" : {
            "ignore_above" : 256,
            "type" : "keyword"
          }
        }
      },
      "timestamp" : {
        "format" : "yyyy-MM-dd HH:mm:ss Z||yyyy-MM-dd HH:mm:ss||yyyy-MM-dd HH:mm:ss.SSS Z||yyyy-MM-dd HH:mm:ss.SSS||yyyy-MM-dd HH:mm:ss,SSS||yyyy/MM/dd HH:mm:ss||yyyy-MM-dd HH:mm:ss,SSS Z||yyyy/MM/dd HH:mm:ss,SSS Z||epoch_millis",
        "index" : true,
        "type" : "date",
        "doc_values" : true
      }
    }
  },
  "aliases" : { }
}'

for i in {0..6};
do
    logdate=_$(date -d "${i} day ago" +%Y-%m-%d)
    curl -s --connect-timeout 10 -o /dev/null -X PUT http://${esaddr}:${port}/ks_kafka_broker_metric${logdate} && \
    curl -s -o /dev/null -X PUT http://${esaddr}:${port}/ks_kafka_cluster_metric${logdate} && \
    curl -s -o /dev/null -X PUT http://${esaddr}:${port}/ks_kafka_group_metric${logdate} && \
    curl -s -o /dev/null -X PUT http://${esaddr}:${port}/ks_kafka_partition_metric${logdate} && \
    curl -s -o /dev/null -X PUT http://${esaddr}:${port}/ks_kafka_replication_metric${logdate} && \
    curl -s -o /dev/null -X PUT http://${esaddr}:${port}/ks_kafka_topic_metric${logdate} || \
    exit 2
done
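
The `curl -s -o /dev/null` calls in the loop above discard the HTTP response, and curl exits with status 0 on an HTTP error unless `--fail` is given, so the `&& ... || exit 2` chain only catches connection-level failures. The following is a minimal sketch of a stricter variant, assuming GNU `date` and the same `esaddr`/`port` values as the script. The helpers `index_suffix`, `is_http_success`, `create_index`, and `init_indices` are hypothetical names used for illustration and are not part of the original script.

```shell
#!/bin/bash
# Assumed defaults, matching the values used by the script above.
esaddr=${esaddr:-127.0.0.1}
port=${port:-8060}

# Hypothetical helper: date suffix for the index of N days ago (needs GNU date).
index_suffix() {
    echo "_$(date -d "$1 day ago" +%Y-%m-%d)"
}

# Hypothetical helper: succeed only for 2xx HTTP status codes.
is_http_success() {
    case "$1" in
        2[0-9][0-9]) return 0 ;;
        *) return 1 ;;
    esac
}

# Hypothetical helper: PUT one index and report the HTTP status on failure.
# curl prints 000 as the status code when no HTTP response was received.
create_index() {
    local name=$1 code
    code=$(curl -s --connect-timeout 10 -o /dev/null -w '%{http_code}' \
        -X PUT "http://${esaddr}:${port}/${name}")
    if ! is_http_success "$code"; then
        echo "failed to create ${name}: HTTP ${code}" >&2
        return 1
    fi
}

# Create the same seven daily indices per metric type as the loop above.
init_indices() {
    local i prefix
    for i in {0..6}; do
        for prefix in ks_kafka_broker_metric ks_kafka_cluster_metric \
                      ks_kafka_group_metric ks_kafka_partition_metric \
                      ks_kafka_replication_metric ks_kafka_topic_metric; do
            create_index "${prefix}$(index_suffix "$i")" || return 2
        done
    done
}
```

Calling `init_indices || exit 2` would reproduce the original exit code. Elasticsearch answers with an error status when an index already exists, so a variant meant to be rerun would probably need to tolerate that case explicitly.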

@@ -1,16 +0,0 @@

#!/bin/bash

cd `dirname $0`/../libs
target_dir=`pwd`

pid=`ps ax | grep -i 'ks-km' | grep ${target_dir} | grep java | grep -v grep | awk '{print $1}'`
if [ -z "$pid" ] ; then
    echo "No ks-km running."
    exit -1;
fi

echo "The ks-km (${pid}) is running..."

kill ${pid}

echo "Send shutdown request to ks-km (${pid}) OK"
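
The shutdown script above sends `SIGTERM` and returns immediately, so its final message only confirms that the signal was sent, not that the process has stopped. The following is a minimal sketch of one way to wait for the process to exit and escalate after a grace period. The helper names `wait_for_exit` and `stop_process` and the 30-second default are assumptions made for illustration, not part of the original script.

```shell
#!/bin/bash

# Hypothetical helper: wait up to $2 seconds for process $1 to exit.
# Returns 0 once the process is gone, 1 if it is still alive at the deadline.
wait_for_exit() {
    local pid=$1 timeout=${2:-30} waited=0
    while kill -0 "$pid" 2>/dev/null; do
        if [ "$waited" -ge "$timeout" ]; then
            return 1
        fi
        sleep 1
        waited=$((waited + 1))
    done
    return 0
}

# Hypothetical helper: send SIGTERM first, then SIGKILL only if the
# grace period expires without the process exiting.
stop_process() {
    local pid=$1 timeout=${2:-30}
    kill "$pid" 2>/dev/null || return 0   # nothing to stop
    if ! wait_for_exit "$pid" "$timeout"; then
        echo "Process ${pid} did not stop within ${timeout}s, sending SIGKILL" >&2
        kill -9 "$pid" 2>/dev/null
    fi
}
```

With these helpers, the `kill ${pid}` line could become `stop_process "${pid}" 30`, under the assumption that `pid` holds a single process ID.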

@@ -1,82 +0,0 @@

error_exit ()
{
    echo "ERROR: $1 !!"
    exit 1
}

[ ! -e "$JAVA_HOME/bin/java" ] && JAVA_HOME=$HOME/jdk/java
[ ! -e "$JAVA_HOME/bin/java" ] && JAVA_HOME=/usr/java
[ ! -e "$JAVA_HOME/bin/java" ] && unset JAVA_HOME

# Added guard: $darwin is tested below but never set in this file, and an
# unset variable used as a command counts as success, so set it explicitly.
darwin=false
case "`uname`" in
    Darwin*) darwin=true ;;
esac

if [ -z "$JAVA_HOME" ]; then
  if $darwin; then

    if [ -x '/usr/libexec/java_home' ] ; then
      export JAVA_HOME=`/usr/libexec/java_home`

    elif [ -d "/System/Library/Frameworks/JavaVM.framework/Versions/CurrentJDK/Home" ]; then
      export JAVA_HOME="/System/Library/Frameworks/JavaVM.framework/Versions/CurrentJDK/Home"
    fi
  else
    JAVA_PATH=`dirname $(readlink -f $(which javac))`
    if [ "x$JAVA_PATH" != "x" ]; then
      export JAVA_HOME=`dirname $JAVA_PATH 2>/dev/null`
    fi
  fi
  if [ -z "$JAVA_HOME" ]; then
    error_exit "Please set the JAVA_HOME variable in your environment, We need java(x64)! jdk8 or later is better!"
  fi
fi

export WEB_SERVER="ks-km"
export JAVA_HOME
export JAVA="$JAVA_HOME/bin/java"
export BASE_DIR=`cd $(dirname $0)/..; pwd`