Compare commits

731 Commits

v2.0.0-alp

...

v3.0.0-bet

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

13354145fc | ||

|

|

2de27719c1 | ||

|

|

21db57b537 | ||

|

|

dfe8d09477 | ||

|

|

90dfa22c64 | ||

|

|

7909f60ff8 | ||

|

|

9a1a8a4c30 | ||

|

|

0b376bd69c | ||

|

|

8a0c23339d | ||

|

|

e7ab3aff16 | ||

|

|

d0948797b9 | ||

|

|

04a5e17451 | ||

|

|

47065c8042 | ||

|

|

488c778736 | ||

|

|

d10a7bcc75 | ||

|

|

afe44a2537 | ||

|

|

9eadafe850 | ||

|

|

dab3eefcc0 | ||

|

|

2b9a6b28d8 | ||

|

|

465f98ca2b | ||

|

|

a0312be4fd | ||

|

|

4a5161372b | ||

|

|

4c9921f752 | ||

|

|

6dd72d40ee | ||

|

|

db49c234bb | ||

|

|

4a9df0c4d9 | ||

|

|

461573c2ba | ||

|

|

291992753f | ||

|

|

fcefe7ac38 | ||

|

|

7da712fcff | ||

|

|

2fd8687624 | ||

|

|

639b1f8336 | ||

|

|

ab3b83e42a | ||

|

|

4818629c40 | ||

|

|

61784c860a | ||

|

|

d5667254f2 | ||

|

|

af2b93983f | ||

|

|

8281301cbd | ||

|

|

0043ab8371 | ||

|

|

500eaace82 | ||

|

|

28e8540c78 | ||

|

|

69adf682e2 | ||

|

|

69cd1ff6e1 | ||

|

|

415d67cc32 | ||

|

|

46a2fec79b | ||

|

|

560b322fca | ||

|

|

effe17ac85 | ||

|

|

7699acfc1b | ||

|

|

6e058240b3 | ||

|

|

f005c6bc44 | ||

|

|

7be462599f | ||

|

|

271ab432d9 | ||

|

|

4114777a4e | ||

|

|

9189a54442 | ||

|

|

b95ee762e3 | ||

|

|

9e3c4dc06b | ||

|

|

1891a3ac86 | ||

|

|

9ecdcac06d | ||

|

|

790cb6a2e1 | ||

|

|

4a98e5f025 | ||

|

|

507abc1d84 | ||

|

|

9b732fbbad | ||

|

|

220f1c6fc3 | ||

|

|

7a950c67b6 | ||

|

|

78f625dc8c | ||

|

|

211d26a3ed | ||

|

|

dce2bc6326 | ||

|

|

90e5d7f6f0 | ||

|

|

71d4e0f9e6 | ||

|

|

580b4534e0 | ||

|

|

fc835e09c6 | ||

|

|

c6e782a637 | ||

|

|

1ddfbfc833 | ||

|

|

dbf637fe0f | ||

|

|

110e129622 | ||

|

|

677e9d1b54 | ||

|

|

ad2adb905e | ||

|

|

5e9de7ac14 | ||

|

|

c63fb8380c | ||

|

|

2d39acc224 | ||

|

|

e68358e05f | ||

|

|

a96f10edf0 | ||

|

|

f03d94935b | ||

|

|

9c1320cd95 | ||

|

|

4f2ae588a5 | ||

|

|

eff51034b7 | ||

|

|

18832dc448 | ||

|

|

5262ae8907 | ||

|

|

7f251679fa | ||

|

|

5f5920b427 | ||

|

|

65a16d058a | ||

|

|

a73484d23a | ||

|

|

47887a20c6 | ||

|

|

9465c6f198 | ||

|

|

c09872c8c2 | ||

|

|

b0501cc80d | ||

|

|

f0792db6b3 | ||

|

|

e1514c901b | ||

|

|

e90c5003ae | ||

|

|

92a0d5d52c | ||

|

|

8912cb5323 | ||

|

|

d008c19149 | ||

|

|

e844b6444a | ||

|

|

02606cdce2 | ||

|

|

0081720f0e | ||

|

|

cca1e92868 | ||

|

|

69b774a074 | ||

|

|

5656b03fb4 | ||

|

|

02d0dcbb7f | ||

|

|

7b2e06df12 | ||

|

|

4259ae63d7 | ||

|

|

d7b11803bc | ||

|

|

fed298a6d4 | ||

|

|

51832385b1 | ||

|

|

462303fca0 | ||

|

|

4405703e42 | ||

|

|

23e398e121 | ||

|

|

b17bb89d04 | ||

|

|

5590cebf8f | ||

|

|

1fa043f09d | ||

|

|

3bd0af1451 | ||

|

|

1545962745 | ||

|

|

d032571681 | ||

|

|

33fb0acc7e | ||

|

|

1ec68a91e2 | ||

|

|

a23c113a46 | ||

|

|

371ae2c0a5 | ||

|

|

8f8f6ffa27 | ||

|

|

475fe0d91f | ||

|

|

3d74e60d03 | ||

|

|

83ac83bb28 | ||

|

|

8478fb857c | ||

|

|

7074bdaa9f | ||

|

|

58164294cc | ||

|

|

7c0e9df156 | ||

|

|

bd62212ecb | ||

|

|

2292039b42 | ||

|

|

73f8da8d5a | ||

|

|

e51dbe0ca7 | ||

|

|

482a375e31 | ||

|

|

689c5ce455 | ||

|

|

734a020ecc | ||

|

|

44d537f78c | ||

|

|

b4c60eb910 | ||

|

|

e120b32375 | ||

|

|

de54966d30 | ||

|

|

39a6302c18 | ||

|

|

05ceeea4b0 | ||

|

|

9f8e3373a8 | ||

|

|

42521cbae4 | ||

|

|

b23c35197e | ||

|

|

70f28d9ac4 | ||

|

|

912d73d98a | ||

|

|

2a720fce6f | ||

|

|

e4534c359f | ||

|

|

b91bec15f2 | ||

|

|

67ad5cacb7 | ||

|

|

b4a739476a | ||

|

|

a7bf2085db | ||

|

|

c3802cf48b | ||

|

|

54711c4491 | ||

|

|

fcb52a69c0 | ||

|

|

1b632f9754 | ||

|

|

73d7a0ecdc | ||

|

|

08943593b3 | ||

|

|

c949a88f20 | ||

|

|

a49c11f655 | ||

|

|

a66aed4a88 | ||

|

|

0045c953a0 | ||

|

|

fdce41b451 | ||

|

|

4d5e4d0f00 | ||

|

|

82c9b6481e | ||

|

|

053d4dcb18 | ||

|

|

e1b2c442aa | ||

|

|

0ed8ba8ca4 | ||

|

|

f195847c68 | ||

|

|

5beb13b17e | ||

|

|

7d9ec05062 | ||

|

|

fc604a9eaf | ||

|

|

4f3c1ad9b6 | ||

|

|

6d45ed586c | ||

|

|

1afb633b4f | ||

|

|

34d9f9174b | ||

|

|

3b0c208eff | ||

|

|

05022f8db4 | ||

|

|

3336de457a | ||

|

|

10a27bc29c | ||

|

|

542e5d3c2d | ||

|

|

7372617b14 | ||

|

|

89735a130b | ||

|

|

859cf74bd6 | ||

|

|

e2744ab399 | ||

|

|

16bd065098 | ||

|

|

71c52e6dd7 | ||

|

|

a7f8c3ced3 | ||

|

|

f3f0432c65 | ||

|

|

426ba2d150 | ||

|

|

2790099efa | ||

|

|

f6ba8bc95e | ||

|

|

d6181522c0 | ||

|

|

04cf071ca6 | ||

|

|

e4371b5d02 | ||

|

|

52c52b2a0d | ||

|

|

8f40f10575 | ||

|

|

fe0f6fcd0b | ||

|

|

31b1ad8bb4 | ||

|

|

373680d854 | ||

|

|

9e3bc80495 | ||

|

|

89405fe003 | ||

|

|

b9ea3865a5 | ||

|

|

b5bd643814 | ||

|

|

52ccaeffd5 | ||

|

|

18136c12fd | ||

|

|

dec3f9e75e | ||

|

|

ccc0ee4d18 | ||

|

|

69e9708080 | ||

|

|

5944ba099a | ||

|

|

ada2718b5e | ||

|

|

1f87bd63e7 | ||

|

|

c0f3259cf6 | ||

|

|

e1d5749a40 | ||

|

|

a8d7eb27d9 | ||

|

|

1eecdf3829 | ||

|

|

be8b345889 | ||

|

|

074da389b3 | ||

|

|

4df2dc09fe | ||

|

|

e8d42ba074 | ||

|

|

c036483680 | ||

|

|

2818584db6 | ||

|

|

37585f760d | ||

|

|

f5477a03a1 | ||

|

|

50388425b2 | ||

|

|

725c59eab0 | ||

|

|

7bf1de29a4 | ||

|

|

d90c3fc7dd | ||

|

|

80785ce072 | ||

|

|

44ea896de8 | ||

|

|

d30cb8a0f0 | ||

|

|

6c7b333b34 | ||

|

|

6d34a00e77 | ||

|

|

1f353e10ce | ||

|

|

4e10f8d1c5 | ||

|

|

a22cd853fc | ||

|

|

354e0d6a87 | ||

|

|

dfabe28645 | ||

|

|

fce230da48 | ||

|

|

055ba9bda6 | ||

|

|

ec19c3b4dd | ||

|

|

37aa526404 | ||

|

|

86c1faa40f | ||

|

|

8dcf15d0f9 | ||

|

|

6835e1e680 | ||

|

|

d8f89b8f67 | ||

|

|

ec28eba781 | ||

|

|

5ef8fff5bc | ||

|

|

4f317b76fa | ||

|

|

61672637dc | ||

|

|

ecf6e8f664 | ||

|

|

4115975320 | ||

|

|

21904a8609 | ||

|

|

10b0a3dabb | ||

|

|

b2091e9aed | ||

|

|

f2cb5bd77c | ||

|

|

19c61c52e6 | ||

|

|

b327359183 | ||

|

|

9e9bb72e17 | ||

|

|

a23907e009 | ||

|

|

ad131f5a2c | ||

|

|

dbeae4ca68 | ||

|

|

0fb0e94848 | ||

|

|

95d2a82d35 | ||

|

|

5bc6eb6774 | ||

|

|

3ba81e9aaa | ||

|

|

329a9b59c1 | ||

|

|

39cccd568e | ||

|

|

19b7f6ad8c | ||

|

|

41c000cf47 | ||

|

|

1b8ea61e87 | ||

|

|

22c26e24b1 | ||

|

|

396045177c | ||

|

|

4538593236 | ||

|

|

8086ef355b | ||

|

|

60d038fe46 | ||

|

|

ff0f4463be | ||

|

|

820571d993 | ||

|

|

e311d3767c | ||

|

|

24d7b80244 | ||

|

|

61f99e4d2e | ||

|

|

d5348bcf49 | ||

|

|

5d31d66365 | ||

|

|

29778a0154 | ||

|

|

165c0a5866 | ||

|

|

588323961e | ||

|

|

fd1c0b71c5 | ||

|

|

54fbdcadf9 | ||

|

|

69a30d0cf0 | ||

|

|

b8f9b44f38 | ||

|

|

cbf17d4eb5 | ||

|

|

327e025262 | ||

|

|

6b1e944bba | ||

|

|

668ed4d61b | ||

|

|

312c0584ed | ||

|

|

110d3acb58 | ||

|

|

ddbc60283b | ||

|

|

471bcecfd6 | ||

|

|

0245791b13 | ||

|

|

4794396ce8 | ||

|

|

c7088779d6 | ||

|

|

672905da12 | ||

|

|

47172b13be | ||

|

|

3668a10af6 | ||

|

|

a4e294c03f | ||

|

|

3fd6f4003f | ||

|

|

3eaf5cd530 | ||

|

|

c344fd8ca4 | ||

|

|

09639ca294 | ||

|

|

a81b6dca83 | ||

|

|

b74aefb08f | ||

|

|

fffc0c3add | ||

|

|

757f90aa7a | ||

|

|

022f9eb551 | ||

|

|

6e7b82cfcb | ||

|

|

b5fb24b360 | ||

|

|

b77345222c | ||

|

|

793e81406e | ||

|

|

cef1ec95d2 | ||

|

|

7e1b3c552b | ||

|

|

69736a63b6 | ||

|

|

fb4a9f9056 | ||

|

|

387d89d3af | ||

|

|

65d9ca9d39 | ||

|

|

8c842af4ba | ||

|

|

4faf9262c9 | ||

|

|

be7724c67d | ||

|

|

48d26347f7 | ||

|

|

bdb01ec8b5 | ||

|

|

9047815799 | ||

|

|

05bd94a2cc | ||

|

|

c9f7da84d0 | ||

|

|

bcc124e86a | ||

|

|

48d2733403 | ||

|

|

31fc6e4e56 | ||

|

|

fcdeef0146 | ||

|

|

1cd524c0cc | ||

|

|

0f746917a7 | ||

|

|

a2228d0169 | ||

|

|

e8a679d34b | ||

|

|

1912a42091 | ||

|

|

ca81f96635 | ||

|

|

eb3b8c4b31 | ||

|

|

6740d6d60b | ||

|

|

c46c35b248 | ||

|

|

0b2dcec4bc | ||

|

|

f8e2a4aff4 | ||

|

|

7256db8c4e | ||

|

|

b14d5d9bee | ||

|

|

12e15c3e4b | ||

|

|

51911bf272 | ||

|

|

6dc8061401 | ||

|

|

b8fa4f8797 | ||

|

|

cc0bea7f45 | ||

|

|

4e9124b244 | ||

|

|

f0eabef7b0 | ||

|

|

23e5557958 | ||

|

|

b1d02afa85 | ||

|

|

2edc380f47 | ||

|

|

cea8295c09 | ||

|

|

244bfc993a | ||

|

|

3a272a4493 | ||

|

|

a3300db770 | ||

|

|

b0394ce261 | ||

|

|

3123089790 | ||

|

|

f13cf66676 | ||

|

|

0c8c4d87fb | ||

|

|

066088fdeb | ||

|

|

cf641e41c7 | ||

|

|

5b48322e1b | ||

|

|

9d3f680d58 | ||

|

|

bed28d57e6 | ||

|

|

2538525103 | ||

|

|

6ed798db8c | ||

|

|

8e9d966829 | ||

|

|

be16640f92 | ||

|

|

0e1376dd2e | ||

|

|

0494575aa7 | ||

|

|

bed57534e0 | ||

|

|

1862d631d1 | ||

|

|

c977ce5690 | ||

|

|

84df377516 | ||

|

|

4d9a284f6e | ||

|

|

da7ad8b44a | ||

|

|

4164046323 | ||

|

|

72e743dfd1 | ||

|

|

7eb7edaf0a | ||

|

|

49368aaf76 | ||

|

|

b8c07a966f | ||

|

|

c6bcc0e3aa | ||

|

|

7719339f23 | ||

|

|

8ad64722ed | ||

|

|

611f8b8865 | ||

|

|

38bdc173e8 | ||

|

|

52244325d9 | ||

|

|

3fd3d99b8c | ||

|

|

d4ee5e91a2 | ||

|

|

c2ad2d7238 | ||

|

|

892e195f0e | ||

|

|

c5b1bed7dc | ||

|

|

0e388d7aa7 | ||

|

|

c3a0dbbe48 | ||

|

|

8b95b3ffc7 | ||

|

|

42b78461cd | ||

|

|

9190a41ca5 | ||

|

|

28a7251319 | ||

|

|

20565866ef | ||

|

|

246f10aee5 | ||

|

|

960017280d | ||

|

|

7218aaf52e | ||

|

|

62050cc7b6 | ||

|

|

f88a14ac0a | ||

|

|

9286761c30 | ||

|

|

07c3273247 | ||

|

|

eb8fe77582 | ||

|

|

b68ba0bff6 | ||

|

|

696657c09e | ||

|

|

12bea9b60a | ||

|

|

9334e9552f | ||

|

|

a43b04a98b | ||

|

|

f359ff995d | ||

|

|

9185d2646b | ||

|

|

33e61c762c | ||

|

|

e342e646ff | ||

|

|

ed163a80e0 | ||

|

|

b390df08b5 | ||

|

|

f0b3b9f7f4 | ||

|

|

a67d732507 | ||

|

|

ca0ebe0d75 | ||

|

|

94d113cbe0 | ||

|

|

25c3aeaa5f | ||

|

|

736d5a00b7 | ||

|

|

f1627b214c | ||

|

|

d9265ec7ea | ||

|

|

663e871bed | ||

|

|

5c5eaddef7 | ||

|

|

edaec4f1ae | ||

|

|

6d19acaa6c | ||

|

|

d29a619fbf | ||

|

|

b17808dd91 | ||

|

|

c5321a3667 | ||

|

|

8836691510 | ||

|

|

6568f6525d | ||

|

|

473fc27b49 | ||

|

|

74aeb55acb | ||

|

|

8efcf0529f | ||

|

|

06071c2f9c | ||

|

|

5eb4eca487 | ||

|

|

33f6153e12 | ||

|

|

df3283f526 | ||

|

|

b5901a2819 | ||

|

|

6d5f1402fe | ||

|

|

65e3782b2e | ||

|

|

135981dd30 | ||

|

|

fe5cf2d922 | ||

|

|

e15425cc2e | ||

|

|

c3cb0a4e33 | ||

|

|

cc32976bdd | ||

|

|

bc08318716 | ||

|

|

ee1ab30c2c | ||

|

|

7fa1a66f7e | ||

|

|

946bf37406 | ||

|

|

8706f6931a | ||

|

|

f551674860 | ||

|

|

d90fe0ef07 | ||

|

|

bf979fa3b3 | ||

|

|

b3b88891e9 | ||

|

|

01c5de60dc | ||

|

|

47b8fe5022 | ||

|

|

324b37b875 | ||

|

|

76e7e192d8 | ||

|

|

f9f3c4d923 | ||

|

|

a476476bd1 | ||

|

|

82a60a884a | ||

|

|

f17727de18 | ||

|

|

f1f33c79f4 | ||

|

|

d52eaafdbb | ||

|

|

e7a3e50ed1 | ||

|

|

2e09a87baa | ||

|

|

b92ae7e47e | ||

|

|

f98446e139 | ||

|

|

57a48dadaa | ||

|

|

c65ec68e46 | ||

|

|

d6559be3fc | ||

|

|

6fbf67f9a9 | ||

|

|

59df5b24fe | ||

|

|

3e1544294b | ||

|

|

a12c398816 | ||

|

|

0bd3e28348 | ||

|

|

ad4e39c088 | ||

|

|

2668d96e6a | ||

|

|

357c496aad | ||

|

|

22a513ba22 | ||

|

|

e6dd1119be | ||

|

|

2dbe454e04 | ||

|

|

e3a59b76eb | ||

|

|

01008acfcd | ||

|

|

b67a162d3f | ||

|

|

8bfde9fbaf | ||

|

|

1fdecf8def | ||

|

|

1141d4b833 | ||

|

|

cdac92ca7b | ||

|

|

2a57c260cc | ||

|

|

f41e29ab3a | ||

|

|

8f10624073 | ||

|

|

eb1f8be11e | ||

|

|

3333501ab9 | ||

|

|

0f40820315 | ||

|

|

5f1a839620 | ||

|

|

b9bb1c775d | ||

|

|

1059b7376b | ||

|

|

f38ab4a9ce | ||

|

|

9e7450c012 | ||

|

|

99a3e360fe | ||

|

|

d45f8f78d6 | ||

|

|

648af61116 | ||

|

|

eebf1b89b1 | ||

|

|

f8094bb624 | ||

|

|

ed13e0d2c2 | ||

|

|

aa830589b4 | ||

|

|

999a2bd929 | ||

|

|

d69ee98450 | ||

|

|

f6712c24ad | ||

|

|

89d2772194 | ||

|

|

03352142b6 | ||

|

|

73a51e0c00 | ||

|

|

2e26f8caa6 | ||

|

|

f9bcce9e43 | ||

|

|

2ecc877ba8 | ||

|

|

3f8a3c69e3 | ||

|

|

67c37a0984 | ||

|

|

a58a55d00d | ||

|

|

06d51dd0b8 | ||

|

|

d5db028f57 | ||

|

|

fcb85ff4be | ||

|

|

3695b4363d | ||

|

|

cb11e6437c | ||

|

|

5127bd11ce | ||

|

|

91f90aefa1 | ||

|

|

0a067bce36 | ||

|

|

f0aba433bf | ||

|

|

f06467a0e3 | ||

|

|

68bcd3c710 | ||

|

|

a645733cc5 | ||

|

|

49fe5baf94 | ||

|

|

411ee55653 | ||

|

|

e351ce7411 | ||

|

|

f33e585a71 | ||

|

|

77f3096e0d | ||

|

|

9a5b18c4e6 | ||

|

|

0c7112869a | ||

|

|

f66a4d71ea | ||

|

|

9b0ab878df | ||

|

|

d30b90dfd0 | ||

|

|

efd28f8c27 | ||

|

|

e05e722387 | ||

|

|

748e81956d | ||

|

|

c9a41febce | ||

|

|

18e244b756 | ||

|

|

47676139a3 | ||

|

|

1ed933b7ad | ||

|

|

f6a343ccd6 | ||

|

|

dd6cdc22e5 | ||

|

|

f70f4348b3 | ||

|

|

ec7f801929 | ||

|

|

0f8aca382e | ||

|

|

0270f77eaa | ||

|

|

dcba71ada4 | ||

|

|

6080f76a9c | ||

|

|

e7349161f3 | ||

|

|

2e2907ea09 | ||

|

|

25e84b2a6c | ||

|

|

5efd424172 | ||

|

|

2672502c07 | ||

|

|

83440cc3d9 | ||

|

|

8e5f93be1c | ||

|

|

c1afc07955 | ||

|

|

4a83e14878 | ||

|

|

832320abc6 | ||

|

|

70c237da72 | ||

|

|

edfcc5c023 | ||

|

|

0668debec6 | ||

|

|

02d6463faa | ||

|

|

1fdb85234c | ||

|

|

44b7dd1808 | ||

|

|

e983ee3101 | ||

|

|

75e7e81c05 | ||

|

|

31ce3b9c08 | ||

|

|

ed93c50fef | ||

|

|

4845660eb5 | ||

|

|

c7919210a2 | ||

|

|

9491418f3b | ||

|

|

e8de403286 | ||

|

|

dfb625377b | ||

|

|

2c0f2a8be6 | ||

|

|

787d3cb3e9 | ||

|

|

96ca17d26c | ||

|

|

3dd0f7f2c3 | ||

|

|

10ba0cf976 | ||

|

|

276c15cc23 | ||

|

|

2584b848ad | ||

|

|

6471efed5f | ||

|

|

5b7d7ad65d | ||

|

|

712851a8a5 | ||

|

|

63d291cb47 | ||

|

|

f825c92111 | ||

|

|

419eb2ea41 | ||

|

|

89b58dd64e | ||

|

|

6bc5f81440 | ||

|

|

424f4b7b5e | ||

|

|

9271a1caac | ||

|

|

0ee4df03f9 | ||

|

|

8ac713ce32 | ||

|

|

76b2489fe9 | ||

|

|

6786095154 | ||

|

|

2c5793ef37 | ||

|

|

d483f25b96 | ||

|

|

7118368979 | ||

|

|

59256c2e80 | ||

|

|

1fb8a0db1e | ||

|

|

07d0c8e8fa | ||

|

|

98452ead17 | ||

|

|

d8c9f40377 | ||

|

|

8148d5eec6 | ||

|

|

4c429ad604 | ||

|

|

a9c52de8d5 | ||

|

|

f648aa1f91 | ||

|

|

eaba388bdd | ||

|

|

73e6afcbc6 | ||

|

|

8c3b72adf2 | ||

|

|

ae18ff4262 | ||

|

|

1adc8af543 | ||

|

|

7413df6f1e | ||

|

|

bda8559190 | ||

|

|

b74612fa41 | ||

|

|

22e0c20dcd | ||

|

|

08f92e1100 | ||

|

|

bb12ece46e | ||

|

|

0065438305 | ||

|

|

7f115c1b3e | ||

|

|

4e0114ab0d | ||

|

|

0ef64fa4bd | ||

|

|

84dbc17c22 | ||

|

|

16e16e356d | ||

|

|

978ee885c4 | ||

|

|

850d43df63 | ||

|

|

fc109fd1b1 | ||

|

|

9aefc55534 | ||

|

|

2829947b93 | ||

|

|

0c2af89a1c | ||

|

|

14c2dc9624 | ||

|

|

4f35d710a6 | ||

|

|

fdb5e018e5 | ||

|

|

6001fde25c | ||

|

|

ae63c0adaf | ||

|

|

ad1539c8f6 | ||

|

|

634a0c8cd0 | ||

|

|

773f9a0c63 | ||

|

|

e4e320e9e3 | ||

|

|

3b4b400e6b | ||

|

|

a950be2d95 | ||

|

|

ba6f5ab984 | ||

|

|

f3a5e3f5ed | ||

|

|

e685e621f3 | ||

|

|

2cd2be9b67 | ||

|

|

e73d9e8a03 | ||

|

|

476f74a604 | ||

|

|

ab0d1d99e6 | ||

|

|

d5680ffd5d | ||

|

|

3c091a88d4 | ||

|

|

49b70b33de | ||

|

|

c5ff2716fb | ||

|

|

400fdf0896 | ||

|

|

cbb8c7323c | ||

|

|

60e79f8f77 | ||

|

|

0e829d739a | ||

|

|

62abb274e0 | ||

|

|

e4028785de | ||

|

|

2bb44bcb76 | ||

|

|

684599f81b | ||

|

|

b56d28f5df | ||

|

|

02b9ac04c8 | ||

|

|

2fc283990a | ||

|

|

abb652ebd5 | ||

|

|

55786cb7f7 | ||

|

|

447a575f4f | ||

|

|

49280a8617 | ||

|

|

ff78a9cc35 | ||

|

|

3fea5c9c8c | ||

|

|

aea63cad52 | ||

|

|

800abe9920 | ||

|

|

dd6069e41a | ||

|

|

90d31aeff0 | ||

|

|

4d9a327b1f | ||

|

|

06a97ef076 | ||

|

|

76c2477387 | ||

|

|

bc4dac9cad | ||

|

|

36e3d6c18a | ||

|

|

edfd84a8e3 | ||

|

|

fb20cf6069 | ||

|

|

abbe47f6b9 | ||

|

|

f84d250134 | ||

|

|

3ffb4b8990 | ||

|

|

f70cfabede | ||

|

|

3a81783d77 | ||

|

|

237a4a90ff | ||

|

|

99c7dfc98d | ||

|

|

48aba34370 | ||

|

|

29cca36f2c | ||

|

|

0f5819f5c2 | ||

|

|

373772de2d | ||

|

|

7f5bbe8b5f | ||

|

|

daee57167b | ||

|

|

03467196b9 | ||

|

|

d3f3531cdb | ||

|

|

883b694592 | ||

|

|

6c89d66af9 | ||

|

|

fb0a76b418 | ||

|

|

64f77fca5b | ||

|

|

b1fca2c5be | ||

|

|

108d705f09 | ||

|

|

a77242e66c | ||

|

|

8b153113ff | ||

|

|

6d0ec37135 |

224

.gitignore

vendored

@@ -1,112 +1,112 @@

|

||||

### Intellij ###

|

||||

# Covers JetBrains IDEs: IntelliJ, RubyMine, PhpStorm, AppCode, PyCharm, CLion, Android Studio and Webstorm

|

||||

|

||||

*.iml

|

||||

|

||||

## Directory-based project format:

|

||||

.idea/

|

||||

# if you remove the above rule, at least ignore the following:

|

||||

|

||||

# User-specific stuff:

|

||||

# .idea/workspace.xml

|

||||

# .idea/tasks.xml

|

||||

# .idea/dictionaries

|

||||

# .idea/shelf

|

||||

|

||||

# Sensitive or high-churn files:

|

||||

.idea/dataSources.ids

|

||||

.idea/dataSources.xml

|

||||

.idea/sqlDataSources.xml

|

||||

.idea/dynamic.xml

|

||||

.idea/uiDesigner.xml

|

||||

|

||||

|

||||

# Mongo Explorer plugin:

|

||||

.idea/mongoSettings.xml

|

||||

|

||||

## File-based project format:

|

||||

*.ipr

|

||||

*.iws

|

||||

|

||||

## Plugin-specific files:

|

||||

|

||||

# IntelliJ

|

||||

/out/

|

||||

|

||||

# mpeltonen/sbt-idea plugin

|

||||

.idea_modules/

|

||||

|

||||

# JIRA plugin

|

||||

atlassian-ide-plugin.xml

|

||||

|

||||

# Crashlytics plugin (for Android Studio and IntelliJ)

|

||||

com_crashlytics_export_strings.xml

|

||||

crashlytics.properties

|

||||

crashlytics-build.properties

|

||||

fabric.properties

|

||||

|

||||

|

||||

### Java ###

|

||||

*.class

|

||||

|

||||

# Mobile Tools for Java (J2ME)

|

||||

.mtj.tmp/

|

||||

|

||||

# Package Files #

|

||||

*.jar

|

||||

*.war

|

||||

*.ear

|

||||

|

||||

# virtual machine crash logs, see http://www.java.com/en/download/help/error_hotspot.xml

|

||||

hs_err_pid*

|

||||

|

||||

|

||||

### OSX ###

|

||||

.DS_Store

|

||||

.AppleDouble

|

||||

.LSOverride

|

||||

|

||||

# Icon must end with two \r

|

||||

Icon

|

||||

|

||||

|

||||

# Thumbnails

|

||||

._*

|

||||

|

||||

# Files that might appear in the root of a volume

|

||||

.DocumentRevisions-V100

|

||||

.fseventsd

|

||||

.Spotlight-V100

|

||||

.TemporaryItems

|

||||

.Trashes

|

||||

.VolumeIcon.icns

|

||||

|

||||

# Directories potentially created on remote AFP share

|

||||

.AppleDB

|

||||

.AppleDesktop

|

||||

Network Trash Folder

|

||||

Temporary Items

|

||||

.apdisk

|

||||

|

||||

/target

|

||||

target/

|

||||

*.log

|

||||

*.log.*

|

||||

*.bak

|

||||

*.vscode

|

||||

*/.vscode/*

|

||||

*/.vscode

|

||||

*/velocity.log*

|

||||

*/*.log

|

||||

*/*.log.*

|

||||

web/node_modules/

|

||||

web/node_modules/*

|

||||

workspace.xml

|

||||

/output/*

|

||||

.gitversion

|

||||

*/node_modules/*

|

||||

web/src/main/resources/templates/*

|

||||

*/out/*

|

||||

*/dist/*

|

||||

.DS_Store

|

||||

kafka-manager-web/src/main/resources/templates/*

|

||||

### Intellij ###

|

||||

# Covers JetBrains IDEs: IntelliJ, RubyMine, PhpStorm, AppCode, PyCharm, CLion, Android Studio and Webstorm

|

||||

|

||||

*.iml

|

||||

|

||||

## Directory-based project format:

|

||||

.idea/

|

||||

# if you remove the above rule, at least ignore the following:

|

||||

|

||||

# User-specific stuff:

|

||||

# .idea/workspace.xml

|

||||

# .idea/tasks.xml

|

||||

# .idea/dictionaries

|

||||

# .idea/shelf

|

||||

|

||||

# Sensitive or high-churn files:

|

||||

.idea/dataSources.ids

|

||||

.idea/dataSources.xml

|

||||

.idea/sqlDataSources.xml

|

||||

.idea/dynamic.xml

|

||||

.idea/uiDesigner.xml

|

||||

|

||||

|

||||

# Mongo Explorer plugin:

|

||||

.idea/mongoSettings.xml

|

||||

|

||||

## File-based project format:

|

||||

*.ipr

|

||||

*.iws

|

||||

|

||||

## Plugin-specific files:

|

||||

|

||||

# IntelliJ

|

||||

/out/

|

||||

|

||||

# mpeltonen/sbt-idea plugin

|

||||

.idea_modules/

|

||||

|

||||

# JIRA plugin

|

||||

atlassian-ide-plugin.xml

|

||||

|

||||

# Crashlytics plugin (for Android Studio and IntelliJ)

|

||||

com_crashlytics_export_strings.xml

|

||||

crashlytics.properties

|

||||

crashlytics-build.properties

|

||||

fabric.properties

|

||||

|

||||

|

||||

### Java ###

|

||||

*.class

|

||||

|

||||

# Mobile Tools for Java (J2ME)

|

||||

.mtj.tmp/

|

||||

|

||||

# Package Files #

|

||||

*.jar

|

||||

*.war

|

||||

*.ear

|

||||

*.tar.gz

|

||||

|

||||

# virtual machine crash logs, see http://www.java.com/en/download/help/error_hotspot.xml

|

||||

hs_err_pid*

|

||||

|

||||

|

||||

### OSX ###

|

||||

.DS_Store

|

||||

.AppleDouble

|

||||

.LSOverride

|

||||

|

||||

# Icon must end with two \r

|

||||

Icon

|

||||

|

||||

|

||||

# Thumbnails

|

||||

._*

|

||||

|

||||

# Files that might appear in the root of a volume

|

||||

.DocumentRevisions-V100

|

||||

.fseventsd

|

||||

.Spotlight-V100

|

||||

.TemporaryItems

|

||||

.Trashes

|

||||

.VolumeIcon.icns

|

||||

|

||||

# Directories potentially created on remote AFP share

|

||||

.AppleDB

|

||||

.AppleDesktop

|

||||

Network Trash Folder

|

||||

Temporary Items

|

||||

.apdisk

|

||||

|

||||

/target

|

||||

target/

|

||||

*.log

|

||||

*.log.*

|

||||

*.bak

|

||||

*.vscode

|

||||

*/.vscode/*

|

||||

*/.vscode

|

||||

*/velocity.log*

|

||||

*/*.log

|

||||

*/*.log.*

|

||||

node_modules/

|

||||

node_modules/*

|

||||

workspace.xml

|

||||

/output/*

|

||||

.gitversion

|

||||

out/*

|

||||

dist/

|

||||

dist/*

|

||||

km-rest/src/main/resources/templates/

|

||||

*dependency-reduced-pom*
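To see at a glance which ignore rules changed, the old and new project-specific rules can be compared as sets. This is only a minimal sketch: the two lists are copied from the diff above, and the names `OLD_RULES`, `NEW_RULES`, and `rule_changes` are illustrative, not part of the repository.

```python
# Project-specific rules before and after the change, copied from the diff.
# The duplicate `.DS_Store` line at the end of the old file is left out,
# because the same rule is still present in the OSX section of the new file.
OLD_RULES = [
    "web/node_modules/", "web/node_modules/*", "workspace.xml", "/output/*",
    ".gitversion", "*/node_modules/*", "web/src/main/resources/templates/*",
    "*/out/*", "*/dist/*",
    "kafka-manager-web/src/main/resources/templates/*",
]
NEW_RULES = [
    "*.tar.gz", "node_modules/", "node_modules/*", "workspace.xml",
    "/output/*", ".gitversion", "out/*", "dist/", "dist/*",
    "km-rest/src/main/resources/templates/", "*dependency-reduced-pom*",
]


def rule_changes(old, new):
    """Return (removed, added) rules, each sorted for stable output."""
    old_set, new_set = set(old), set(new)
    return sorted(old_set - new_set), sorted(new_set - old_set)


removed, added = rule_changes(OLD_RULES, NEW_RULES)
print("removed:", removed)
print("added:", added)
```

The comparison is purely textual; it does not evaluate how Git would match the patterns against real paths.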

|

||||

@@ -7,7 +7,7 @@ Thanks for considering to contribute this project. All issues and pull requests

|

||||

Before sending pull request to this project, please read and follow guidelines below.

|

||||

|

||||

1. Branch: We only accept pull request on `dev` branch.

|

||||

2. Coding style: Follow the coding style used in kafka-manager.

|

||||

2. Coding style: Follow the coding style used in LogiKM.

|

||||

3. Commit message: Use English and check your spelling.

|

||||

4. Test: Make sure to test your code.

|

||||

|

||||

|

||||

203

README.md

@@ -1,64 +1,139 @@

|

||||

|

||||

---

|

||||

|

||||

|

||||

|

||||

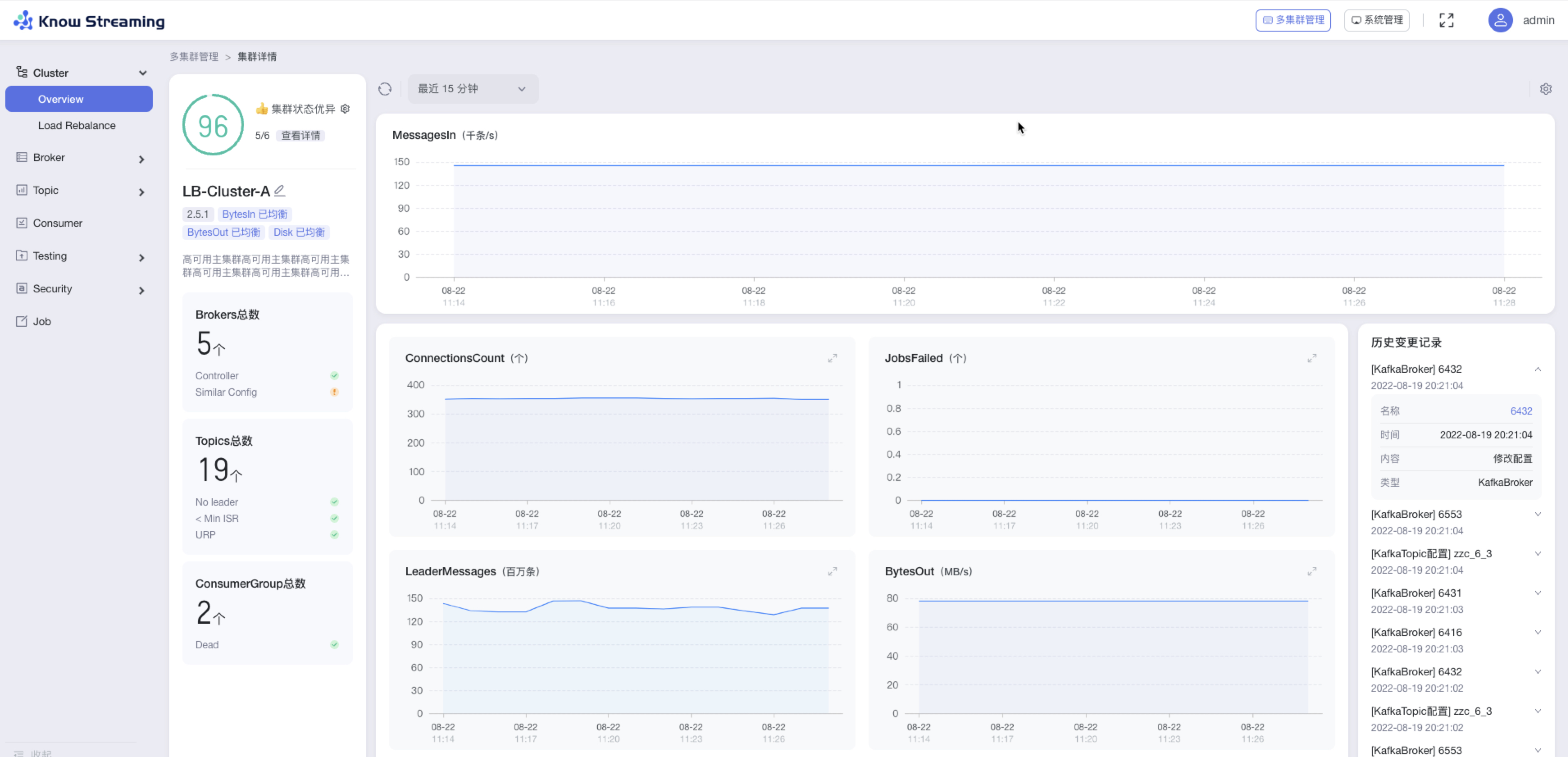

**A one-stop platform for `Apache Kafka` cluster metrics monitoring and operations management**

|

||||

|

||||

---

|

||||

|

||||

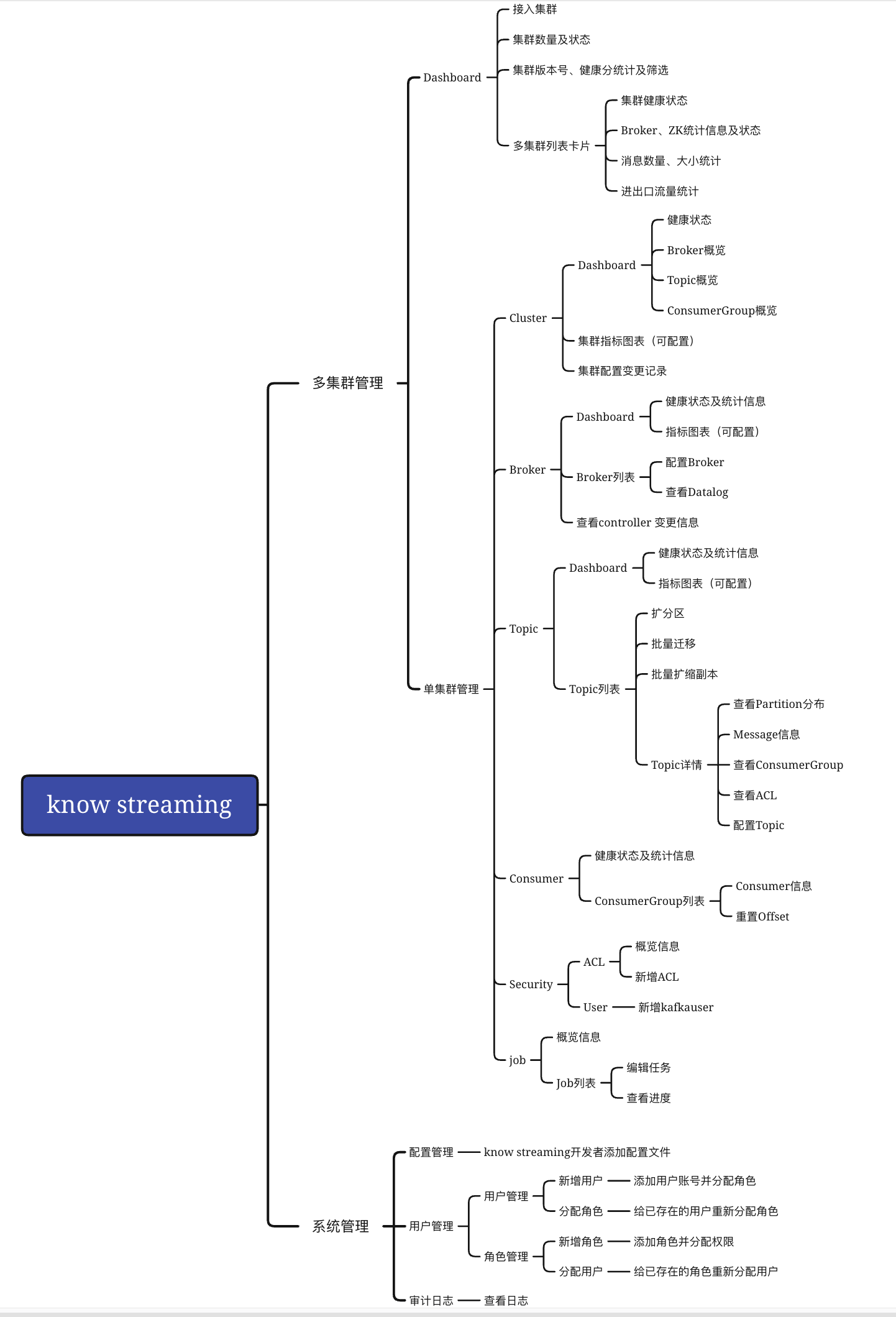

## Key features

|

||||

|

||||

|

||||

### Cluster monitoring

- Manage clusters across multiple versions, from `0.10.2` to `2.x`;
- View historical and real-time key metrics for Topics, Brokers, and other dimensions of a cluster;

|

||||

|

||||

|

||||

### Cluster management

- Cluster operations, including managing clusters through logical Regions
- Broker operations, including preferred replica election

|

||||

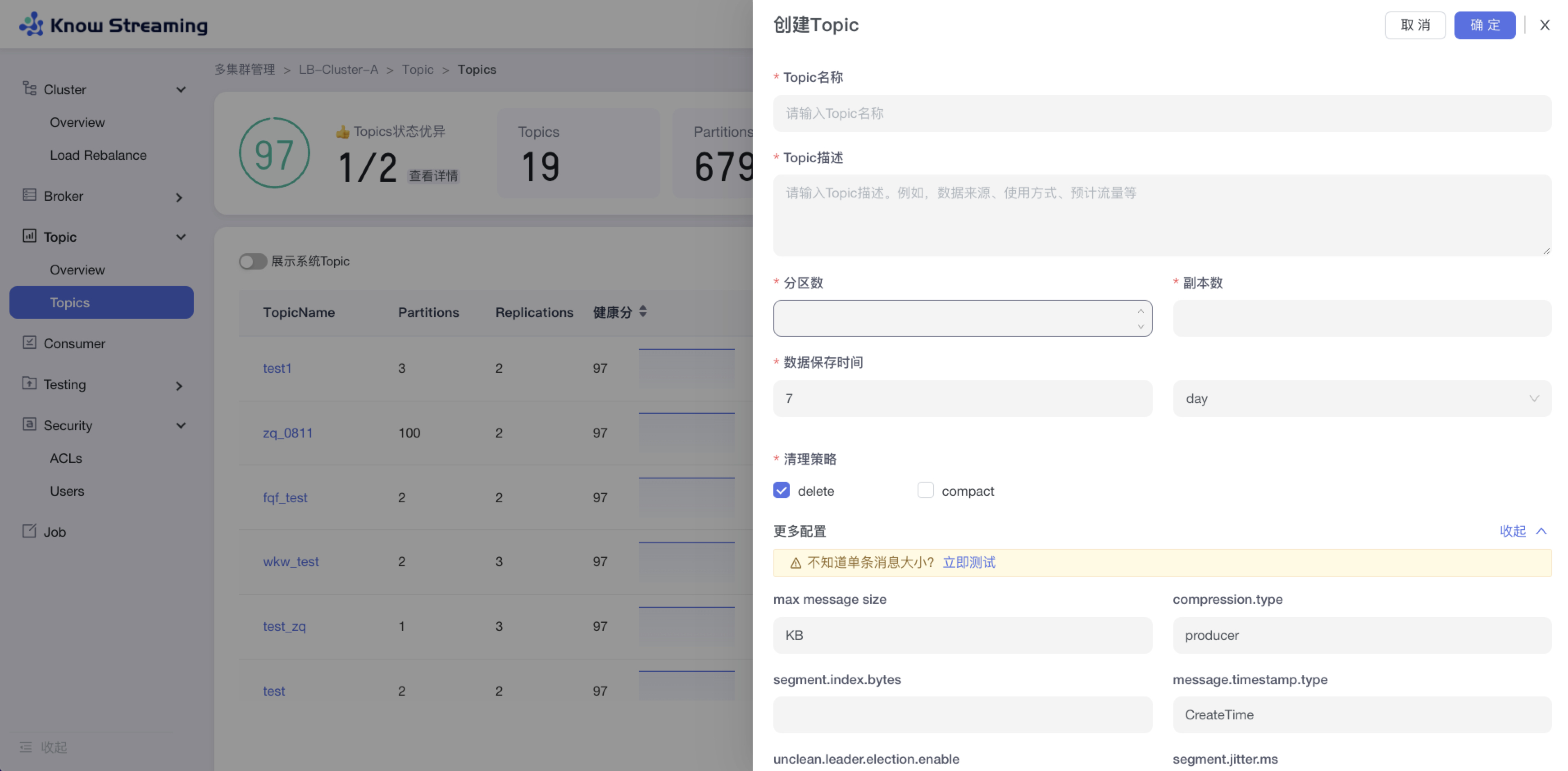

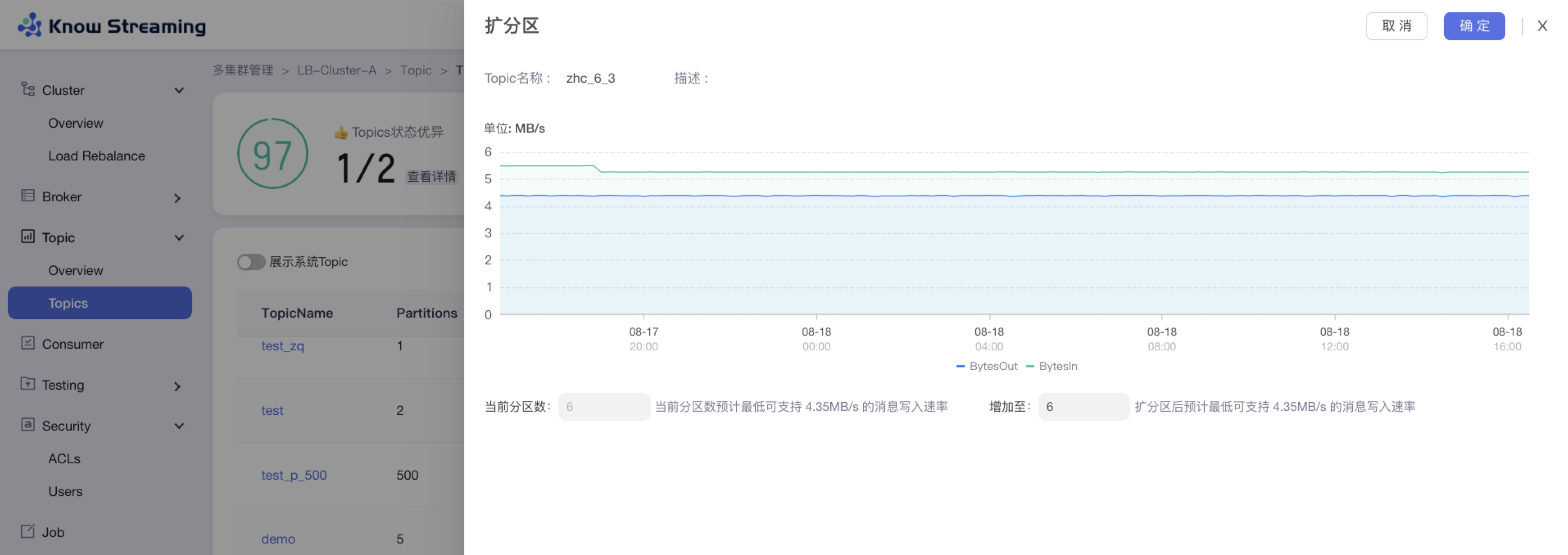

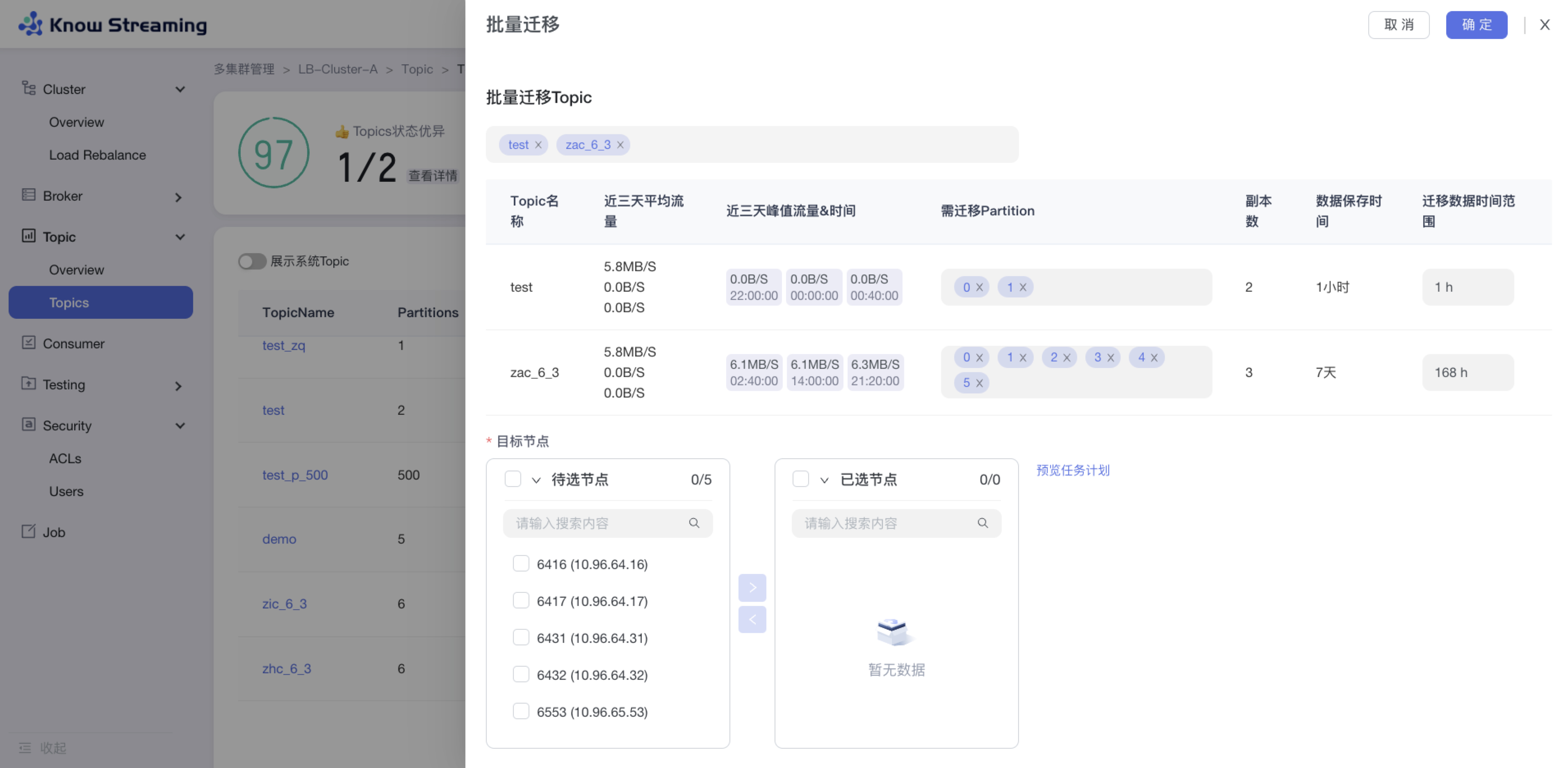

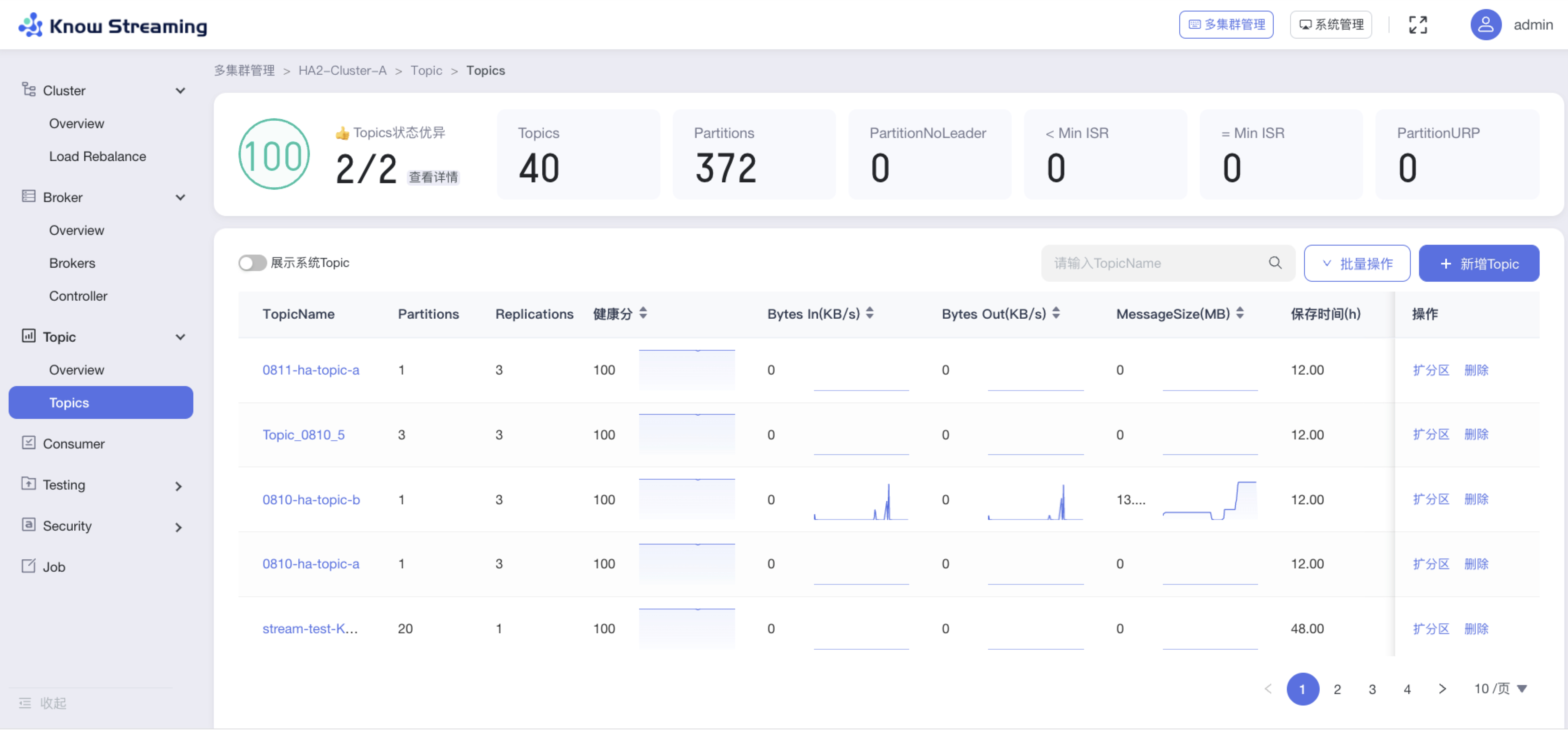

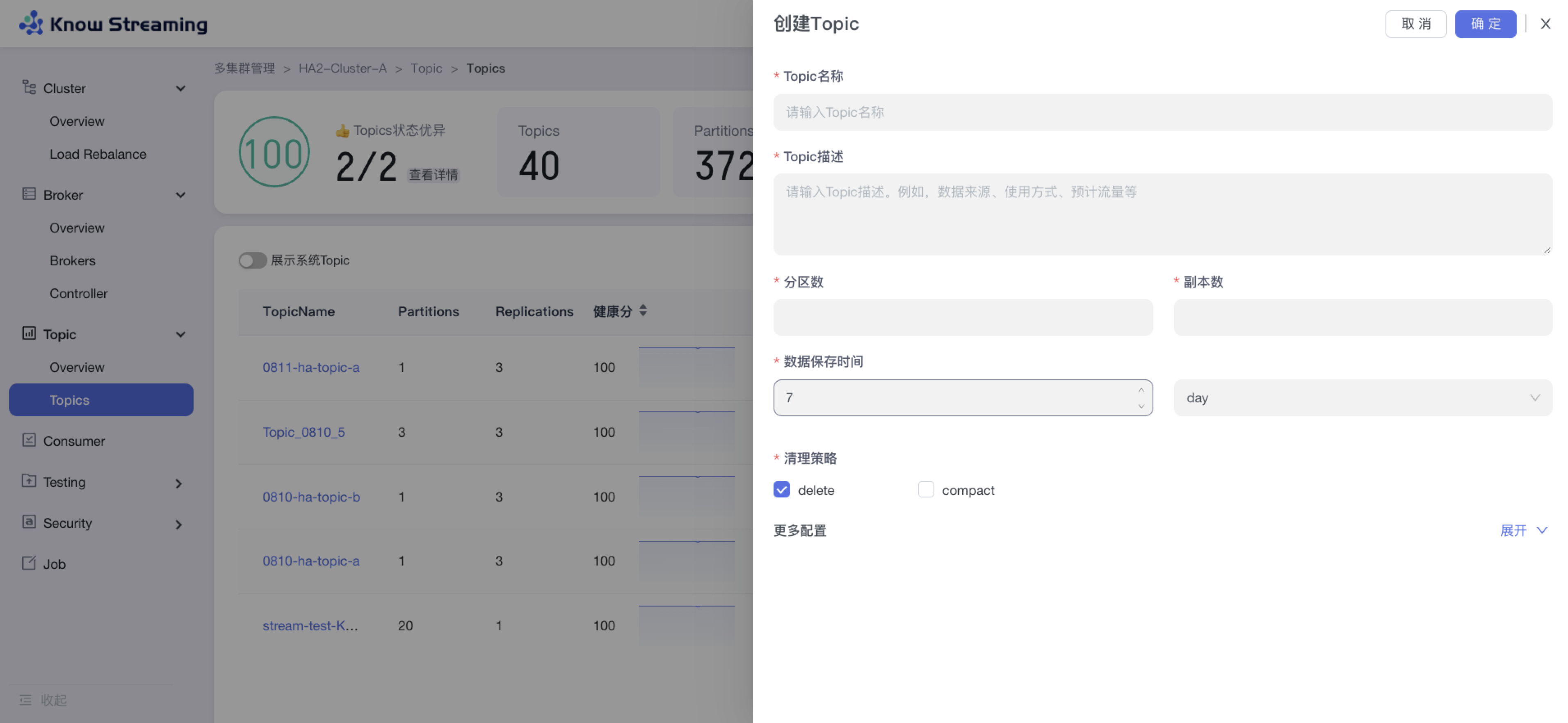

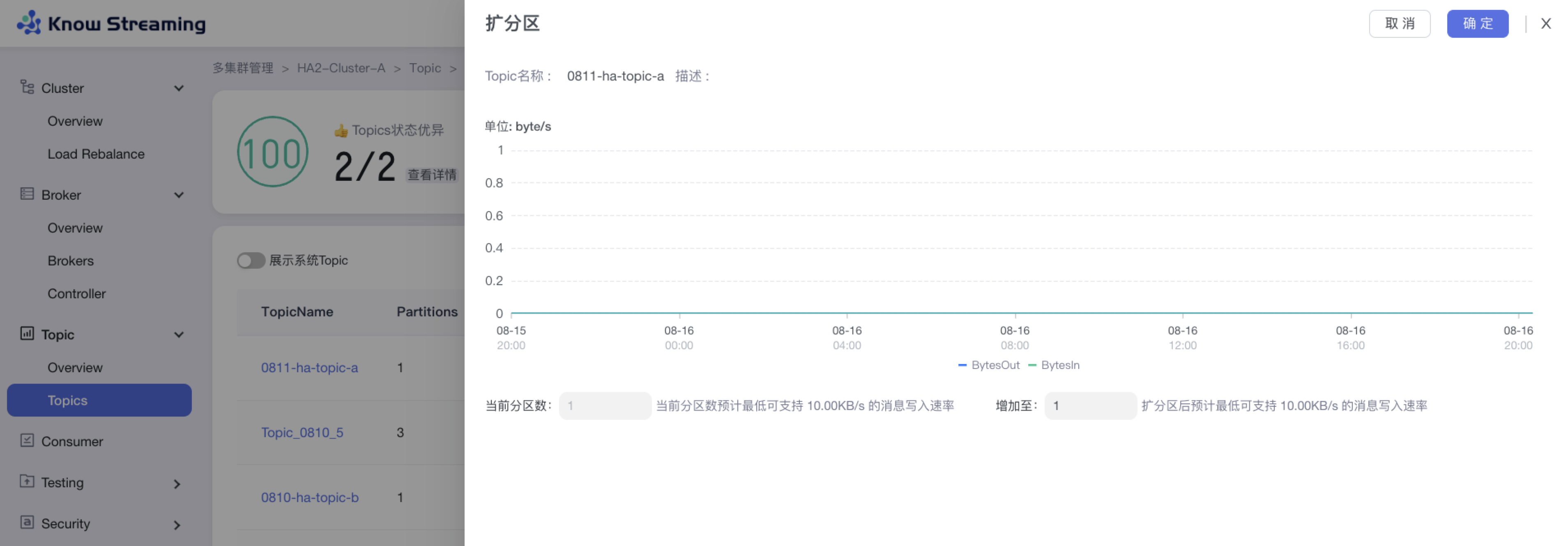

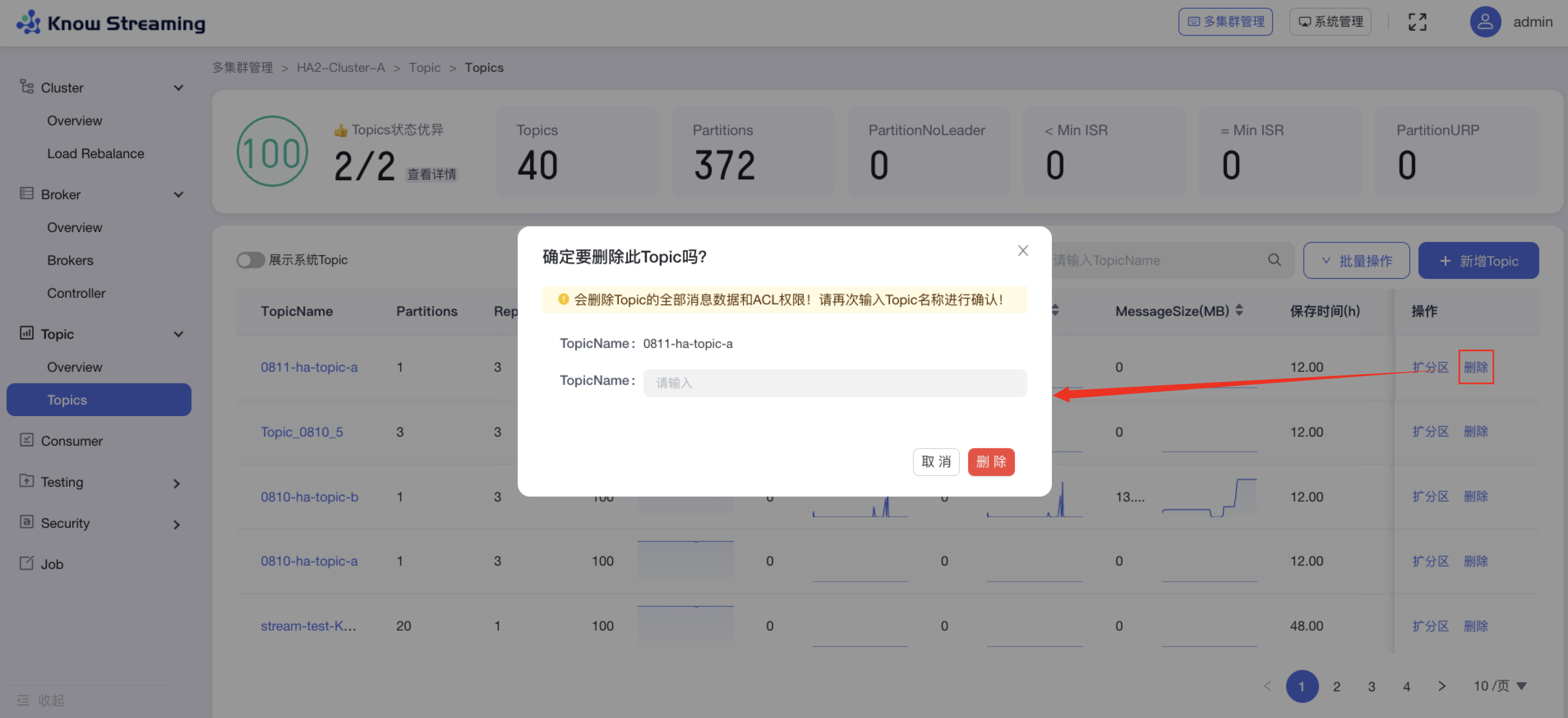

- Topic operations, including creation, querying, expansion, property changes, data sampling, and migration;

|

||||

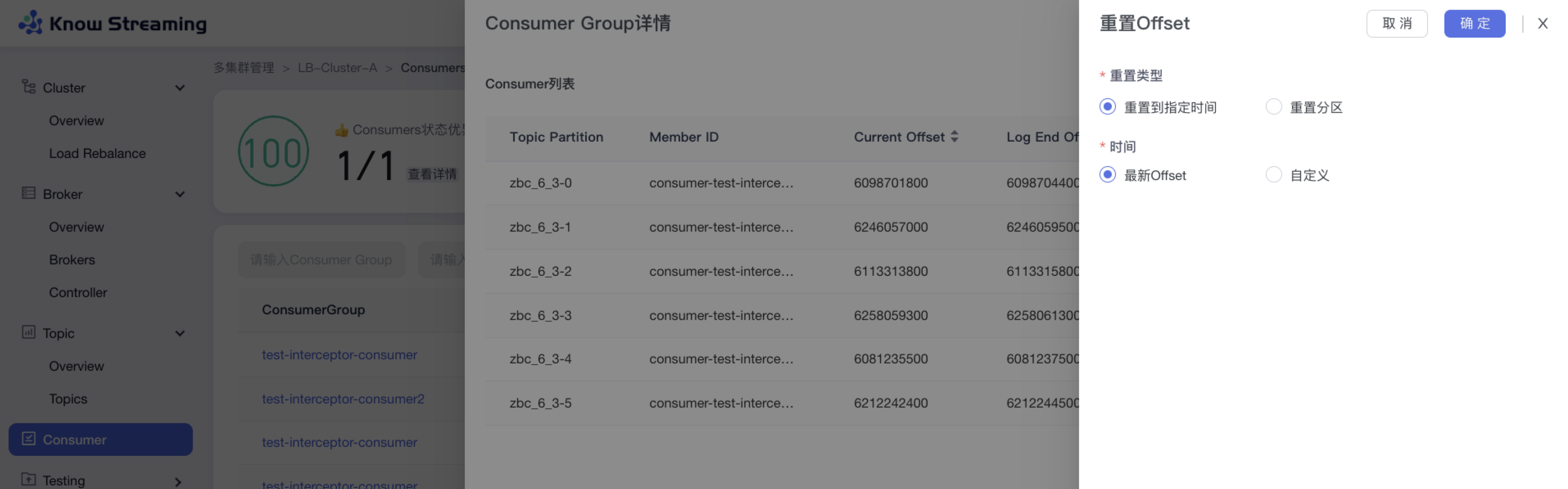

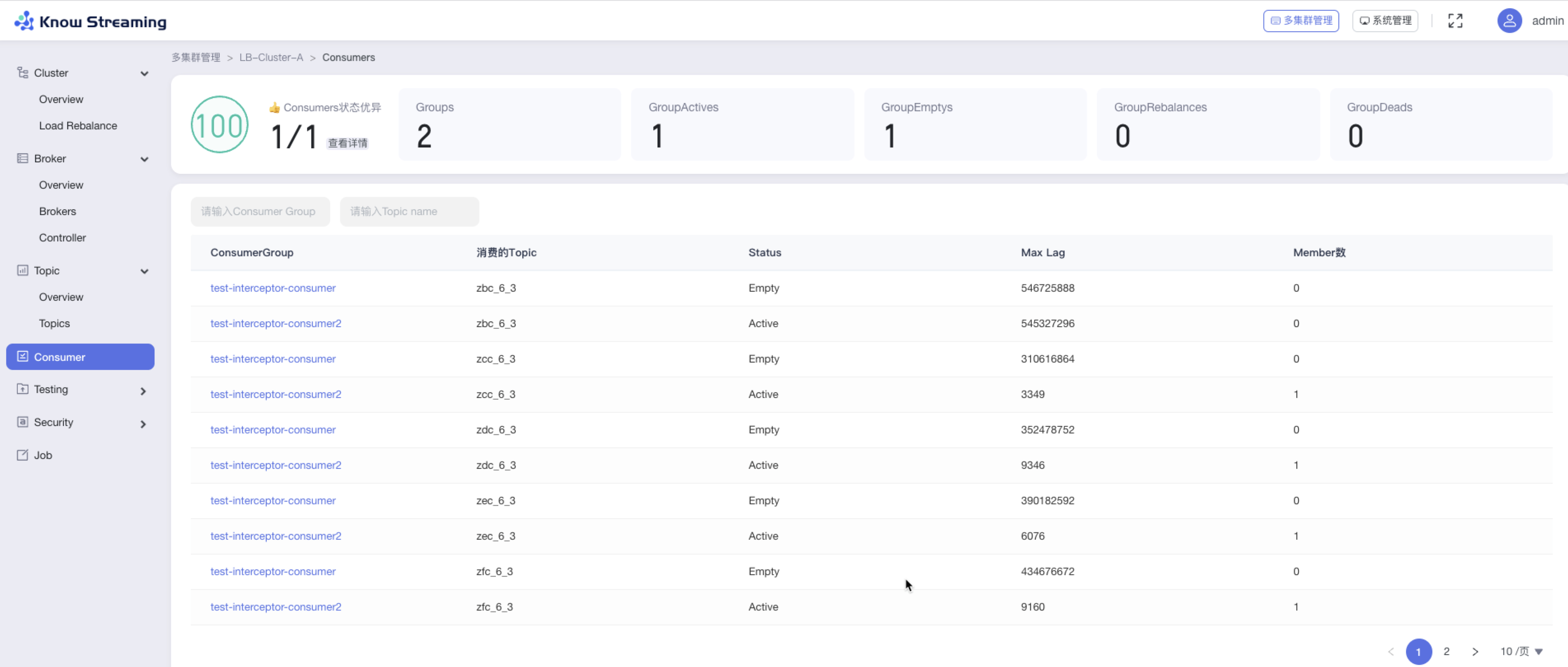

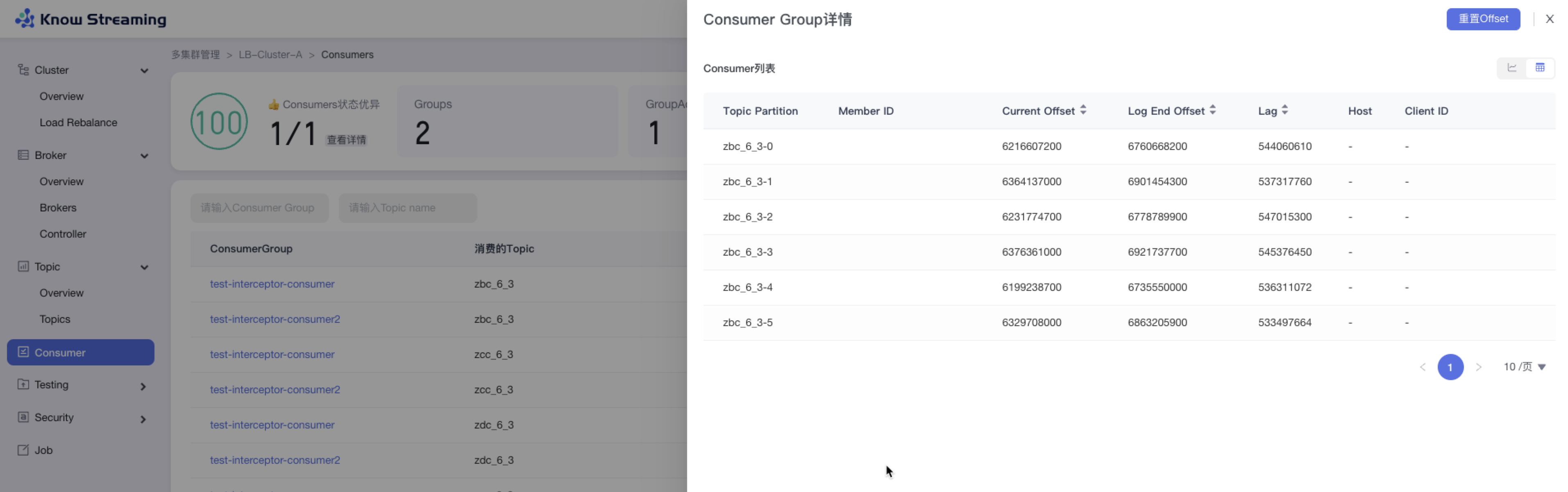

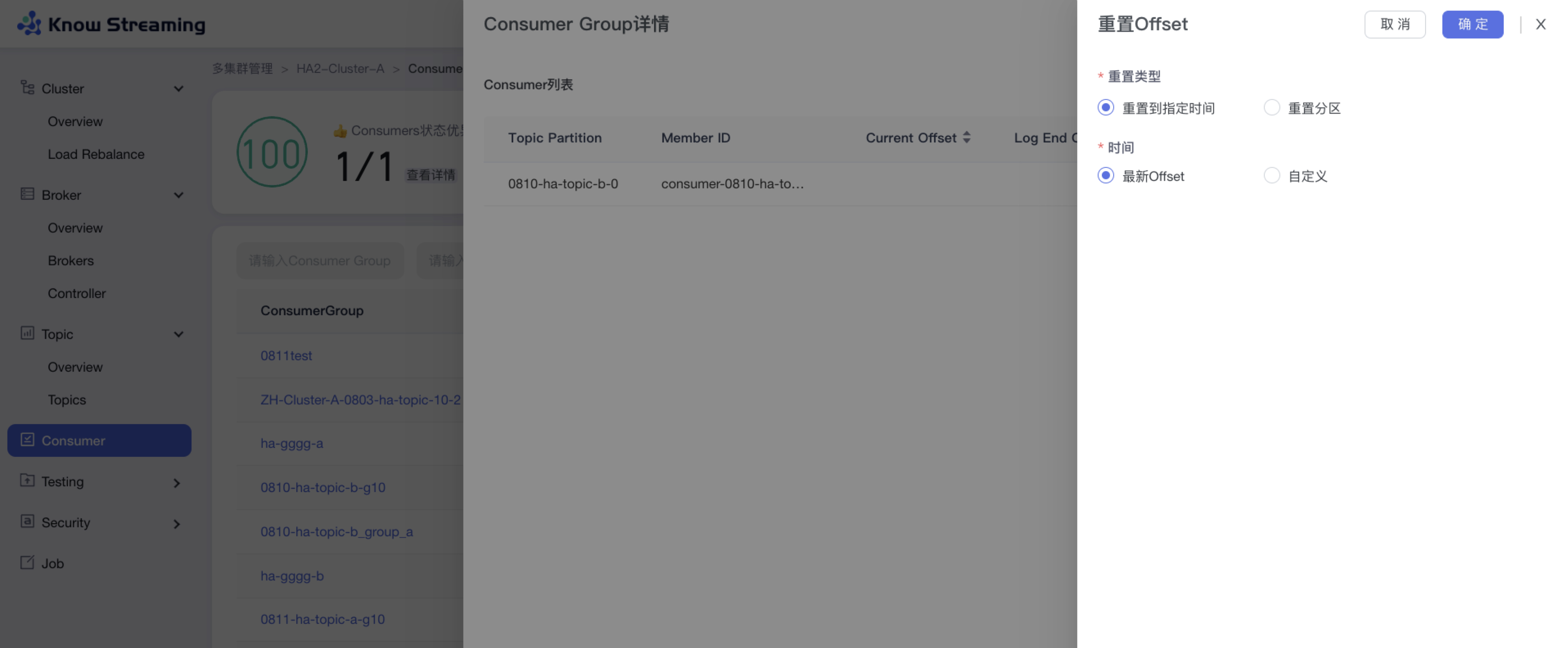

- Consumer group operations, including resetting consumer offsets either to a specified time or to a specified offset

|

||||

|

||||

|

||||

### User-facing features

- Separate views for Kafka users, Kafka developers, and Kafka operators
- Separate permissions for Kafka users, Kafka developers, and Kafka operators

|

||||

|

||||

|

||||

## kafka-manager architecture diagram

|

||||

|

||||

|

||||

|

||||

|

||||

## Related documentation

- [kafka-manager installation guide](./docs/install_cn_guide.md)
- [Adding a cluster to kafka-manager](./docs/manual_kafka_op/add_cluster.md)
- [kafka-manager user guide (to be updated)](./docs/user_cn_guide.md)

|

||||

|

||||

|

||||

## DingTalk discussion group

|

||||

|

||||

|

||||

|

||||

|

||||

## Project members

### Core internal members

`iceyuhui`, `liuyaguang`, `limengmonty`, `zhangliangmike`, `nullhuangyiming`, `zengqiao`, `eilenexuzhe`, `huangjiaweihjw`

|

||||

|

||||

|

||||

### External contributors

`fangjunyu`, `zhoutaiyang`

|

||||

|

||||

|

||||

## License

`kafka-manager` is distributed and used under the `Apache-2.0` license. For more information, see the [license file](./LICENSE)

|

||||

|

||||

<p align="center">

|

||||

<img src="https://user-images.githubusercontent.com/71620349/185368586-aed82d30-1534-453d-86ff-ecfa9d0f35bd.png" width = "256" div align=center />

|

||||

|

||||

</p>

|

||||

|

||||

<p align="center">

|

||||

<a href="https://knowstreaming.com">Product website</a> |
<a href="https://github.com/didi/KnowStreaming/releases">Downloads</a> |
<a href="https://doc.knowstreaming.com/product">Documentation</a> |
<a href="https://demo.knowstreaming.com">Live demo</a>

|

||||

</p>

|

||||

|

||||

<p align="center">

|

||||

<!--Time of the most recent commit-->

|

||||

<a href="https://img.shields.io/github/last-commit/didi/KnowStreaming">

|

||||

<img src="https://img.shields.io/github/last-commit/didi/KnowStreaming" alt="LastCommit">

|

||||

</a>

|

||||

|

||||

<!--Latest release-->

|

||||

<a href="https://github.com/didi/KnowStreaming/blob/master/LICENSE">

|

||||

<img src="https://img.shields.io/github/v/release/didi/KnowStreaming" alt="License">

|

||||

</a>

|

||||

|

||||

<!--License information-->

|

||||

<a href="https://github.com/didi/KnowStreaming/blob/master/LICENSE">

|

||||

<img src="https://img.shields.io/github/license/didi/KnowStreaming" alt="License">

|

||||

</a>

|

||||

|

||||

<!--Open-Issue-->

|

||||

<a href="https://github.com/didi/KnowStreaming/issues">

|

||||

<img src="https://img.shields.io/github/issues-raw/didi/KnowStreaming" alt="Issues">

|

||||

</a>

|

||||

|

||||

<!--Knowledge Planet (Zhishi Xingqiu)-->

|

||||

<a href="https://z.didi.cn/5gSF9">

|

||||

<img src="https://img.shields.io/badge/join-%E7%9F%A5%E8%AF%86%E6%98%9F%E7%90%83-red" alt="Slack">

|

||||

</a>

|

||||

|

||||

</p>

|

||||

|

||||

|

||||

---

|

||||

|

||||

|

||||

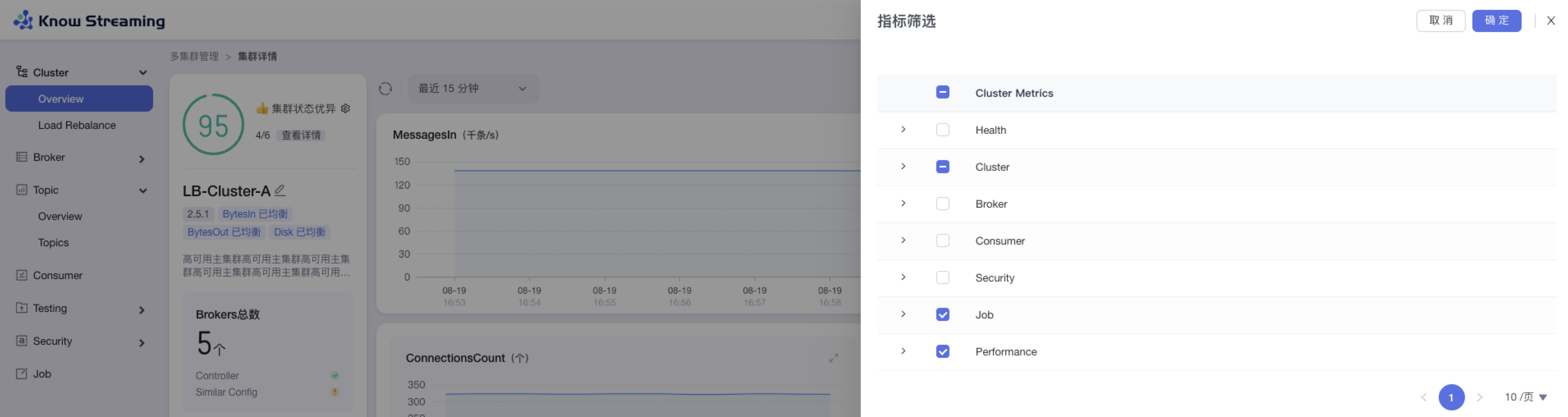

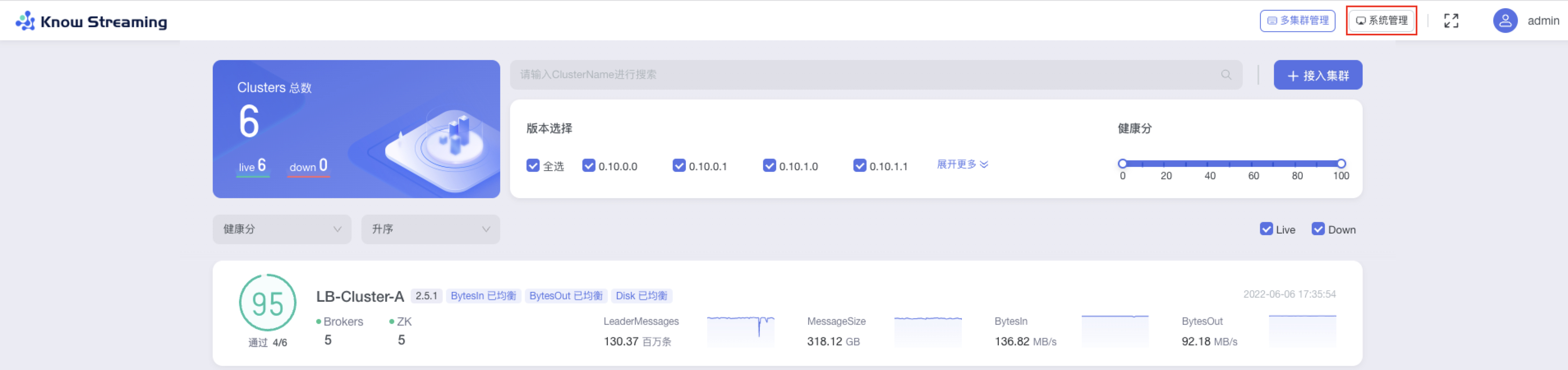

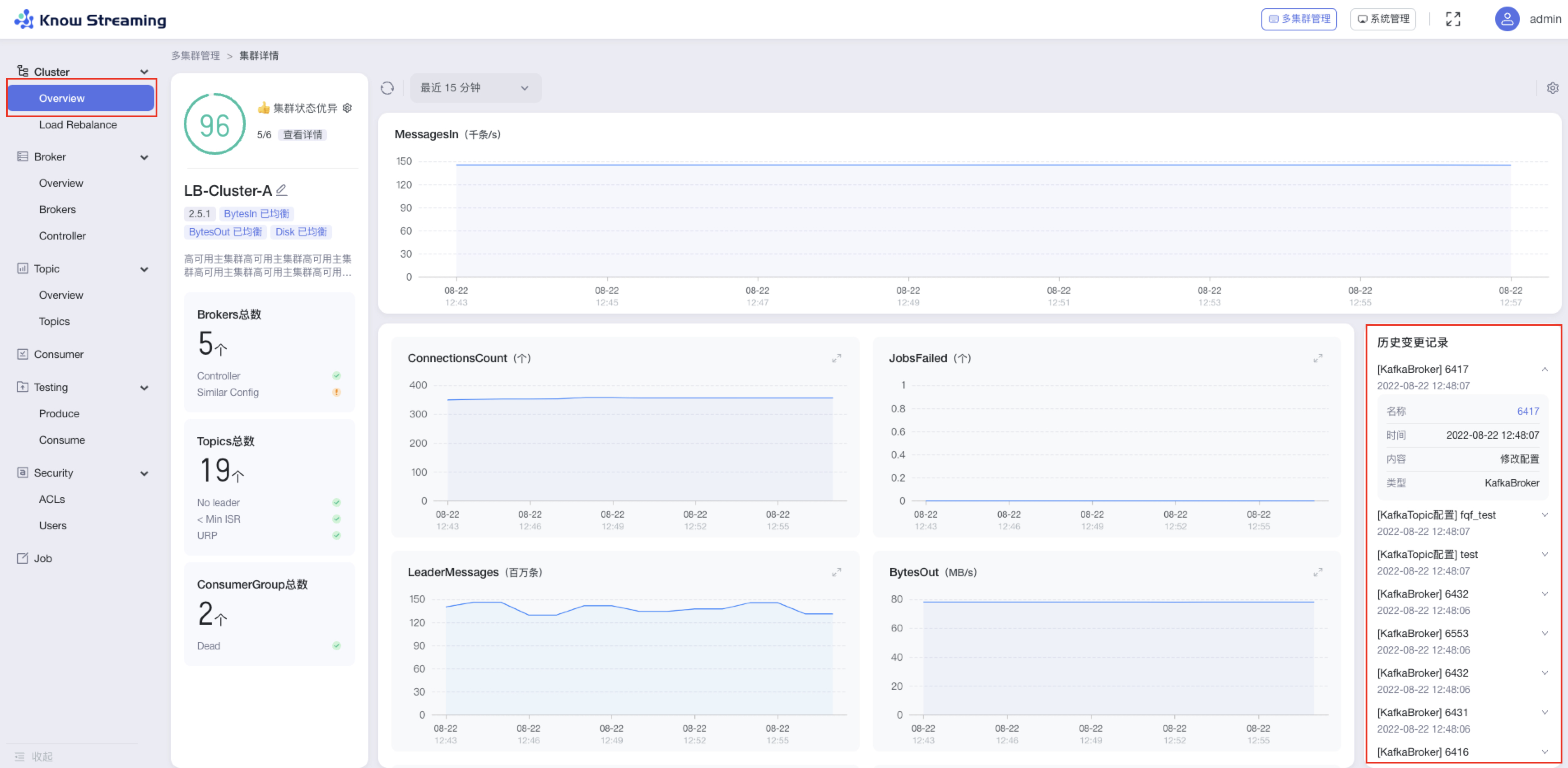

## About `Know Streaming`

|

||||

|

||||

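`Know Streaming` is a cloud-native Kafka management platform. It grew out of years of Kafka operations experience inside many internet companies and focuses on core scenarios such as Kafka operations and management, monitoring and alerting, resource governance, and multi-active disaster recovery. It brings platform-level, visual, and intelligent capabilities to user experience, monitoring, and operations, and offers a set of distinctive features that make day-to-day work far easier for users and operators, so that ordinary operators can become Kafka experts. Overall, it has the following characteristics: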

|

||||

|

||||

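- 👀 **Zero intrusion, full coverage**
  - Without any intrusive changes to `Apache Kafka`, one click brings Kafka clusters of many versions from `0.10.x` to `3.x.x` under management, including versions running in `ZK` or `Raft` mode. The compatibility architecture is also highly extensible, helping you raise your level of cluster management;
- 🌪️ **Zero cost, GUI-driven**
  - Distills frequently used CLI capabilities, designs sensible product workflows, and provides a clean, attractive GUI that supports managing components such as Cluster, Broker, Topic, Group, Message, and ACL; ordinary users can get started in 5 minutes;
- 👏 **Cloud native, pluggable**
  - Built on cloud-native principles with horizontal scalability: simply adding nodes yields more collection and serving capacity. It provides many hot-pluggable enterprise features covering core scenarios such as observability ecosystem integration, resource governance, and multi-active disaster recovery;
- 🚀 **Professional capabilities**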

|

||||

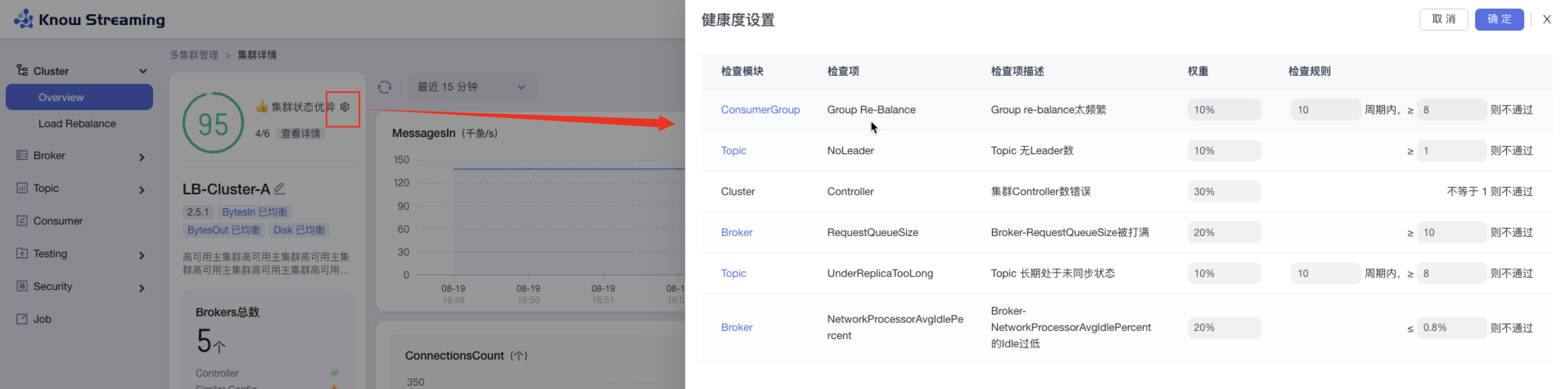

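  - Cluster management: one-click cluster onboarding, health analysis, observation of core components, and more;
  - Observability: multi-dimensional metric dashboards, best practices for metrics, and more;
  - Anomaly inspection: multi-dimensional cluster health inspection, multi-dimensional cluster health scores, and more;
  - Capability enhancement: Topic replica scaling, Topic replica migration, and more;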
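The supported range of `0.10.x` to `3.x.x` stated in the README can be checked mechanically. The sketch below assumes versions are plain dotted numbers; `parse_version`, `is_supported`, and the two bounds are illustrative names and are not part of Know Streaming.

```python
def parse_version(text):
    """Turn '2.8.1' into (2, 8, 1); non-numeric suffixes are not handled."""
    return tuple(int(part) for part in text.split("."))


# Bounds taken from the README text: 0.10.x up to and including 3.x.x.
MIN_VERSION = (0, 10)
MAX_MAJOR = 3


def is_supported(version):
    """True if the Kafka version falls inside the documented range."""
    parsed = parse_version(version)
    return parsed[:2] >= MIN_VERSION and parsed[0] <= MAX_MAJOR


print(is_supported("0.10.2"))  # True
print(is_supported("0.9.0"))   # False
print(is_supported("3.2.0"))   # True
```

Comparing integer tuples rather than strings matters here, because as strings `"0.9"` would sort after `"0.10"`.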

|

||||

|

||||

|

||||

|

||||

**Product screenshot**

|

||||

|

||||

<p align="center">

|

||||

|

||||

<img src="http://img-ys011.didistatic.com/static/dc2img/do1_sPmS4SNLX9m1zlpmHaLJ" width = "768" height = "473" div align=center />

|

||||

|

||||

</p>

|

||||

|

||||

|

||||

|

||||

|

||||

## Documentation

**`Development guides`**

- [Build and packaging guide](docs/install_guide/源码编译打包手册.md)
- [Single-node deployment guide](docs/install_guide/单机部署手册.md)
- [Version upgrade guide](docs/install_guide/版本升级手册.md)
- [Guide to running from local source](docs/dev_guide/本地源码启动手册.md)

**`Product guides`**

- [Product user guide](docs/user_guide/用户使用手册.md)
- [Comparison of 2.x and 3.x](docs/user_guide/新旧对比手册.md)
- [FAQ](docs/user_guide/faq.md)

|

||||

|

||||

|

||||

**Click [here](https://doc.knowstreaming.com/product) to find more documentation on the official website**

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

## Become a community contributor

Click [here](CONTRIBUTING.md) to learn how to become a Know Streaming contributor

|

||||

|

||||

|

||||

|

||||

## Join the technical discussion groups

**`1. Knowledge Planet`**

|

||||

|

||||

<p align="left">

|

||||

<img src="https://user-images.githubusercontent.com/71620349/185357284-fdff1dad-c5e9-4ddf-9a82-0be1c970980d.JPG" height = "180" div align=left />

|

||||

</p>

|

||||

|

||||

<br/>

|

||||

<br/>

|

||||

<br/>

|

||||

<br/>

|

||||

<br/>

|

||||

<br/>

|

||||

<br/>

|

||||

<br/>

|

||||

|

||||

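👍 We are building the largest and most authoritative **[Chinese-language Kafka community](https://z.didi.cn/5gSF9)** in China

Here you can meet Kafka experts from major internet companies and 4000+ Kafka enthusiasts, share knowledge, and keep up with the latest industry news in real time. We look forward to 👏 having you join: https://z.didi.cn/5gSF9

Every question gets an answer, and participation is rewarded!

PS: When asking a question, please try to describe it fully in one go and include your environment details, such as the version in use, the steps taken, and any error or warning messages, so that the experts can answer quickly.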

|

||||

|

||||

|

||||

|

||||

**`2. WeChat group`**

To join the WeChat group, add `mike_zhangliang` or `PenceXie` on WeChat and mention KnowStreaming in the request note.

|

||||

|

||||

## Star History

|

||||

|

||||

[](https://star-history.com/#didi/KnowStreaming&Date)

|

||||

|

||||

279

Releases_Notes.md

Normal file

@@ -0,0 +1,279 @@

|

||||

|

||||

## v3.0.0-beta.1

|

||||

|

||||

**Documentation**

- Added documentation for the Task module
- FAQ: added an explanation of the `Specified key was too long; max key length is 767 bytes` error
- FAQ: added an explanation of the `ESIndexNotFoundException` error
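The `Specified key was too long; max key length is 767 bytes` error mentioned above is MySQL rejecting an index whose key exceeds 767 bytes. A short calculation shows why long `VARCHAR` index columns hit it; this is a sketch under the assumption that the 767-byte limit from the error message applies to your table, and the function name is illustrative.

```python
MAX_KEY_BYTES = 767  # limit quoted in the error message

# Worst-case bytes per character for two common MySQL character sets.
BYTES_PER_CHAR = {"utf8mb3": 3, "utf8mb4": 4}


def max_indexable_chars(charset, max_key_bytes=MAX_KEY_BYTES):
    """Longest VARCHAR length that still fits in a single-column index."""
    return max_key_bytes // BYTES_PER_CHAR[charset]


for charset in BYTES_PER_CHAR:
    print(charset, max_indexable_chars(charset))
```

With `utf8mb4`, an indexed `VARCHAR(255)` column needs up to 1020 bytes, which is over the limit; 191 characters is the largest length that fits.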

|

||||

|

||||

|

||||

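**Bug fixes**

- Fixed Consumer search not stopping when Stop is clicked
- Fixed an error when creating or editing role permissions
- Fixed the incorrect status of the balance card in multi-cluster management and single-cluster details
- Fixed the version list not being sorted
- Fixed Controller information being recorded continuously for Raft clusters
- Fixed failure to fetch consumer group descriptions on some versions
- Fixed the missing Topic name in the log emitted when fetching a partition offset fails
- Fixed incorrect GitHub image URLs and broken images
- Fixed the mismatch between the default Broker address in use and its comment
- Fixed pagination not taking effect in the Consumer list
- Fixed the missing default value for the `operation_methods` field of the operation record table
- Fixed the `move_broker_list` field in the cluster balance table having no effect
- Fixed logs repeatedly reporting "not supported" when fetching KafkaUser and KafkaACL information
- Fixed curves dropping to the bottom of the chart when metrics are missing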
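The last fix above concerns charts that dip to the bottom when a metric sample is missing. One common way to avoid such dips is to represent missing samples as `None` rather than `0`, so that a chart can render a gap. The sketch below illustrates that idea only; it is not taken from the project's code, and `align_series` is a hypothetical helper.

```python
def align_series(samples, start, end, step):
    """Map {timestamp: value} onto a fixed grid; missing points become None.

    Using None (not 0) lets a chart draw a gap instead of a dip to zero.
    """
    return [(ts, samples.get(ts)) for ts in range(start, end + 1, step)]


samples = {0: 12.5, 60: 13.0, 180: 12.8}  # the sample at t=120 is missing
for point in align_series(samples, start=0, end=180, step=60):
    print(point)
```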

|

||||

|

||||

|

||||

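**Experience improvements**

- Reduced front-end build time and bundle size, and added a chunk-splitting strategy for bundled dependencies
- Improved product styling and copy
- Made the number of ES clients configurable
- Reduced the large volume of MySQL key conflict messages in the logs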

|

||||

|

||||

|

||||

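**New capabilities**

- Added a periodic task that proactively creates missing ES templates and indices, reducing the need for extra scripts
- Added the ability to choose which Broker address is used for JMX connections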

|

||||

|

||||

|

||||

## v3.0.0-beta.0

|

||||

|

||||

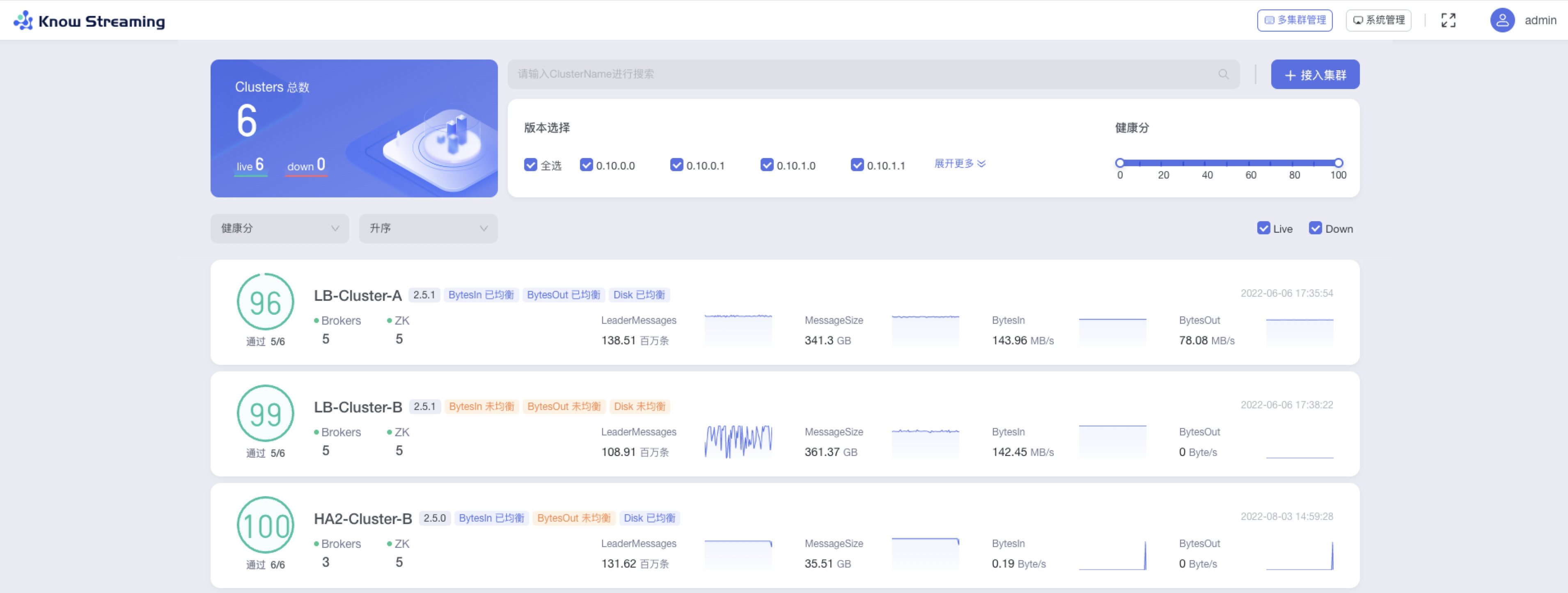

**1. Multi-cluster management**

|

||||

|

||||

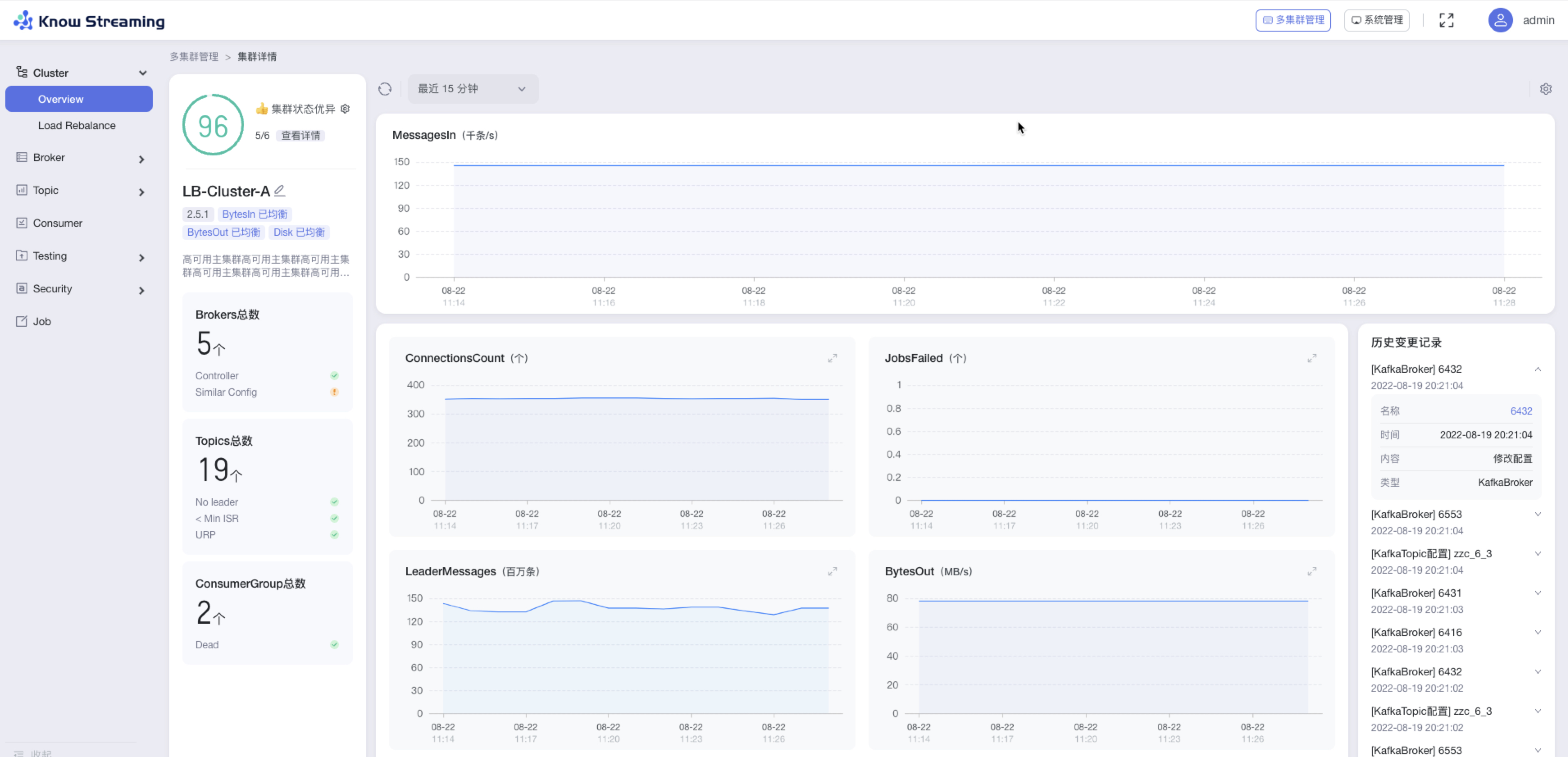

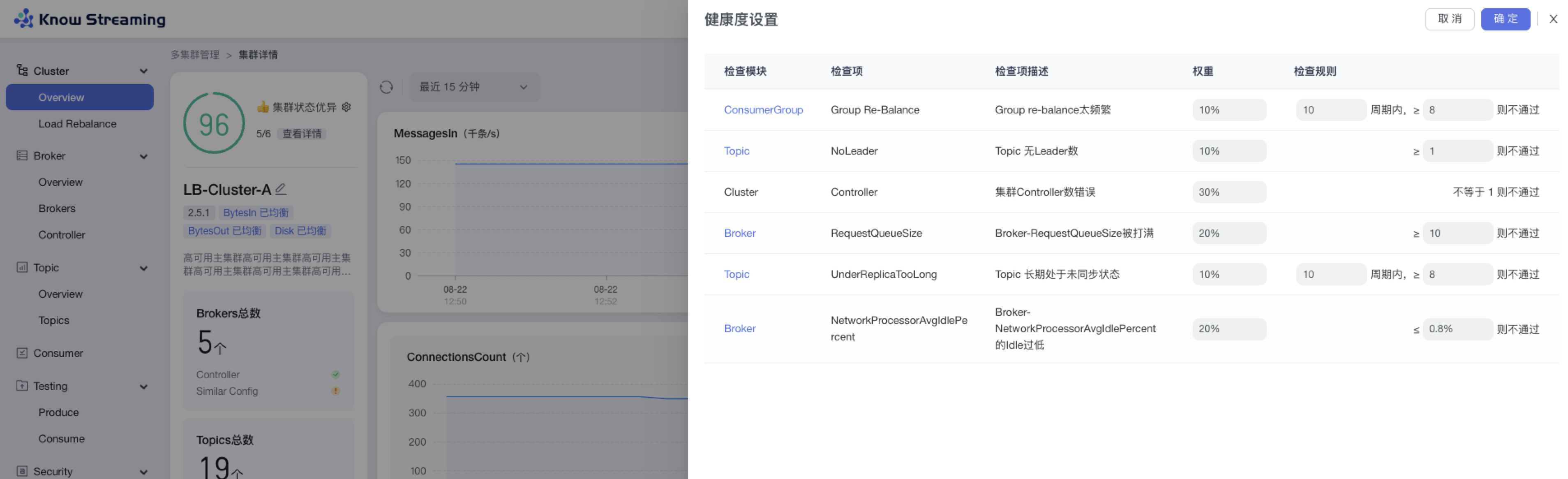

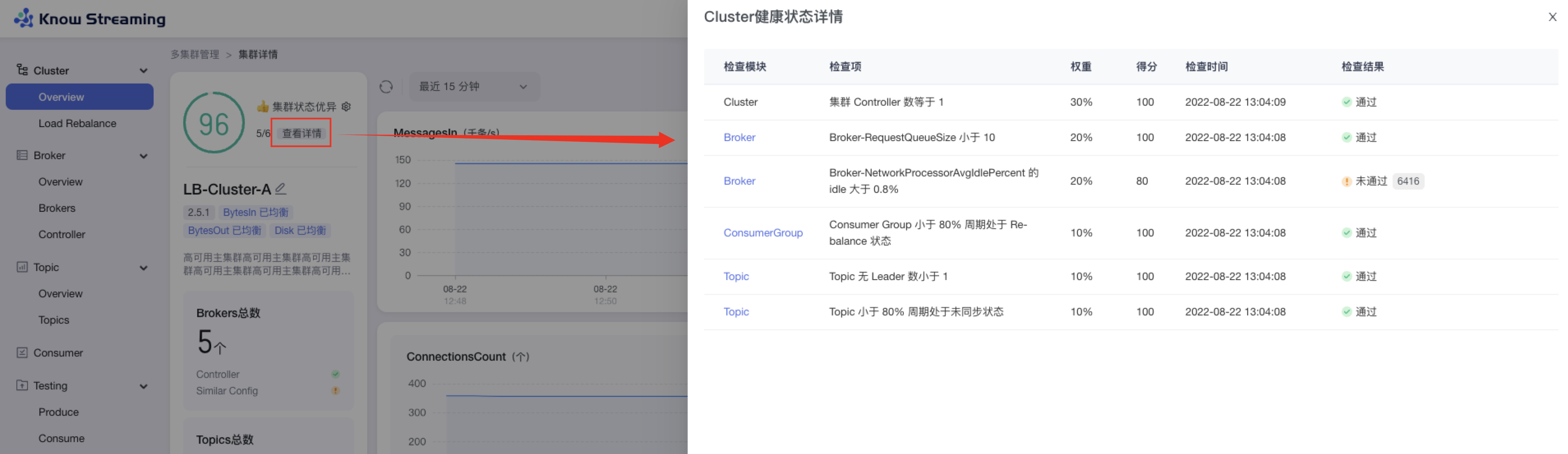

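- Added a health monitoring system and GUI display of key components and metrics
- Added support for Kafka clusters of version 2.8.x and above, covering 0.10.x-3.x
- Removed the logical cluster, shared cluster, and Region concepts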

|

||||

|

||||

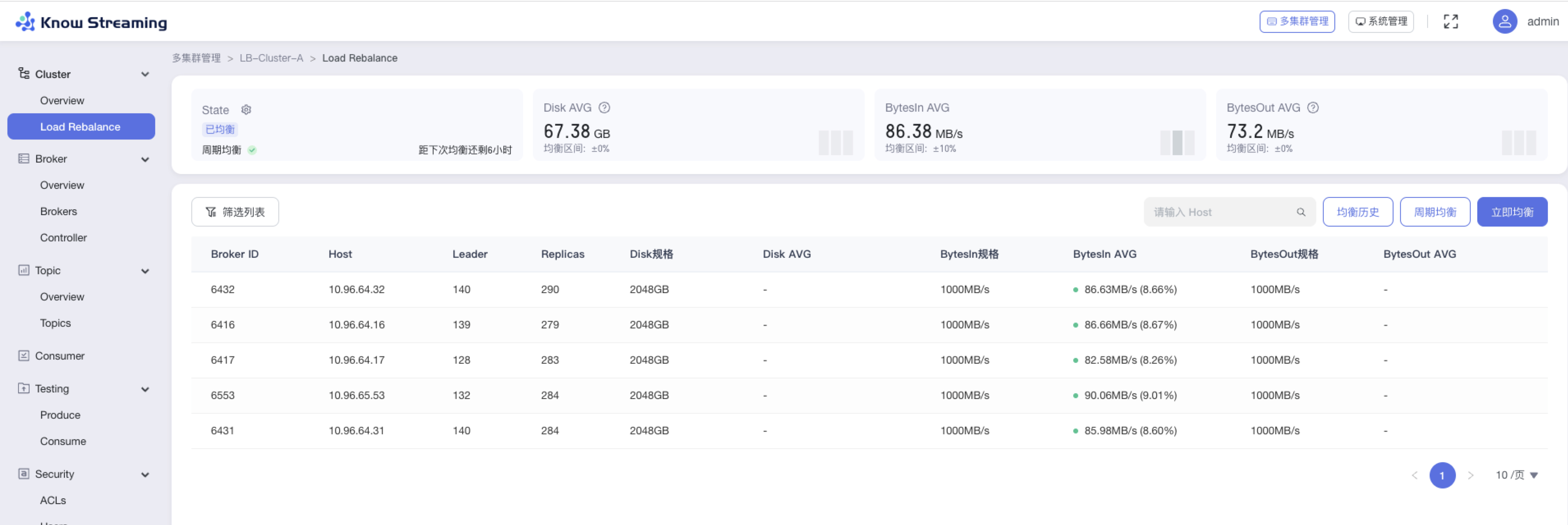

**2. Cluster management**

- Added cluster overview information and a cluster configuration change history

|

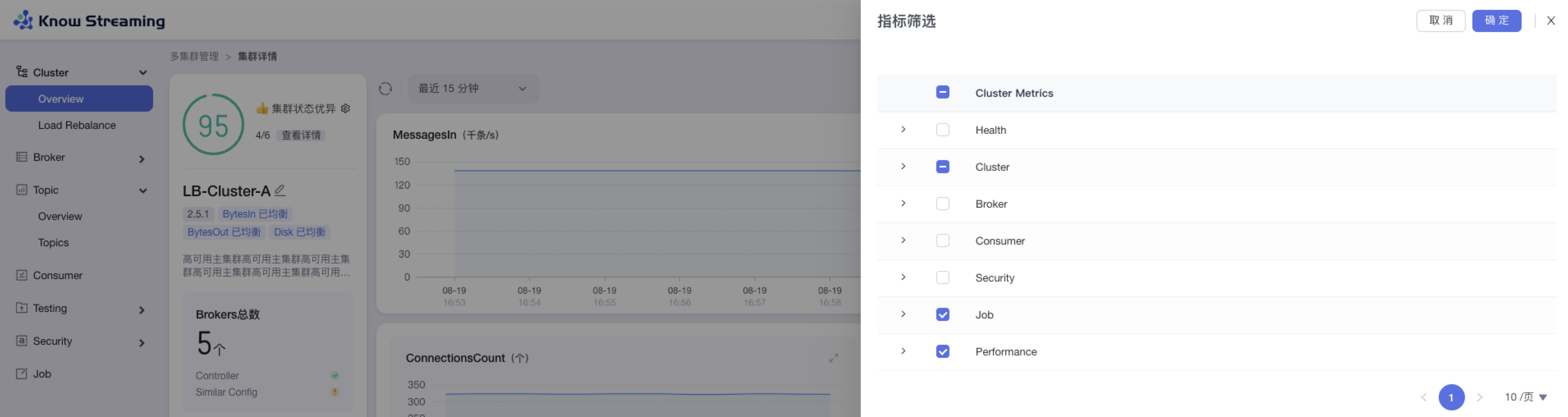

||||

- Added a Cluster health score; health check rules support custom configuration
- Added Cluster key metric statistics and GUI display, with support for custom configuration

|

||||

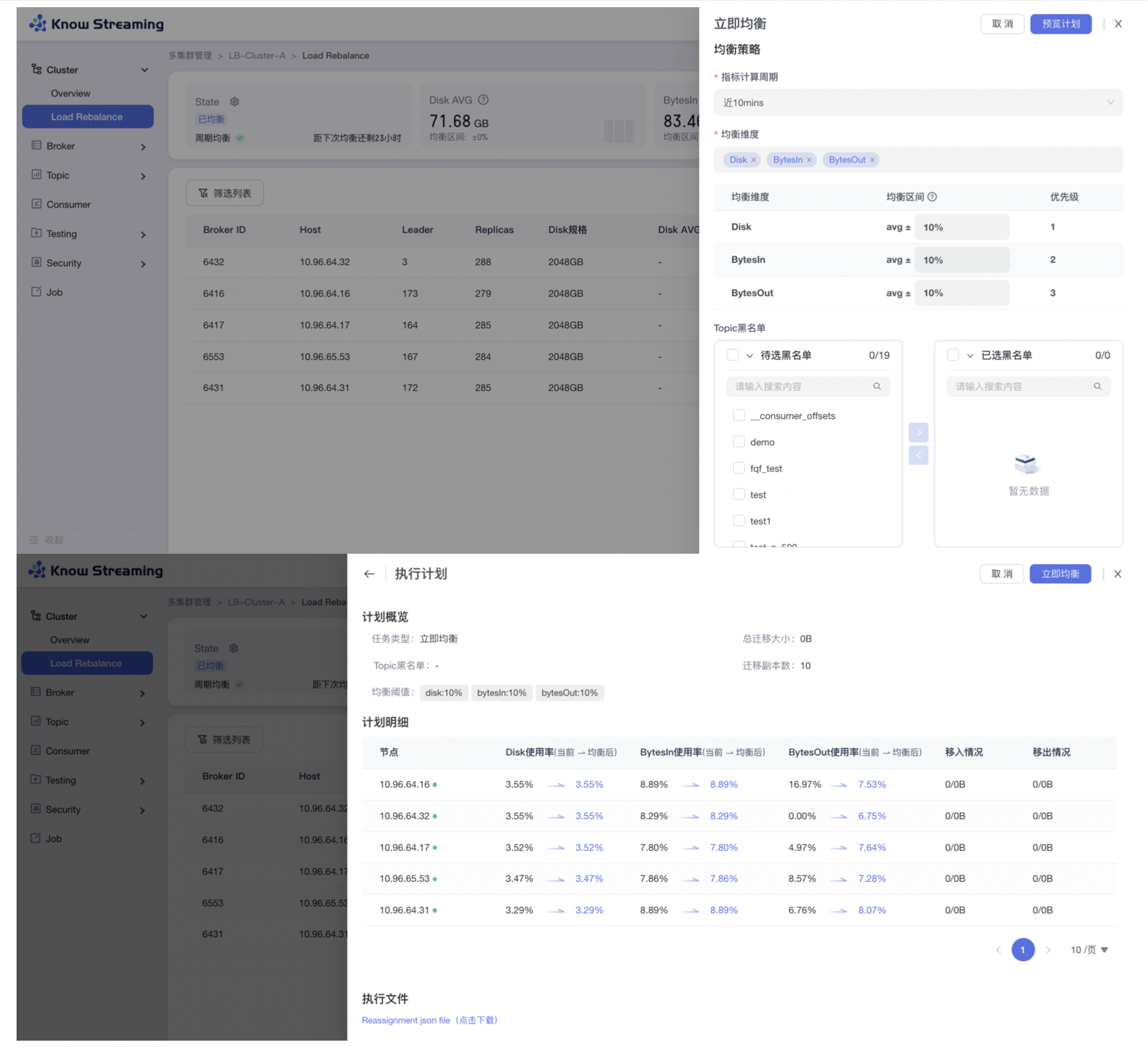

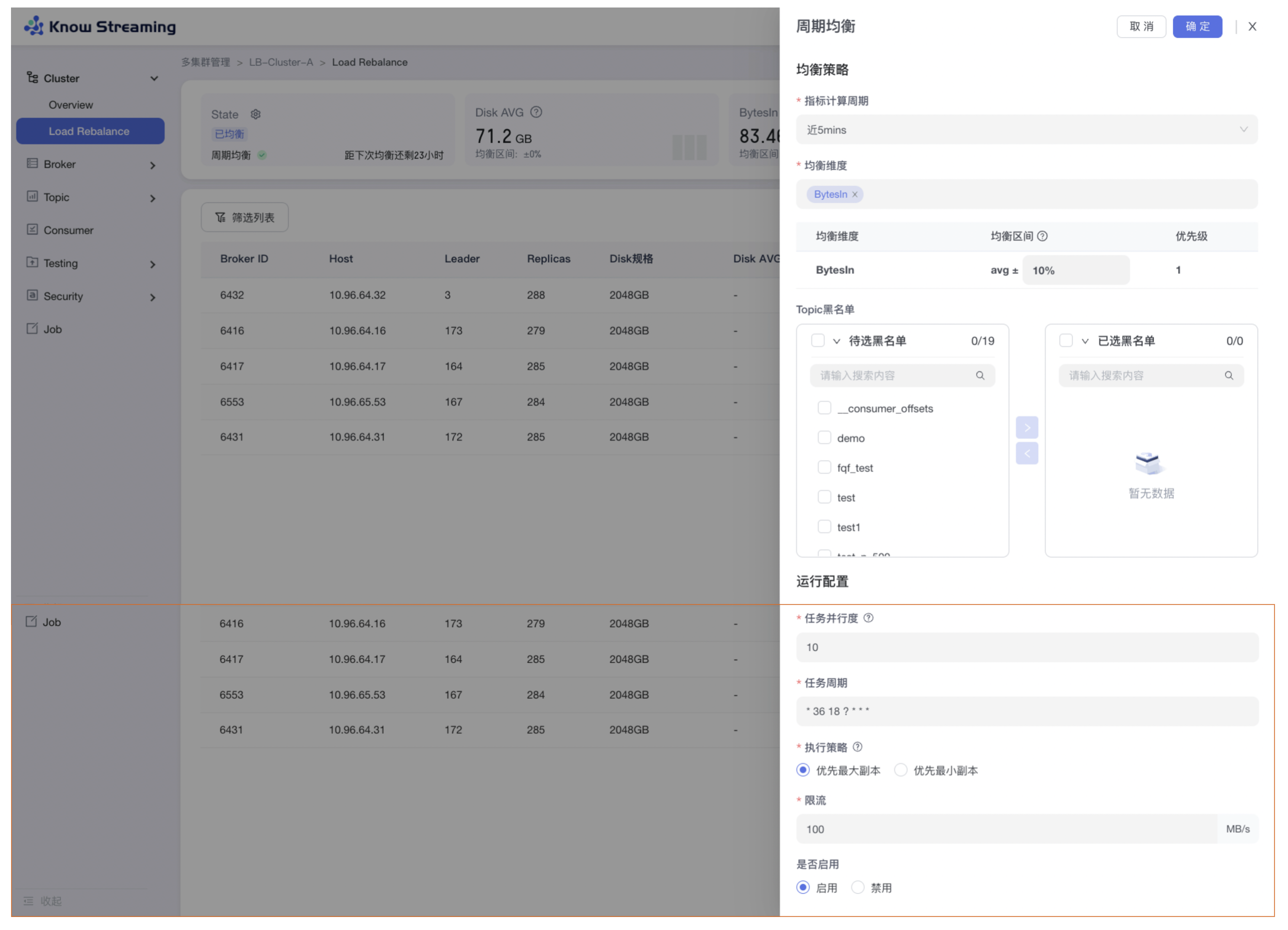

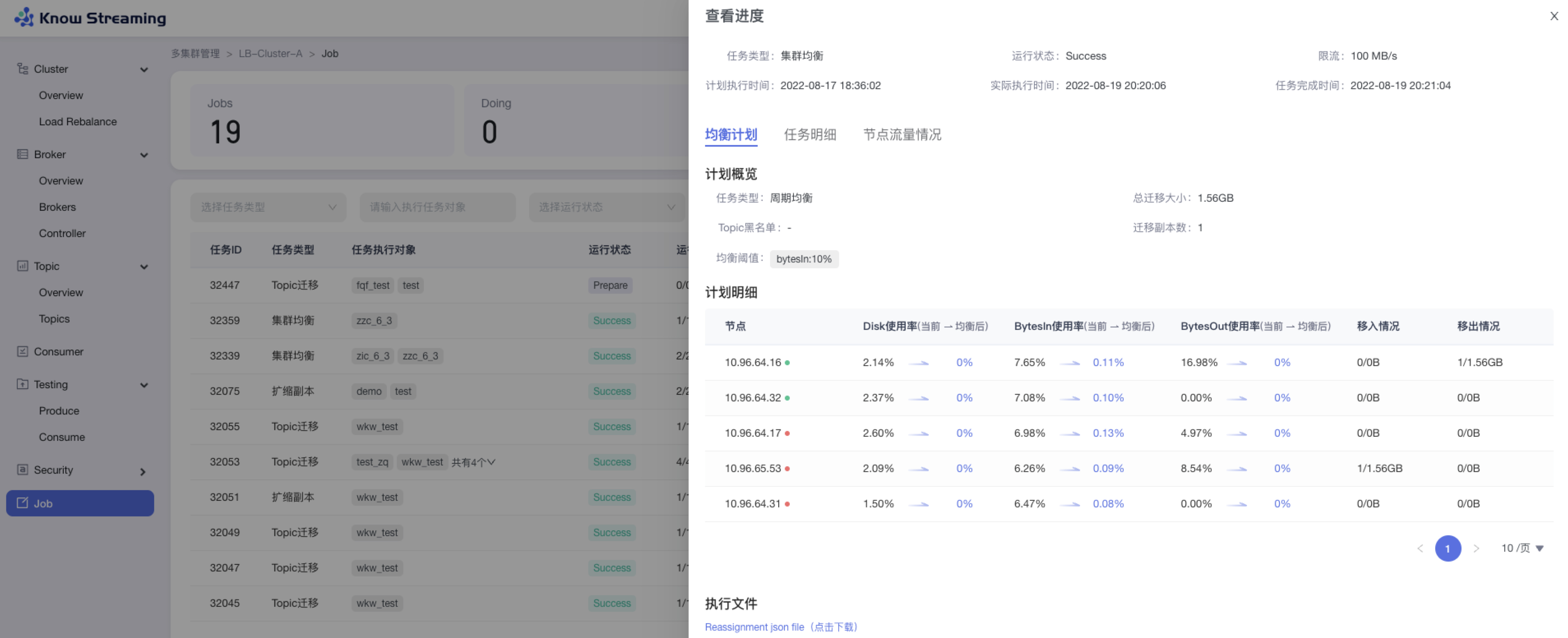

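- Added Load Rebalance for I/O and Disk at the Cluster level, with support for scheduled balancing tasks (Enterprise Edition)
- Removed the rate limiting and authentication features
- Removed the APPID concept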
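The release notes mention a Cluster health score driven by configurable check rules but do not describe how the score is computed. Purely as an illustration of how such a score could be derived, the sketch below takes the weighted share of passed checks; the rule names, weights, and `health_score` function are all assumptions and not the product's actual formula.

```python
from dataclasses import dataclass


@dataclass
class CheckResult:
    name: str      # hypothetical rule name
    weight: int    # relative importance, configurable per rule
    passed: bool


def health_score(results):
    """Weighted share of passed checks, scaled to 0-100."""
    total = sum(r.weight for r in results)
    if total == 0:
        return 100  # no rules configured: nothing can fail
    passed = sum(r.weight for r in results if r.passed)
    return round(100 * passed / total)


results = [
    CheckResult("no_offline_partitions", 40, True),
    CheckResult("controller_present", 30, True),
    CheckResult("request_queue_not_full", 30, False),
]
print(health_score(results))  # 70
```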

|

||||

|

||||

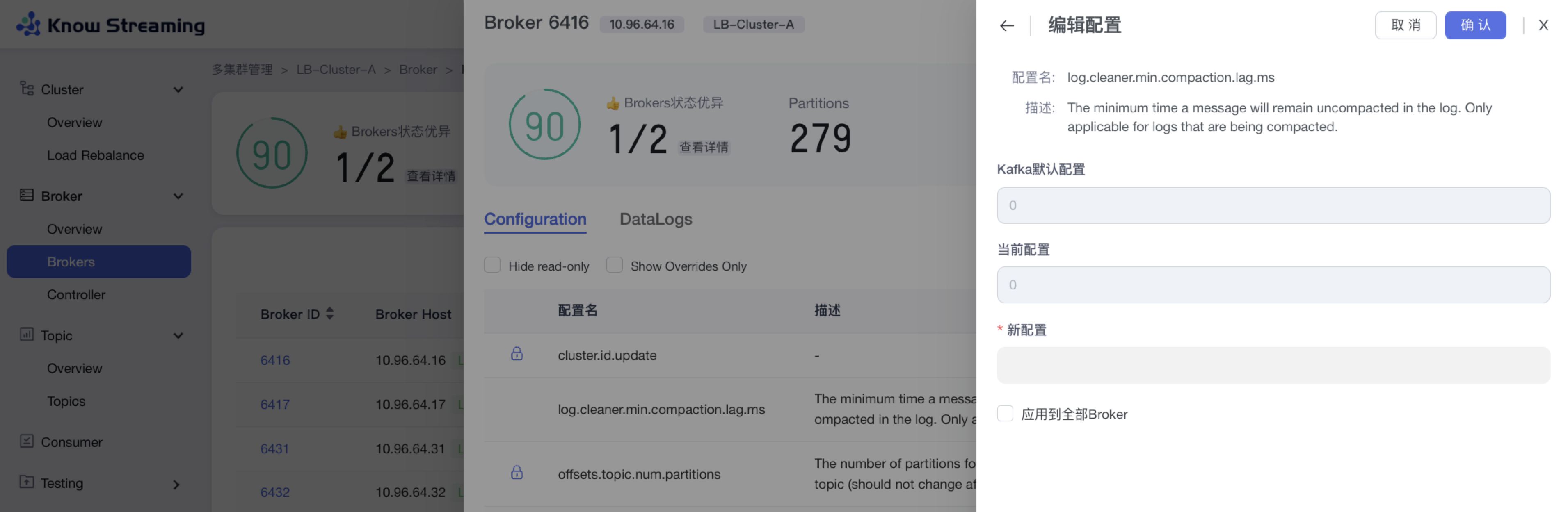

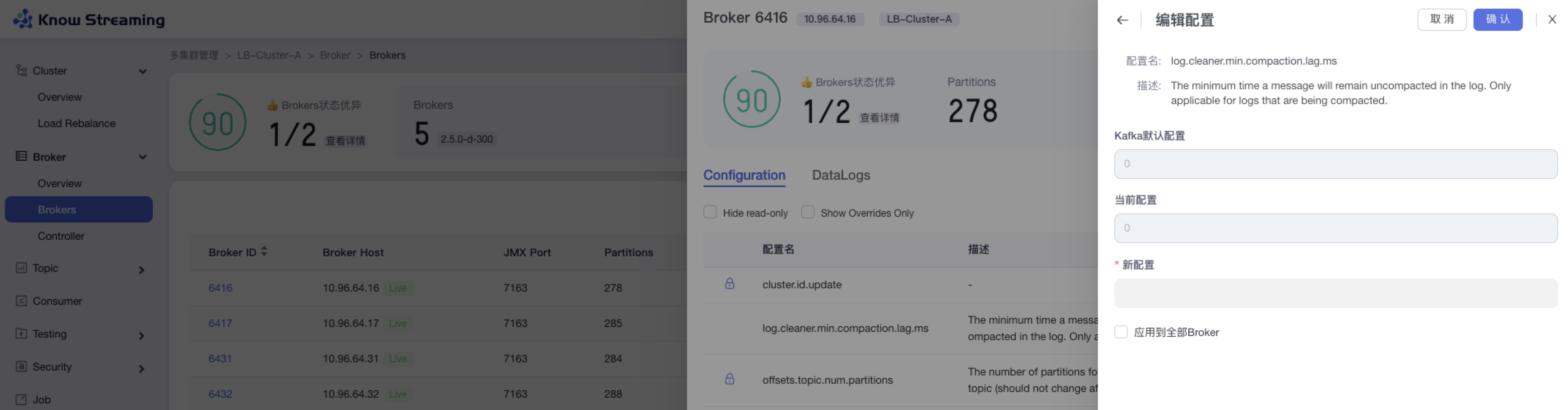

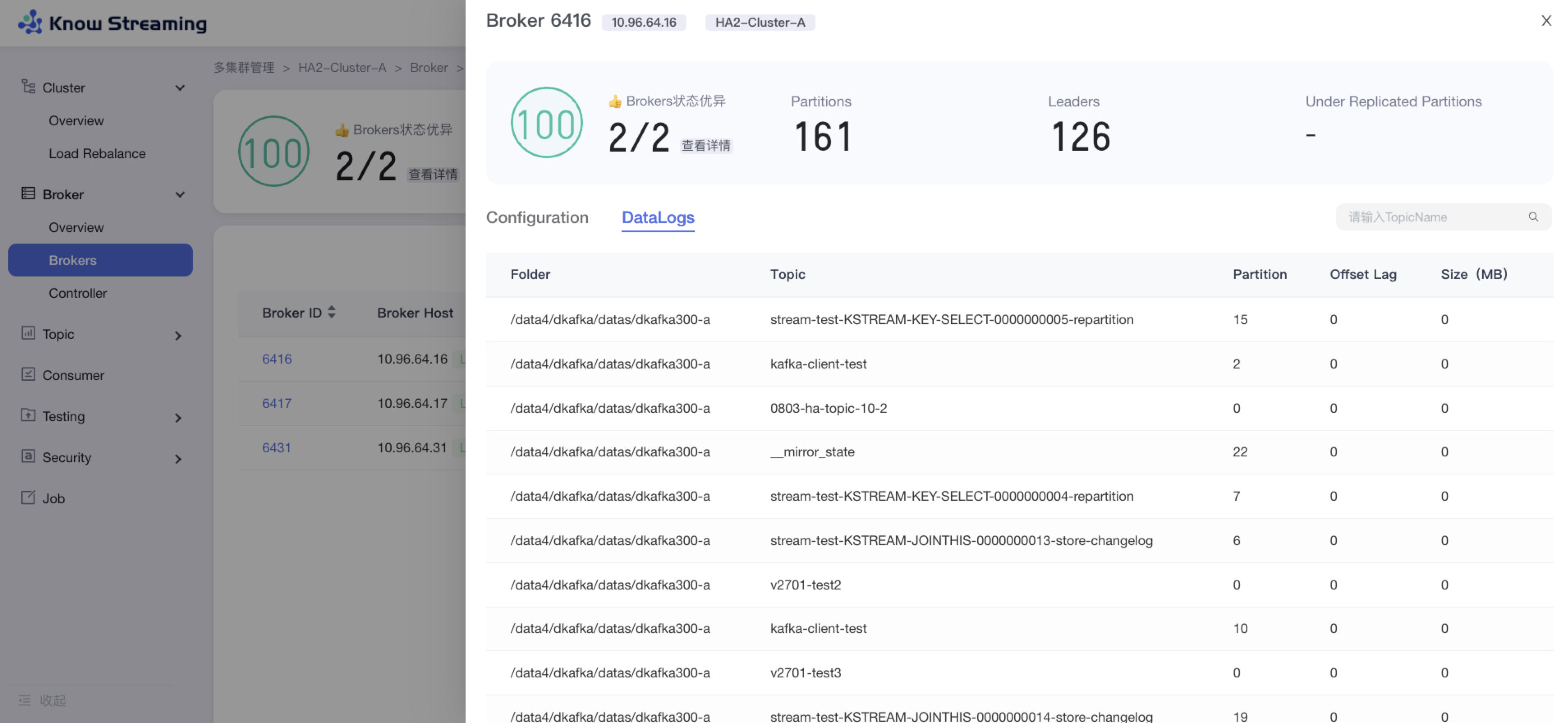

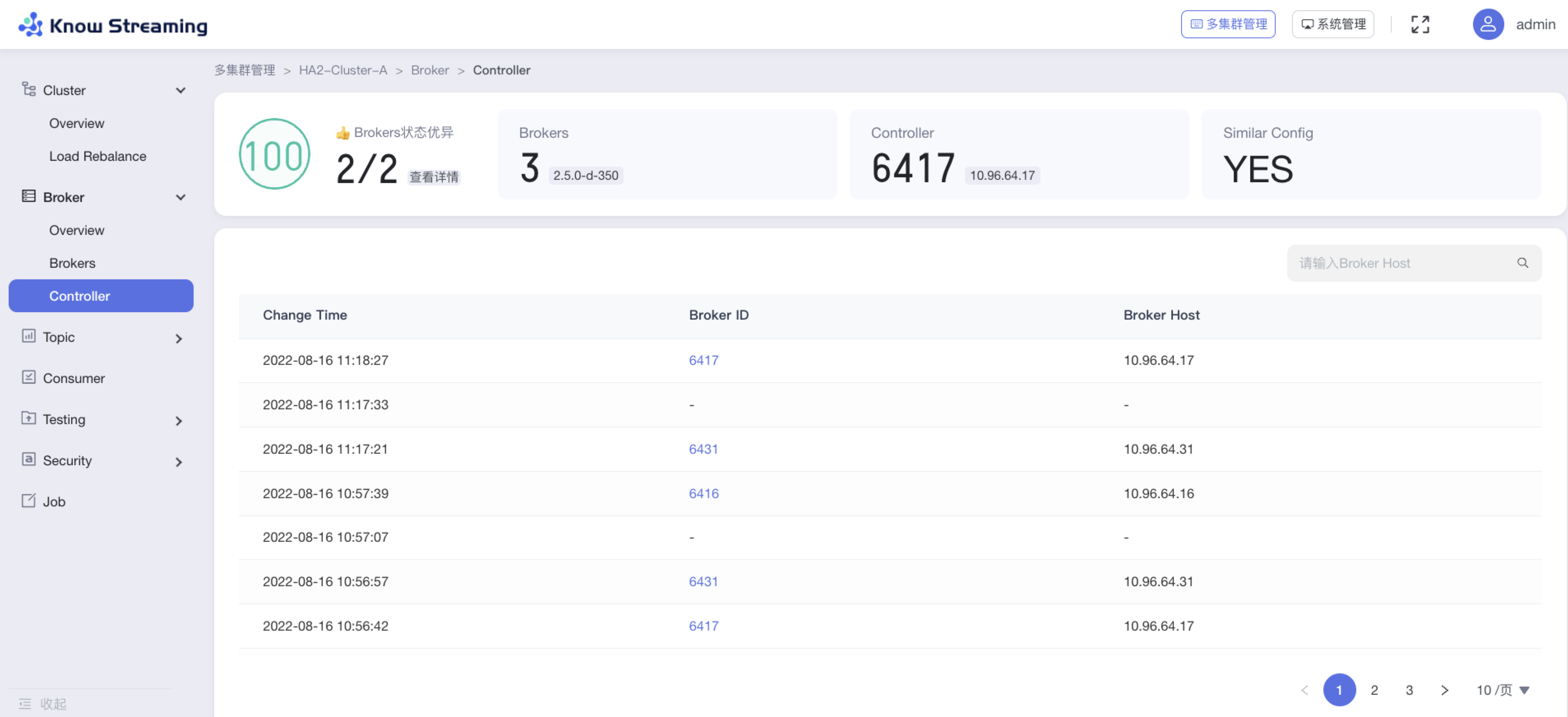

**3. Broker management**

|

||||

|

||||

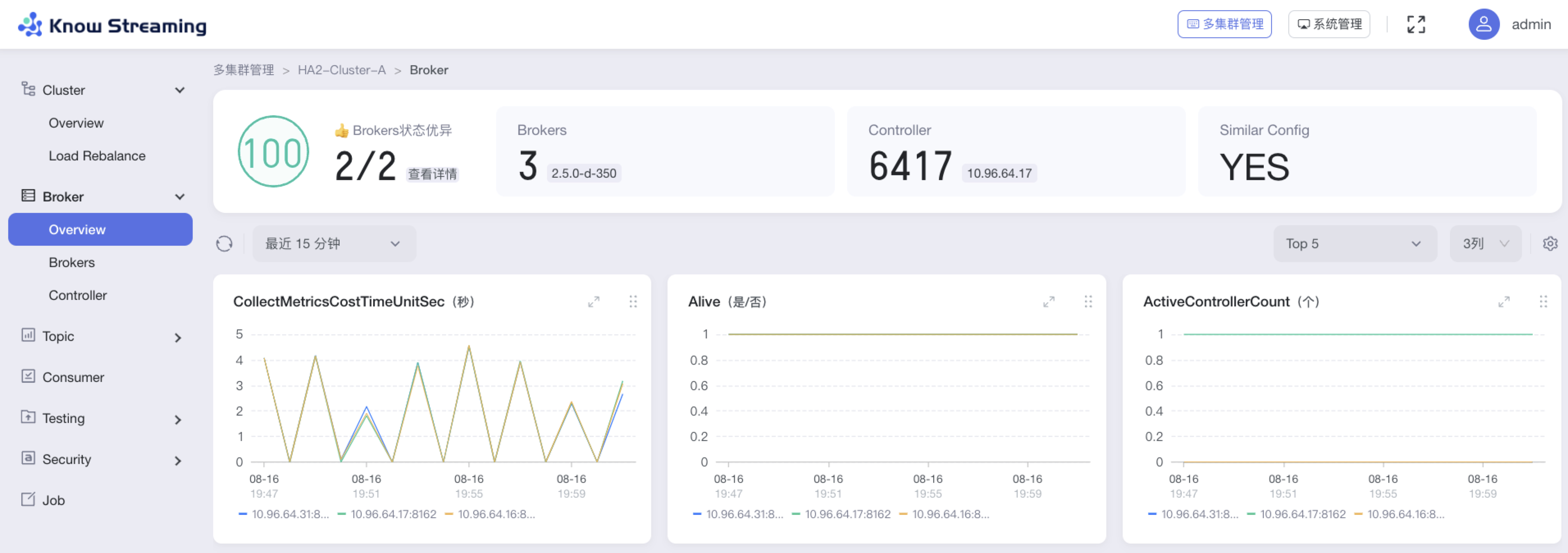

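- Added a Broker health score
- Added Broker key metric statistics and GUI display, with support for custom configuration
- Added Broker parameter configuration; changes take effect after a restart
- Added a Controller change history
- Added Broker Datalogs records
- Removed the Leader Rebalance feature
- Removed Broker preferred replica election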

|

||||

|

||||

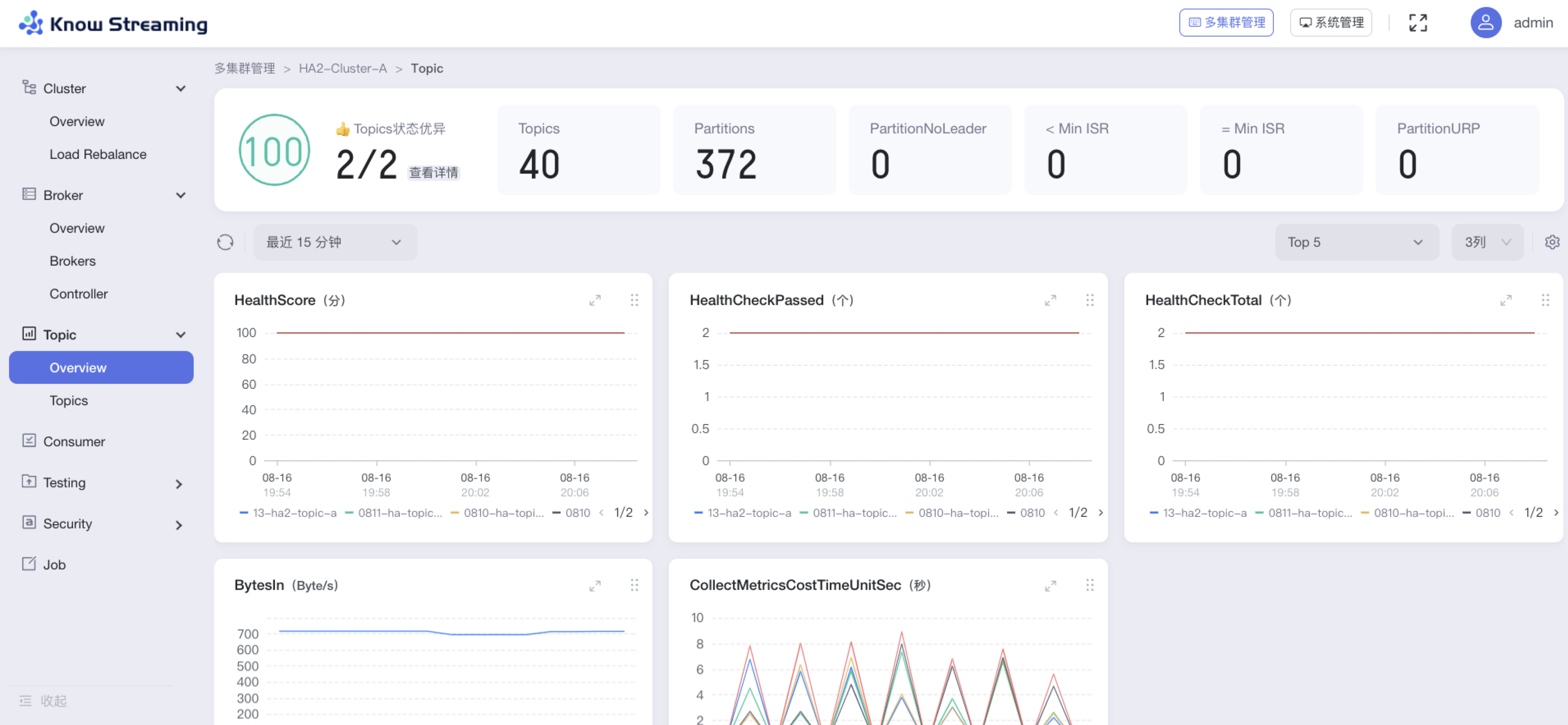

**4. Topic management**

- Added a Topic health score
- Added Topic key metric statistics and GUI display, with support for custom configuration
- Added Topic parameter configuration; changes take effect immediately

|

||||

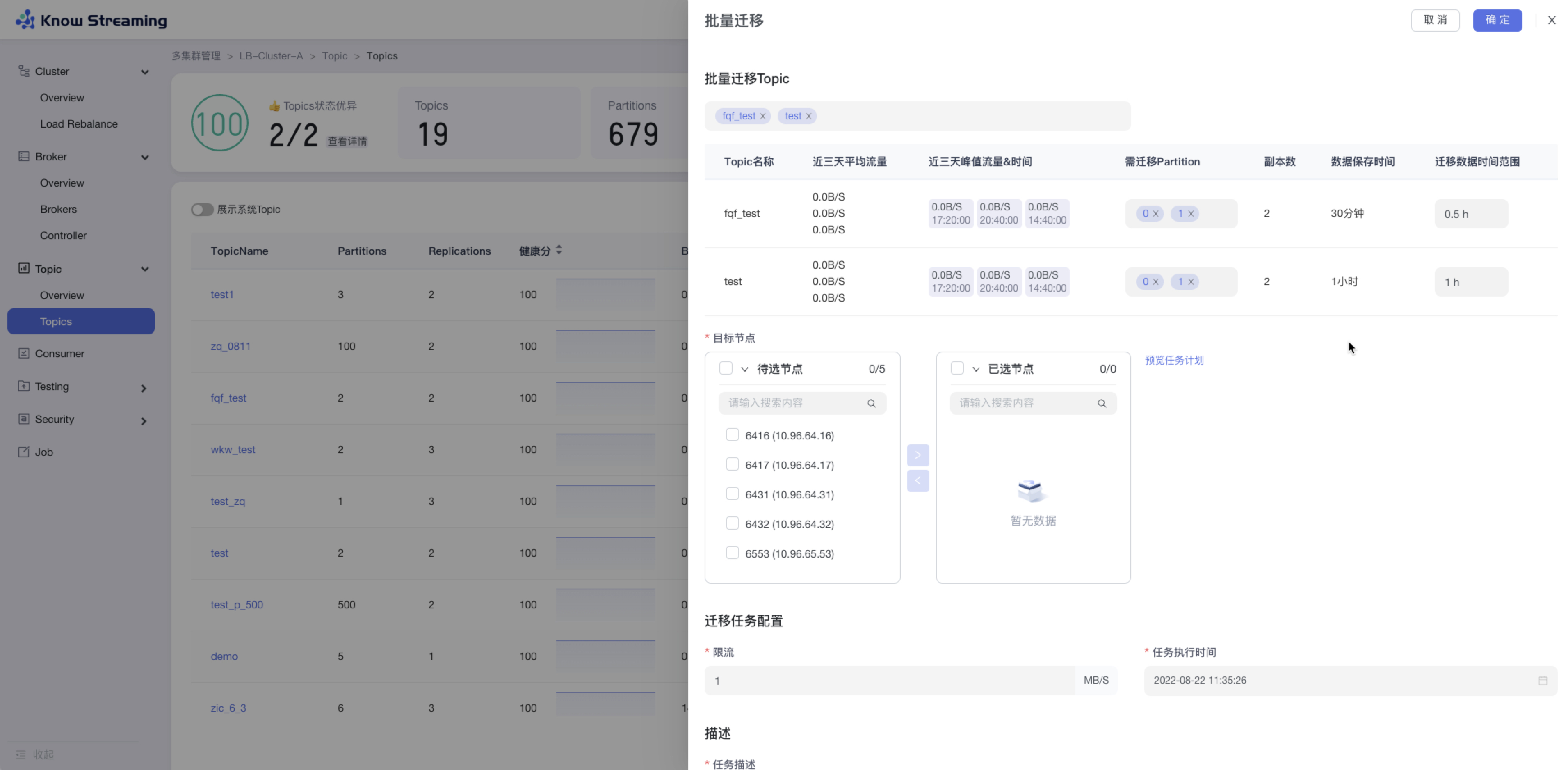

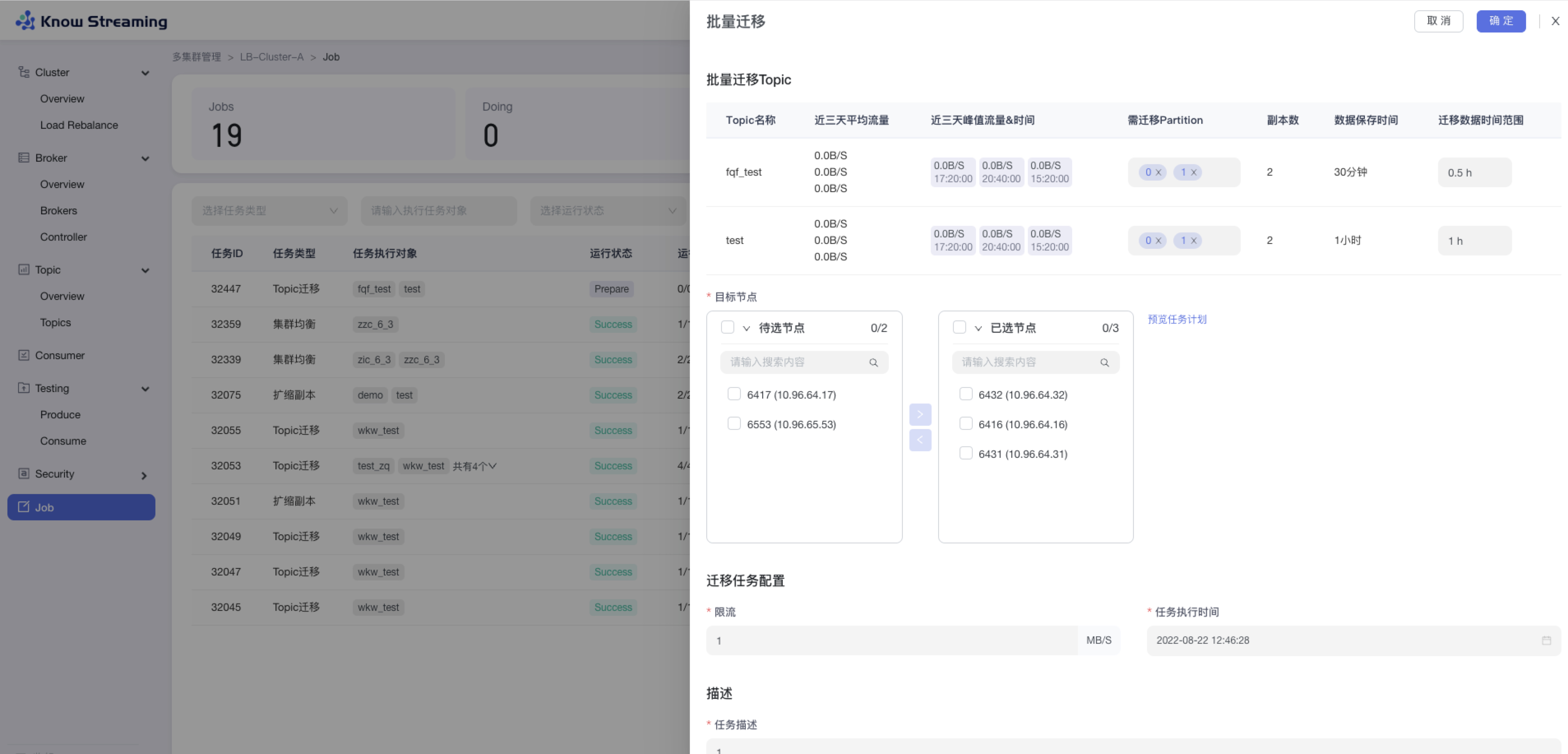

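- Added batch Topic migration and batch Topic replica scaling
- Added viewing of system Topics
- Improved the GUI display of Partition distribution
- Improved Topic Message data sampling
- Removed the Topic expiration concept
- Removed the Topic quota request feature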

|

||||

|

||||

**5. Consumer management**

- Improve how ConsumerGroups are displayed and add a GUI display of Consumer Lag

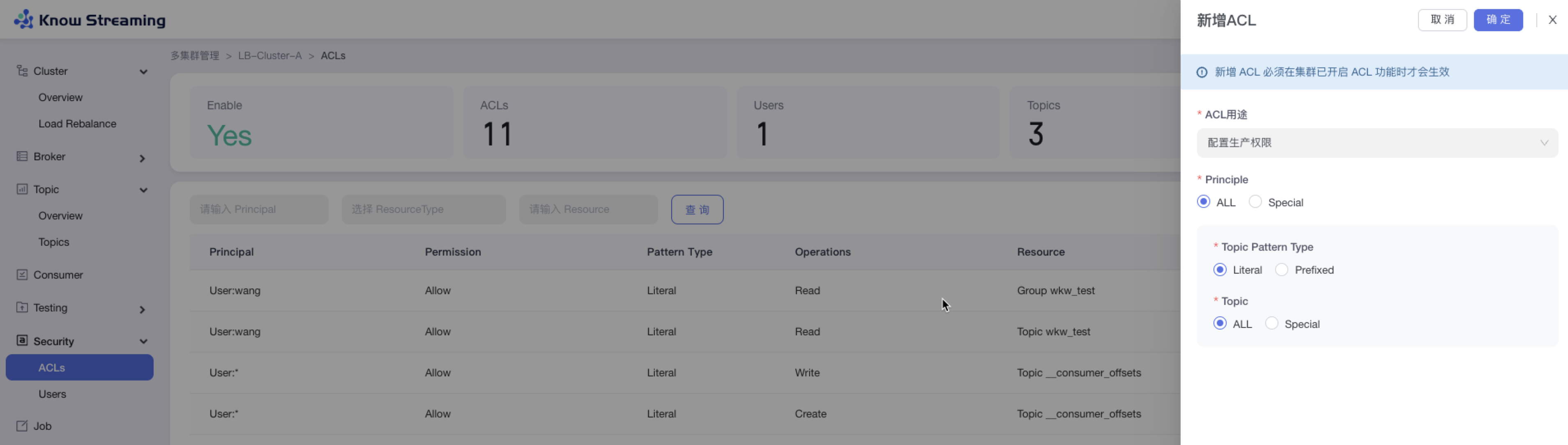

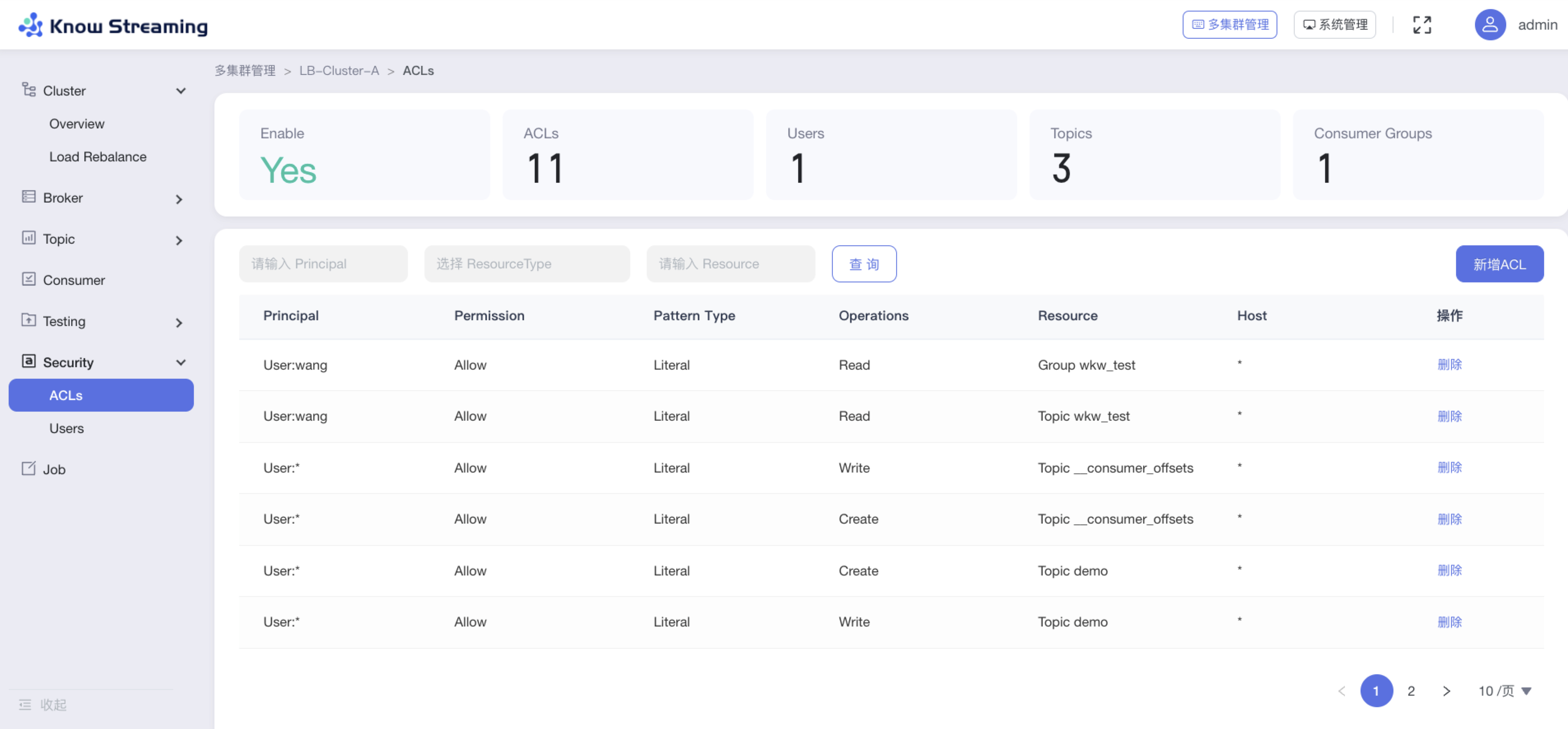

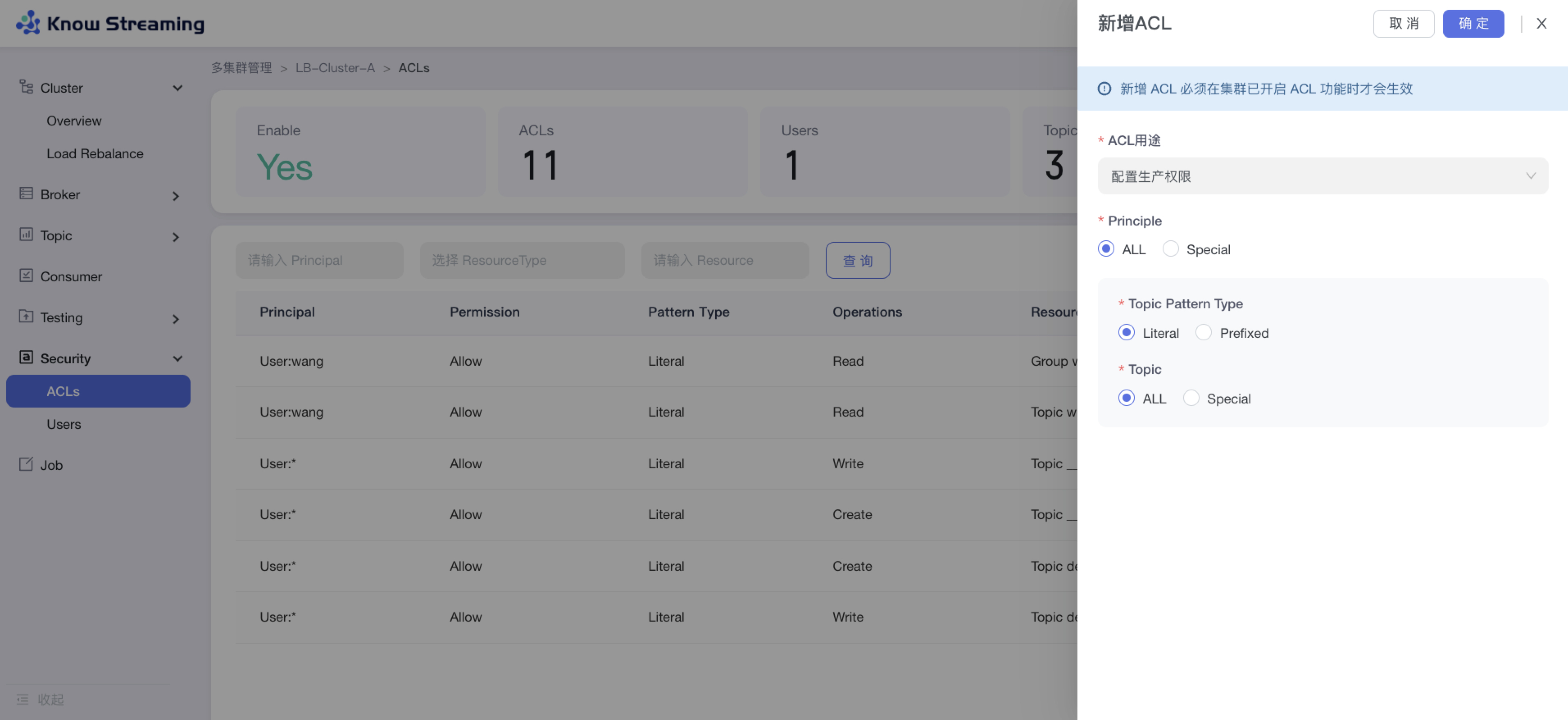

**6、ACL 管理**

|

||||

|

||||

- 增加原生 ACL GUI 配置功能,可配置生产、消费、自定义多种组合权限

|

||||

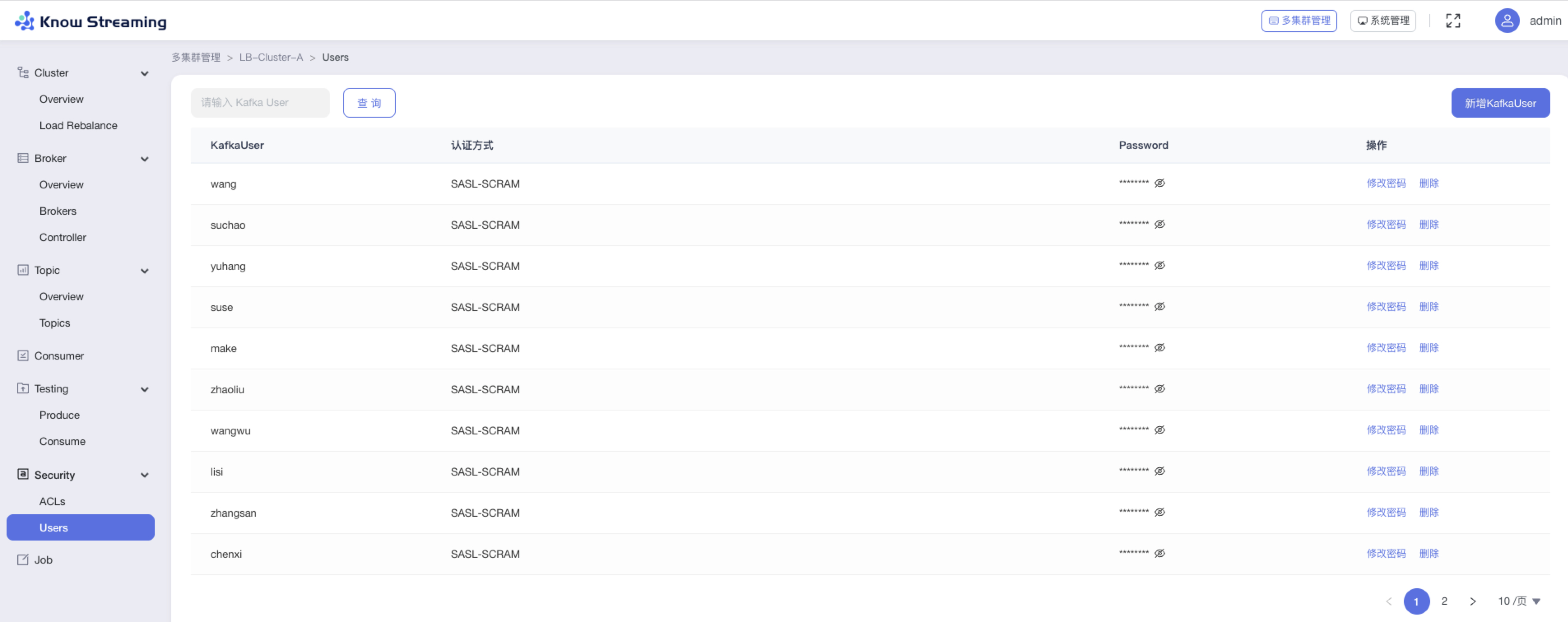

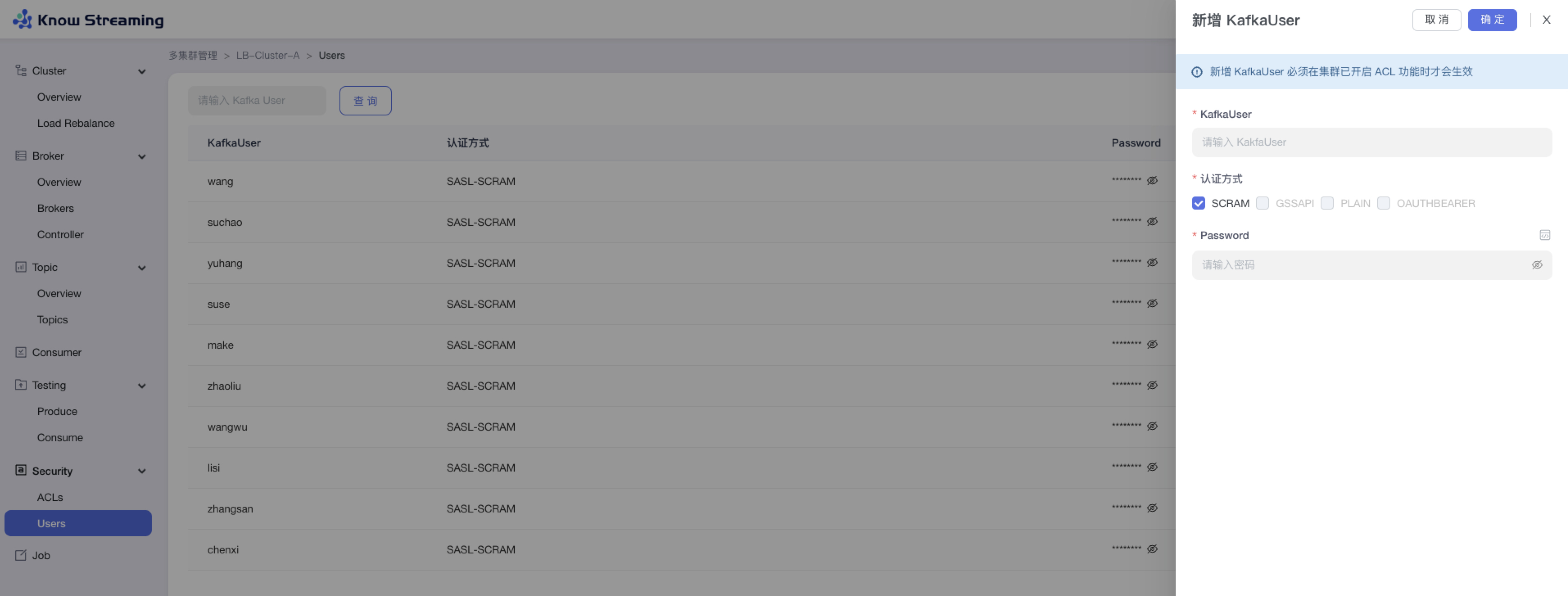

- 增加 KafkaUser 功能,可自定义新增 KafkaUser

|

||||

|

||||

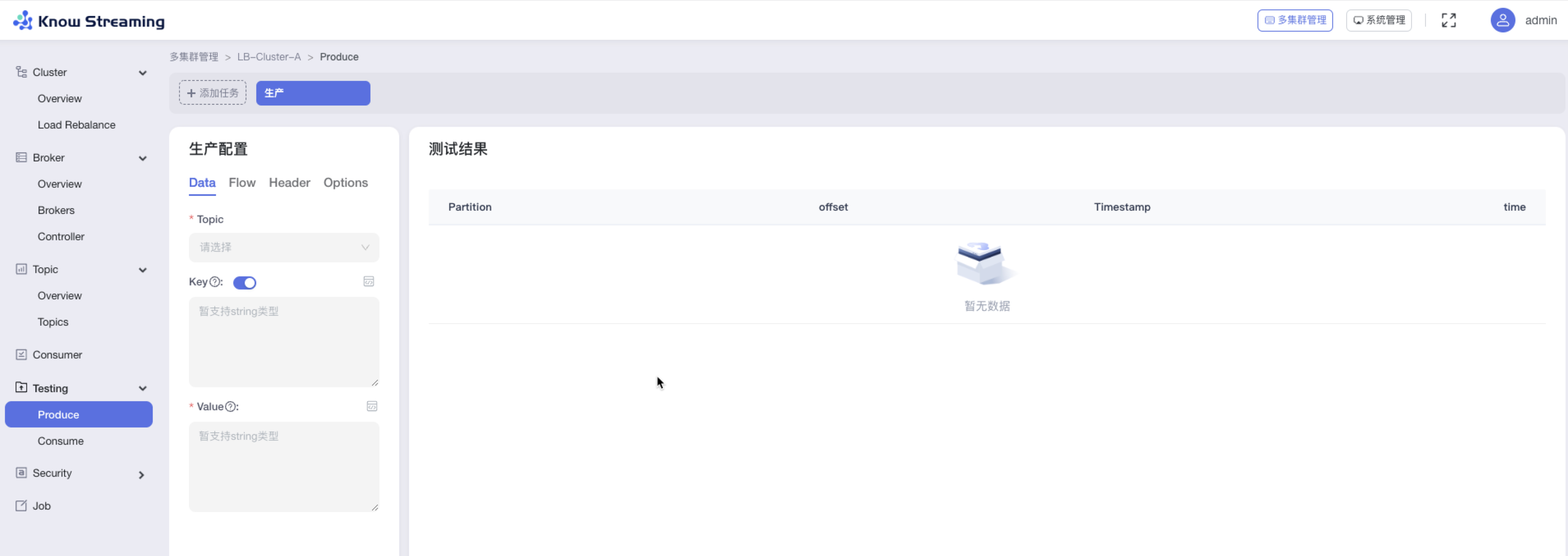

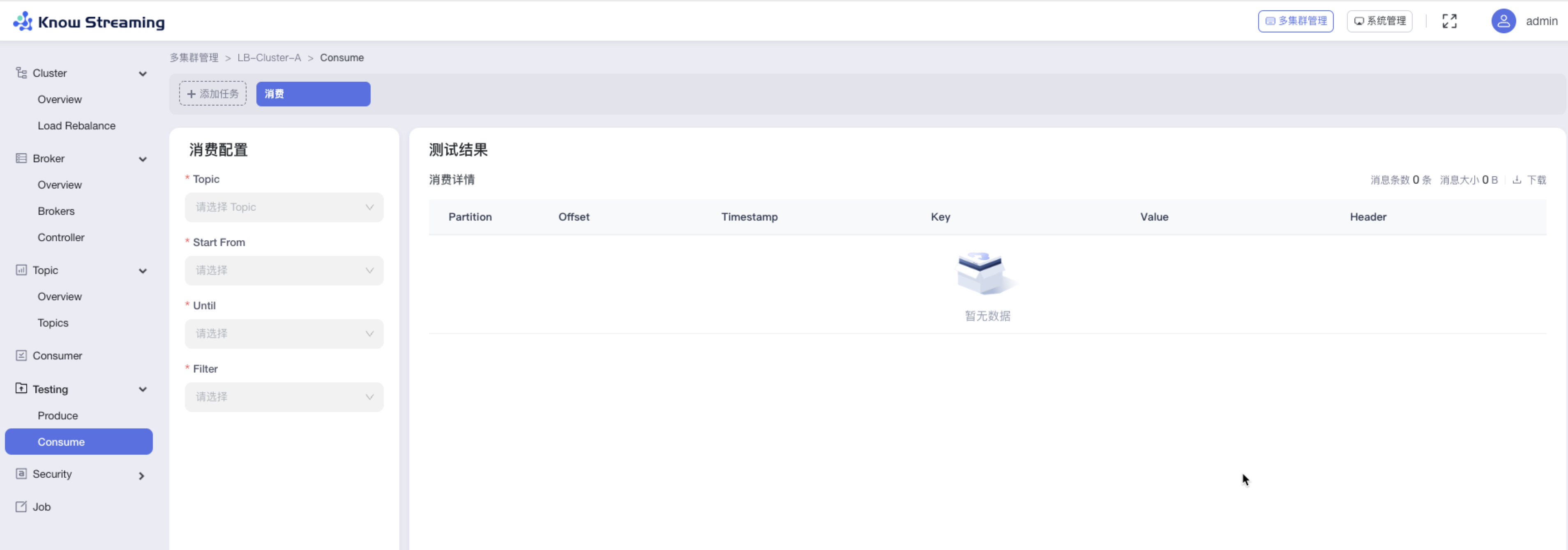

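**7. Message testing (Enterprise Edition)**

- Add a producer message simulator with custom Data, Flow, Header, and Options configuration (Enterprise Edition)
- Add a consumer message simulator with custom Data, Flow, Header, and Options configuration (Enterprise Edition)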

**8、Job**

|

||||

|

||||

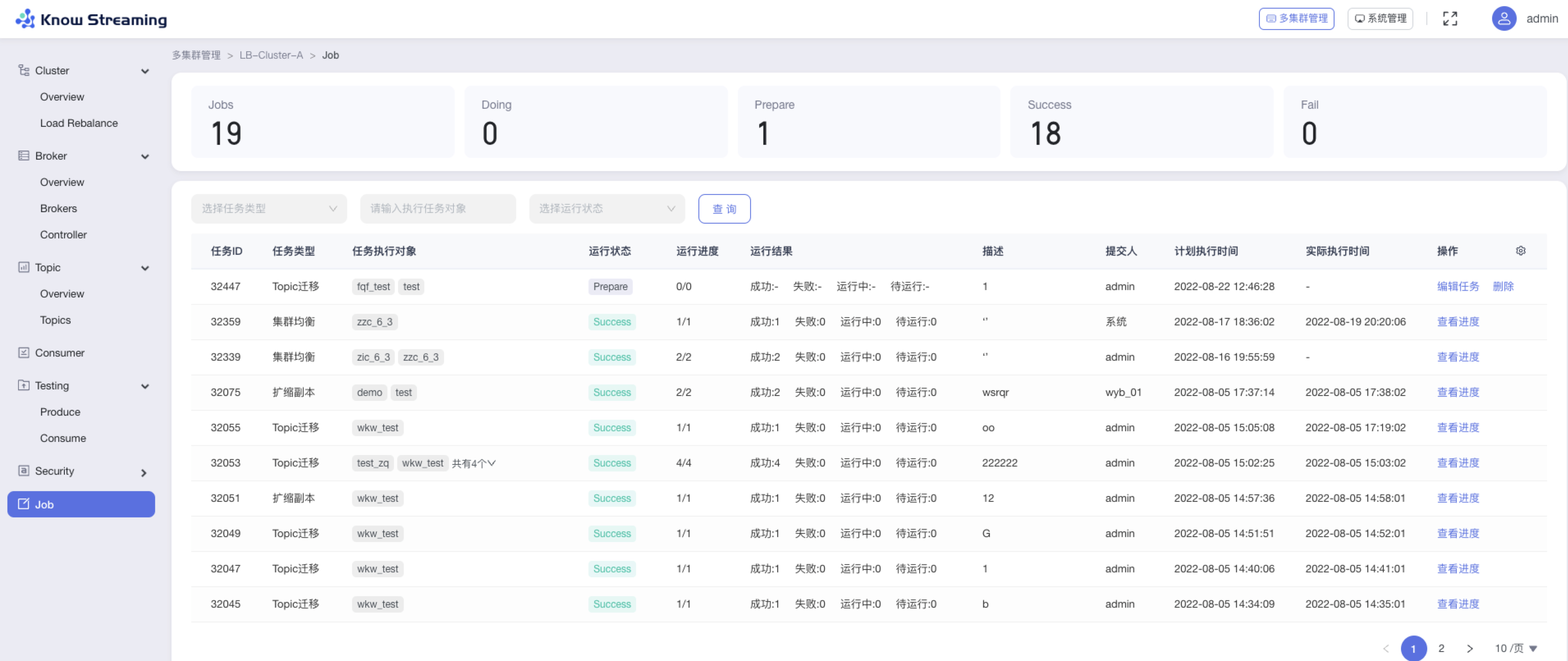

- 优化 Job 模块,支持任务进度管理

|

||||

|

||||

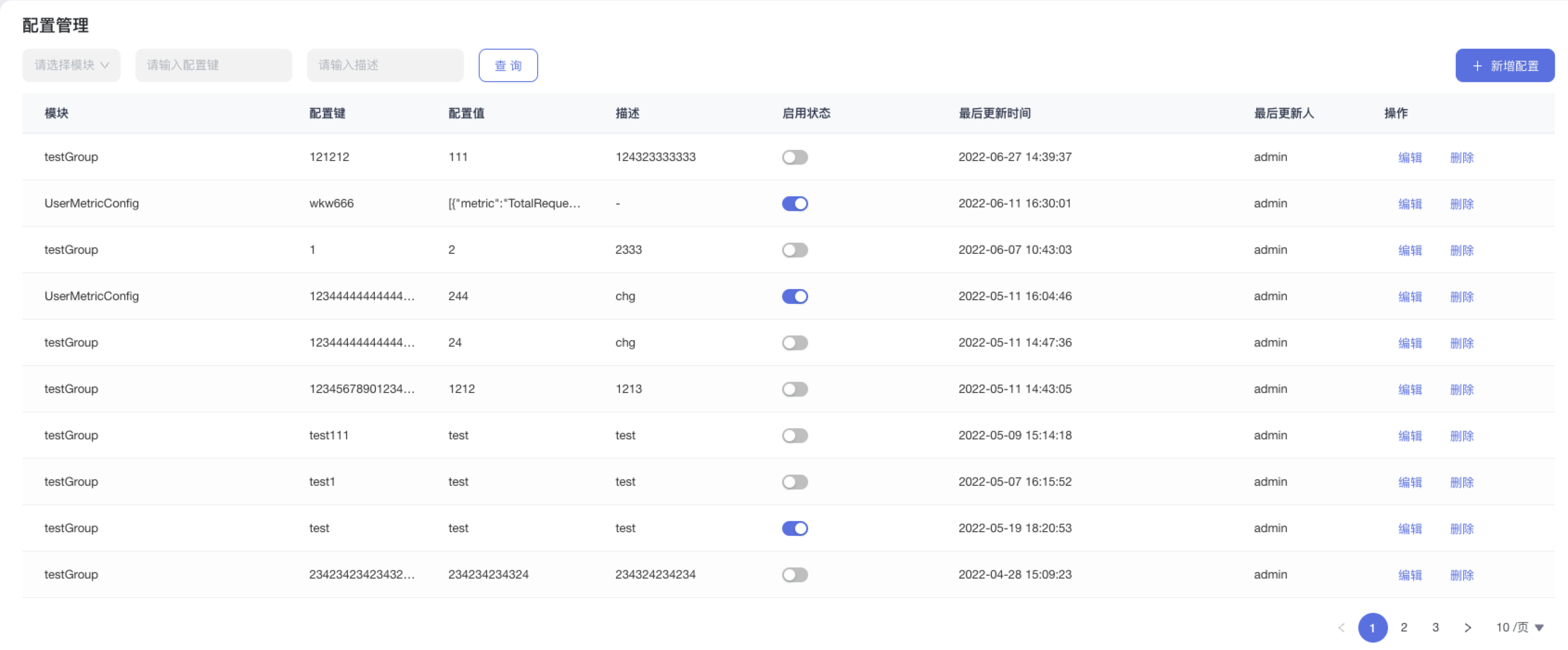

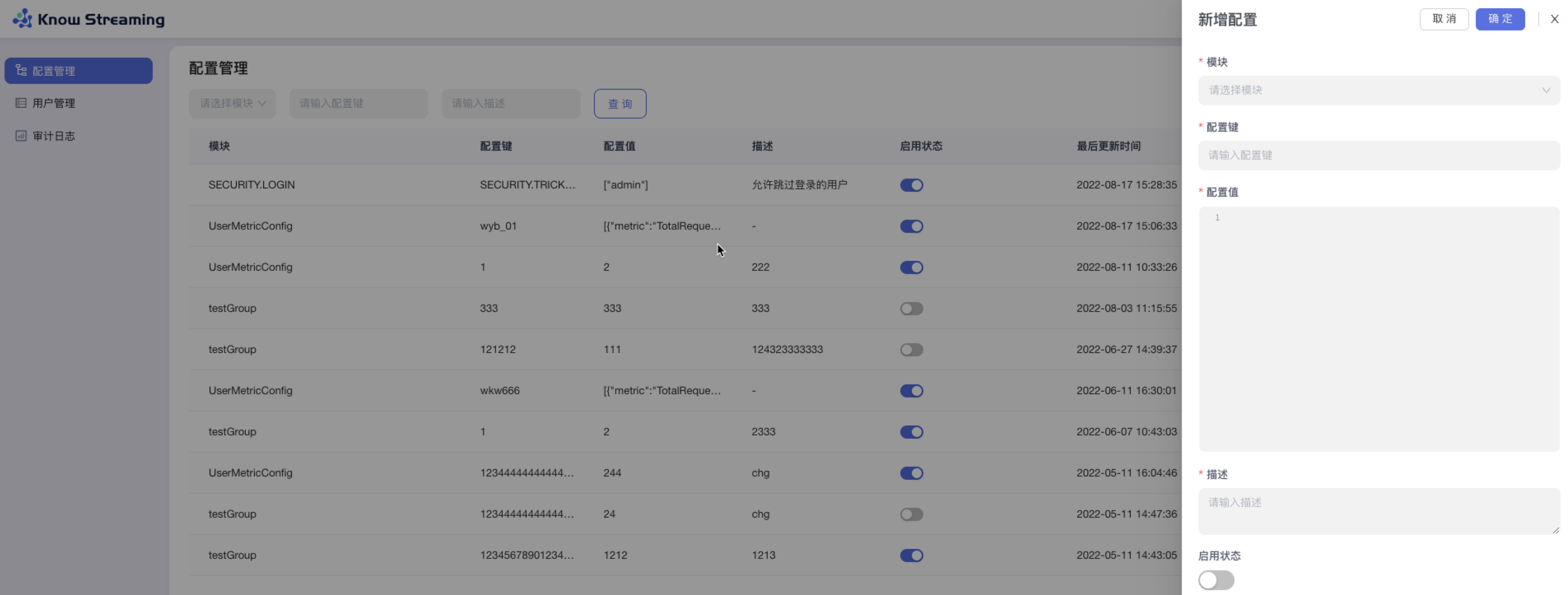

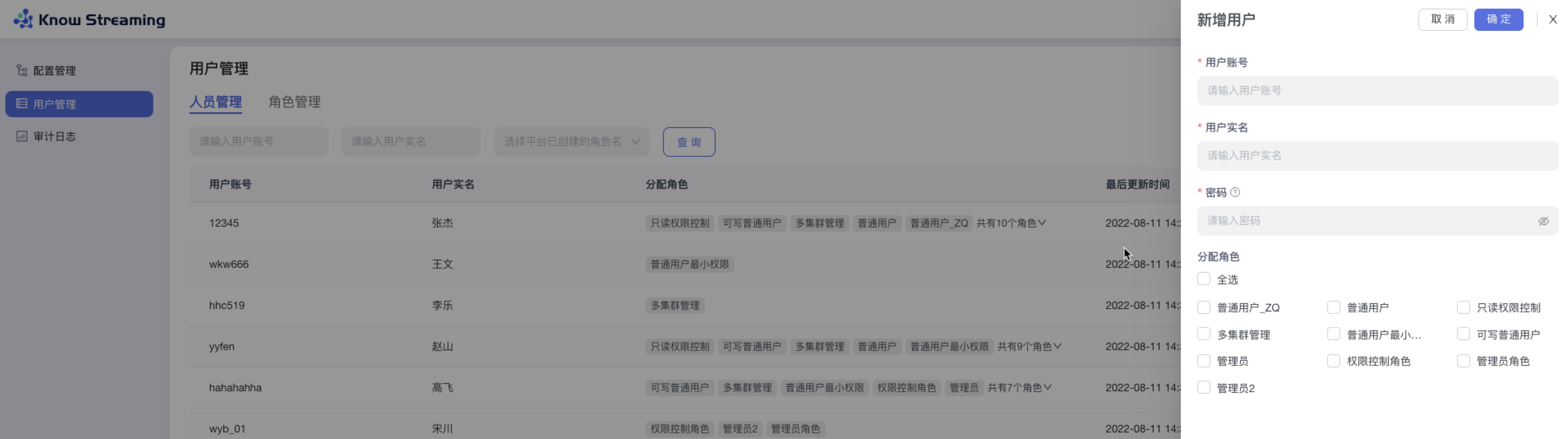

**9、系统管理**

|

||||

|

||||

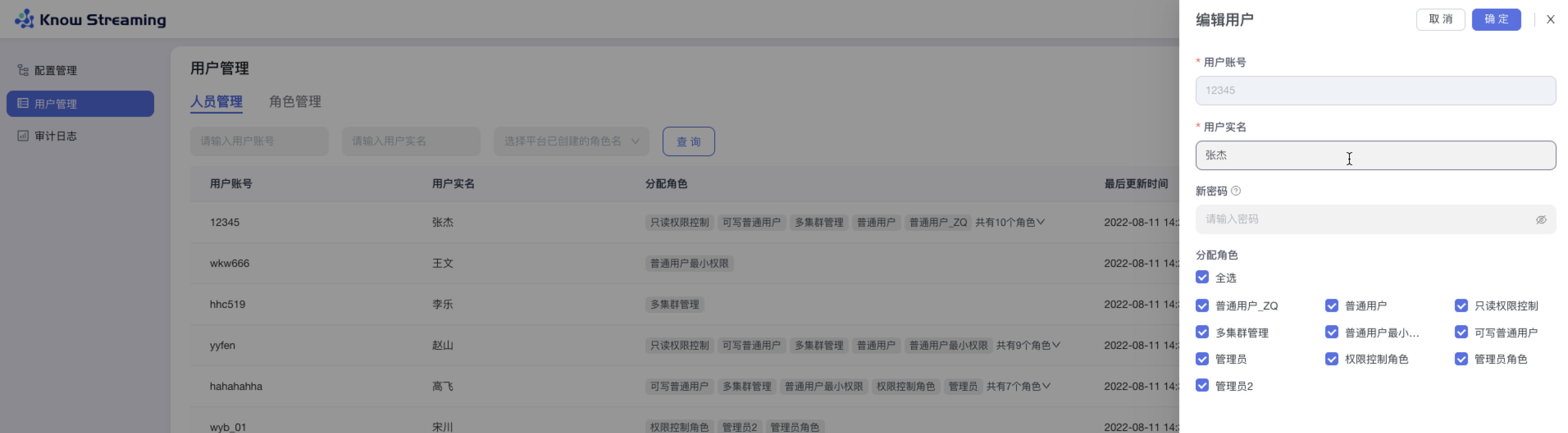

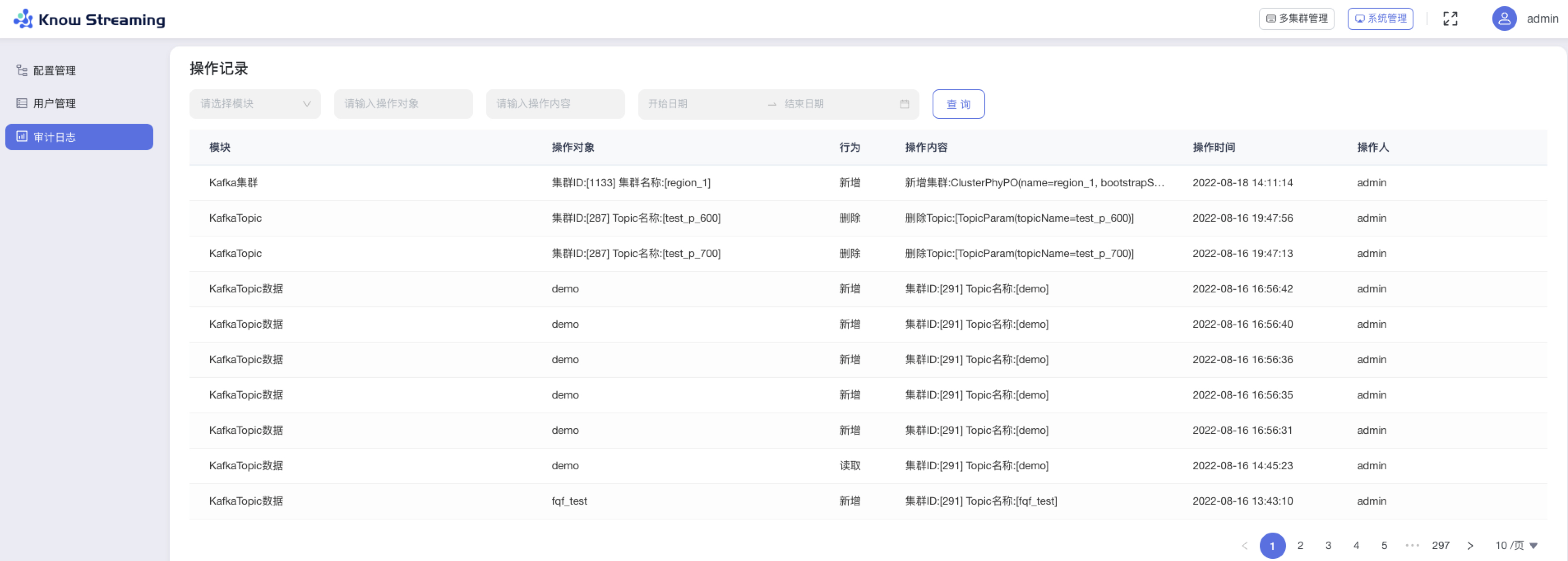

- 优化用户、角色管理体系,支持自定义角色配置页面及操作权限

|

||||

- 优化审计日志信息

|

||||

- 删除多租户体系

|

||||

- 删除工单流程

|

||||

|

||||

---

|

||||

|

||||

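## v2.6.0

Release date: 2022-01-24

### Capability enhancements

- Add a simple backoff utility class

### Experience improvements

- Add documentation for periodic tasks
- Add cluster installation, deployment, and usage documentation
- Upgrade the Swagger, SpringFramework, SpringBoot, and ECharts versions
- Improve log output of the Task module
- Fix the silent exit (no log message) when parsing a cron expression fails
- Add department, email, and related information when onboarding LDAP users
- For the JMX module, add a backoff mechanism after connection failures and improve error logging
- Make thread pools and client pools configurable
- Remove the unused `jmx_prometheus_javaagent-0.14.0.jar`
- Improve migration task names
- Fix Region capacity information not being updated immediately when a Region is created
- Introduce lombok
- Update the video tutorials
- Allow the LogiKM address in `kcm_script.sh` to be passed in by the program
- Add a switch that controls whether third-party and gateway interfaces skip login
- Make configuration for the extends modules optional in `application.yml`

### Bug fixes

- Fix the SQL syntax exception raised when batch-writing an empty metrics array to the DB
- Fix `version` not changing when gateway configuration is added or modified
- Fix the tooltip being obscured on the cluster list page
- Fix the failure to parse the metadata protocol of newer Broker versions
- Fix the missing `application.yml` error when running the Dockerfile
- Fix the null pointer exception raised when updating a logical cluster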

## v2.5.0

Release date: 2021-07-10

### Experience improvements

- Rename the product to LogiKM
- Update the product icon

## v2.4.1+

Release date: 2021-05-21

### Capability enhancements

- Add an interface for directly adding permissions and quotas (v2.4.1)
- Add the ability for interface calls to bypass login (v2.4.1)

### Experience improvements

- Upgrade Tomcat to 8.5.66 (v2.4.2)
- Improve the op interfaces by splitting the util interface into topic and leader interfaces (v2.4.1)
- Shorten the keys used in Gateway configuration (v2.4.1)

### Bug fixes

- Fix the incorrect version shown on the page (v2.4.2)

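## v2.4.0

Release date: 2021-05-18

### Capability enhancements

- Add a switch for automated approval of Apps and Topics
- Add Rack information to Broker metadata
- Upgrade the MySQL driver to support MySQL 8+
- Add an operation record query page

### Experience improvements

- Improve the FAQ description of alert groups
- Improve the user manual's explanation of shared and dedicated clusters
- On the user management page, the front end now prevents users from deleting themselves

### Bug fixes

- Fix the failing Topic creation interface in the op-util class
- Fix the task that periodically syncs Topics to the DB by querying the Topic list directly from the DB instead of the cache
- Fix application offline approval failures by filtering out records whose permission is 0 (no permission)
- Fix the login and permission bypass vulnerability
- Fix buttons such as "onboard cluster" and "pause monitoring" being shown to the developer role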

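## v2.3.0

Release date: 2021-02-08

### Capability enhancements

- Add support for Docker-based deployment
- Allow specifying Brokers as candidate controllers
- Allow adding and managing gateway configuration
- Allow retrieving consumer group status
- Add JMX authentication for clusters

### Experience improvements

- Improve the flows for editing user roles and changing passwords
- Add search by consumerID
- Improve the prompt text for "Topic connection information", "reset consumer group offset", and "modify Topic retention time"
- Add links to the "Resource Application Document" in the relevant places

### Bug fixes

- Fix the incorrect time axis in Broker monitoring charts
- Fix the incorrect unit of the alert period used when creating Nightingale monitoring alert rules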

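## v2.2.0

Release date: 2021-01-25

### Capability enhancements

- Improve the batch operation flow for work orders
- Add retrieval of real-time 75th and 99th percentile latency data for Topics
- Add a scheduled task that periodically writes ownerless Topics not yet persisted to the DB into the DB

### Experience improvements

- Add links to the "Cluster Onboarding Document" in the relevant places
- Clarify the meaning of physical clusters and logical clusters
- Show the Region a Topic belongs to on the Topic detail page and in the Topic partition expansion dialog
- Improve the flow for configuring Topic data retention time during Topic approval
- Improve error messages for Topic and application requests and approvals
- Improve the action labels for Topic data sampling
- Improve the prompt shown when operations staff delete a Topic
- Improve the deletion logic and prompt shown when operations staff delete a Region
- Improve the prompt shown when operations staff delete a logical cluster
- Improve the file type restrictions applied when uploading cluster configuration files

### Bug fixes

- Fix incorrect special-character validation when entering an application name
- Fix ordinary users being able to access application details without authorization
- Fix the data compression format being unobtainable after a Kafka version upgrade
- Fix logical clusters or Topics still being displayed after deletion
- Fix the duplicate result prompt when performing a Leader rebalance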

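## v2.1.0

Release date: 2020-12-19

### Experience improvements

- Improve the background style shown while pages load
- Improve the flow for ordinary users requesting Topic permissions
- Improve permission restrictions for Topic quota and partition requests
- Improve the prompt text for revoking Topic permissions
- Improve field names in the quota request form
- Improve the flow for resetting consumer offsets
- Improve the form for creating Topic migration tasks
- Improve the dialog style for Topic partition expansion
- Improve the style of cluster Broker monitoring charts
- Improve the form for creating logical clusters
- Improve the prompt text for cluster security protocols

### Bug fixes

- Fix intermittent failures when resetting consumer offsets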

655

bin/init_es_template.sh

Normal file

@@ -0,0 +1,655 @@

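# Elasticsearch address and port used by every request below
esaddr=127.0.0.1
port=8060
# Abort early with a non-zero status if Elasticsearch is unreachable
curl -s --connect-timeout 10 -o /dev/null http://${esaddr}:${port}/_cat/nodes >/dev/null 2>&1
if [ "$?" != "0" ];then
    echo "Failed to reach Elasticsearch. After installation completes, check the service and run this script again."
    exit 1
fi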

curl -s --connect-timeout 10 -o /dev/null -X POST -H 'cache-control: no-cache' -H 'content-type: application/json' http://${esaddr}:${port}/_template/ks_kafka_broker_metric -d '{
  "order" : 10,
  "index_patterns" : [
    "ks_kafka_broker_metric*"
  ],
  "settings" : {
    "index" : {
      "number_of_shards" : "10"
    }
  },
  "mappings" : {
    "properties" : {
      "brokerId" : {
        "type" : "long"
      },
      "routingValue" : {
        "type" : "text",
        "fields" : {
          "keyword" : {
            "ignore_above" : 256,
            "type" : "keyword"
          }
        }
      },
      "clusterPhyId" : {
        "type" : "long"
      },
      "metrics" : {
        "properties" : {
          "NetworkProcessorAvgIdle" : {
            "type" : "float"
          },
          "UnderReplicatedPartitions" : {
            "type" : "float"
          },
          "BytesIn_min_15" : {
            "type" : "float"
          },
          "HealthCheckTotal" : {
            "type" : "float"
          },
          "RequestHandlerAvgIdle" : {
            "type" : "float"
          },
          "connectionsCount" : {
            "type" : "float"
          },
          "BytesIn_min_5" : {
            "type" : "float"
          },
          "HealthScore" : {
            "type" : "float"
          },
          "BytesOut" : {
            "type" : "float"
          },
          "BytesOut_min_15" : {
            "type" : "float"
          },
          "BytesIn" : {
            "type" : "float"
          },
          "BytesOut_min_5" : {
            "type" : "float"
          },
          "TotalRequestQueueSize" : {
            "type" : "float"
          },
          "MessagesIn" : {
            "type" : "float"
          },
          "TotalProduceRequests" : {
            "type" : "float"
          },
          "HealthCheckPassed" : {
            "type" : "float"
          },
          "TotalResponseQueueSize" : {
            "type" : "float"
          }
        }
      },
      "key" : {
        "type" : "text",
        "fields" : {
          "keyword" : {
            "ignore_above" : 256,
            "type" : "keyword"
          }
        }
      },
      "timestamp" : {
        "format" : "yyyy-MM-dd HH:mm:ss Z||yyyy-MM-dd HH:mm:ss||yyyy-MM-dd HH:mm:ss.SSS Z||yyyy-MM-dd HH:mm:ss.SSS||yyyy-MM-dd HH:mm:ss,SSS||yyyy/MM/dd HH:mm:ss||yyyy-MM-dd HH:mm:ss,SSS Z||yyyy/MM/dd HH:mm:ss,SSS Z||epoch_millis",
        "index" : true,
        "type" : "date",
        "doc_values" : true
      }
    }
  },
  "aliases" : { }
}'

curl -s -o /dev/null -X POST -H 'cache-control: no-cache' -H 'content-type: application/json' http://${esaddr}:${port}/_template/ks_kafka_cluster_metric -d '{
  "order" : 10,
  "index_patterns" : [
    "ks_kafka_cluster_metric*"
  ],
  "settings" : {
    "index" : {
      "number_of_shards" : "10"
    }
  },
  "mappings" : {
    "properties" : {
      "routingValue" : {
        "type" : "text",
        "fields" : {
          "keyword" : {
            "ignore_above" : 256,
            "type" : "keyword"
          }
        }
      },
      "clusterPhyId" : {
        "type" : "long"
      },
      "metrics" : {
        "properties" : {
          "Connections" : {
            "type" : "double"
          },
          "BytesIn_min_15" : {
            "type" : "double"
          },
          "PartitionURP" : {
            "type" : "double"
          },
          "HealthScore_Topics" : {
            "type" : "double"
          },
          "EventQueueSize" : {
            "type" : "double"
          },
          "ActiveControllerCount" : {
            "type" : "double"
          },
          "GroupDeads" : {
            "type" : "double"
          },
          "BytesIn_min_5" : {
            "type" : "double"
          },
          "HealthCheckTotal_Topics" : {
            "type" : "double"
          },
          "Partitions" : {
            "type" : "double"
          },
          "BytesOut" : {
            "type" : "double"
          },
          "Groups" : {
            "type" : "double"
          },
          "BytesOut_min_15" : {
            "type" : "double"
          },
          "TotalRequestQueueSize" : {
            "type" : "double"
          },
          "HealthCheckPassed_Groups" : {
            "type" : "double"
          },
          "TotalProduceRequests" : {
            "type" : "double"
          },
          "HealthCheckPassed" : {
            "type" : "double"
          },
          "TotalLogSize" : {
            "type" : "double"
          },
          "GroupEmptys" : {
            "type" : "double"
          },
          "PartitionNoLeader" : {
            "type" : "double"
          },
          "HealthScore_Brokers" : {
            "type" : "double"
          },
          "Messages" : {
            "type" : "double"
          },
          "Topics" : {
            "type" : "double"
          },
          "PartitionMinISR_E" : {
            "type" : "double"
          },
          "HealthCheckTotal" : {
            "type" : "double"
          },
          "Brokers" : {
            "type" : "double"
          },
          "Replicas" : {
            "type" : "double"
          },
          "HealthCheckTotal_Groups" : {
            "type" : "double"
          },
          "GroupRebalances" : {
            "type" : "double"
          },
          "MessageIn" : {
            "type" : "double"
          },
          "HealthScore" : {
            "type" : "double"
          },
          "HealthCheckPassed_Topics" : {
            "type" : "double"
          },
          "HealthCheckTotal_Brokers" : {
            "type" : "double"
          },
          "PartitionMinISR_S" : {
            "type" : "double"
          },
          "BytesIn" : {
            "type" : "double"
          },
          "BytesOut_min_5" : {
            "type" : "double"
          },
          "GroupActives" : {
            "type" : "double"
          },
          "MessagesIn" : {
            "type" : "double"
          },
          "GroupReBalances" : {
            "type" : "double"
          },
          "HealthCheckPassed_Brokers" : {
            "type" : "double"
          },
          "HealthScore_Groups" : {
            "type" : "double"
          },
          "TotalResponseQueueSize" : {
            "type" : "double"
          },
          "Zookeepers" : {
            "type" : "double"
          },
          "LeaderMessages" : {
            "type" : "double"
          },
          "HealthScore_Cluster" : {
            "type" : "double"
          },
          "HealthCheckPassed_Cluster" : {
            "type" : "double"
          },
          "HealthCheckTotal_Cluster" : {
            "type" : "double"
          }
        }
      },
      "key" : {
        "type" : "text",
        "fields" : {
          "keyword" : {
            "ignore_above" : 256,
            "type" : "keyword"
          }
        }
      },
      "timestamp" : {
        "format" : "yyyy-MM-dd HH:mm:ss Z||yyyy-MM-dd HH:mm:ss||yyyy-MM-dd HH:mm:ss.SSS Z||yyyy-MM-dd HH:mm:ss.SSS||yyyy-MM-dd HH:mm:ss,SSS||yyyy/MM/dd HH:mm:ss||yyyy-MM-dd HH:mm:ss,SSS Z||yyyy/MM/dd HH:mm:ss,SSS Z||epoch_millis",
        "type" : "date"
      }
    }
  },
  "aliases" : { }
}'

curl -s -o /dev/null -X POST -H 'cache-control: no-cache' -H 'content-type: application/json' http://${esaddr}:${port}/_template/ks_kafka_group_metric -d '{
  "order" : 10,
  "index_patterns" : [
    "ks_kafka_group_metric*"
  ],
  "settings" : {
    "index" : {
      "number_of_shards" : "10"
    }
  },
  "mappings" : {
    "properties" : {
      "group" : {
        "type" : "keyword"
      },
      "partitionId" : {
        "type" : "long"
      },
      "routingValue" : {
        "type" : "text",
        "fields" : {
          "keyword" : {
            "ignore_above" : 256,
            "type" : "keyword"
          }
        }
      },
      "clusterPhyId" : {
        "type" : "long"
      },
      "topic" : {
        "type" : "keyword"
      },
      "metrics" : {
        "properties" : {
          "HealthScore" : {
            "type" : "float"
          },
          "Lag" : {
            "type" : "float"
          },
          "OffsetConsumed" : {
            "type" : "float"
          },
          "HealthCheckTotal" : {
            "type" : "float"
          },
          "HealthCheckPassed" : {
            "type" : "float"
          }
        }
      },
      "groupMetric" : {
        "type" : "keyword"
      },
      "key" : {
        "type" : "text",
        "fields" : {
          "keyword" : {
            "ignore_above" : 256,
            "type" : "keyword"
          }
        }
      },
      "timestamp" : {
        "format" : "yyyy-MM-dd HH:mm:ss Z||yyyy-MM-dd HH:mm:ss||yyyy-MM-dd HH:mm:ss.SSS Z||yyyy-MM-dd HH:mm:ss.SSS||yyyy-MM-dd HH:mm:ss,SSS||yyyy/MM/dd HH:mm:ss||yyyy-MM-dd HH:mm:ss,SSS Z||yyyy/MM/dd HH:mm:ss,SSS Z||epoch_millis",
        "index" : true,
        "type" : "date",
        "doc_values" : true
      }
    }
  },
  "aliases" : { }
}'

curl -s -o /dev/null -X POST -H 'cache-control: no-cache' -H 'content-type: application/json' http://${esaddr}:${port}/_template/ks_kafka_partition_metric -d '{
  "order" : 10,
  "index_patterns" : [
    "ks_kafka_partition_metric*"
  ],
  "settings" : {
    "index" : {
      "number_of_shards" : "10"
    }
  },
  "mappings" : {
    "properties" : {
      "brokerId" : {
        "type" : "long"
      },
      "partitionId" : {
        "type" : "long"
      },
      "routingValue" : {
        "type" : "text",
        "fields" : {
          "keyword" : {
            "ignore_above" : 256,
            "type" : "keyword"
          }
        }
      },
      "clusterPhyId" : {
        "type" : "long"
      },
      "topic" : {
        "type" : "keyword"
      },
      "metrics" : {
        "properties" : {
          "LogStartOffset" : {
            "type" : "float"
          },
          "Messages" : {
            "type" : "float"
          },
          "LogEndOffset" : {
            "type" : "float"
          }
        }
      },
      "key" : {
        "type" : "text",
        "fields" : {
          "keyword" : {
            "ignore_above" : 256,
            "type" : "keyword"
          }
        }
      },
      "timestamp" : {
        "format" : "yyyy-MM-dd HH:mm:ss Z||yyyy-MM-dd HH:mm:ss||yyyy-MM-dd HH:mm:ss.SSS Z||yyyy-MM-dd HH:mm:ss.SSS||yyyy-MM-dd HH:mm:ss,SSS||yyyy/MM/dd HH:mm:ss||yyyy-MM-dd HH:mm:ss,SSS Z||yyyy/MM/dd HH:mm:ss,SSS Z||epoch_millis",
        "index" : true,
        "type" : "date",
        "doc_values" : true
      }
    }
  },
  "aliases" : { }
}'

curl -s -o /dev/null -X POST -H 'cache-control: no-cache' -H 'content-type: application/json' http://${esaddr}:${port}/_template/ks_kafka_replication_metric -d '{
  "order" : 10,
  "index_patterns" : [
    "ks_kafka_replication_metric*"
  ],
  "settings" : {
    "index" : {
      "number_of_shards" : "10"
    }
  },
  "mappings" : {
    "properties" : {
      "brokerId" : {
        "type" : "long"
      },